Executive Summary

As the financial sector considers how to integrate emerging AI risks into its governance, valuable lessons can be drawn from past oversight failures. The Global Financial Crisis (GFC) provides a benchmark case for understanding how weak risk management, poor board engagement, and failure to anticipate systemic threats can amplify financial instability.

As artificial intelligence (AI) rapidly reshapes the labour market, a key question arises: do directors of Australian corporate lenders have a duty of care—under section 180 of the Corporations Act 2001 (Cth)—to manage the financial risks that AI-driven job disruption may pose to borrowers, shareholders, or the company itself? This report explores that question, focusing on how AI-related income volatility may affect long-term loan repayment and what legal and regulatory frameworks are relevant.

The analysis draws on three main frameworks:

- Statutory duty of care and diligence (*s 180 Corporations Act*)

- Responsible lending obligations under the *National Consumer Credit Protection Act 2009 (Cth)* and ASIC’s *Regulatory Guide 209* (RG 209)

- Prudential standards under APRA’s *APS 220/APG 220*

The growing impact of AI on employment is a foreseeable risk that fits squarely within these existing legal obligations. Historical parallels, such as the board oversight failures examined in ASIC v Healey, which arose out of the Global Financial Crisis period, reinforce the need for directors to ensure that risk and compliance systems are updated accordingly. Lenders must now consider both borrower protection and the long-term financial health of their institutions.

Key Recommendations

Adopt AI-responsive loan models. Use the Future Proof Mortgage framework—an open innovation design that allows repayment terms to adjust automatically when a borrower’s income is disrupted by AI-driven changes in employment. This model offers lenders a viable path to meeting evolving duty-of-care standards in an AI-disrupted economy (Open Innovation Edition, Cosstick, 8 April 2025).

Enhance creditworthiness assessments. Incorporate forward-looking tools such as occupational risk analytics and AI scenario testing into borrower evaluations to anticipate potential job displacement.

Align with existing regulations. Ensure internal lending policies are consistent with ASIC RG 209 (which focuses on assessing an individual borrower’s suitability) and APRA APS 220 (which requires system-level stress testing), explicitly integrating AI-related risks into both borrower-level and portfolio-wide assessments [18][22].

Establish “red flag” triggers. Identify high-risk occupations that are particularly vulnerable to automation and develop processes for more detailed review or tailored borrower support when these are flagged.

Strengthen governance and training. Invest in AI risk education for credit officers, risk managers, and governance committees. Ensure that any new models or data sources used in lending decisions are transparent, explainable, and thoroughly validated.

Introduction: AI, Job Disruption, and the Evolving Risk Landscape for Lenders

Context Setting

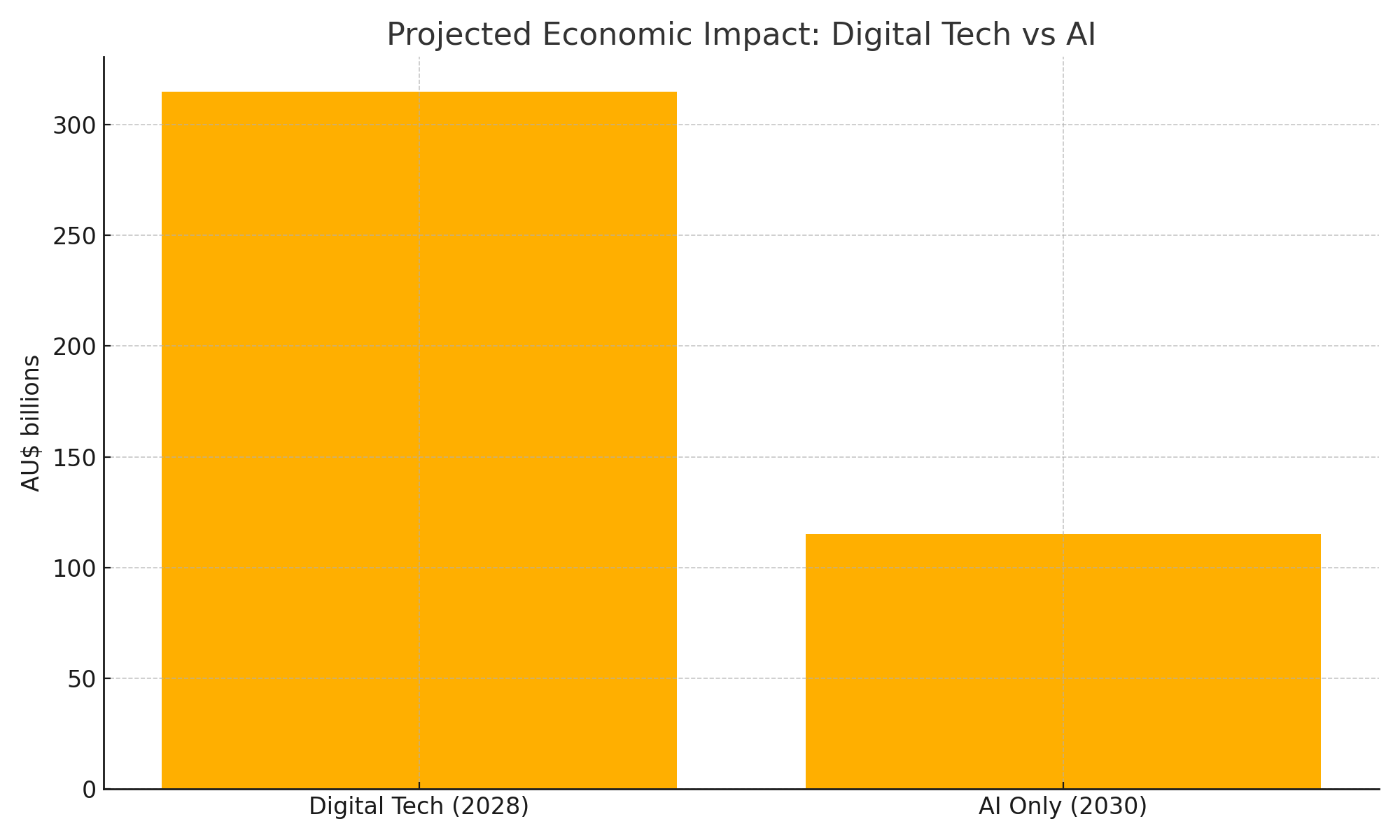

Artificial Intelligence (AI) is now a central force in reshaping the global economy. No longer confined to experimental projects, AI tools are becoming essential across almost every sector.¹ In Australia alone, digital technologies, including AI, are expected to contribute AU$315 billion to the economy by 2028,¹ with AI accounting for as much as AU$115 billion per year in productivity gains by 2030.⁵ Globally, the economic boost from AI could run into the trillions.¹

This transformative growth is powered by AI’s ability to automate tasks, process and interpret enormous datasets, improve decision-making, and spark innovation across industries such as manufacturing, finance, logistics, and healthcare.⁴

Figure 1: Projected Economic Impact of Digital Technologies vs AI on the Australian Economy (AU$ billion)

Source: CSIRO Data61 (2019); Tech Council of Australia & Microsoft (2023)

The Emerging Challenge

While AI brings the promise of major productivity gains,² it also introduces significant disruption to how people work.⁵ A growing body of research suggests that many current tasks across various jobs could either be fully automated or significantly changed by AI.³ This raises serious concerns—particularly about job losses, wage stagnation in affected sectors, and the urgent need for large-scale workforce reskilling.⁵

The effects won’t be felt equally. Certain industries—like administration, logistics, customer service, and even creative professions—are more vulnerable, and some demographic groups may face disproportionate impact.⁵

For corporate lenders, especially those offering long-term loans such as home mortgages, this creates a new and complex risk. These financial products depend on borrowers being able to reliably earn income over decades. But AI-related job disruption threatens this assumption and introduces a future-oriented credit risk that traditional models may not be equipped to handle.

Framing the Core Question

This report explores how Australian law and regulatory frameworks address this emerging risk. At its core is one critical question:

Do directors of corporate lenders in Australia have a duty of care—whether to the company, its shareholders, or even indirectly to borrowers—to proactively identify and manage the risk that AI-driven job disruption could undermine the long-term stability of their loan portfolios?

To answer this, we examine directors’ statutory duties, the lender-borrower relationship, how foreseeable AI risk is, the robustness of current risk frameworks, and legal precedents from past financial crises.

Legal Foundation of Duty: Directors’ Obligations Under Australian Law

Directors’ responsibilities in Australia are grounded in the Corporations Act 2001 (Cth) and supported by common law and equitable principles. Understanding these duties is essential to evaluating directors’ potential liability when it comes to emerging risks like AI-driven job disruption.

The Statutory Duty of Care and Diligence (Corporations Act s.180)

Section 180(1) of the Corporations Act places a key obligation on directors: to exercise their powers with the level of care and diligence that a reasonable person would apply in their position, taking into account the company’s circumstances and the director’s responsibilities.¹¹

This standard is objective but context-sensitive—it considers the company’s size, complexity, and the specific role of the director.¹² Whether this duty is breached depends on whether a foreseeable risk was ignored and whether any benefit from the action was worth the risk.¹³ Harm isn’t limited to financial loss; it can include legal penalties and reputational damage.¹³

Directors are expected to be informed and engaged.¹⁶ They should understand the company’s financial and operational position and follow up on important issues.¹¹ Delegating tasks is allowed, but it doesn’t absolve directors from oversight and independent judgment.¹¹

Importantly, the standard under s.180 evolves. It adjusts with the company’s environment, technology, and society’s expectations.¹⁹ Given today’s landscape, where AI’s economic and social impacts are well documented,¹ the duty of a reasonable director arguably includes understanding the risks that AI poses to borrower income and loan viability. Ignoring such a risk—especially with regulators’ increasing focus on technological disruption²¹—could fall below the standard expected under the law.¹⁶

Duty to Act in Good Faith and for a Proper Purpose (s.181)

Section 181 of the Corporations Act requires directors to act in good faith, in the best interests of the company, and for a proper purpose.¹¹ This focuses on integrity and avoiding misuse of power or conflicts of interest. While separate from the duty of care, a serious failure in risk oversight, especially one that is reckless or indifferent to the company’s wellbeing, could overlap with a breach of this duty.²⁴ Reckless or intentionally dishonest breaches can even lead to criminal penalties under s.184.¹¹

To Whom Are Duties Owed?: The Company as Primary Beneficiary

Directors’ duties under Australian law are primarily owed to the company as a separate legal entity.¹¹ These duties are not typically owed to individual shareholders, creditors, or borrowers.²⁷ So, if directors mismanage AI-related borrower risks, any liability would arise from their breach of duty to the company itself—not to external parties.

The Business Judgment Rule (s.180(2))

Section 180(2) gives directors some protection—called the business judgment rule. It says that directors won’t be liable for breaching their duty of care if they:

- Make the decision in good faith and for the proper purpose

- Have no material personal interest in it

- Inform themselves to the extent they reasonably believe is appropriate

- Rationally believe it’s in the company’s best interest.¹¹

This protection applies only to active business decisions. It doesn’t cover situations where directors fail to act or fail to oversee emerging risks. And if they don’t properly investigate foreseeable threats, such as AI-driven borrower instability, they may not be able to rely on this rule. Its use as a defence in “stepping stone” liability cases is also limited.¹³

Stepping Stone Liability: Director Accountability for Corporate Contraventions

Stepping stone liability is a legal doctrine allowing regulators like ASIC to hold directors personally liable when their failure to act diligently leads to the company breaking the law.¹³

ASIC has also litigated failures to manage non-financial risk, most notably in the landmark ASIC v RI Advice Group case, where the Federal Court found that the licensee had failed to manage cybersecurity risks adequately.¹⁴ While courts have cautioned that not every company breach means director liability,¹³ the stepping stone doctrine gives regulators a way to hold directors accountable if their inaction or poor oversight enables corporate misconduct.¹³ ²⁹

This concept is especially relevant in the AI era. If AI-driven tools (like automated credit scoring or customer engagement systems) are not properly governed, and that leads to breaches of lending laws, privacy rules, or anti-discrimination laws,²² directors could face liability. For instance, issuing unsuitable loans based on AI decisions could breach responsible lending obligations.³⁰ If those breaches result from directors failing to assess or manage AI risks, stepping-stone liability may apply.

Broadening Scope of Foreseeable Risk

What’s considered a “foreseeable risk” has evolved. Boards are no longer expected to only focus on financial threats. Today, they must also oversee major non-financial risks.

Climate change is a clear example—directors are now expected to assess its business impact.²⁰ Similarly, cyber risk is treated as a board-level concern.¹⁴ Broader ESG (Environmental, Social, Governance) factors are also considered material to corporate value and reputation.¹³

AI fits squarely into this new category of systemic, complex risk.¹ With mounting evidence of AI’s disruptive economic and social effects, and with regulators issuing clear warnings about its governance,²² directors must now treat AI-driven job disruption as a serious and foreseeable risk.

For lenders whose business models rely on borrowers’ long-term income—such as mortgage providers—this risk is especially critical. Meeting their duty of care under s.180 now arguably requires boards to engage with the challenges posed by AI in the same way they are expected to address climate or cybersecurity threats.

Table 1: Summary of Key Australian Director Duties

| Feature | NCCPA / ASIC RG 209 | APRA APS 220 / APG 220 | Relevance to AI Income Risk |
| --- | --- | --- | --- |
| Primary Objective | Consumer protection; prevent unsuitable loans causing substantial hardship | Prudential safety & soundness of ADI; financial system stability | Both frameworks require assessment of repayment capacity, which is directly impacted by AI job disruption. |
| Scope | Consumer credit (personal, domestic, household purpose; residential investment property) | All credit exposures of an ADI (household, business, corporate etc.) | NCCPA directly covers mortgages/personal loans most likely affected. APS 220 covers the entire portfolio risk. |
| Core Requirement | Assess if loan is “not unsuitable” (ability to repay without substantial hardship; meets requirements/objectives) | Maintain robust credit risk management framework; sound assessment of repayment capacity | Both necessitate evaluating long-term borrower viability. |
| Assessment Timeframe | Forward-looking (“likely” inability/hardship) | Forward-looking (risk appetite, strategy, provisioning for expected losses, stress testing) | Both require anticipating future conditions, making them theoretically applicable to AI risk. |
| Consideration of Future | Explicit requirement to make reasonable inquiries about income consistency and consider “reasonably foreseeable changes” | Implicit requirement via strategy considering cycles/shifts, identifying vulnerable sectors, stress testing, expected loss provisioning | RG 209 is more explicit on inquiring about future individual circumstances. APS 220 focuses more on portfolio-level and systemic factors, but implicitly requires considering major future shifts impacting credit quality. |
| Methodology Guidance | Scalable inquiries/verification; cautions on benchmarks; identify “red flags” | Scalable assessment; experienced judgement; use of models, scenario analysis, stress testing encouraged; focus on model risk management | APS 220 framework is more geared towards sophisticated modelling (scenario analysis) needed for systemic risks like AI, but RG 209’s “red flag” concept could trigger deeper individual assessment for AI-vulnerable borrowers. |
| Potential Gaps for AI Risk | Guidance may lack specificity on how to assess long-term tech disruption risk; reliance on borrower disclosure for future intent. | Framework is principles-based; relies on ADI interpretation for novel risks; traditional models may be inadequate without specific adaptation for AI. | Neither framework currently provides explicit, detailed guidance on assessing AI job disruption risk specifically. Clarity on expected methodologies (e.g., use of occupational risk data, scenario parameters) would be beneficial. |

Exploring the Duty of Care in the Lender-Borrower Relationship

Although directors owe their primary duties to the company, the nature of the lending business means it’s essential to also examine the legal obligations governing the lender–borrower relationship. This is especially important when considering whether lenders—and, by extension, their directors—are responsible for protecting borrowers from unsuitable loans and related risks.

The Limits of Common Law Duties

Under Australian common law, courts have traditionally resisted imposing a general tortious duty of care on lenders toward borrowers when it comes to lending decisions. The lender-borrower relationship is viewed as primarily contractual and commercial in nature.²⁷ Unless unusual circumstances exist—such as the lender stepping into an advisory or fiduciary role—lenders are usually entitled to act in their own commercial interests.

One key factor courts consider in determining whether a duty of care should apply in cases of pure economic loss is the concept of vulnerability. But in most commercial lending situations, especially where the borrower is a sophisticated party, courts have held that borrowers can protect themselves through negotiation and due diligence.²⁷

The principle was reinforced in cases such as Anchor Mortgages Ltd (in liq) v Arrium Ltd (in liq) [NSWSC 141], which upheld the importance of contractual risk allocation. These decisions affirm that it’s highly unlikely a general duty will be found requiring lenders to protect borrowers from future risks—such as those posed by AI-driven job disruption—under existing common law doctrines.²⁷

Statutory Intervention: The National Consumer Credit Protection Act 2009 (NCCPA)

Recognising the imbalance in power and information in consumer lending, the Australian Parliament introduced the National Consumer Credit Protection Act 2009 (NCCPA) in the wake of the Global Financial Crisis.³⁰ This law forms the foundation of responsible lending in Australia and applies to credit offered primarily for personal, domestic, or residential investment purposes.³⁶

Entities that hold an Australian Credit Licence (ACL), including lenders and brokers, must meet specific obligations under the Act.³¹ The centrepiece of these obligations is the responsible lending requirement: lenders must not enter into, suggest, or assist with a loan if it is unsuitable for the consumer.³¹

ASIC Regulatory Guide 209 (RG 209): Interpreting Responsible Lending

ASIC’s Regulatory Guide 209 (RG 209) explains how lenders should meet their obligations under the NCCPA.³¹ It adopts a scalable, principles-based approach, meaning the depth of inquiry and verification depends on the borrower’s situation and the product’s risk.⁴²

Some key provisions relevant to long-term lending include:

- Inquiries into Income: Lenders must assess not just the borrower’s current income, but also how stable and reliable that income is over the loan’s term.⁴⁴

- Verification: Pay slips, bank statements, and other documents are often required. While benchmarks like the Household Expenditure Measure (HEM) may help, they are not a substitute for understanding a borrower’s actual circumstances.³⁰ ⁴³

- Foreseeable Changes: Lenders must consider reasonably foreseeable changes to the borrower’s situation—such as variable income, casual employment, or approaching retirement.⁴⁴

- Red Flags: Inconsistencies in financial data, low disposable income, or a pattern of financial distress are all signs that warrant deeper investigation.⁴³

- Forward-looking assessment: Lenders must determine if the borrower will likely face hardship—not just today, but over the loan’s entire duration.⁴⁵

While RG 209 doesn’t specifically mention AI, its logic clearly applies. Lenders are required to assess whether income is likely to remain consistent⁴⁴ and to identify foreseeable risks.⁴⁵ For borrowers in sectors vulnerable to automation, AI-driven job loss is a foreseeable risk. Ignoring it could lead to unsuitable lending practices, which would breach the NCCPA’s responsible lending provisions.
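As a rough illustration of how the “red flag” logic above might be operationalised, the following minimal sketch screens an application for thin repayment buffers and for high automation exposure on a long-term loan. Everything in it is an assumption made for illustration: the `AUTOMATION_EXPOSURE` scores, the thresholds, and the `Application` fields are hypothetical and are not prescribed by RG 209 or drawn from any cited dataset.

```python
from dataclasses import dataclass

# Hypothetical automation-exposure scores (0 = low, 1 = high).
# Placeholder values for illustration only, not sourced from any study.
AUTOMATION_EXPOSURE = {
    "data entry clerk": 0.85,
    "call centre operator": 0.80,
    "registered nurse": 0.15,
}


@dataclass
class Application:
    occupation: str
    monthly_income: float
    monthly_expenses: float
    monthly_repayment: float
    loan_term_years: int


def red_flags(app, exposure_threshold=0.7, min_buffer_ratio=0.10, long_term_years=15):
    """Return a list of reasons this application warrants deeper review."""
    flags = []

    # Flag 1: little income left after expenses and the new repayment.
    surplus = app.monthly_income - app.monthly_expenses - app.monthly_repayment
    if surplus < min_buffer_ratio * app.monthly_income:
        flags.append("low disposable income after repayment")

    # Flag 2: occupation is highly exposed to automation and the loan is long-term.
    exposure = AUTOMATION_EXPOSURE.get(app.occupation.lower())
    if exposure is None:
        flags.append("occupation not in exposure table; manual review")
    elif exposure >= exposure_threshold and app.loan_term_years >= long_term_years:
        flags.append("high automation exposure on long-term loan")

    return flags
```

A flag in this sketch does not decline the loan. It only routes the application to the more detailed inquiries and verification that a scalable approach would call for.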

Could Lender Obligations Expand in the Future?

Though the common law is currently restrictive,²⁷ future developments could expand lenders’ duties—particularly if AI-driven lending tools introduce extreme information asymmetry or opacity. But for now, the NCCPA provides the clearest path to holding lenders accountable for managing foreseeable long-term risks in credit decisions.

AI-Driven Job Disruption as a Foreseeable Systemic Risk

Major Australian and global reports agree: AI is poised to profoundly reshape the workforce. Digital technologies, with AI at the core, could contribute up to AU$315 billion to Australia’s economy by 2028.¹ Generative AI alone may boost GDP by AU$115 billion annually by 2030.⁵

While AI may drive productivity,⁶ it also threatens employment stability. Studies estimate that up to 62% of Australian work time could be automated by AI.⁶ Though new jobs will be created—particularly in tech and AI integration roles¹ ⁵—many existing roles will change dramatically or disappear.

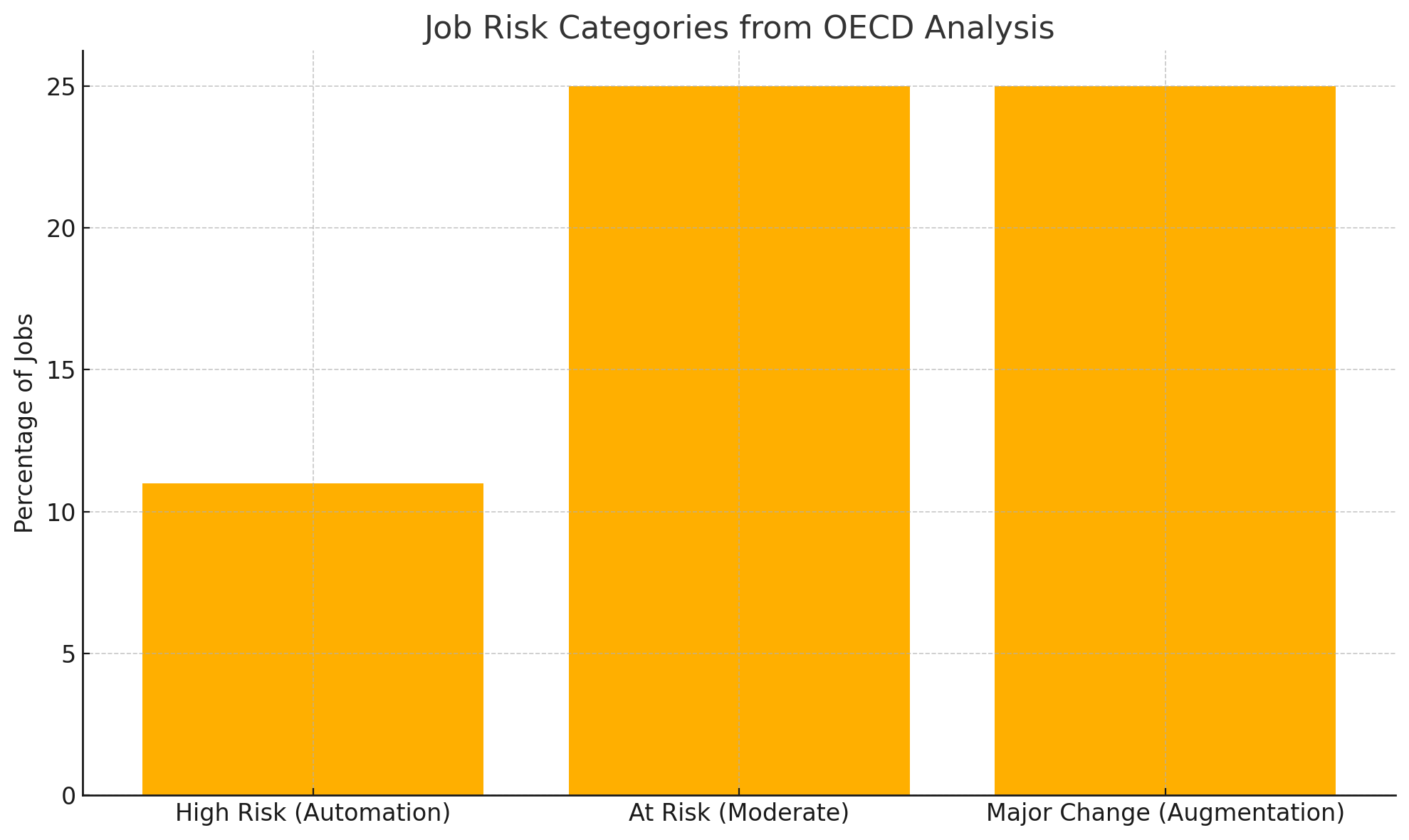

Assessing Potential Scale and Nature

This disruption won’t only involve job losses. Many roles will be restructured through task-level automation, causing wage pressure in affected sectors.³ Reskilling and job transitions will be critical. The Bank for International Settlements (BIS) notes that AI could lead to either smooth productivity gains or disruptive labour displacement, depending on how risks are managed.⁹

Figure 2: OECD Job Risk Categories – High Automation, Moderate Risk, and Major Change (% of Jobs)

Source: Nedelkoska & Quintini (2018); OECD Employment Outlook 2014

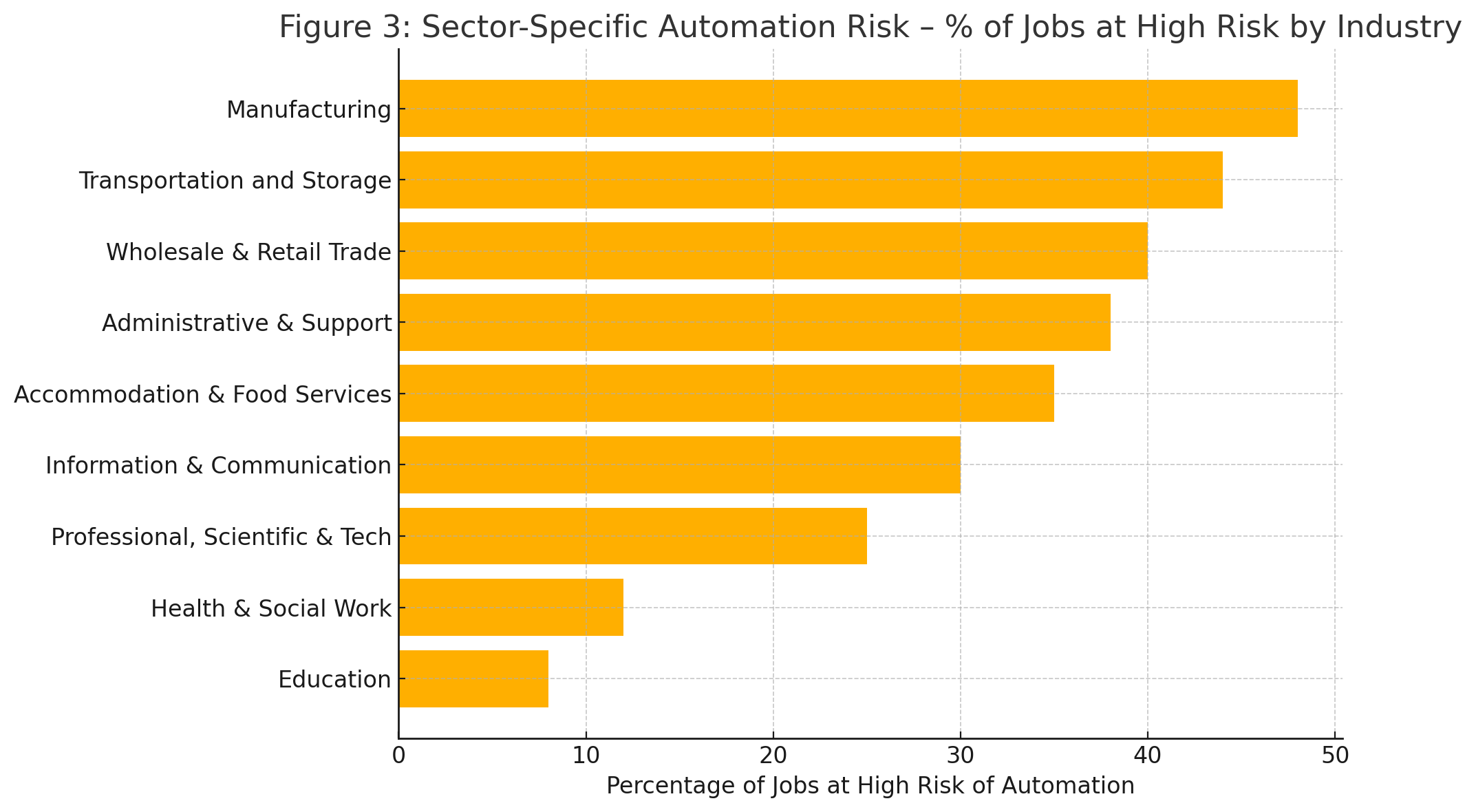

Figure 3: Sector‑Specific Automation Risk – Percentage of Jobs at High Risk by Industry

Source: Nedelkoska & Quintini (2018); PwC “Will Robots Really Steal Our Jobs?” (2018)

The consistency and origin of these projections – emanating from national science agencies (CSIRO [1]), economic policy bodies (Productivity Commission [6]), parliamentary inquiries [2], international financial institutions (BIS [9]), and major consultancies [2] – are critical. This extensive body of evidence elevates the potential for AI-driven job disruption beyond mere speculation. In a legal context relevant to directors’ duties, it firmly establishes the foreseeability of this risk. A ‘reasonable director’ exercising care and diligence (as required by s.180 [12]) cannot credibly claim ignorance of a potential shift of this magnitude, documented by official and expert sources. It represents a known potential future state that warrants consideration in strategic planning and risk assessment, particularly for businesses like long-term lenders whose viability is tied to future income streams.

Identifying Vulnerable Sectors and Loan Portfolios

AI will not impact all industries equally. Research highlights that jobs in software, customer service, marketing, logistics, administration, and creative sectors are more likely to experience disruption [5]. Roles requiring lower formal qualifications may also be more affected, potentially exacerbating existing economic inequalities. Even within financial services, automation is already reshaping operational roles [47].

For lenders, this uneven impact presents portfolio-level risks. Borrowers in high-automation-risk jobs present a materially different credit profile than those in more secure occupations. Prudent risk management, aligned with APRA’s expectations under APS 220 and APG 220 [49], requires more than general awareness. It demands detailed, data-driven portfolio segmentation that considers borrowers’ employment sectors and AI risk exposure. Ignoring this level of granularity could result in compliance failures and director oversight lapses.
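One way to picture the segmentation described above is to group outstanding balances by an automation-risk band and report each band’s share of the portfolio. The sketch below assumes each loan already carries a hypothetical automation score between 0 and 1; the band cut-offs (0.4 and 0.7) are illustrative choices, not regulatory thresholds.

```python
from collections import defaultdict


def risk_band(score):
    """Map a hypothetical 0-1 automation score to a coarse band."""
    if score >= 0.7:
        return "high"
    if score >= 0.4:
        return "medium"
    return "low"


def exposure_by_band(loans):
    """Return each band's share of total outstanding balance.

    loans: iterable of (outstanding_balance, automation_score) pairs.
    """
    totals = defaultdict(float)
    for balance, score in loans:
        totals[risk_band(score)] += balance

    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {band: amount / grand_total for band, amount in totals.items()}
```

For example, a book of three loans with balances of 400,000, 300,000 and 300,000 and scores of 0.85, 0.5 and 0.1 would show 40% of exposure in the high band, which is the kind of figure a board report could track over time.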

Translating Macro Trends to Micro-Level Borrower Risk

The long-term nature of many loans (e.g., 20-30 years) means lenders must look beyond historical default data. AI-related disruptions are novel, structural, and not yet reflected in past trends [51]. Directors and boards cannot rely on uncertainty as an excuse for inaction. Regulatory standards increasingly expect the use of tools like stress testing and scenario analysis to capture these future-facing risks [52].

Risk Management Frameworks: APRA Prudential Standards & ASIC Guidelines

The existing regulatory framework in Australia, primarily through APRA’s prudential standards and ASIC’s responsible lending guidance, sets expectations for how financial institutions, particularly ADIs, manage credit risk. Assessing the adequacy of these frameworks for addressing AI-driven borrower risk is crucial.

APRA’s Prudential Framework: APS 220 Credit Risk Management

APRA’s Prudential Standard APS 220 requires ADIs to maintain a comprehensive and forward-looking credit risk framework [49]. This includes credit risk appetite statements, risk assessment processes, systems for ongoing monitoring, and early identification of emerging problems [57]. The Board is directly accountable for approving these frameworks and ensuring they adapt to emerging risks [49].

APRA’s Prudential Practice Guide APG 220 adds further clarity. It emphasises setting internal exposure limits for higher-risk borrowers, factoring in economic shifts, and using scenario analysis to assess systemic vulnerability [49]. APRA expects ADIs to assess repayment capacity over time and apply experienced judgment to emerging threats like technological disruption [50].

While neither APS 220 nor APG 220 explicitly mentions AI, the underlying principles clearly apply. If AI is impacting job stability and, by extension, repayment capacity, then boards are expected to ensure this is captured in both policy and modelling.

APRA Guidance (APG 220): Good Practice

Prudential Practice Guide APG 220 provides guidance on meeting the requirements of APS 220, outlining APRA’s view of sound practice [49]. Relevant aspects include:

- Portfolio Risk Management: APG 220 highlights the importance of establishing internal limits to manage portfolio-level risks. This includes setting prudent limits on exposures to higher-risk borrowers, specific products, activities, industry sectors, and geographical regions, calibrated against the ADI’s overall risk appetite [49]. These limits should be reviewed regularly (e.g., annually) to account for changes in the external environment and strategy [49].

- Forward-Looking Strategy: The credit risk management strategy should consider economic and credit cycles and anticipate potential shifts in the composition and quality of the credit portfolio over time [59].

- Credit Assessment: While allowing for a scalable and flexible approach to credit assessment depending on the exposure’s nature and size [61], APG 220 emphasizes the need for experienced credit judgment [58]. APRA expects ADIs to have processes to capture economic uncertainty and identify credit deterioration in vulnerable sectors, integrating these into loss estimates [50].

- Board Engagement: APG 220 reinforces the Board’s oversight role, expecting directors to be alert to pressures on lending standards and potential credit deterioration, and to actively challenge management on credit risk matters [59].

While APS 220 and APG 220 do not explicitly mention “AI-driven job disruption,” their principles-based and forward-looking nature creates an implicit requirement to address such risks. The requirements to manage all material credit risks [57], establish limits for higher-risk sectors and borrowers [49], consider economic shifts [59], and identify vulnerable sectors [50] collectively point towards the need for ADIs to incorporate significant, foreseeable technological disruptions impacting borrower capacity into their risk frameworks.

AI job disruption clearly fits this description, affecting specific sectors and borrower groups [5] and thus influencing overall portfolio quality. A prudent application of the existing prudential framework necessitates that ADIs, under Board oversight, integrate this emerging risk into their strategies, assessments, limit setting, and potentially provisioning models.
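The limit-setting practice discussed in this section can be sketched as a simple comparison of each segment’s portfolio share against an internal limit. The segment names and limit values below are hypothetical; APG 220 does not prescribe specific figures, and an ADI would calibrate them to its own risk appetite.

```python
def limit_breaches(exposures, limits):
    """Return {segment: excess_share} for segments above their internal limit.

    exposures: {segment: outstanding balance}
    limits:    {segment: maximum share of total portfolio, e.g. 0.25}
    Segments without a limit are not tested.
    """
    total = sum(exposures.values())
    if total == 0:
        return {}

    breaches = {}
    for segment, amount in exposures.items():
        share = amount / total
        limit = limits.get(segment)
        if limit is not None and share > limit:
            # Report how far the segment sits above its limit.
            breaches[segment] = round(share - limit, 4)
    return breaches
```

Run at each periodic limit review, a check like this gives the board a concrete list of segments where exposure to AI-vulnerable borrowers has drifted above appetite.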

Assessing Repayment Capacity (Linking APS 220 and RG 209)

A point of convergence exists between APRA’s prudential requirements and ASIC’s responsible lending rules. APS 220 mandates sound processes for assessing borrower repayment capacity as part of credit risk management [57]. ASIC’s RG 209 provides detailed guidance on the “reasonable inquiries” and “verification” needed under the NCCPA to assess suitability, specifically including consideration of future income stability and foreseeable changes [43].

Although their primary objectives differ – APRA focusing on institutional safety and soundness, ASIC on consumer protection and preventing hardship [62] – both frameworks ultimately require lenders to form a view on the borrower’s long-term ability to service the debt. Persistent borrower hardship inevitably translates into credit losses for the lender, linking the two objectives. Therefore, managing the risk of AI-driven income disruption is relevant under both regulatory lenses. APRA itself has noted the need to assess repayment capacity without substantial hardship, bridging the gap [62].

Challenges in Modelling and Integrating Novel Risks

A significant practical challenge is that traditional credit risk models, typically built on historical data reflecting past economic cycles and default patterns, are poorly suited to assessing the impact of unprecedented, structural technological shifts like widespread AI adoption [51]. Historical data simply does not contain analogues for this type of disruption.

This necessitates exploring and integrating more sophisticated, forward-looking risk assessment techniques. Scenario analysis, which models the potential impact of different plausible future states (e.g., varying levels of job displacement in specific sectors), becomes essential [52].

Stress testing frameworks also need to be adapted to incorporate such technological disruption scenarios [52]. Lenders may need to leverage alternative data sources and advanced analytical techniques, potentially including AI and machine learning (AI/ML) itself, to better identify and predict these risks [47]. However, the use of AI/ML in risk modelling introduces its own challenges, including model risk (e.g., opacity or “black box” issues, potential bias, validation difficulties) which regulators like APRA are increasingly focused on [22]. Ensuring appropriate governance, transparency (explainability), and validation of AI models used in credit assessment is critical [22].
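To make the idea of a job-displacement scenario concrete, the sketch below applies the standard expected-loss identity (exposure × probability of default × loss given default) and stresses the probability of default by an assumed displacement rate for each risk band. All parameters are illustrative assumptions: the scenario rates, the `default_given_displacement` factor, and the flat `lgd` are hypothetical and would need calibration and validation before any real use.

```python
def stressed_pd(base_pd, displacement_rate, default_given_displacement):
    """Probability of default once displacement risk is layered on.

    A borrower defaults either in the ordinary course (base_pd) or, if not,
    by being displaced and then failing to recover income in time.
    """
    return base_pd + (1 - base_pd) * displacement_rate * default_given_displacement


def expected_loss(loans, scenario, lgd=0.2, default_given_displacement=0.3):
    """Sum of balance * stressed PD * LGD across the portfolio.

    loans:    iterable of (balance, base_pd, risk_band) tuples
    scenario: {risk_band: assumed share of borrowers displaced}
    """
    total = 0.0
    for balance, base_pd, band in loans:
        rate = scenario.get(band, 0.0)  # bands absent from the scenario are unstressed
        total += balance * stressed_pd(base_pd, rate, default_given_displacement) * lgd
    return total
```

Comparing `expected_loss(loans, {})` with the result under a severe scenario such as `{"high": 0.2, "low": 0.02}` shows how much of the loss estimate depends on displacement assumptions that historical default data cannot supply.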

This gap between the nature of the risk and the limitations of traditional tools presents a critical governance challenge for boards. Directors cannot simply rely on existing models and processes if they are inadequate for assessing a foreseeable and material risk like AI disruption. Discharging their duty of care likely requires directors to actively probe management on how this specific risk is being assessed and modelled.

Questions regarding the capabilities of current models, the use of alternative methodologies like scenario analysis [52], investment in necessary data and analytical capabilities, and the management of risks associated with using AI tools themselves become central to diligent oversight. Relying passively on potentially inadequate tools or analysis for a foreseeable risk could be construed as a failure to exercise reasonable care and diligence.

Regulatory Focus on Non-Financial and Technological Risks

Recent actions and communications from both ASIC and APRA underscore the increasing regulatory focus on technological and other non-financial risks. APRA’s introduction of CPS 230 Operational Risk Management, effective July 2025, aims to ensure institutions can maintain critical operations through disruptions, including those related to technology dependencies [23].

Both regulators have highlighted cybersecurity resilience as a key priority [14]. ASIC has explicitly warned financial services licensees that their adoption of AI technologies may be outpacing necessary updates to risk and compliance frameworks, reminding directors of their duties concerning AI adoption and oversight [22]. Both agencies are concerned about risks stemming from reliance on critical third-party technology providers, a risk amplified by AI adoption [21]. APRA is also developing system-wide stress tests to explore risk transmission channels, including those involving technology and interconnections between banking and superannuation [23].

This clear and escalating regulatory attention toward technological disruption, operational resilience, AI governance, and associated risks sends an unambiguous signal to boards and directors. It reinforces the expectation that these areas form a critical part of their oversight responsibilities.

This regulatory posture significantly strengthens the argument that failing to adequately address the foreseeable risks associated with AI – including the downstream impact on borrower creditworthiness via job disruption – falls short of the expected standard of care and diligence under section 180. Regulators are effectively placing these issues squarely on the board’s agenda, making claims of ignorance or lack of responsibility difficult to sustain.

Table 2: Regulatory Frameworks for Assessing Borrower Risk (NCCPA vs. APS 220)

| Feature | NCCPA / ASIC RG 209 | APRA APS 220 / APG 220 | Relevance to AI Income Risk |
| --- | --- | --- | --- |
| Primary Objective | Consumer protection; prevent unsuitable loans causing substantial hardship | Prudential safety & soundness of ADI; financial system stability | Both frameworks require assessment of repayment capacity, which is directly impacted by AI job disruption. |
| Scope | Consumer credit (personal, domestic, household purpose; residential investment property) | All credit exposures of an ADI (household, business, corporate etc.) | NCCPA directly covers mortgages/personal loans most likely affected. APS 220 covers the entire portfolio risk. |
| Core Requirement | Assess if loan is “not unsuitable” (ability to repay without substantial hardship; meets requirements/objectives) | Maintain robust credit risk management framework; sound assessment of repayment capacity | Both necessitate evaluating long-term borrower viability. |
| Assessment Timeframe | Forward-looking (“likely” inability/hardship) | Forward-looking (risk appetite, strategy, provisioning for expected losses, stress testing) | Both require anticipating future conditions, making them theoretically applicable to AI risk. |
| Consideration of Future | Explicit requirement to make reasonable inquiries about income consistency and consider “reasonably foreseeable changes” | Implicit requirement via strategy considering cycles/shifts, identifying vulnerable sectors, stress testing, expected loss provisioning | RG 209 is more explicit on inquiring about future individual circumstances. APS 220 focuses more on portfolio‑level and systemic factors, but implicitly requires considering major future shifts impacting credit quality. |
| Methodology Guidance | Scalable inquiries/verification; cautions on benchmarks; identify “red flags” | Scalable assessment; experienced judgement; use of models, scenario analysis, stress testing encouraged; focus on model risk management | APS 220 framework is more geared towards sophisticated modelling (scenario analysis) needed for systemic risks like AI, but RG 209’s “red flag” concept could trigger deeper individual assessment for AI‑vulnerable borrowers. |
| Potential Gaps for AI Risk | Guidance may lack specificity on how to assess long-term tech disruption risk; reliance on borrower disclosure for future intent. | Framework is principles-based; relies on ADI interpretation for novel risks; traditional models may be inadequate without specific adaptation for AI. | Neither framework currently provides explicit, detailed guidance on assessing AI job disruption risk specifically. Clarity on expected methodologies (e.g., use of occupational risk data, scenario parameters) would be beneficial. |

Key Causes

The GFC stemmed from a complex interplay of factors, primarily originating in the US subprime mortgage market but rapidly spreading globally. Key contributors included [67]:

- Deterioration of Lending Standards: Aggressive lending practices, particularly in the US, involved originating high-risk “subprime” mortgages often with inadequate income verification, low documentation, and features like adjustable rates that quickly became unaffordable [67]. Competition incentivised volume over quality, and the originate-to-distribute model, where lenders sold off loans via securitization, reduced incentives for careful underwriting [67].

- Failures in Risk Management and Governance: Financial institutions failed to adequately understand or manage the risks embedded in complex financial products like Mortgage-Backed Securities (MBS) and Collateralized Debt Obligations (CDOs) [69]. Risk management functions were often siloed or lacked sufficient influence [70]. Boards failed in their oversight duties, not fully comprehending the risks being taken or challenging management effectively [70].

- Financial Innovation and Complexity: The creation of complex, opaque securitized products (MBS, CDOs) and derivatives (Credit Default Swaps) made it difficult for investors and regulators to assess underlying risks [67]. Flawed credit ratings assigned to these products provided false comfort [67].

- Regulatory Gaps and Failures: Insufficient regulation and supervision in areas like subprime lending, securitization, and the “shadow banking” system allowed risks to accumulate unchecked [68]. Capital requirements proved inadequate for the actual risks being run [73].

While Australia’s banking system proved more resilient due to more conservative regulation (e.g., stricter capital rules, limits on risky activities) and bank management practices [73], it was not immune. Australia experienced stress through its reliance on international wholesale funding markets, which froze during the crisis, and saw the failure of several non-bank lenders and highly leveraged investment companies that relied on rising asset prices or short-term funding [75].

Impact on Borrowers

Globally, the GFC had devastating consequences for borrowers. Falling house prices combined with rising interest rates (on adjustable-rate mortgages) led to widespread defaults, foreclosures, and significant negative equity [68]. This resulted in immense financial hardship, loss of homes, and long-term damage to creditworthiness for millions. While the direct impact on Australian borrowers was less severe due to the absence of a widespread subprime crisis and a stronger economy [75], the tightening of credit conditions and economic slowdown still caused difficulties [75].

Director Liability and Governance Failures Post-GFC

The GFC led to heightened scrutiny of director conduct and corporate governance, particularly concerning risk oversight. In Australia, the landmark case of ASIC v Healey (2011) 196 FCR 291 (the ‘Centro’ case) became highly influential.71 The directors (including non-executives) of the Centro Property Group were found to have breached their section 180 duty of care and diligence by approving financial statements that failed to correctly classify billions of dollars of short-term debt during the GFC.71 Middleton J held that directors could not simply rely on management and auditors; they were expected to have sufficient financial literacy to understand the accounts, read them carefully, and make further inquiries if necessary. The decision underscored the expectation that directors apply an independent and inquiring mind to their responsibilities, even in complex areas.71

The GFC also brought the duty to prevent insolvent trading (section 588G) into sharp focus, as economic stress increased the risk of corporate failure.35 More broadly, inquiries and reviews following the crisis highlighted systemic weaknesses in risk management governance, board oversight, and risk culture within financial institutions globally and, to some extent, in Australia.70 The APRA Prudential Inquiry into the Commonwealth Bank of Australia (CBA) in 2018, while triggered by later events, identified cultural and governance failings, particularly in managing non-financial risks, that echoed lessons from the GFC era regarding inadequate board oversight, unclear accountabilities, and slow responses to emerging risks.72

The GFC and subsequent cases like Centro provide a crucial precedent regarding director oversight. They demonstrate that directors cannot abdicate responsibility for understanding and overseeing material risks, even when those risks are complex and managed by experts or internal teams. A failure to engage critically, ask probing questions, and ensure adequate systems are in place for managing foreseeable risks can lead to personal liability for breaching the duty of care.

This precedent is directly relevant to the challenges posed by AI. Given the complexity and potential opacity of AI systems and their associated risks (including borrower impact modeling), directors cannot simply defer to technology teams or AI models. The Centro standard implies a duty to actively oversee, question assumptions, understand limitations, and ensure robust governance is applied to this new category of risk. Passive acceptance without critical scrutiny could mirror the oversight failures highlighted during and after the GFC.

Lessons for Managing Systemic Financial Risk

The GFC prompted significant global regulatory reforms aimed at strengthening financial system resilience. Key measures included the Basel III accords, which increased the quantity and quality of bank capital, introduced new liquidity requirements (like the Liquidity Coverage Ratio), and established frameworks for addressing systemically important financial institutions.73

Regulators also gained new macroprudential tools (like countercyclical capital buffers and loan-to-value ratio limits) designed to address system-wide risks proactively.65 Australia adopted these reforms, often implementing them more conservatively than international minimums.73 The crisis underscored the critical importance of robust prudential regulation, active supervision, and a continuous focus on identifying and mitigating emerging systemic vulnerabilities.21

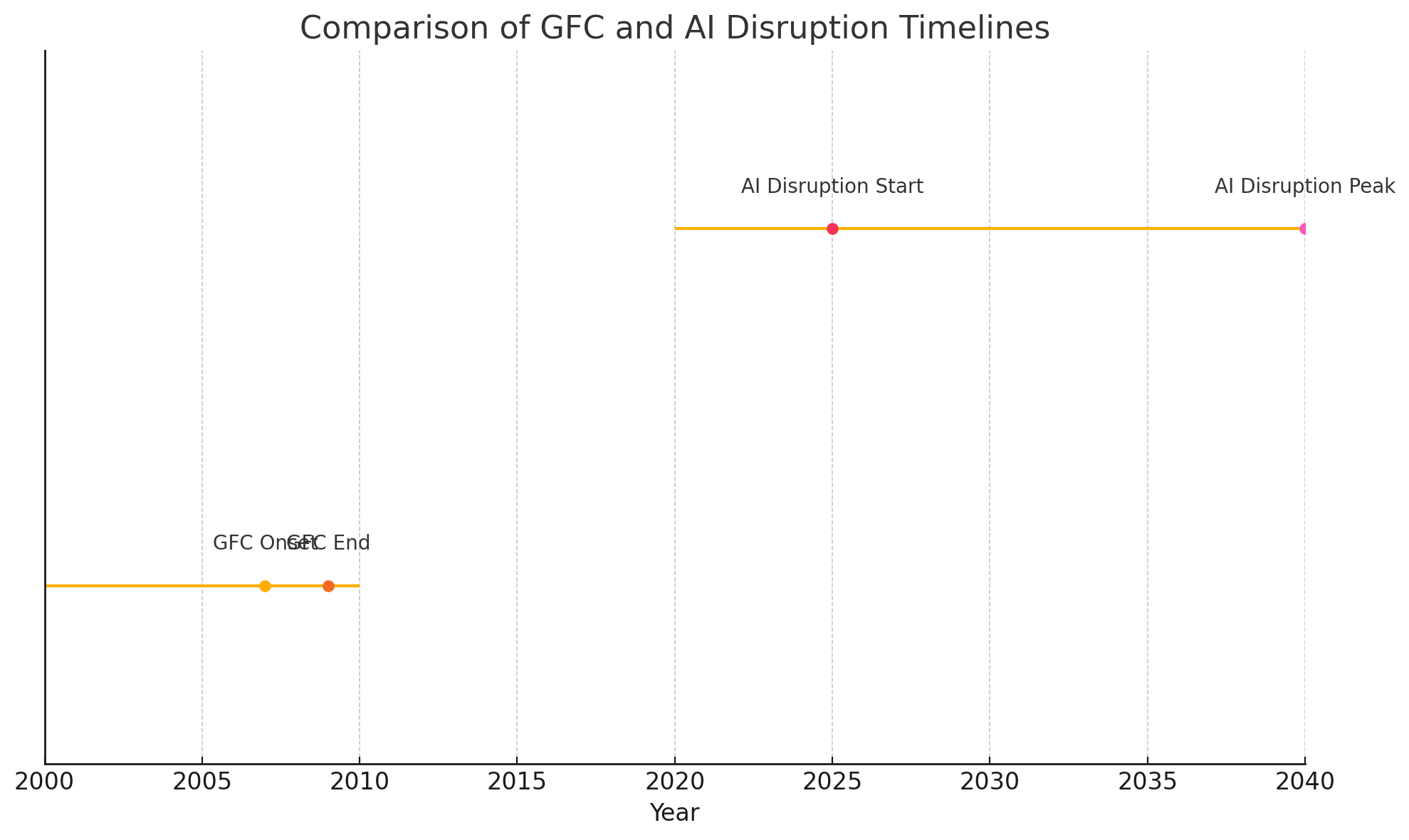

Comparative Analysis: Global Financial Crisis vs. AI-Driven Job Disruption

While both the GFC and the potential disruption from AI represent systemic risks with implications for financial stability, their underlying drivers, mechanisms, and potential impacts differ significantly. Understanding these differences is crucial for assessing the adequacy of existing risk management frameworks and regulatory tools.

Figure 4: Timeline Comparison – Global Financial Crisis (2007–2009) vs AI‑Driven Job Disruption (2025–2040)

Source: Reserve Bank of Australia (RBA) Explainer – Global Financial Crisis; Productivity Commission “Adopting Artificial Intelligence” (2021)

Contrasting Risk Drivers

The GFC was fundamentally a financial crisis, driven by factors internal to the financial system and housing market dynamics. Key drivers included the bursting of a credit-fueled US housing bubble, excessive leverage within financial institutions, poor lending standards (particularly subprime), and the proliferation of complex, poorly understood financial instruments (MBS, CDOs) that spread risk opaquely throughout the global system.67

In contrast, the potential crisis stemming from AI-driven job disruption is rooted in a technological shift impacting the real economy – specifically, the labour market. The primary driver is the deployment of AI technology that automates tasks previously performed by humans or fundamentally alters job requirements across numerous sectors.5 The risk to the financial system arises indirectly, primarily through the potential widespread erosion of borrowers’ fundamental income-generating capacity, which underpins their ability to service long-term debt.55

Potential Differences in Impact

The GFC manifested relatively rapidly, triggered by liquidity freezes in short-term funding markets, sharp declines in asset values (particularly housing and MBS/CDOs), and the failure or near-failure of major financial institutions.68 The impact on borrowers, while severe, was often linked to specific triggers like interest rate resets on adjustable mortgages or job losses resulting from the ensuing recession.

The impact of AI job disruption may unfold differently. While sudden shocks are possible (e.g., rapid automation in a specific sector), the process is more likely to be gradual but potentially more structural and persistent.9 It could involve a slow erosion of income for certain occupations due to automation or wage pressure, or difficulties in finding re-employment after displacement, leading to a gradual increase in loan stress and defaults over time, rather than a sudden wave linked to a market event.

The GFC directly imperiled the solvency and liquidity of financial institutions; AI’s primary impact on lenders is anticipated to be indirect, manifesting as deteriorating credit quality within their loan books due to borrower income stress.55

Implications for Risk Profiles

The GFC highlighted vulnerabilities related to counterparty risk (fear that other institutions would default), liquidity risk (inability to meet short-term obligations as funding dried up), market risk (falling asset prices), and valuation risk (difficulty pricing complex securities).68

AI-driven job disruption presents a different risk profile for lenders. The primary manifestation is heightened credit risk, potentially correlated across borrowers within specific occupations or industries deemed vulnerable to automation.55

It introduces a significant long-term income stability risk for borrowers that is not well captured by traditional credit scoring based on past performance. Furthermore, the use of AI in lending processes introduces new forms of model risk (bias, lack of explainability, performance issues) 22 and operational risk (system failures, cybersecurity vulnerabilities, reliance on third-party AI providers).21
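
One way to make the occupational correlation point concrete is to measure how concentrated a loan book is by borrower occupation. The sketch below uses the Herfindahl-Hirschman index (HHI) of exposure shares, a common general-purpose concentration measure. It is an illustration only: the occupation labels and balances are hypothetical, and nothing in APS 220 or RG 209 prescribes this particular metric.

```python
from collections import defaultdict


def occupation_hhi(loans):
    """Herfindahl-Hirschman index of exposure shares by occupation.

    `loans` is an iterable of (occupation, exposure) pairs. The result lies
    between 1/k (evenly spread over k occupations) and 1.0 (one occupation).
    """
    totals = defaultdict(float)
    for occupation, exposure in loans:
        totals[occupation] += exposure
    portfolio = sum(totals.values())
    if portfolio <= 0:
        raise ValueError("portfolio exposure must be positive")
    return sum((value / portfolio) ** 2 for value in totals.values())


# Hypothetical loan book: half of all exposure sits with one occupation.
example_loans = [
    ("call_centre", 400_000.0),
    ("call_centre", 600_000.0),
    ("nursing", 500_000.0),
    ("bookkeeping", 500_000.0),
]
example_hhi = occupation_hhi(example_loans)
```

A rising index over time, particularly where the dominant occupations are also those identified as automation-exposed, would be one possible prompt for closer board attention.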

Table 3: Comparison of GFC vs. Potential AI Job Disruption Risks

| Dimension | Global Financial Crisis (GFC) | Potential AI Job Disruption Risk |
| --- | --- | --- |
| Primary Driver | Financial/Market Dynamics: Housing bubble burst, subprime lending, excessive leverage [67] | Technological Change: AI adoption leading to labour market transformation [5] |
| Affected Asset/System | Financial assets (MBS, CDOs), Housing market, Financial institutions’ balance sheets [68] | Borrower income streams, Labour market structure, Loan portfolio credit quality [55] |
| Key Vulnerability | Liquidity mismatch, Counterparty risk, Asset valuation uncertainty, Securitization complexity [68] | Borrower long‑term income stability, Occupational/sectoral concentration risk, AI model risk, Operational resilience [21] |
| Timescale | Relatively rapid onset triggered by market events, followed by prolonged recession [68] | Potentially gradual erosion over years/decades, but with potential for faster sectoral shocks [9] |
| Borrower Impact Mechanism | Defaults triggered by interest‑rate resets, job losses (recession), negative equity [68] | Gradual income erosion, job displacement, wage stagnation, difficulty servicing long‑term debt [5] |
| Primary Lender Risk | Direct balance‑sheet impact (write‑downs, capital depletion), Liquidity failure [73] | Indirect impact via increased credit losses in loan portfolio due to borrower defaults [55] |
| Likely Regulatory Focus | Capital adequacy, Liquidity standards, Systemic institution oversight, Market transparency [73] | Responsible lending (income‑stability assessment), Credit‑risk modelling (scenario analysis), AI governance, Operational risk [22] |

This comparison highlights a necessary shift in the systemic risk lens. While both events threaten financial stability, the GFC demanded analysis focused on financial contagion pathways, asset valuation, and institutional liquidity. Effectively managing the risks associated with AI job disruption requires a different focus: understanding labour market structures, forecasting technological adoption rates and impacts across diverse economic sectors, assessing long‑term income trends, and managing the novel risks introduced by AI systems themselves.

This implies that risk management frameworks and supervisory approaches need to evolve beyond traditional credit‑cycle analysis to incorporate methodologies capable of assessing these complex socio‑technological dynamics and their impact on creditworthiness over extended time horizons.

Evaluating Directors’ Duty of Care Regarding AI-Induced Borrower Risk

This section brings together the legal duties, regulatory frameworks, the nature of the AI risk, and lessons from the GFC discussed so far. It evaluates the extent to which directors of corporate lenders have, or could be argued to have, a duty of care to proactively manage the risk of AI-driven borrower instability.

Is AI Disruption a “Foreseeable Risk of Harm”?

The first critical question is whether AI-driven job disruption impacting borrower repayment capacity constitutes a “foreseeable risk of harm” that directors must consider under their section 180 duty of care. The evidence presented in Section 5, drawn from numerous credible governmental, academic, and industry sources [1], overwhelmingly indicates that significant labour market transformation driven by AI is not just possible, but widely anticipated. This body of evidence strongly supports foreseeability in the legal sense.

Furthermore, the increasing recognition by courts and regulators that directors’ duties encompass oversight of other systemic, non-financial risks like climate change 20 and cybersecurity 14 provides a strong analogy.19 Given that long-term borrower income stability is fundamental to a lender’s business model, a foreseeable, systemic threat to that stability, such as AI disruption, logically falls within the scope of risks requiring director attention.

The potential harm to the company is clear: increased loan defaults, reduced profitability, potential write-downs, reputational damage, and possible regulatory action.13 Therefore, it is strongly arguable that AI-driven job disruption is a foreseeable risk of harm relevant to the Section 180 duty of care for directors of lending institutions.

The Nexus between Prudent Credit Risk Management (APS 220) and Director Duty

APRA’s prudential standard APS 220 mandates that ADIs implement and maintain a robust, forward-looking credit risk management framework.49 Ensuring the company complies with such fundamental prudential requirements is a core element of a director’s duty of care and diligence. This includes ensuring the framework is not static but adapts to emerging risks.50

Given that AI job disruption poses a foreseeable threat to credit quality, directors must oversee management’s efforts to ensure the APS 220 framework adequately identifies, assesses, monitors, and mitigates this specific risk. This involves ensuring appropriate methodologies are used (potentially beyond traditional models, incorporating scenario analysis 52), that risk appetite statements reflect this risk, and that adequate resources are allocated.50

A failure in board oversight leading to an inadequate or outdated risk management framework ill-equipped to handle the foreseeable impacts of AI could constitute a breach of the Section 180 duty.

Potential Breach of Duty to Company/Shareholders

A demonstrable failure by the board to ensure the lender adequately identifies, assesses, and manages the risks posed by AI job disruption to its loan portfolio could lead to allegations of breaching the duty of care under section 180(1). If the failure was particularly egregious or reckless, potentially showing a disregard for the company’s interests in the face of clear warnings, it might even raise questions under section 181 (good faith).24 Such breaches could expose directors to personal liability, potentially through civil penalty proceedings brought by ASIC 13 or, in certain circumstances, through derivative actions brought by shareholders.

The GFC provided precedents where directors were held liable for oversight failures related to risk management and financial reporting during a crisis 71, and similar principles could apply to failures in governing AI-related risks. Regulatory interventions, such as those seen in the CBA Prudential Inquiry citing governance and risk management shortcomings 72, or ASIC’s actions concerning AI governance 22, further highlight the potential consequences.

Indirect Impact via Responsible Lending (NCCPA/RG 209)

While directors’ duties are primarily owed to the company, the responsible lending obligations under the NCCPA create an indirect pathway linking director oversight to borrower outcomes. As argued in Section 4, the NCCPA’s requirement to assess loan suitability based on a borrower’s likely ability to repay without substantial hardship, considering foreseeable changes to their financial situation 30, arguably necessitates factoring in the risk of AI-driven income disruption for relevant borrowers seeking long-term credit.

A systemic failure by the lender to incorporate this foreseeable risk into its suitability assessments, potentially leading to the issuance of unsuitable loans on a large scale, would constitute a breach of the NCCPA by the company. If this corporate contravention resulted from inadequate oversight, poor governance, or deficient systems attributable to a lack of care and diligence at the board level, directors could face personal liability under the ‘stepping stone’ principle.13

This mechanism effectively holds directors accountable for ensuring the company meets its statutory obligations designed to protect borrowers, thereby creating an indirect duty related to borrower welfare in this specific context.

The Role of Governance, Oversight, and Disclosure

Demonstrating adequate governance is paramount for directors seeking to mitigate liability risk in the face of emerging challenges like AI disruption. This requires more than passive acceptance of management reports. It involves active engagement at the board level, including:

- Ensuring AI-related risks (including borrower impact) are explicitly discussed and considered in strategic planning and risk management frameworks.16

- Critically evaluating and challenging management’s assumptions and methodologies for assessing and modelling these risks [49].

- Ensuring the board possesses, or has access to, sufficient technological literacy to understand the implications of AI [14].

- Allocating adequate resources for developing necessary risk assessment capabilities, potentially including new data sources or modelling techniques like scenario analysis.51

- Reviewing and updating the company’s Risk Appetite Statement to reflect the board’s tolerance for AI-related credit risks.49

- Ensuring transparent and meaningful disclosure to shareholders and the market regarding how these emerging risks are being identified, governed, and managed.20

The increasing focus of institutional investors and proxy advisors on how companies navigate technological disruption, ESG factors, and other long-term risks adds another layer of pressure.3 These influential stakeholders expect proactive governance and transparent disclosure on how boards are overseeing emerging challenges like AI.

Engagement priorities often include risk management, strategic resilience, and board oversight.81 A perceived failure by the board to adequately address the foreseeable risks of AI disruption could attract negative attention from investors, potentially leading to voting dissent, challenges to director appointments, reputational damage, and impacts on share price.80

This market pressure effectively reinforces the legal duty of care, raising the practical standard expected of directors regarding their engagement with significant emerging risks like AI. Ignoring the issue becomes not only a potential legal failing but also a significant governance and market relations risk.

Recommendations for Directors and Regulators

Based on the analysis of directors’ duties, regulatory requirements, the nature of AI-driven job disruption risk, and lessons from the GFC, the following recommendations are proposed for boards of corporate lenders and regulators.

For Boards of Corporate Lenders:

Enhance Risk Identification & Scenario Analysis:

- Direct management to develop specific, plausible scenarios modelling the potential impact of varying degrees and types of AI-driven job disruption on the lender’s loan portfolio quality over the medium to long term.

- Ensure these scenarios incorporate analysis segmented by industry, occupation, geography, and, where relevant, borrower demographics identified as vulnerable based on credible external research [5].

- Integrate the findings from scenario analysis and stress testing into the institution’s Internal Capital Adequacy Assessment Process (ICAAP) [50] and overall strategic planning.

- Invest in the necessary data infrastructure and analytical capabilities (potentially including AI/ML tools, subject to robust model risk management [51]) to support these forward-looking assessments [52].
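
The kind of segmented scenario analysis described above can be sketched in a few lines. The example below computes expected loss (exposure × probability of default × loss given default) per occupational segment and scales each segment's default probability by a scenario severity and an automation-exposure score. Every name and number here is an assumption made for illustration: the `ai_exposure` scores, the three scenario severities, and the linear scaling rule are hypothetical and are not taken from APS 220, APG 220, or any cited research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    occupation: str
    exposure: float      # outstanding balance in AUD
    baseline_pd: float   # annual probability of default before any stress
    lgd: float           # loss given default, as a fraction of exposure
    ai_exposure: float   # hypothetical automation-exposure score in [0, 1]


# Hypothetical severities: how strongly default risk scales with AI exposure.
SCENARIOS = {"baseline": 0.0, "gradual": 1.0, "rapid": 3.0}


def stressed_pd(segment: Segment, severity: float) -> float:
    """Scale the baseline PD linearly with AI exposure, capped at 1.0."""
    scaled = segment.baseline_pd * (1.0 + severity * segment.ai_exposure)
    return min(scaled, 1.0)


def expected_loss(portfolio, severity: float) -> float:
    """Sum of exposure x stressed PD x LGD across all segments."""
    return sum(s.exposure * stressed_pd(s, severity) * s.lgd for s in portfolio)


# Hypothetical two-segment portfolio.
portfolio = [
    Segment("call_centre", 1_000_000.0, 0.02, 0.25, 0.8),
    Segment("nursing", 1_000_000.0, 0.01, 0.25, 0.1),
]
results = {name: expected_loss(portfolio, sev) for name, sev in SCENARIOS.items()}
```

In practice the scaling rule would need to be justified and validated under the lender's model risk framework; the point of the sketch is only that segment-level outputs of this kind are what a board could ask management to report.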

Strengthen Governance & Oversight:

- Review board composition to ensure adequate collective understanding of technology, digital transformation, and AI-related risks, potentially through director training or recruitment [14].

- Formally assign oversight responsibility for AI strategy and associated risks (including credit risk impacts) to a specific board committee (e.g., Risk Committee) or the full board, ensuring regular reporting and discussion.

- Actively challenge management’s assumptions regarding the potential impact of AI disruption, the methodologies used for risk assessment, the adequacy of mitigation strategies, and the validation of any AI models employed in the process [49].

- Review and update the company’s Risk Appetite Statement to explicitly address the board’s tolerance for credit risk arising from long-term technological disruption and AI impacts on borrowers [49]. Document board deliberations on these matters.

Review Underwriting Standards & Responsible Lending Practices:

- Evaluate whether current underwriting standards and processes for assessing long-term loan applications (particularly mortgages) adequately capture potential future income volatility or instability linked to AI disruption risk for borrowers in vulnerable occupations/sectors.

- Consider incorporating additional factors or inquiries into the assessment process, consistent with RG 209’s principles of reasonable inquiries into income consistency and foreseeable changes [44], potentially using data on occupational automation risk where reliable sources exist.

- Ensure robust compliance with NCCPA responsible lending obligations, paying particular attention to the forward-looking aspects of the suitability assessment for long-term loans in the context of potential AI disruption.
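
As an illustration of how an occupational risk factor might feed into an individual assessment, the sketch below applies an income haircut proportional to a borrower's automation-risk score and checks the repayment-to-income ratio against a threshold. It is a simplified sketch under stated assumptions, not a method drawn from RG 209: the `automation_risk` score, the 30% maximum haircut, and the 35% ratio threshold are all hypothetical, and a flag here would at most indicate that further inquiries may be warranted.

```python
def monthly_repayment(principal: float, annual_rate: float, years: int) -> float:
    """Level monthly repayment on a principal-and-interest loan."""
    n = years * 12
    r = annual_rate / 12
    if r == 0:
        return principal / n
    return principal * r / (1 - (1 + r) ** -n)


def income_stress_flag(monthly_income: float, principal: float,
                       annual_rate: float, years: int,
                       automation_risk: float,
                       max_haircut: float = 0.30,   # hypothetical parameter
                       max_ratio: float = 0.35):    # hypothetical parameter
    """Return (flagged, ratio) after haircutting income by occupational risk.

    `automation_risk` is a hypothetical score in [0, 1]; income is reduced
    by max_haircut * automation_risk before the ratio is calculated.
    """
    if not 0.0 <= automation_risk <= 1.0:
        raise ValueError("automation_risk must be between 0 and 1")
    stressed_income = monthly_income * (1 - max_haircut * automation_risk)
    ratio = monthly_repayment(principal, annual_rate, years) / stressed_income
    return ratio > max_ratio, ratio


# Same hypothetical loan and income, two different occupational risk scores.
low_flag, low_ratio = income_stress_flag(11_000, 600_000, 0.06, 30, 0.0)
high_flag, high_ratio = income_stress_flag(11_000, 600_000, 0.06, 30, 0.8)
```

With these illustrative inputs the same loan passes for the low-risk occupation and is flagged for the high-risk one, which mirrors the idea that identical applications may call for different depths of inquiry.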

Enhance Disclosures:

- Improve the transparency and quality of disclosures to shareholders and the market regarding the governance, identification, assessment, and management of AI-related risks, including potential impacts on the loan portfolio and credit quality.

- Consider addressing these risks explicitly in relevant sections of the Annual Report, such as the Operating and Financial Review (OFR), consistent with regulatory expectations for disclosing material risks [20].

- Align disclosures with evolving expectations from institutional investors and proxy advisors regarding oversight of technological and long-term strategic risks [80].

For Regulators (ASIC/APRA):

1. Provide Clearer Guidance:

- Issue joint or coordinated guidance clarifying regulatory expectations for how lenders should incorporate the risks of long-term technological disruption, specifically AI-driven job displacement, into their credit risk management frameworks (under APS 220) and responsible lending suitability assessments (under NCCPA/RG 209).

- Provide guidance on acceptable methodologies for assessing and modelling these novel, forward-looking risks, potentially including expectations around the use and validation of scenario analysis, stress testing, or alternative data sources. Address the management of model risk associated with using AI/ML tools in these assessments [50].

2. Supervisory Focus:

- Increase supervisory scrutiny of how institutions are identifying, assessing, governing, and mitigating AI-related risks, including the potential impact on borrower repayment capacity and overall portfolio quality. This should cover risk management frameworks, underwriting practices, modelling techniques, and board oversight.

- Conduct thematic reviews focused on AI risk management in lending and share findings and examples of better practice with the industry to lift standards [22].

- Incorporate AI disruption scenarios into future stress testing exercises for ADIs [23].

3. Collaboration:

- Maintain and strengthen collaboration between ASIC and APRA on the regulation and supervision of AI-related risks in the financial sector [63]. Ensure consistent messaging and avoid regulatory duplication where possible, balancing prudential safety objectives with consumer protection mandates.

Conclusion

The duty of care and diligence imposed on directors under section 180 of the Corporations Act 2001 (Cth) requires them to navigate the evolving risk landscape pertinent to their company’s specific circumstances. This duty is owed primarily to the company itself.11

Artificial Intelligence stands as a transformative technology with the documented potential to significantly disrupt labour markets and impact the long-term income stability of individuals across various sectors.1 For corporate lenders whose business models rely heavily on the long-term repayment capacity of borrowers (e.g., mortgage providers), this disruption constitutes a material and foreseeable risk. The extensive reporting by government bodies and research institutions solidifies its status beyond speculation, demanding strategic consideration.1

While Australian common law generally does not impose a direct duty of care from lenders to borrowers regarding loan suitability 27, the statutory responsible lending framework under the NCCPA fills a significant part of this gap for consumer credit. The Act mandates a forward-looking assessment of a borrower’s ability to repay without substantial hardship, requiring consideration of foreseeable changes to their financial situation.30 This statutory obligation arguably compels lenders to factor in the potential impact of AI job disruption on vulnerable borrowers seeking long-term credit.

Furthermore, APRA’s prudential standard APS 220 requires ADIs to maintain robust credit risk management frameworks capable of identifying, assessing, and mitigating all material risks, including emerging and systemic ones.49 Overseeing the adequacy and adaptation of this framework is a core component of a director’s section 180 duty.

Therefore, while directors do not owe a direct duty to borrowers regarding AI risk under the current general law, a compelling legal and regulatory imperative exists for them to ensure their organisations address this challenge. This imperative arises from the confluence of:

- The director’s fundamental duty of care to the company (s 180), which demands oversight of foreseeable risks impacting the business.

- The need to ensure the company complies with statutory responsible lending obligations (NCCPA), which implicitly require consideration of long-term income stability risks like AI disruption.

- The need to ensure the company meets prudential requirements (APS 220) for managing credit risk, including adapting frameworks for novel systemic threats.

A failure by directors to demonstrate adequate oversight, critical inquiry, and strategic adaptation concerning the impact of AI on borrowers and loan portfolio quality could expose them to liability for breaching their duty of care and diligence. This liability risk is amplified by the ‘stepping stone’ principle, where inadequate director oversight facilitating corporate breaches (e.g., of the NCCPA) can lead to personal accountability.13

The standard of care expected of directors is not static; it evolves with technological change and regulatory focus.19 In the age of AI, proactive governance and diligent risk management regarding its foreseeable impacts are no longer optional, but essential components of directorial responsibility in the financial sector.

Inline citations in order of appearance:

[1] Making the most of the AI opportunity – pc.gov.au

[2] Chapter 3 – Developing the AI industry in Australia – aph.gov.au

[3] Chapter 4 – Impacts of AI on industry, business and workers – aph.gov.au

[4] Intelligent financial system: how AI is transforming finance – bis.org

[5] Artificial intelligence: What directors need to know – pwc.com.au

[6] ASIC REP 798 – natlawreview.com

[7] Financial Stability in Focus – bankofengland.co.uk

[8] Financial Stability Review – rba.gov.au

[9] Directors’ Duties in Australia – Baker McKenzie

[10] General Duties of Directors – lawhandbook.sa.gov.au

[11] Section 180 Director Guide – deiterate.com

[12] Stepping-Stones to Liability – Federal Law Review

[13] ASIC v RI Advice Group [2022] FCA 496

[14] Cassimatis v ASIC [2020] FCAFC 52

[15] ASIC v Healey [2011] FCA 717, 196 FCR 291

[16] Responsible lending conduct – ATO

[17] National Consumer Credit Protection Act 2009 – AustLII

[18] RG 209 Responsible Lending – ASIC

[19] Responsible Lending Guide – Gadens

[20] Arrium and Director Duties – Baker McKenzie

[21] AI and Prices – AllianceBernstein

[22] APS 220 Credit Risk Management – apra.gov.au

[23] APG 220 Credit Risk Guide – apra.gov.au

[24] APS 220 Proposed Reforms – allens.com.au

[25] Directors’ Liability Risks – Liberty Specialty Markets

[26] GFC Overview – rba.gov.au

[27] GFC in Australia – citeseerx

[28] Financial System Inquiry Submission – rba.gov.au

[29] APRA Inquiry into CBA – apra.gov.au

[30] Securitization and the GFC – Investopedia

[31] Foreseeable Risk and Duty – Gadens

[32] Stepping Stone Liability – Hamilton Locke

[33] AI Stability Impacts – fsb.org

[34] Parliamentary Review of the GFC – aph.gov.au

[35] ASIC Effectiveness Review – fraa.gov.au

[36] CSIRO AI Roadmap – https://www.csiro.au/…

[37] Tech Council AI Opportunity – https://techcouncil.com.au/…

[38] Nedelkoska & Quintini, OECD Working Paper 202 – https://doi.org/10.1787/1815199x

[39] OECD Employment Outlook 2014 – https://doi.org/10.1787/empl_outlook-2014-6-en

[40] RBA: Global Financial Crisis Explainer – rba.gov.au

[41] Productivity Commission AI Report – pc.gov.au

[42] PwC: Will Robots Really Steal Our Jobs? – pwc.com

Legal Disclaimer

This article is provided for informational and educational purposes only and does not constitute legal advice. While every effort has been made to ensure the accuracy and completeness of the information, readers should consult a qualified legal professional before acting on any matters discussed herein. The author and publisher disclaim any liability for losses or damages arising from reliance on the content of this article. Use of this material is at your own risk.

Open Innovation License

This analysis, “AI and Directors’ Duty of Care: Managing Job Disruption Risk”, is released under Creative Commons Attribution-NonCommercial 4.0 International by John Richard Cosstick, June 2025.

Part of ongoing open innovation contributions to AI governance, including submission to UN AI for Good Impact Initiative. Shown here:

📋 You may: Share, adapt, and build upon this work

🎯 You must: Provide attribution

❌ You cannot: Use it commercially without permission

Full license: https://creativecommons.org/licenses/by-nc/4.0/
