Executive Summary

Artificial intelligence is no longer an experimental edge case in financial planning—it is now embedded in everyday workflows. Global research from the Financial Planning Standards Board (FPSB) shows that a large majority of planners now use, or plan to use, AI tools for client analytics, portfolio construction, and workflow automation.[1] At the same time, regulators and professional bodies are clear: AI does not dilute a planner’s duty of care. Existing obligations around best interests, suitability, documentation, and governance apply regardless of whether advice is human, hybrid, or robo-delivered.[2–7]

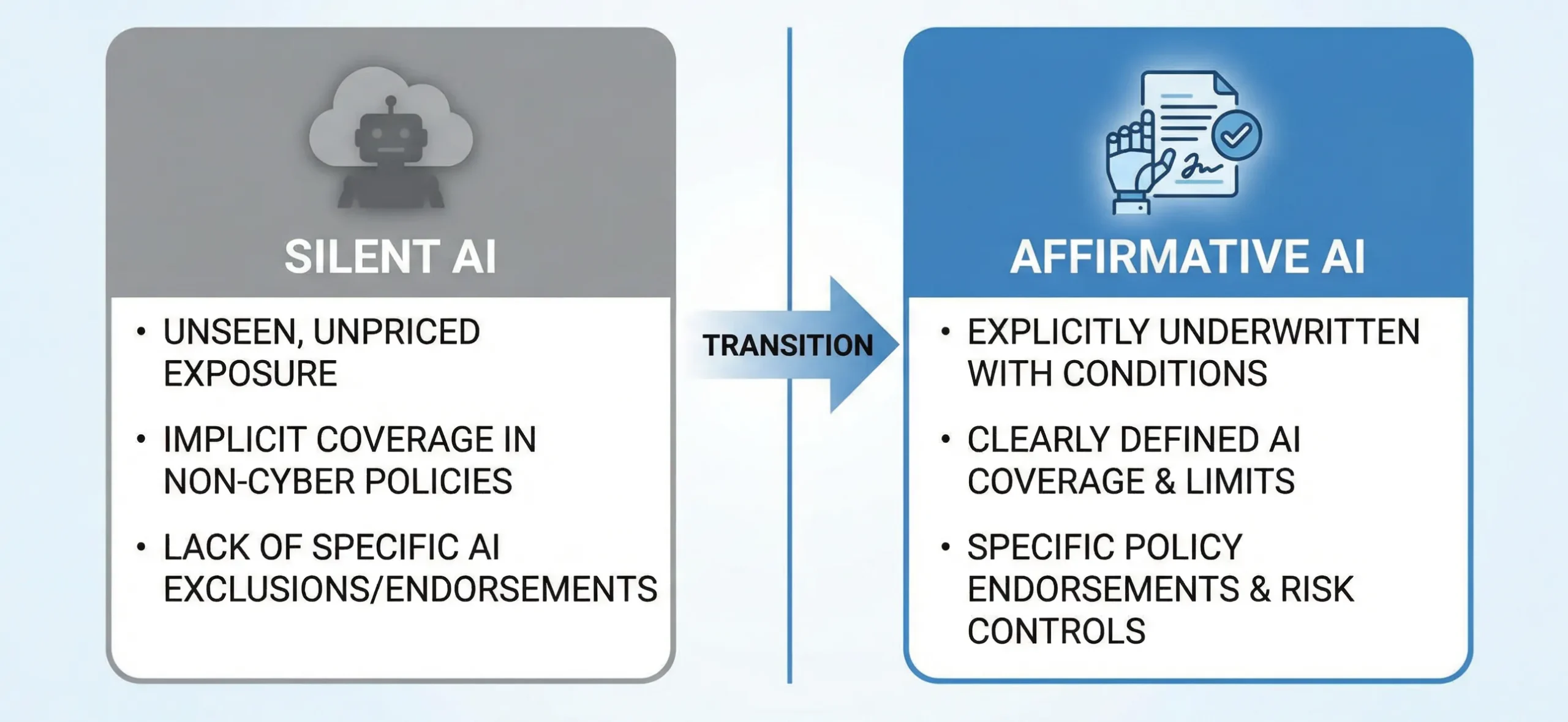

This creates a growing gap between rapid AI adoption and lagging governance. Professional indemnity (PI) insurers warn about “silent AI” (unintended coverage of AI-driven losses under legacy policies) and are starting to offer “affirmative AI” covers to firms that can demonstrate strong controls and audit-ready logs.[8],[10] Macro-level research from the IMF, BIS, OECD, central banks and the World Bank connects AI-driven job disruption to income volatility and retirement-savings risk, with potential knock-on pressure on mortgage defaults, particularly for mid-career professionals in automatable occupations.[11–16]

This article proposes a practical response tailored for planners, licensees, lenders, and boards:

- Verifiable Human Contribution (VHC) – audit-ready evidence that a suitably qualified human has reviewed, challenged and documented AI-assisted advice before it reaches the client.

- AI Management Systems (AIMS) – an ISO/IEC 42001-style governance framework that inventories AI tools, controls their lifecycle, and embeds VHC into day-to-day operations.[17–19]

Together, VHC and AIMS turn AI from an opaque liability into a governed, insurable capability that boards can oversee, and they help improve the long-term resilience of retirement strategies and housing-loan serviceability in an era of structural labour-market change.

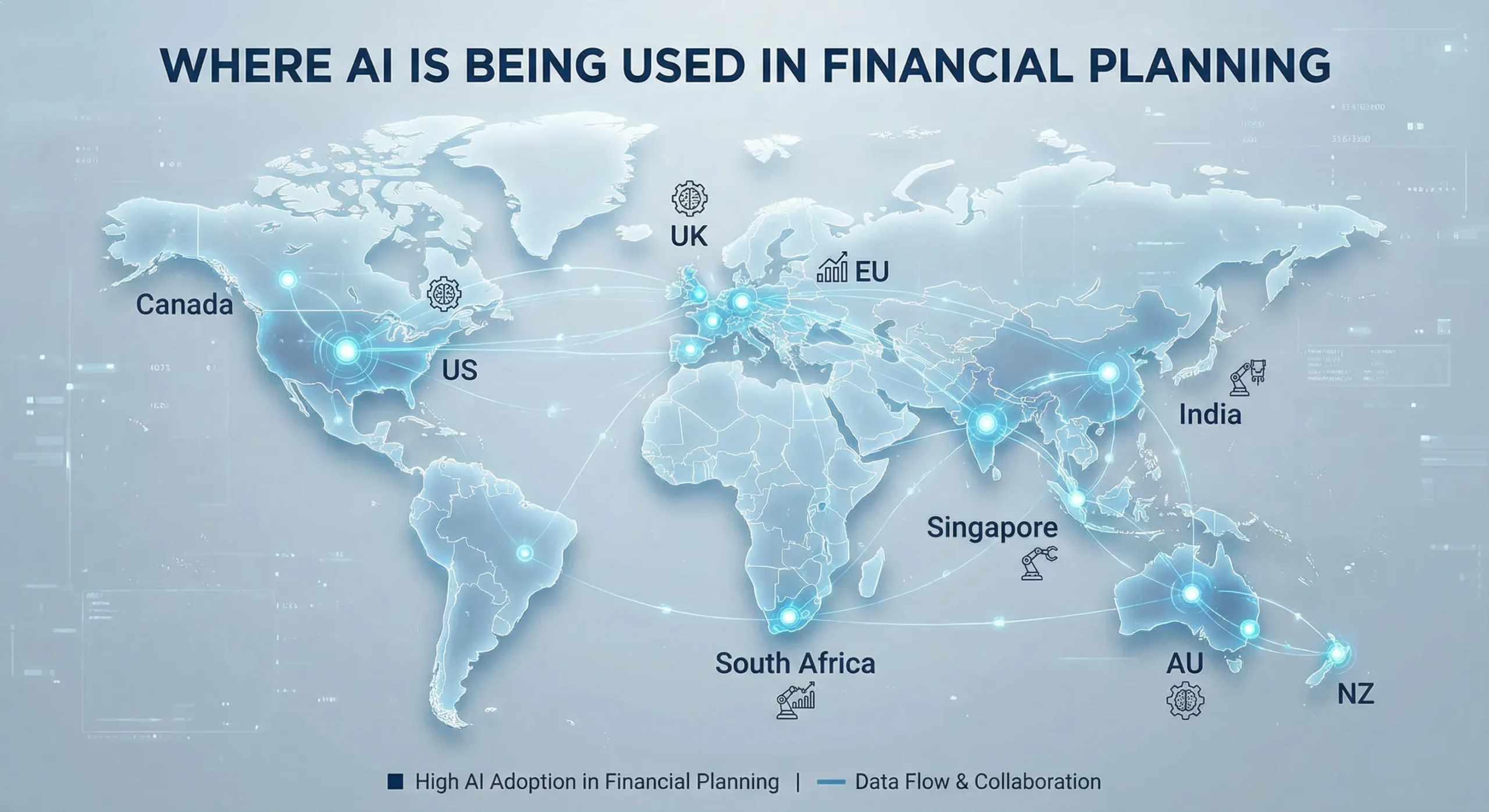

1. How AI Is Changing Financial Planning Around the World

A. Current use cases in advice firms

Recent FPSB global research and national surveys (Australia, India, South Africa and others) show that AI is now used across the advice lifecycle, especially for client segmentation, risk-profiling engines, cash-flow and retirement simulations, portfolio optimisation, and drafting Statements of Advice (SoAs) or suitability letters.[1],[20],[21]

Common use cases include:

- Data aggregation and analytics – consolidating account and behavioural data to identify advice opportunities.

- Goal-based planning engines – AI-supported tools that convert client inputs into recommended savings patterns and asset allocations.

- Robo- and hybrid advice – algorithm-driven model portfolios with optional human review.

- LLM-assisted drafting – using large language models to draft SoAs, emails and educational material, which advisers then edit.

- Compliance and monitoring – AI tools that flag outlier advice files, missing disclosures or inconsistent risk profiles.[22],[23]

B. Benefits, and why they are not “free”

Professional bodies and regulators acknowledge real upside:

- Productivity and cost – planners can serve more clients and close the “advice gap” with faster modelling and documentation.[1],[2]

- Personalisation – AI supports more granular, scenario-based recommendations, especially for complex household structures.[3],[21]

- Risk detection – anomaly- and pattern-detection algorithms can highlight suitability issues earlier.[22]

But these benefits come with material risks:

- Hallucinations and opacity – LLMs can produce plausible but incorrect explanations or numbers, often without clear lineage.[24]

- Bias and fairness – training data can under-represent certain demographics, skewing recommendations or credit outcomes.[2],[25]

- Documentation gaps – if firms cannot explain how a model arrived at a recommendation, they struggle to demonstrate best-interest and suitability compliance.[4–7]

- Over-reliance on “auto-pilot” – staff may assume tools are “right by default,” weakening professional scepticism.[26]

The core challenge is not whether to use AI, but how to govern it so that human responsibility remains visible and defensible.

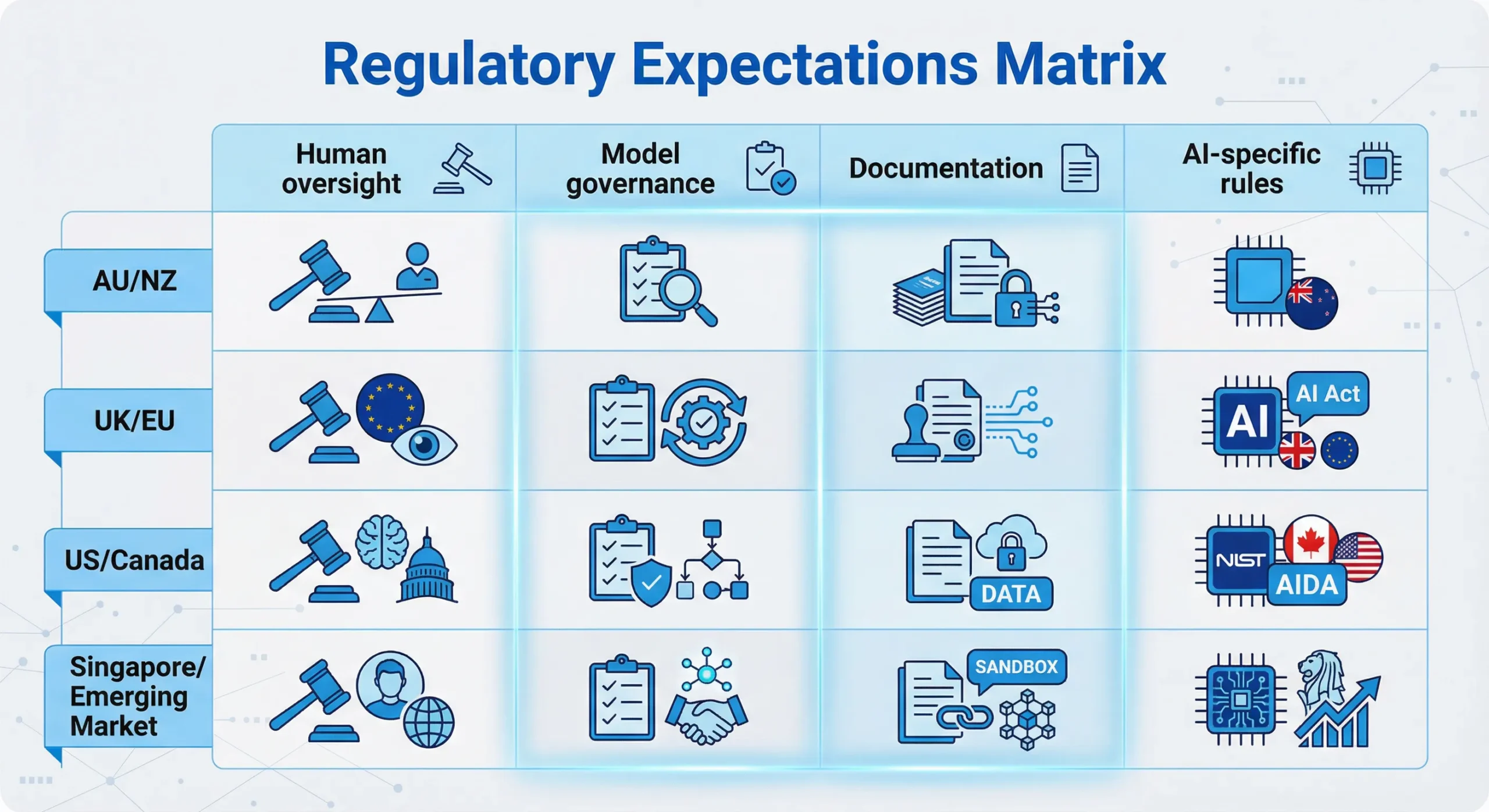

2. Regulatory Expectations: “Old Duties, New Tools”

Across the jurisdictions covered in this article, regulators have taken a consistently technology-neutral approach: established duties continue to apply, while additional guidance emphasises algorithmic governance and human oversight.

A. Australia and New Zealand

In Australia, ASIC’s regulatory guide on digital advice (RG 255) makes it clear that licensees and advisers remain fully responsible for advice quality when using algorithms.[4] They must:

- Hold appropriate licences,

- Ensure algorithms are designed and tested to produce useful advice, and

- Monitor performance, updating or withdrawing tools where necessary.

More broadly, ASIC’s advice obligations—best-interest duty, appropriate advice, and record-keeping—apply identically to AI-assisted SoAs and projections.[5]

New Zealand’s Financial Markets Authority (FMA) allows personalised digital advice under a licensing regime, but stresses competence, governance and client-interest obligations that apply regardless of whether advice is delivered by a human or a digital channel.[6]

B. UK and EU

The UK FCA’s guidance on automated and streamlined advice confirms that fact-finding, suitability and disclosure rules apply to robo-advice in the same way as traditional advice.[7] Firms must validate data inputs, test and monitor algorithms, and keep adequate records showing how recommendations were generated.

At the EU level, ESMA has warned firms against relying on “public” AI tools to deliver investment recommendations because such tools are not bound by client-interest duties.[24] The EU AI Act adds a risk-based overlay: it classifies certain AI systems as “high-risk”, including those used for creditworthiness assessment and credit scoring of individuals, risk assessment and pricing in life and health insurance, and employment decisions, and it requires human oversight, logging and quality management for them.[27]

C. US and Canada

The US SEC has clarified that robo-advisers owe the same fiduciary duties of care and loyalty as human advisers, including a duty to provide advice aligned with client objectives and constraints.[28] FINRA’s report on AI in the securities industry calls for robust model-governance frameworks, supervision, and testing where AI tools affect customer outcomes.[22]

In Canada, the Office of the Superintendent of Financial Institutions (OSFI) has released Guideline E-23 on model-risk management, explicitly covering AI and machine-learning models used by regulated financial institutions.[9] Canadian securities regulators likewise emphasise that online and digital advice platforms must meet know-your-client (KYC), know-your-product (KYP) and suitability requirements, with appropriate human oversight.[29]

D. Singapore and an Emerging Market Lens

The Monetary Authority of Singapore (MAS) has been an early mover on AI governance. Its FEAT principles (Fairness, Ethics, Accountability and Transparency) and its subsequent consultation on AI risk-management guidelines call on firms to test for bias, establish clear lines of accountability, and provide explanations appropriate to clients.[2],[25]

In emerging markets such as India and South Africa, regulators are beginning to treat robo- and AI-enabled advice as a form of regulated investment advice, with licensing, disclosure and suitability requirements like those in developed markets.[20],[21]

E. Verifiable Human Contribution Is Already Implicit

Across all these regimes, three themes are remarkably consistent:

- A human professional remains responsible for advice quality.

- Firms must explain and evidence their reasoning, including where algorithms are used.

- Models must be governed, tested, and monitored within a risk-management framework.

In other words, regulators are already demanding Verifiable Human Contribution, even if they use phrases like “human oversight,” “professional judgement” or “accountability” instead.[11],[17]

3. PI Insurance: From “Silent AI” to “Affirmative AI”

PI and D&O insurers are moving quickly to understand how AI changes loss patterns and reserving needs:

- Legal and broker commentary in markets such as Australia, the UK and the EU notes that existing PI policies can already respond to AI-assisted advice errors, but only if those errors fit within the insuring clause and are not carved out by exclusions.[8],[10]

- Reinsurers such as Swiss Re describe AI as a new class of “silent exposure,” analogous to early “silent cyber” risks, where policies unintentionally cover large accumulations of AI-driven losses.[10]

- Specialist covers like Munich Re’s aiSure™ illustrate the trajectory towards affirmative AI insurance, where model performance and algorithm failures are explicitly underwritten, often conditional on strong governance and testing.[10]

For financial planners and mortgage intermediaries, this translates into three questions PI underwriters increasingly ask:

- Where is AI used in your advice process?

- What controls and human sign-offs exist?

- Can you reconstruct decisions if there is a claim or regulatory investigation?

A firm that can answer these questions with structured logs, documented VHC workflows, and an AIMS-style framework will be viewed very differently from one that relies on untracked tools and informal practices.

4. AI, Job Disruption and the New Serviceability Risk

A. Labour-market shocks in “safe” occupations

A substantial body of research now highlights how AI reshapes labour markets:

- IMF work on AI and inequality suggests that high-skill workers may gain while mid-skill and some lower-skill workers risk displacement or wage stagnation.[11]

- BIS and ECB analyses show that a large share of jobs in advanced economies are highly exposed to AI, particularly in knowledge-intensive services, finance, and professional occupations.[12],[13]

- OECD and World Bank reports emphasise that AI-related adjustments could increase income volatility, especially for workers in automatable tasks who are unable to reskill quickly.[14–16]

The important point for planners is that many of the clients traditionally considered “prime” borrowers and investors—lawyers, accountants, managers, software developers—now face non-trivial automation risk over a 20- to 30-year horizon.

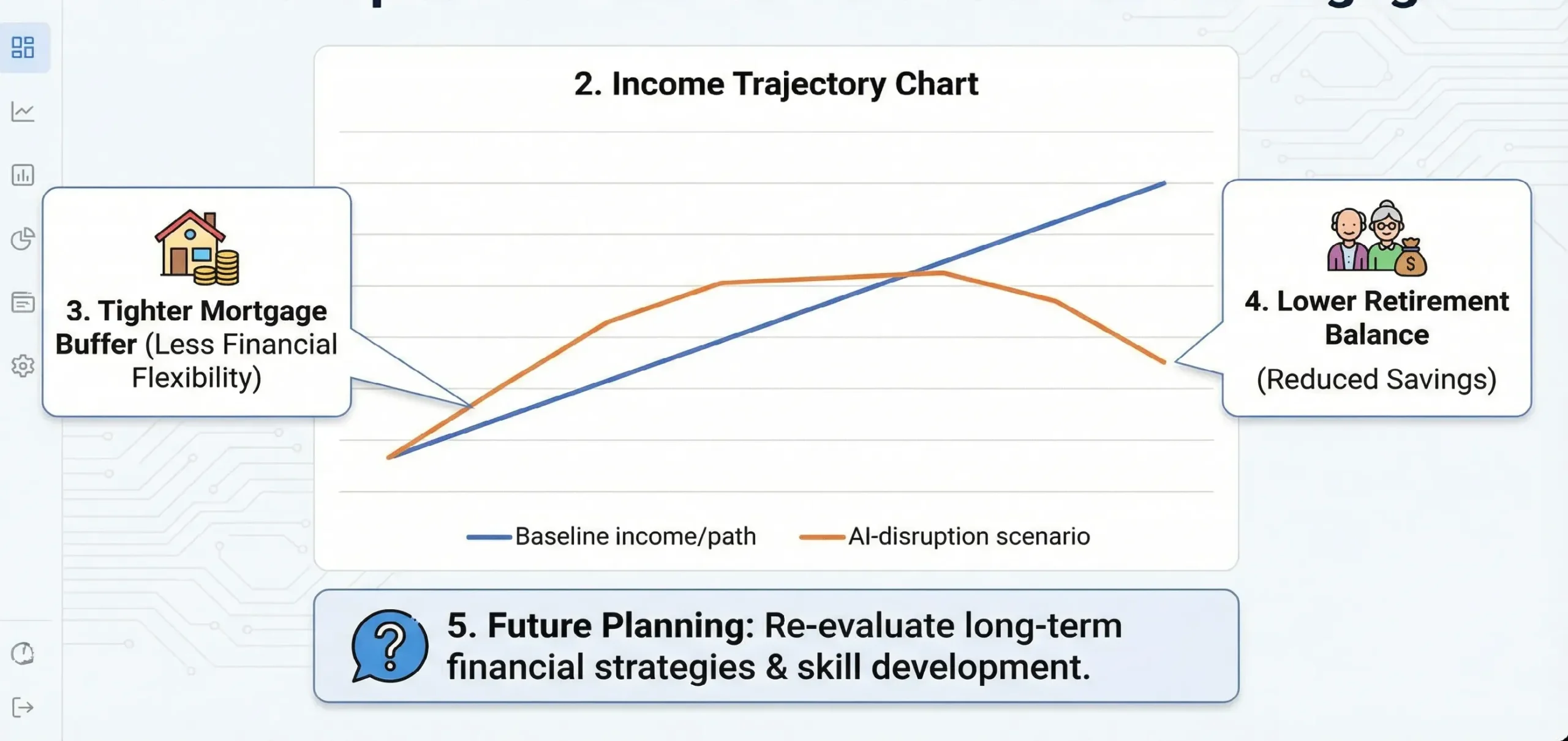

B. Implications for retirement adequacy

If earnings paths for exposed occupations become more volatile or peak earlier than expected, traditional assumptions about wage growth, contribution rates and retirement balances may be optimistic. IMF and OECD stress-testing work already explores how demographic and productivity trends affect pension systems; the next step is explicitly modelling AI-driven employment shocks.[11],[14]

For individual clients, planners should consider:

- Scenario analysis where income falls or stagnates mid-career.

- The value of reskilling and upskilling investments, and how these affect savings capacity.

- Adjusting retirement ages and draw-down assumptions to reflect a more uncertain earnings profile.
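The first of these scenarios can be made concrete with a simple deterministic projection. The sketch below is illustrative only: it assumes a constant real return, a flat contribution rate, and a hypothetical shock in which salary drops by a fixed fraction at a chosen age and then stops growing in real terms. The `Scenario` fields, the `project_balance` function and every default value are hypothetical and not calibrated to any research cited here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Scenario:
    """Hypothetical inputs for a deterministic, real-terms retirement projection."""
    start_age: int = 40
    retire_age: int = 67
    salary: float = 120_000.0        # current annual salary
    wage_growth: float = 0.01        # real wage growth per year before any shock
    contribution_rate: float = 0.12  # share of salary contributed each year
    real_return: float = 0.04        # assumed constant real return on the balance
    balance: float = 250_000.0       # current retirement balance
    shock_age: Optional[int] = None  # age at which an AI-related income shock hits
    shock_cut: float = 0.0           # one-off fractional salary cut at the shock


def project_balance(s: Scenario) -> float:
    """Project the retirement balance at s.retire_age under scenario s."""
    balance, salary, shocked = s.balance, s.salary, False
    for age in range(s.start_age, s.retire_age):
        if s.shock_age is not None and age >= s.shock_age and not shocked:
            salary *= 1 - s.shock_cut  # income falls once at the shock age...
            shocked = True
        # Grow the balance for one year, then add that year's contribution.
        balance = balance * (1 + s.real_return) + salary * s.contribution_rate
        if not shocked:
            salary *= 1 + s.wage_growth  # ...and stagnates in real terms afterwards
    return balance


baseline = project_balance(Scenario())
stagnation = project_balance(Scenario(shock_age=50))  # no cut, but wages stop growing at 50
shock = project_balance(Scenario(shock_age=50, shock_cut=0.25))
print(f"Baseline:             {baseline:,.0f}")
print(f"Stagnation from 50:   {stagnation:,.0f}")
print(f"25% income cut at 50: {shock:,.0f}")
```

Comparing the three figures side by side, rather than reporting only the baseline, is what turns an AI-disruption concern into a documented, reviewable assumption on the client file.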

C. Mortgage serviceability and housing-market resilience

Central bank and IMF analysis of housing bubbles and systemic risk shows how income shocks translate into default risk, particularly when households carry high debt-to-income ratios and limited buffers.[15] Few studies have yet isolated AI as a distinct driver, but AI-driven sectoral disruption is a plausible additional shock channel.

Mortgage brokers, planners, and bank credit teams should therefore:

- Integrate employment-sector automation risk into serviceability assessments for long-dated loans.

- Stress test for temporary or permanent reductions in income, not just interest-rate shocks.

- Consider encouraging more conservative leverage and higher buffers for clients in highly exposed roles.
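A combined rate-and-income stress test of the kind listed above might look like the minimal sketch below. It assumes a standard principal-and-interest loan and a simple affordability rule (monthly repayments must not exceed a chosen share of net income). The 3-percentage-point rate buffer, the 30% threshold, the income-cut scenarios and the function names are illustrative assumptions, not any lender's or regulator's actual serviceability policy.

```python
def monthly_repayment(principal: float, annual_rate: float, years: int) -> float:
    """Monthly repayment on a standard principal-and-interest (amortising) loan."""
    n = years * 12
    r = annual_rate / 12
    if r == 0:
        return principal / n
    return principal * r / (1 - (1 + r) ** -n)


def stress_test(principal: float, annual_rate: float, years: int,
                monthly_net_income: float, rate_buffer: float = 0.03,
                income_cuts: tuple = (0.0, 0.2, 0.4),
                max_ratio: float = 0.30) -> list[tuple[float, float, bool]]:
    """Return (income_cut, repayment_to_income, passes) for each income scenario.

    Repayments are assessed at annual_rate + rate_buffer, so each row combines
    an interest-rate shock with a reduction in the borrower's net income.
    """
    repayment = monthly_repayment(principal, annual_rate + rate_buffer, years)
    results = []
    for cut in income_cuts:
        income = monthly_net_income * (1 - cut)
        ratio = repayment / income if income > 0 else float("inf")
        results.append((cut, ratio, ratio <= max_ratio))
    return results


for cut, ratio, ok in stress_test(500_000, 0.06, 30, monthly_net_income=15_000):
    verdict = "pass" if ok else "FAIL"
    print(f"income -{cut:.0%}: repayments = {ratio:.1%} of net income ({verdict})")
```

In this illustrative case the loan passes a rate shock alone but fails once income falls by 20%, which is exactly the kind of result a human reviewer would be expected to note and act on for a client in a highly exposed role.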

All of this strengthens the case for VHC-documented human stress-testing on top of automated credit and affordability models.

5. Defining Verifiable Human Contribution (VHC) in Practice

A. Working definition

For this article:

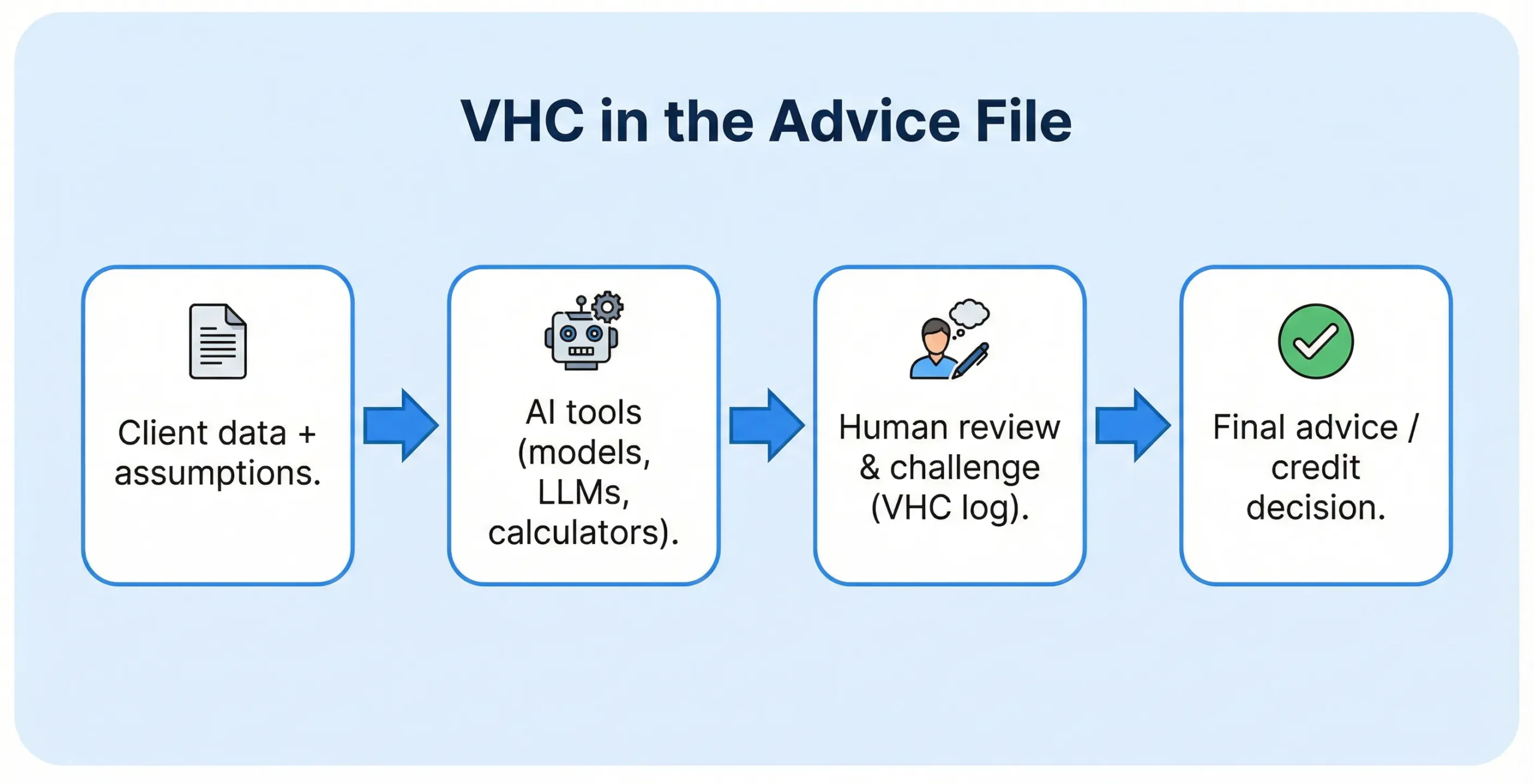

Verifiable Human Contribution (VHC) is audit-ready evidence that a qualified human professional has reviewed, challenged and documented AI-assisted outputs before they become financial advice or credit decisions.

VHC is not a marketing slogan. It is a proposed operational standard, the subject of pending patent applications AU 2025220863 and PCT/IB2025/058808, designed to give regulators, insurers and courts a verifiable way to confirm that real human judgment shaped an AI-assisted decision.

B. Where VHC is non-negotiable

High-impact decisions that should always carry VHC evidence include:

- Comprehensive retirement strategies and decumulation plans.

- Superannuation/pension fund selection and switching recommendations.

- Gearing, margin loans and leveraged investment strategies.

- Residential and investment-property mortgage approvals, refinancing and restructures.

- Debt-reduction and consolidation strategies affecting housing security.

C. File-level artefacts that prove VHC

Practical artefacts could include:

- AI Interaction Log – capturing prompts, data inputs, model versions and key outputs used in the advice process.

- VHC Review Note – a structured note where the adviser documents:

  - What the AI suggested,

  - What they accepted, adjusted or rejected, and

  - Why the final recommendation better suits the client’s circumstances.

- Stress-Test Summary – where planners record how AI-disruption scenarios were considered for income and serviceability.

- Client-communication record – confirming that AI’s role in the process was explained in plain language and that the client had an opportunity to ask questions.

When consistently applied, these artefacts give licensees, insurers and boards tangible proof that humans—not models—made the final call.
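One way to make the AI Interaction Log and VHC Review Note tamper-evident is to chain each entry to the previous one with a cryptographic hash, so that editing any earlier record breaks verification. The sketch below uses only the Python standard library. The `VHCLog` class, its record fields and the SHA-256 hash-chaining scheme are illustrative assumptions; they are not the method described in the author's patent applications, nor a format required by any regulator or insurer.

```python
import copy
import hashlib
import json
from datetime import datetime, timezone


class VHCLog:
    """Hypothetical append-only, hash-chained log of AI interactions and human reviews."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # SHA-256 over a canonical JSON encoding of every field except the hash itself.
        body = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, kind: str, payload: dict) -> dict:
        entry = {
            "kind": kind,                       # e.g. "ai_interaction" or "vhc_review"
            "payload": copy.deepcopy(payload),  # snapshot so later edits to the caller's dict don't leak in
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """True only if every entry is unmodified and correctly linked to its predecessor."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = VHCLog()
log.append("ai_interaction", {
    "client_id": "C-1042",  # hypothetical identifiers throughout
    "model": "soa-drafting-llm v2.3",
    "prompt": "Draft a retirement projection summary",
    "output_ref": "draft-soa-017",
})
log.append("vhc_review", {
    "client_id": "C-1042",
    "reviewer": "Adviser A",
    "ai_suggested": "Switch to the growth option",
    "decision": "adjusted",
    "reason": "Client retires in four years; balanced option retained to limit sequencing risk",
})
print(log.verify())  # True
log.entries[1]["payload"]["decision"] = "accepted"  # simulate an after-the-fact edit
print(log.verify())  # False
```

A hash chain only makes edits detectable. Anyone able to rewrite the whole log could recompute every hash, so in practice the latest hash would typically be anchored somewhere the firm cannot silently change, such as a write-once store or a periodic report to a third party.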

6. AI Management Systems (AIMS) and ISO/IEC 42001

A. What is an AIMS?

Definition – AI Management Systems (AIMS)

An AI Management System (AIMS) is a structured set of policies, processes and controls—aligned with ISO/IEC 42001—for governing the lifecycle of AI systems, including risk assessment, monitoring, incident management and continuous improvement.[17–19]

ISO/IEC 42001 is the first international standard specifically for AI management systems. It extends familiar management-system logic (ISO 9001, ISO/IEC 27001) to AI, making governance auditable and certifiable.[17]

B. Core components for planners, licensees and lenders

For financial planning and mortgage businesses, an AIMS implementation typically includes:

- Governance and leadership – board-approved AI risk appetite, clear roles and escalation paths.[19],[30]

- AI inventory – a register of all models and tools used in advice and lending, including purpose, owners, data feeds and risk ratings.[18]

- Model lifecycle management – design reviews, validation, performance monitoring, and retirement criteria, consistent with OSFI E-23 and the NIST AI Risk Management Framework.[9],[23]

- Data governance and privacy – ensuring lawful basis for data use, quality checks, and protection of client information.[25],[31]

- Training and competence – evidence that staff understand AI limitations, bias risks and VHC obligations.[30]

- Incident management – documented process for handling AI-related errors, near misses, or complaints, and feeding lessons back into model design.
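The inventory and lifecycle components can be illustrated with a minimal AI register that flags entries needing attention: high-risk tools without a named owner, and tools whose scheduled validation date has passed. The field names, the three-level risk scale, the `register_findings` function and the example tools are all hypothetical; ISO/IEC 42001 does not prescribe this schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AITool:
    """One row of a hypothetical AI inventory (register)."""
    name: str
    purpose: str
    owner: Optional[str]   # accountable person; None if nobody has been assigned
    data_feeds: list[str]
    risk: Risk
    next_validation: date  # next scheduled validation or review date
    status: str = "active" # "active" or "retired"


def register_findings(register: list[AITool], today: date) -> list[str]:
    """List governance gaps: unowned high-risk tools and overdue validations."""
    findings = []
    for tool in register:
        if tool.status == "retired":
            continue  # retired tools stay on the register for audit but are not re-checked
        if tool.risk is Risk.HIGH and not tool.owner:
            findings.append(f"{tool.name}: high-risk tool has no accountable owner")
        if tool.next_validation < today:
            findings.append(f"{tool.name}: validation overdue since {tool.next_validation.isoformat()}")
    return findings


register = [
    AITool("SoA drafting LLM", "Draft Statements of Advice", "Head of Advice",
           ["client fact-find"], Risk.HIGH, date(2026, 3, 31)),
    AITool("Risk-profile scorer", "Score risk questionnaires", None,
           ["questionnaire responses"], Risk.HIGH, date(2025, 6, 30)),
    AITool("Meeting transcriber", "Transcribe client meetings", "Operations Manager",
           ["meeting audio"], Risk.MEDIUM, date(2026, 1, 31)),
    AITool("Legacy robo engine", "Model portfolios", None,
           ["portfolio data"], Risk.HIGH, date(2024, 1, 31), status="retired"),
]
for finding in register_findings(register, today=date(2025, 12, 1)):
    print(finding)
```

Keeping retired tools on the register, rather than deleting them, preserves the history needed to reconstruct how an older advice file was produced.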

C. Embedding VHC inside AIMS

AIMS provides the structural scaffolding, while VHC defines the non-delegable human touchpoints:

- For each AI tool in the inventory, the AIMS should specify:

  - Whether human review is required,

  - At which stage (data input, model output, final recommendation), and

  - What documentation must be captured to prove that the review occurred.

- Workflow systems should enforce VHC steps—for example, preventing an SoA from being finalised or a loan from being approved until a VHC field is completed.
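The workflow enforcement described above can be sketched as a gate that refuses to finalise a file until every AI tool used on it has a completed VHC review at the stage the inventory specifies. Everything here (the `REVIEW_POLICY` mapping, the stage names, the `ReviewNote` fields and the `finalise_file` function) is a hypothetical illustration, not a feature of any particular advice or lending platform.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy copied from the AI inventory: the stage at which a human
# review is mandatory for each tool (None means no mandatory review).
REVIEW_POLICY: dict[str, Optional[str]] = {
    "soa-drafting-llm": "final_recommendation",
    "cashflow-engine": "model_output",
    "spell-checker": None,
}
DECISIONS = {"accepted", "adjusted", "rejected"}


@dataclass
class ReviewNote:
    tool: str
    stage: str
    reviewer: str
    ai_suggested: str
    decision: str  # one of DECISIONS
    reason: str

    def is_complete(self) -> bool:
        fields = (self.reviewer, self.ai_suggested, self.reason)
        return self.decision in DECISIONS and all(f.strip() for f in fields)


class VHCIncomplete(Exception):
    """Raised when a file cannot be finalised because VHC evidence is missing."""


def finalise_file(tools_used: list[str], notes: list[ReviewNote]) -> str:
    problems = []
    for tool in tools_used:
        if tool not in REVIEW_POLICY:
            # Unregistered tools are blocked outright rather than silently allowed.
            problems.append(f"{tool}: not in the AI inventory")
            continue
        stage = REVIEW_POLICY[tool]
        if stage is None:
            continue
        if not any(n.tool == tool and n.stage == stage and n.is_complete() for n in notes):
            problems.append(f"{tool}: no completed VHC review at stage '{stage}'")
    if problems:
        raise VHCIncomplete("; ".join(problems))
    return "finalised"


note = ReviewNote("soa-drafting-llm", "final_recommendation", "Adviser A",
                  "Switch to the growth option", "adjusted",
                  "Balanced option retained: client retires in four years")
try:
    finalise_file(["soa-drafting-llm", "cashflow-engine"], [note])
except VHCIncomplete as err:
    print("Blocked:", err)
```

Blocking tools that are missing from the inventory is a deliberate design choice: it stops unregistered tools from becoming a source of “silent AI” exposure inside individual files.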

Certification or “certification-ready” alignment with ISO/IEC 42001 can become a market differentiator, signalling to clients, regulators and insurers that AI is being used responsibly.[17–19]

7. Implications for Directors and Boards of Licensees

Stanford HAI – “Understanding Liability Risk from Using Healthcare AI Tools”

Stimson Center / Washington Foreign Law Society – “AI, Liability & Risk in Generative AI”

Although these videos focus on healthcare and general AI liability, the governance lessons translate directly to financial planning and credit.

A. Why AI in advice is now a board-level issue

Guidance aimed at directors (for example, from PwC Australia and the Australian Institute of Company Directors) now treats AI as a discrete strategic and risk-governance topic.[30],[31] Directors of licensees, banks and superannuation trustees are expected to:

- Understand how AI affects the firm’s business model and risk profile.

- Oversee implementation of appropriate governance frameworks (such as AIMS).

- Ensure culture and incentives do not encourage uncritical reliance on AI outputs.

In many jurisdictions, directors’ duties of care and diligence are likely to be interpreted in light of these expectations.[30–32]

B. Key questions boards should ask management

- Inventory and usage: “Do we have an up-to-date inventory of all AI and algorithmic tools that influence advice or lending decisions?”

- VHC controls: “Where is human sign-off mandatory, and how is it evidenced at file level?”

- Model-risk oversight: “Who is accountable for model validation, monitoring and retirement?”

- PI and D&O coverage: “How do our insurance policies treat AI-related losses, and what additional information do underwriters need from us?”

- Scenario planning: “How are AI-driven labour-market risks being incorporated into our stress tests for retirement adequacy and mortgage portfolios?”

C. How VHC and AIMS support director defences

A board that can point to a well-documented AIMS, VHC-enforced workflows, and regular reporting on AI usage and incidents is better placed to demonstrate that it has taken reasonable steps to manage AI risk. This is directly relevant in any regulatory or civil liability context where director conduct is questioned.[31],[32]

8. Practical Roadmap for Firms

- Discovery and mapping – complete an AI inventory; identify where tools influence client outcomes.

- Design VHC checkpoints – decide which decisions require mandatory human sign-off and what evidence is captured.

- Build an AIMS aligned with ISO/IEC 42001 – start with governance, risk register, and model-lifecycle controls; scale up toward certification.[17–19]

- Engage PI and D&O insurers – present your VHC/AIMS framework to shift from “silent AI” exposure to affirmatively underwritten cover.[10]

- Monitor and learn – track AI usage, overrides, incidents and client feedback; update controls as models and regulations evolve.
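For the monitoring step, the VHC decisions already captured on file can be aggregated into simple indicators for management and board reporting, such as how often advisers adjust or reject each tool's output. A very low override rate may signal the “auto-pilot” reliance noted in Section 1, while a very high rate may point to a model problem. The sketch below assumes hypothetical review records with `tool` and `decision` fields; the 5% and 50% thresholds are illustrative assumptions, not industry benchmarks.

```python
from collections import Counter


def override_rates(reviews: list[dict]) -> dict[str, float]:
    """Per tool, the share of VHC reviews in which the human adjusted or rejected the AI output."""
    totals, overrides = Counter(), Counter()
    for r in reviews:
        totals[r["tool"]] += 1
        if r["decision"] in ("adjusted", "rejected"):
            overrides[r["tool"]] += 1
    return {tool: overrides[tool] / n for tool, n in totals.items()}


def board_flags(rates: dict[str, float], low: float = 0.05, high: float = 0.50) -> list[str]:
    """Flag tools whose override rate is unusually low or unusually high (illustrative thresholds)."""
    flags = []
    for tool, rate in sorted(rates.items()):
        if rate < low:
            flags.append(f"{tool}: override rate {rate:.0%}; check for uncritical reliance")
        elif rate > high:
            flags.append(f"{tool}: override rate {rate:.0%}; check model performance")
    return flags


reviews = (
    [{"tool": "soa-drafting-llm", "decision": "accepted"}] * 98
    + [{"tool": "soa-drafting-llm", "decision": "adjusted"}] * 2
    + [{"tool": "cashflow-engine", "decision": "accepted"}] * 4
    + [{"tool": "cashflow-engine", "decision": "rejected"}] * 6
)
for line in board_flags(override_rates(reviews)):
    print(line)
```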

Firms that move early will be better positioned to win client trust, negotiate favourable insurance terms, and satisfy regulators as expectations tighten.

Frequently Asked Questions (FAQs)

Q1. Is AI-generated financial advice legally different from traditional advice?

No. In every major jurisdiction covered in this article, existing rules on best interests, suitability, disclosure and record-keeping apply regardless of whether the advice was drafted by a human, a robo-adviser, or an LLM. The firm and the licensed adviser remain responsible for the outcome and the reasoning behind it.

Q2. What exactly is Verifiable Human Contribution (VHC) in this context?

Verifiable Human Contribution (VHC) means audit-ready proof that a qualified human has reviewed, challenged, and documented AI-assisted outputs before they become advice or lending decisions. In practical terms, VHC shows who checked the AI’s work, what they changed or overrode, and why – all time-stamped in the client file.

Q3. What is an AI Management System (AIMS) for an advice licensee or lender?

An AI Management System (AIMS) is a structured governance framework – aligned with standards such as ISO/IEC 42001 – for how an organisation designs, procures, validates, monitors and retires AI systems. In an advice context, it links policy, risk appetite, model governance, training and incident response to day-to-day tools used by planners, brokers and credit teams.

Q4. Does using AI reduce the professional’s duty of care?

No. Regulators and professional bodies are very clear that AI is a tool, not a delegation of responsibility. A planner who blindly accepts AI-generated recommendations without applying their own judgement may increase their liability, because they have failed to perform the human role that clients and regulators expect.

Q5. How does VHC help with professional indemnity (PI) insurance?

PI underwriters want to know where AI is used, how it is controlled, and how easily a firm can reconstruct decisions. A robust VHC trail – combined with an AIMS framework – makes AI use visible and explainable, which supports underwriting, may reduce the risk of coverage disputes, and strengthens the firm’s position if a claim arises.

Q6. What is “silent AI” and why is it risky?

“Silent AI” describes AI use that is not explicitly recognised in policies, contracts or controls – like “silent cyber” in earlier insurance debates. If a firm is using AI heavily but can’t show insurers or regulators where and how, it risks mis-priced cover, unexpected exclusions, or allegations of non-disclosure.

Q7. How should advisers factor AI-driven job disruption into retirement and mortgage advice?

Advisers should treat AI-related job risk as another scenario to stress-test: What happens if this client’s income falls or becomes volatile earlier than planned? That may mean recommending larger buffers, faster debt reduction, reskilling plans, or more conservative retirement assumptions for clients in highly automatable roles.

Q8. Can a firm rely purely on robo-advice or LLM tools without human review?

For high-stakes decisions affecting retirement outcomes or long-dated mortgages, the safer – and increasingly expected – position is “AI-assisted, human-led”. Robo-advice may handle simpler cases, but licensees should define thresholds where human review and VHC sign-off are mandatory.

Q9. Do VHC and AIMS replace existing compliance frameworks?

No. They sit on top of and alongside existing compliance, risk, and quality-assurance frameworks. Think of VHC as a new kind of evidence inside the file, and AIMS as the AI-specific overlay that plugs into your current risk, audit and governance structures.

Q10. Is this article legal, financial, or insurance advice?

No. The article – and these FAQs – are educational and informational only. Readers should obtain tailored legal, financial, actuarial or insurance advice in their own jurisdiction before changing policies, advice processes or product designs.

Conclusion

AI is transforming how financial planners and lenders deliver advice, but it does not transform who is responsible. In every jurisdiction surveyed, the same message echoes: the duty of care remains human, and the ability to explain and evidence decisions is more important than ever.[2–7]

By operationalising Verifiable Human Contribution and implementing an AI Management System aligned with ISO/IEC 42001, advice businesses can:

- Harness AI’s productivity and personalisation benefits.

- Provide regulators with clear, auditable trails of human judgment.

- Present PI insurers with a governance story that justifies affirmative coverage.

- Better prepare clients’ retirement plans and housing loans for AI-driven labour-market shocks.

In short, VHC and AIMS turn AI from a black-box risk into a strategic asset that is governable, insurable and resilient.

References (indicative, non-exhaustive)

[1] Financial Planning Standards Board (FPSB), Global Research on AI’s Impact on Financial Planning, 2025.

[2] Monetary Authority of Singapore (MAS), Principles to Promote Fairness, Ethics, Accountability and Transparency (FEAT), 2018–2024.

[3] FPSB India, Journal of Financial Planning in India, June 2025 (AI special issue).

[4] Australian Securities and Investments Commission (ASIC), RG 255: Providing digital financial product advice to retail clients.

[5] ASIC, regulatory guidance on financial advice obligations (including record-keeping and best-interest duty).

[6] Financial Markets Authority (FMA, NZ), guidance on personalised digital advice and licensing.

[7] UK Financial Conduct Authority (FCA), guidance on automated/streamlined advice and Consumer Duty.

[8] Hogan Lovells, Insuring AI Risks: Is Your Business (Already) Covered?

[9] OSFI (Canada), Guideline E-23 – Model Risk Management and related backgrounders.

[10] Swiss Re, Munich Re and related industry commentary on “silent AI” and aiSure™.

[11] International Monetary Fund (IMF), Artificial Intelligence and its Impact on Financial Markets and Financial Stability, and related pieces on AI and inequality.

[12] Bank for International Settlements (BIS), Managing Explanations: How Regulators Can Address AI Explainability, and related AI and labour-market analyses.

[13] European Central Bank (ECB), speeches and blogs on AI adoption and employment prospects.

[14] OECD, The Impact of Artificial Intelligence on the Labour Market.

[15] IMF, Global Financial Stability Report chapters on housing, household leverage and stress testing.

[16] World Bank, Artificial Intelligence in the Public Sector: Maximizing Opportunities, Managing Risks, and related AI and labour reports.

[17] ISO/IEC 42001 – AI Management System standard (summaries by BSI, LRQA, EY, Deloitte, A-LIGN).

[18] Nemko Digital, AI Management Systems: ISO/IEC 42001 Implementation & Certification.

[19] Multiple ISO/IEC 42001 checklists and guidance (Rhymetec, Vanta, LRQA, Sprinto).

[20] FPSB South Africa & India, Global research reveals how AI is revolutionizing financial planning.

[21] Hymans Robertson LLP, written evidence to the UK Parliament inquiry on AI in finance.

[22] IOSCO, Artificial Intelligence in Capital Markets: Use Cases, Risks, and Challenges.

[23] NIST, AI Risk Management Framework.

[24] ESMA, statements and warnings on the use of AI in investment services.

[25] MAS, consultation paper on AI risk-management guidelines.

[26] PwC, Responsible AI in Finance: 3 Key Actions to Take Now.

[27] Linklaters, AI in Financial Services 3.0 – Global overview of the EU AI Act and finance.

[28] US SEC, guidance on robo-advisers and predictive analytics in brokerage and advisory services.

[29] Canadian Securities Administrators, staff notices on online advice and digital engagement practices.

[30] PwC Australia, Artificial Intelligence: What Directors Need to Know.

[31] Australian Institute of Company Directors (AICD), A Director’s Guide to AI Governance.

[32] Baker McKenzie and AJG Jersey, guidance on directors’ duties and AI governance.

Disclosures (including patent disclosure)

This article reflects AI, regulatory, insurance and professional-services practices as at 1 December 2025 (AEST). Readers should confirm whether subsequent guidance has been issued by their regulators, professional bodies, insurers, or standard-setting organisations.

Content on TechLifeFuture.com is for educational and informational purposes only and does not constitute legal, accounting, financial, credit or insurance advice. It is not a substitute for tailored professional advice in your jurisdiction.

Some links on this page may be affiliate or referral links (including, where relevant, Educative.io, Mindhive.ai, or other partners). If you purchase through these links, TechLifeFuture.com may earn a small commission at no extra cost to you.

Conflict-of-interest and IP disclosure: The author, John Richard Cosstick, is the named inventor on the following pending patent and PCT applications related to concepts discussed in this article:

- AIMS: Australian patent application 2025271387 – “System And Method For Cryptographic Telemetry Normalization And Immutable Event Logging In Probabilistic AI Architectures.”

- VHC: Australian patent application 2025220863 – “Verifiable Human Contribution,” international application number PCT/IB2025/058808.

These applications formed the conceptual framing of Verifiable Human Contribution (VHC) and AI Management Systems (AIMS) in this article.