Your business’s General Liability insurance policy may not cover AI-related incidents starting in 2026. As insurers move from “silent” AI coverage—where AI risks were neither explicitly included nor excluded—to explicit exclusions or conditional “affirmative” coverage, small and medium enterprises (SMEs) face a critical inflection point.

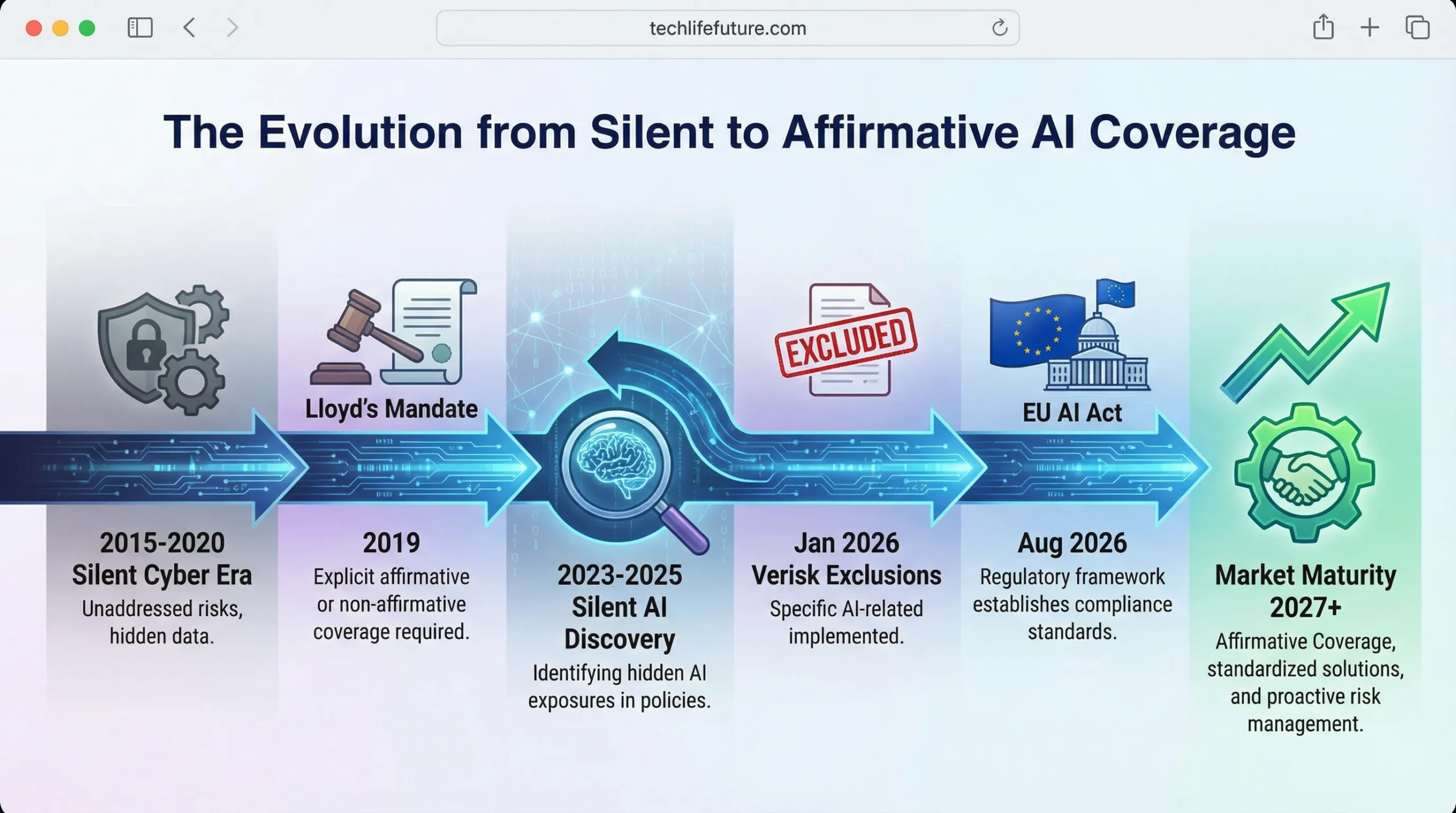

The insurance industry learned costly lessons from “silent cyber” risks between 2015 and 2023, when policies unintentionally covered cyber losses they weren’t designed to handle. Now, starting January 2026, major insurers are applying the same corrective measures to artificial intelligence through new exclusionary forms and conditional coverage requirements.

This article explains what’s changing, which businesses are affected, where hidden AI risks lurk in typical SME operations, and concrete steps you can take before your next insurance renewal to avoid potentially catastrophic coverage gaps.

The End of “Silent Coverage” for AI

The Silent Cyber Parallel

Between 2015 and 2020, insurance companies discovered they were paying cyber-related claims under traditional property and liability policies that never explicitly mentioned cyber risks. Lloyd’s of London estimated the industry faced hundreds of billions in unintended cyber exposure across non-cyber policies. In 2019, Lloyd’s mandated that all policies provide clarity regarding cyber coverage by either excluding cyber risks or providing explicit affirmative coverage.

“With silent cyber, the industry has already learned lessons. There have been instances where some risks have been covered in non-cyber line policies, even though that was not the intention. With silent AI, it is time to prevent repetition of the same mistakes.” - Swiss Re Institute, September 2024

The insurance market is now following the same playbook with artificial intelligence. This transition from “silent” (unintended) coverage to explicit exclusions represents a fundamental shift in how AI risks are underwritten and priced.

The January 2026 Inflection Point

Verisk, one of the largest creators of standardized policy forms in the U.S. insurance market, developed new general liability endorsements allowing carriers to exclude generative AI exposures. These forms, effective January 2026, define generative AI as “a machine-based learning system or model that is trained on data with the ability to create content or responses, including but not limited to text, images, audio, video or code.”

Because insurance carriers nationwide often adopt Verisk’s templates, this change could rapidly shape the entire market. Joseph Lam, vice president of general liability at Verisk’s Core Lines Services, confirmed that the move came after receiving “strong interest from many of our customers to create underwriting tools to address this emerging risk.”

Major insurers have already begun filing exclusions with state regulators. WR Berkley introduced what it calls an “absolute AI exclusion” intended for Directors & Officers (D&O), Errors & Omissions (E&O), and Fiduciary Liability policies. This exclusion eliminates coverage for any claim “based upon, arising out of, or attributable to” the actual or alleged use, deployment, or development of artificial intelligence—regardless of whether the AI is company-owned, third-party licensed, or embedded in software tools.

Market Structure Note: The transition timeline varies between admitted and non-admitted insurance markets. Admitted carriers (state-regulated standard market) will adopt Verisk forms as they receive state regulatory approval, creating some geographic variation. Non-admitted carriers (surplus lines market) can move faster, implementing bespoke exclusions immediately without regulatory approval. SMEs in the surplus lines market should expect exclusions to appear earlier and potentially with broader language than standard market policies.

What This Means for SMEs

For small and medium-sized enterprises—typically defined as businesses with under 200 employees—these changes create urgent challenges. Unlike large corporations with dedicated risk management departments, most SMEs lack the resources to conduct comprehensive policy reviews or build sophisticated AI governance frameworks.

The critical assumption gap is this: many SME owners believe their existing General Liability (GL) or Business Owners Policy (BOP) covers AI-related mishaps because nothing in their current policy explicitly excludes it. That assumption may hold true today, but it likely won’t survive your 2026 policy renewal.

“Insurers may resist paying AI-related claims until a major systemic event forces the courts to determine whether such technology-driven incidents were ever meant to be covered in the first place.”

— Aaron Le Marquer, Insurance Coverage Attorney

Where Silent AI Risks Hide in Your Business

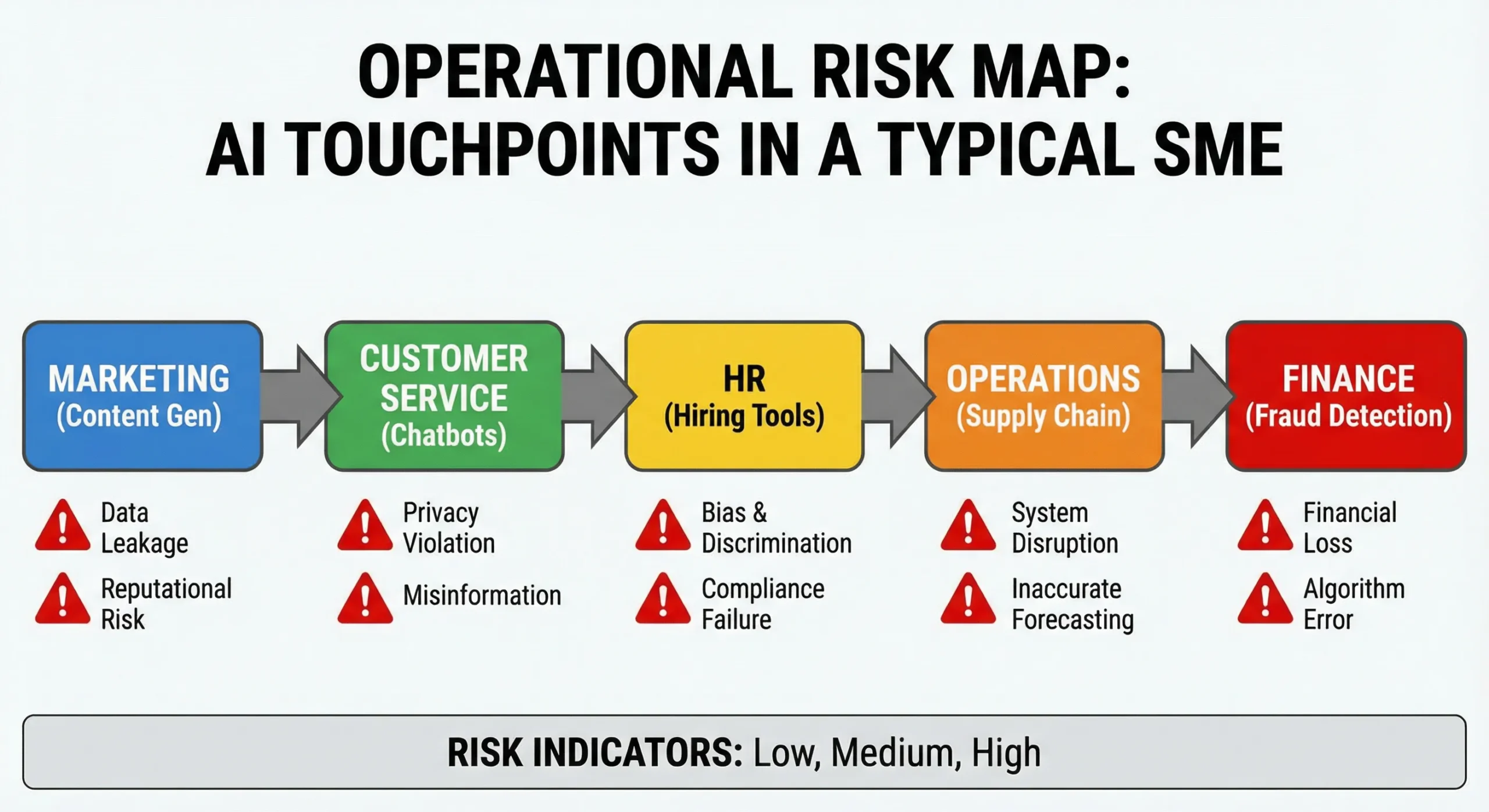

AI usage in SMEs often flies under the radar. You might not think of yourself as an “AI company,” but if you use modern software tools, you’re almost certainly using AI systems that create potential liability exposures.

Table 1: Operational Risk Inventory – Where AI Creates Liability Exposure

| Business Function | Common AI Applications | Primary Liability Risks | Likely Policy Coverage |
| --- | --- | --- | --- |
| Marketing & Content | Content generation, social media automation, and email campaigns | Defamation, copyright infringement, false advertising, and privacy violations | GL Personal & Advertising Injury (currently covered, likely excluded post-2026) |
| Customer Service | Chatbots, virtual assistants, automated support | Binding contract formation, misrepresentation, and warranty claims | GL Bodily Injury/Property Damage (gap if cyber excluded and GL excludes AI) |
| HR & Employment | Resume screening, performance evaluation, and scheduling | Employment discrimination, wrongful termination, and EEOC violations | EPLI (may exclude algorithmic discrimination) |
| Product Development | Design assistance, quality control, supply chain optimization | Product liability, safety failures, design defects | Product Liability (may exclude AI-assisted design) |
| Financial Operations | Fraud detection, pricing optimization, and billing | Pricing discrimination, billing errors, and regulatory violations | E&O (may exclude automated decision-making) |
| Embedded/Unknown | SaaS features, cloud services, third-party tools | Any of the above, vendor liability allocation unclear | Coverage gap – neither GL nor the vendor covers |

Marketing & Content Creation

Generative AI tools have become ubiquitous in marketing departments. According to a 2025 Bain & Company report, 95% of U.S. companies currently use generative AI, with many describing it as a “business staple.” [6] But this widespread adoption brings legal risks.

AI-generated social media posts can contain defamatory content or false claims. Automated email campaigns may violate privacy regulations if AI processes personal data without proper consent. Website content created by AI might infringe copyrights or reproduce protected material without authorization.

Consider a lawsuit reported in 2024, in which parents alleged that information furnished by a generative AI chatbot contributed to their child’s death. [7] While this represents an extreme outcome, it illustrates that AI-generated content can have real-world consequences, and that someone must be liable when harm occurs.

Standard General Liability policies typically cover “Personal & Advertising Injury,” including claims arising from defamation, copyright infringement, or invasion of privacy. But with new AI exclusions, this coverage may disappear if the problematic content was AI-generated.

Customer Service & Communications

The 2024 Air Canada chatbot case marked a watershed moment in AI liability. The airline’s chatbot gave a customer false information about bereavement fare refunds. When the customer relied on this information and later sought the promised refund, Air Canada argued it shouldn’t be held liable for what its chatbot said, claiming the bot was a “separate legal entity” responsible for its own actions.

The Civil Resolution Tribunal in British Columbia rejected this argument entirely. The tribunal ruled that Air Canada is responsible for all information on its website, including chatbot outputs.

“While a customer might know they’re talking to a bot, that doesn’t change the customer’s reasonable expectation that they’re getting accurate information from the company.” - American Bar Association analysis of the Air Canada case

This case established a critical principle: businesses own what their AI says. Your customer service chatbot isn’t a separate entity—it’s your business communicating with customers. If it makes unauthorized promises, provides incorrect information, or creates binding commitments, your business is on the hook.

The implications extend beyond customer service. AI-powered systems increasingly handle sales inquiries, process transactions, and manage customer accounts. Each interaction creates potential liability if the AI errs or misrepresents your company’s policies.

Product Development & Design

Manufacturers using AI in product design face product liability risks if AI-assisted designs contain flaws. Traditional product liability insurance may not cover defects attributable to AI decision-making, especially under new exclusions.

Supply chain optimization powered by AI can lead to procurement errors, quality control failures, or safety oversights. If AI recommends a cheaper component that proves defective, or optimizes production in ways that compromise safety standards, who bears responsibility?

The legal framework for AI-caused product defects remains unclear, but early litigation suggests courts will hold the deploying company responsible rather than the AI vendor. As Harvard Law School’s Forum on Corporate Governance concluded, “AI has no legal personhood; liability flows upward to humans or corporate entities.”

Employment & HR

AI-powered hiring tools have triggered significant litigation. In 2024, Workday faced ongoing claims over alleged hiring bias in its AI systems, with courts allowing class action claims to proceed. The litigation alleges that AI algorithms systematically discriminate against protected classes.

Employment-related AI claims can arise from resume screening bias, flawed performance evaluations, or wrongful termination decisions influenced by AI analytics. As artificial intelligence increasingly shapes employment decisions, regulatory scrutiny intensifies.

The Colorado Artificial Intelligence Act, taking effect June 30, 2026, specifically addresses AI in high-risk systems that make consequential decisions in employment, among other areas. The law requires developers and deployers to use reasonable care to avoid algorithmic discrimination. Illinois enacted similar protections requiring notification to employees when AI aids employment decisions, effective January 1, 2026.

The “Embedded AI” Problem

Perhaps the most challenging risk comes from AI you don’t even know you’re using. Modern Software-as-a-Service (SaaS) platforms increasingly incorporate AI features without explicit disclosure or activation. Your customer relationship management (CRM) system might use AI to prioritize leads. Your accounting software might use AI to flag unusual transactions. Your email platform might use AI to filter messages or suggest responses.

This “latent AI” creates coverage complications. When an AI-related claim arises, you might discover that your vendor’s terms of service disclaim liability for AI outputs, while your insurance policy now excludes AI-related claims. You fall into a coverage gap, holding the liability yourself.

Kennedys Law, in its May 2025 analysis of silent AI risks for insurers, identified this as “self-procured AI”: instances where companies aren’t aware that their employees are using AI through third-party tools. This raises additional issues around source reliability and data privacy, and such use may be even more difficult to detect and forecast.

Practical Detection Method: To identify latent AI in your software stack, request a “Feature Update Log” or “Release Notes Archive” from your major SaaS vendors for the past 24 months. Search these documents for terms like “AI,” “machine learning,” “intelligent,” “automated,” “smart,” and “predictive.” This will reveal when and how AI capabilities were added to tools you already use.
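The search described above can be automated once release notes are available as plain text. The following is a minimal sketch, not a vetted tool: the `sample_notes` entries are hypothetical, and the function names are illustrative. It assumes whole-word matching, so that “AI” is not triggered by words such as “email”.

```python
import re

# Search terms taken from the detection method described above.
AI_TERMS = ["AI", "machine learning", "intelligent", "automated", "smart", "predictive"]

def find_ai_terms(text):
    """Return the search terms that appear in the text as whole words."""
    hits = []
    for term in AI_TERMS:
        # "AI" is matched case-sensitively; longer terms ignore case.
        flags = 0 if term == "AI" else re.IGNORECASE
        if re.search(r"\b" + re.escape(term) + r"\b", text, flags):
            hits.append(term)
    return hits

def scan_release_notes(entries):
    """entries: list of (date, note_text). Keep only entries that mention a term."""
    flagged = []
    for date, note in entries:
        hits = find_ai_terms(note)
        if hits:
            flagged.append((date, hits, note))
    return flagged

# Hypothetical release notes, for illustration only.
sample_notes = [
    ("2024-03-12", "Fixed a bug in email export and improved maintainability."),
    ("2024-09-02", "New: smart lead scoring powered by machine learning."),
    ("2025-01-20", "Added AI-suggested replies to the shared inbox."),
]

for date, hits, note in scan_release_notes(sample_notes):
    print(date, hits)
```

A keyword hit is only a prompt for a follow-up question to the vendor; terms like “smart” and “automated” will also flag features that involve no AI at all.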

FEATURED VIDEO: Understanding AI Liability Risk

Stanford HAI — Understanding Liability Risk from Using Healthcare AI Tools

This Stanford Human-Centered AI Institute video provides expert analysis on liability risks emerging from AI deployment in professional settings. While focused on healthcare, the principles apply across all industries using AI for consequential decisions.

Understanding the Insurance Industry’s Response

Why Insurers Are Restricting Coverage

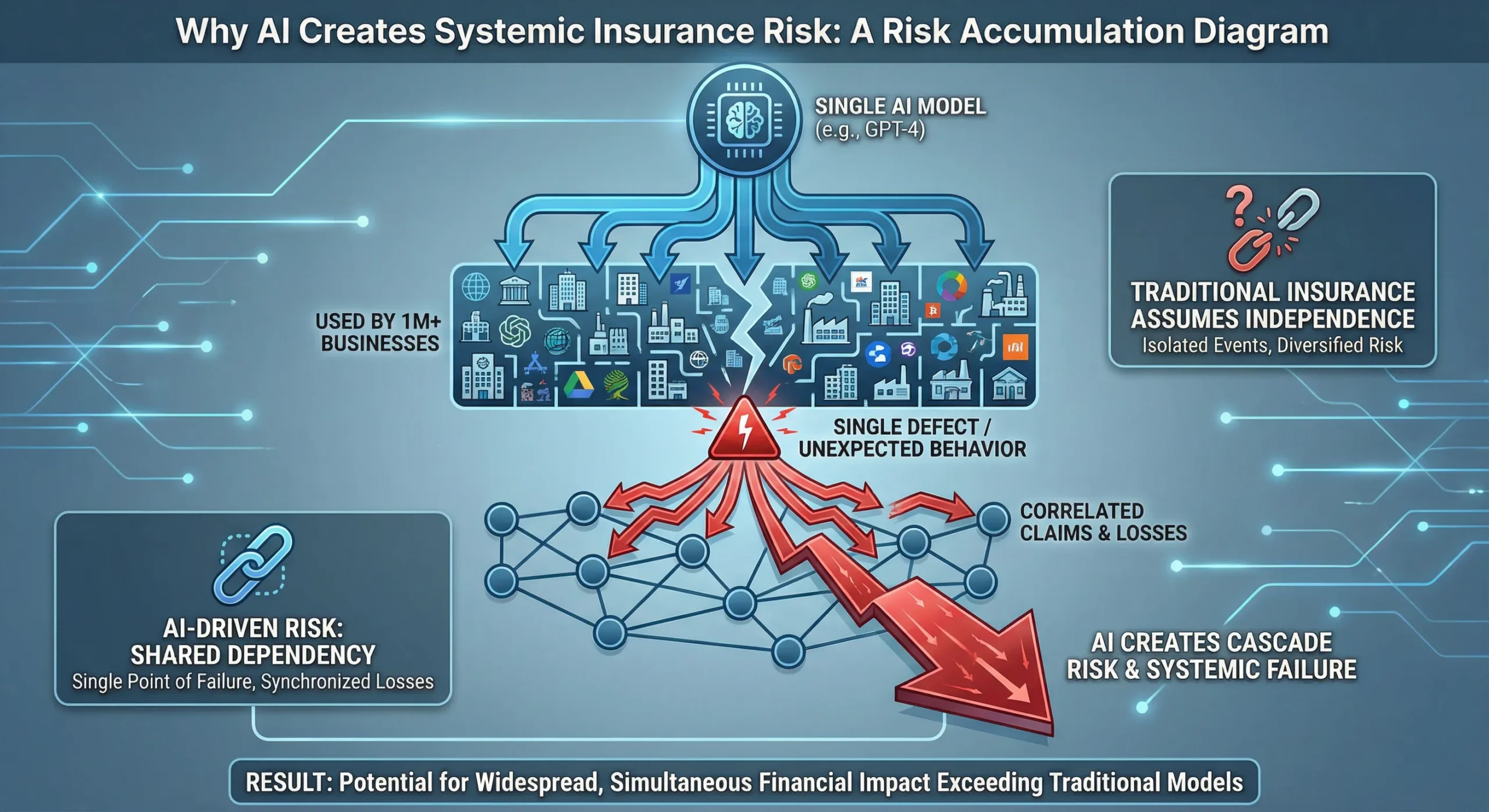

Insurance fundamentally relies on predictability. Actuaries use historical data to model risks and set premiums accordingly. Artificial intelligence upends this model in several ways.

First, AI outputs are unpredictable. Even AI experts often cannot explain exactly why a model produces a particular output—the “black box” problem. This unpredictability makes it extremely difficult for insurers to assess risk or price coverage appropriately.

Second, AI creates correlation risk. Traditional insurance assumes independent risks: one customer’s claim doesn’t make another’s more likely. But if multiple businesses use the same AI system, a single flaw could trigger thousands of simultaneous claims. Insurers regard this kind of systemic accumulation as unacceptable, having learned painful lessons from pandemic business interruption claims that nearly overwhelmed the industry.
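To see why correlation matters so much to an insurer, it helps to compare two stylized portfolios with the same expected number of claims. The sketch below is an illustration under simplifying assumptions, not an actuarial model: the portfolio size and probabilities are hypothetical, the independent case is a plain binomial model, and the shared-system case assumes one flaw makes every policyholder claim at once.

```python
import math

def independent_claims_stats(n_policyholders, p_claim):
    """Mean and standard deviation of the annual claim count when each
    policyholder's loss is independent (binomial model)."""
    mean = n_policyholders * p_claim
    std = math.sqrt(n_policyholders * p_claim * (1 - p_claim))
    return mean, std

def shared_system_claims_stats(n_policyholders, p_flaw):
    """Same statistics when every policyholder relies on one AI system:
    with probability p_flaw all of them claim together, otherwise none do."""
    mean = n_policyholders * p_flaw
    std = n_policyholders * math.sqrt(p_flaw * (1 - p_flaw))
    return mean, std

# Hypothetical portfolio: 10,000 policyholders, 1% annual probability.
n, p = 10_000, 0.01
ind_mean, ind_std = independent_claims_stats(n, p)
shared_mean, shared_std = shared_system_claims_stats(n, p)

print(f"independent:   mean={ind_mean:.0f} claims, std={ind_std:.1f}")
print(f"shared system: mean={shared_mean:.0f} claims, std={shared_std:.1f}")
```

Both portfolios expect the same average number of claims, but the standard deviation in the shared-system case is larger by a factor of the square root of the portfolio size. Premiums priced on the average alone would leave the insurer exposed to a year in which every policy pays out.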

Third, AI technology evolves faster than insurance product development cycles. By the time insurers design, price, and file new products with state regulators, the underlying technology has changed. This creates a perpetual gap between available coverage and actual risks.

Major insurers filing for AI exclusions explicitly cite these concerns. Their regulatory filings describe AI outputs as “too unpredictable, opaque, and difficult to price, making traditional underwriting nearly impossible.”

Types of Exclusions Being Filed

Insurers are taking varied approaches to managing AI exposure, from broad exclusions to targeted restrictions.

Absolute Exclusions represent the most sweeping approach. WR Berkley’s absolute AI exclusion, intended for D&O, E&O, and Fiduciary Liability policies, eliminates coverage for any claim “based upon, arising out of, or attributable to” actual or alleged use, deployment, or development of AI. The endorsement specifically lists excluded applications: AI-generated content, failure to detect AI-created materials, inadequate AI governance, chatbot communications, and even regulatory actions related to AI oversight.

Generative AI Exclusions take a narrower approach. Hamilton Insurance Group’s Generative Artificial Intelligence Exclusion removes coverage for claims involving “any system that produces content such as text, imagery, audio, or synthetic data in response to user prompts.” The endorsement specifically names platforms: ChatGPT, Bard, Midjourney, and DALL-E. This targeted approach theoretically leaves other AI types covered, though determining what counts as “generative AI” versus other AI remains contentious.

Verisk’s Optional Exclusion gives insurers flexibility. Starting January 1, 2026, carriers can choose whether to adopt Verisk’s generative AI exclusion. This creates market fragmentation—some insurers will exclude AI, others won’t, and policy language will vary significantly. For businesses shopping for coverage, this means carefully reading each policy’s specific terms rather than assuming standard coverage.

Table 2: Exclusion Types Comparison Matrix

| Exclusion Type | Scope | Example Carrier | Policy Lines Affected | Specific AI Covered | Coverage Flexibility |
| --- | --- | --- | --- | --- | --- |
| Absolute AI Exclusion | Any use, deployment, or development of AI | WR Berkley | D&O, E&O, Fiduciary | All AI systems, all applications | Zero – blanket exclusion |
| Generative AI Exclusion | Content-creating AI systems | Hamilton, Verisk | GL, BOP | ChatGPT, Bard, Midjourney, DALL-E, similar platforms | Narrow – other AI may be covered |
| Hybrid/Negotiated | Case-by-case based on governance | Specialty carriers | Varies | Defined per endorsement | High – depends on underwriting |
| Silent (Current) | Nothing explicitly excluded | Most current policies | GL, BOP, Umbrella | All AI (by default) | Disappearing in 2026 |

Which Policies Are Affected

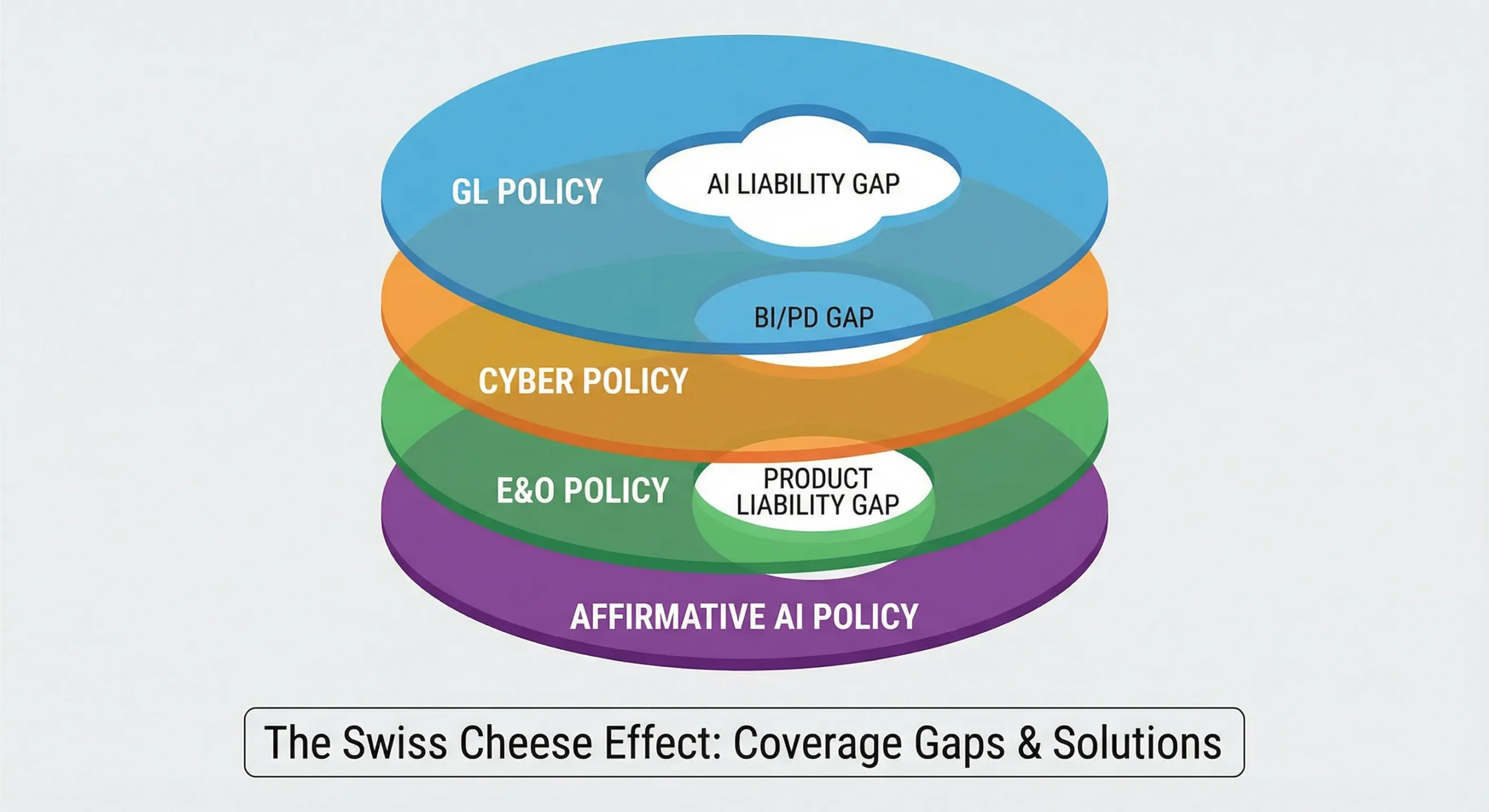

AI exclusions are appearing across multiple coverage lines, creating potentially overlapping gaps.

General Liability (GL) policies face the most significant changes. These policies traditionally cover third-party bodily injury, property damage, and personal/advertising injury claims. New exclusions could eliminate coverage for AI-caused injuries, AI-generated content claims, or privacy violations involving AI.

Business Owners Policies (BOP), which package general liability with property coverage for small businesses, will likely incorporate similar exclusions as carriers adopt Verisk’s forms.

Directors & Officers (D&O) insurance, protecting company leaders from personal liability, increasingly includes AI exclusions. This matters for SME owners who could face personal exposure from AI-related corporate decisions.

Errors & Omissions (E&O) policies, covering professional services, may exclude AI-assisted professional advice or AI-generated work product.

Cyber Insurance presents an interesting counterpoint. According to the September 2025 analysis in Above the Law, cyber insurers are largely holding firm on AI coverage. “Cyber insurers are not panicking over AI,” the article notes. “For now, cyber insurers are signalling that they anticipated this, and coverage remains in effect.” [18] However, cyber policies typically don’t cover bodily injury or property damage, creating gaps that GL policies traditionally filled.

The Affirmative Coverage Alternative

As silent coverage disappears, “affirmative” AI coverage emerges—but with significant strings attached.

What “Affirmative” Means

Affirmative coverage provides explicit, intentional protection for specified risks. Unlike silent coverage, where AI claims might have been covered by default because nothing excluded them, affirmative coverage states clearly what is and isn’t covered.

“Insurers are now moving to clarify coverage for AI, either by introducing endorsements that affirm coverage for certain AI risks or by adding exclusions to standard policies to avoid unanticipated exposure.” - Willis Towers Watson (WTW), “Insuring the AI Age,” December 2025

Affirmative coverage typically comes with higher premiums, a narrower scope, and extensive governance requirements. Insurers want to understand exactly how you’re using AI, what controls you have in place, and how you’re managing risks before agreeing to cover AI-related claims.

Table 3: Silent Coverage vs. Affirmative Coverage Comparison

| Dimension | Silent AI Coverage (Pre-2026) | Affirmative AI Coverage (2026+) |
| --- | --- | --- |
| Policy Language | AI not mentioned; covered by default if not excluded | AI explicitly addressed with defined terms |
| Cost/Premium | No additional charge (bundled in base GL rate) | 15-50% premium increase over base rate |
| Underwriting Process | Standard application, no AI questions | Detailed AI questionnaire, governance review |
| Coverage Scope | Broad (anything not excluded) | Narrow (only specified AI uses covered) |
| Governance Requirements | None | Mandatory: human oversight, bias testing, documentation |
| Claims Process | Standard GL claims handling | Specialized adjusters, governance audit |
| Availability | Universal (all carriers offered) | Limited (specialty carriers only) |
| Renewal Stability | Stable, predictable | Conditional on maintaining governance |
| Defense Costs | Included in limits or unlimited | May be capped or shared |
| Retroactive Coverage | Yes (occurrence-based) | Limited (claims-made common) |

What Insurers Require for Affirmative Coverage

The National Association of Insurance Commissioners (NAIC) adopted its Model Bulletin on the Use of Artificial Intelligence Systems by Insurers in December 2023. While this bulletin addresses how insurers should govern their own AI use, it provides a framework that increasingly shapes what insurers expect from policyholders seeking affirmative AI coverage. [20]

Governance Documentation forms the foundation. Insurers want to see documented AI governance covering development, acquisition, deployment, and monitoring of AI tools. This includes board-level AI oversight policies, risk assessment frameworks, incident response plans, and third-party vendor management protocols.

“The Board of Directors is ultimately responsible for oversight of the AIS Program and for setting the Insurer’s strategy for AI Systems with senior management.” - NAIC Model Bulletin, December 2023

While SMEs may not have formal boards, insurers expect comparable oversight—typically an executive-level person designated as responsible for AI governance.

Human Oversight Requirements represent perhaps the most universal expectation. Insurers want documented evidence that humans review AI outputs before they impact customers or create obligations. This includes documented review pathways showing who reviews what, decision authority assignments clarifying who can approve AI recommendations, override mechanisms allowing humans to reject AI outputs, and quality control checkpoints at critical decision points.

The New York Department of Financial Services emphasized in its August 2025 guidance that “insurers must ensure that AI-driven decisions are transparent, explainable, and subject to human oversight to meet due process standards.” Courts increasingly apply this same standard to businesses deploying AI.

Recent legislation underscores this trend. Florida’s legislature passed a bill in December 2025 requiring that insurance claim denial decisions “must be made by a qualified human professional,” not AI alone. [23] While this specific law addresses insurers’ internal processes, it signals broader expectations that AI cannot make final decisions without human review.

Testing & Validation requirements are becoming standard. Insurers expect bias audits conducted at least quarterly, performance monitoring that tracks accuracy and error rates, drift detection that identifies when AI behavior changes unexpectedly, and error-rate thresholds that trigger human review.
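For a small team, error-rate tracking and drift detection do not need specialized tooling to get started. The sketch below shows one simple way to do it over a rolling window of recent outputs. It is an assumption-laden illustration: the class name, the window size, and every threshold value are hypothetical, and real thresholds would need to be agreed with your insurer or set from your own baseline data.

```python
from collections import deque

class ErrorRateMonitor:
    """Tracks the error rate over the most recent AI outputs."""

    def __init__(self, window_size=100, review_threshold=0.05,
                 baseline_rate=0.02, drift_tolerance=0.02):
        self.outcomes = deque(maxlen=window_size)  # oldest results drop off
        self.review_threshold = review_threshold   # above this, escalate to a human
        self.baseline_rate = baseline_rate         # error rate measured at rollout
        self.drift_tolerance = drift_tolerance     # allowed distance from baseline

    def record(self, was_error):
        self.outcomes.append(bool(was_error))

    def error_rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_human_review(self):
        return self.error_rate() > self.review_threshold

    def has_drifted(self):
        return abs(self.error_rate() - self.baseline_rate) > self.drift_tolerance

# Usage: ten clean outputs, then two errors spotted during spot checks.
monitor = ErrorRateMonitor(window_size=10)
for _ in range(10):
    monitor.record(False)
print(monitor.needs_human_review(), monitor.has_drifted())

monitor.record(True)
monitor.record(True)
print(monitor.error_rate(), monitor.needs_human_review(), monitor.has_drifted())
```

The monitor only knows about errors someone has labeled, so it depends on a routine of sampling and checking AI outputs by hand. Keeping the recorded results also provides the documentation trail that underwriters ask for.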

Cherry Bekaert’s November 2025 analysis of AI governance in insurance notes that “regular testing for model drift and bias is essential. Many insurers now conduct equity audits and integrate human oversight into their AI decision-making processes.”

Transparency & Explainability capabilities are increasingly non-negotiable. Insurers want audit trail requirements showing how AI reached specific decisions, decision logging capturing inputs, outputs, and decision pathways, consumer notification protocols explaining when and how AI was used, and regulatory reporting capability allowing quick response to regulatory inquiries.
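Decision logging can start as something very modest. The following sketch records each AI-assisted decision as one JSON line with its inputs, output, and reviewer. The field names, system names, and sample values are hypothetical, and an in-memory file stands in for whatever append-only storage you would actually use.

```python
import io
import json
from datetime import datetime, timezone

def make_decision_record(system_name, inputs, output, reviewed_by=None):
    """Build one audit-trail entry for a single AI-assisted decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system_name,
        "inputs": inputs,
        "output": output,
        "reviewed_by": reviewed_by,  # None means no human has signed off
        "human_reviewed": reviewed_by is not None,
    }

def append_record(log_file, record):
    """Append the record as one JSON line; earlier lines are never rewritten."""
    log_file.write(json.dumps(record, sort_keys=True) + "\n")

def read_records(log_file):
    """Load every logged decision, for example to answer a regulator's inquiry."""
    log_file.seek(0)
    return [json.loads(line) for line in log_file if line.strip()]

# Usage with an in-memory file; system names and values are illustrative.
log = io.StringIO()
append_record(log, make_decision_record(
    "support-chatbot",
    {"question": "Can I get a refund?"},
    "Refunds are available within 30 days.",
    reviewed_by="j.doe",
))
append_record(log, make_decision_record(
    "resume-screener", {"candidate_id": 1042}, "advance to interview"))

unreviewed = [r for r in read_records(log) if not r["human_reviewed"]]
print(len(unreviewed), "decision(s) still awaiting human review")
```

One record per decision keeps the log easy to search and easy to hand over. Take care that logged inputs do not themselves create a privacy problem, since they may contain personal data.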

The NAIC Model Bulletin names “transparency and explainability of outcomes to the impacted consumer” and “the extent to which humans are involved in the final decision-making process” as key governance factors.

Training & Competency programs demonstrate organizational capability. Insurers look for documented staff AI literacy programs teaching employees how AI works and its limitations, role-specific training tailored to how different functions use AI, continuing education requirements keeping knowledge current as AI evolves, and documentation of training completion proving employees have received the required training.

Emerging Affirmative Products

Specialized insurers are developing products specifically for AI risks, though the market remains nascent and expensive.

Testudo, launching as a Lloyd’s coverholder in late 2025, created claims-made policies covering three categories: generative AI errors (financial loss suffered by third parties due to negligent acts, errors, omissions, or misstatements arising from AI outputs), intellectual property infringement and defamation (legal claims related to defamation, libel, slander, trademark violations, or copyright infringement caused by AI outputs), and regulatory investigations and penalties arising from AI deployment.

Testudo expects strong demand from companies that integrate vendor generative AI systems into their operations—exactly the SME use case. However, Testudo will not yet underwrite generative AI developers and vendors that sell their technology products as services, limiting coverage to AI users rather than creators.

Armilla Insurance Services introduced an AI liability insurance product in April 2025, underwritten by Lloyd’s of London syndicates, including Chaucer Group. Armilla’s offering explicitly addresses AI-specific perils such as hallucinations (erroneous AI outputs), degrading model performance, and mechanical or algorithmic failures.

Munich Re’s aiSure, launched in 2018, pioneered AI insurance with performance guarantee coverage for AI technologies. These early products focused primarily on large enterprises, but insurers are beginning to develop SME-appropriate offerings.

The Coverage Gap Problem

Even with emerging products, significant gaps remain. No single policy covers all AI perils. As WTW’s December 2025 report notes, “Currently, companies often rely on a patchwork of policies to cover AI risks. No single policy covers all AI perils, but different policies cover different aspects.” [28]

Traditional General Liability policies won’t cover AI-related bodily injury or property damage if new exclusions apply. Cyber policies typically don’t cover bodily injury or property damage resulting from AI failures. Professional Indemnity policies may exclude AI-assisted professional services. New specialty AI policies often have significant limitations, high premiums, and extensive governance requirements.

This creates what insurance lawyers call the “Swiss cheese” effect—multiple policies, each with its own holes, theoretically combining to provide complete coverage but leaving gaps where holes align.

FEATURED VIDEO: AI, Liability & Risk in Generative AI

Stimson Center / Washington Foreign Law Society — AI, Liability & Risk

Expert panel discussion on liability frameworks for generative AI, examining how existing legal structures apply to AI-generated content and automated decision-making. Essential viewing for understanding the legal landscape.

Regulatory Landscape: What’s Driving the Change

Insurance markets don’t operate in a vacuum. Regulatory developments are accelerating the transition from silent to affirmative AI coverage.

Table 4: Key Regulatory and Industry Timeline (2025-2026)

| Date | Event | Jurisdiction | Impact on SMEs | Compliance Requirement |
| --- | --- | --- | --- | --- |
| Jan 1, 2026 | Verisk GL AI Exclusion Forms Available | United States (multi-state) | Carriers can exclude AI from GL policies | Review renewal policies for new exclusions |
| Jan 1, 2026 | CA AI Transparency Act (SB 942) Effective | California | AI detection tools, content disclosure required | Implement disclosure for 1M+ users |
| Jan 1, 2026 | CA Training Data Transparency (AB 2013) | California | GenAI developers must publish dataset summaries | N/A for AI users, relevant for developers |
| Jan 1, 2026 | Illinois AI Employment Notice (HB 3773) | Illinois | Notify employees of AI use in employment decisions | Update hiring/HR notification procedures |
| June 30, 2026 | Colorado AI Act Effective (Delayed) | Colorado | High-risk AI systems need governance, assessments | Implement AIMS if using AI in listed sectors |
| Aug 2, 2026 | EU AI Act High-Risk Provisions | European Union (27 states) | High-risk AI requires conformity assessment | EU operations: implement EUDP compliance |
| Q4 2026 | NAIC Model Bulletin Full Adoption Expected | 28+ US states | Insurer AI governance becomes standard expectation | Expect governance questions in underwriting |
| 2027+ | Affirmative AI Coverage Market Maturity | Global | Specialty policies become mainstream | Shop specialty market, demonstrate governance |

[Figure 5: Regulatory Convergence Map, “Global AI Governance Timeline.” World map showing U.S. state-by-state adoption dates, EU AI Act zones, and APAC developments.]

NAIC Model Bulletin

Twenty-four U.S. states have fully adopted the NAIC Model Bulletin with minimal changes, making it the de facto national standard. Four additional states have adopted similar guidelines and standards.

The bulletin establishes five core principles: Transparency (AI systems must be explainable with decision-making processes understandable to regulators and consumers), Accountability (clear lines of responsibility must be established for AI-driven decisions), Fairness and Equity (AI must be designed to avoid discriminatory outcomes with mechanisms to detect and mitigate bias), Privacy and Data Protection (robust safeguards required to protect sensitive consumer data used in AI models), and Safety and Reliability (AI systems should be rigorously tested to ensure consistent and safe performance).

While the Model Bulletin technically applies to how insurers govern their own AI use, it provides the framework insurers use to evaluate policyholders. If insurers must meet these standards, they increasingly expect policyholders to meet comparable standards before insurers will accept AI-related risk.

State-Specific Requirements

The Colorado Artificial Intelligence Act, originally set to take effect February 1, 2026, was delayed to June 30, 2026, by Senate Bill 25B-004. The Act applies to companies considered deployers and developers under the law, governing high-risk AI systems that make consequential decisions about housing, employment, education, healthcare, insurance, and lending.

The Act requires deployers to adopt risk-management policies, perform initial and annual impact assessments, issue pre-decision and adverse-decision consumer notices, and create website disclosures. Violations are deemed unfair trade practices under the Colorado Consumer Protection Act, with penalties up to $20,000 per violation.

Importantly, the Colorado AI Act includes an exemption for insurers subject to separate Colorado insurance regulations, but businesses using AI in other contexts must comply.

California has enacted multiple AI-related laws effective in 2025-2026. Assembly Bill 1008 updates the California Consumer Privacy Act (CCPA) definition of “personal information” to clarify that personal information can exist in AI systems capable of outputting personal information. [32] This seemingly small change brings AI systems into the CCPA’s requirements of notice, consent, data subject rights, and reasonable security controls.

Senate Bill 942, the California AI Transparency Act, effective January 1, 2026, requires covered providers (AI systems publicly accessible within California with more than one million monthly visitors or users) to create AI detection tools allowing users to query which content was AI-created. Covered providers must also include “clear and conspicuous” visible disclosures in AI-generated content. Violations carry penalties of $5,000 per violation per day.

Assembly Bill 2013, the Generative AI Training Data Transparency Act, effective January 1, 2026, mandates that developers of generative AI systems publish “high-level summaries” of datasets used to develop and train GenAI systems.

New York issued guidance focused on underwriting and pricing, emphasizing risk of adverse effects from AI use. The New York Department of Financial Services (DFS) requires insurers to test AI systems for unfair discrimination, maintain robust governance and documentation practices, manage third-party vendor relationships, and ensure consumer transparency.

Illinois House Bill 3773, effective January 1, 2026, amends the Illinois Human Rights Act to prohibit AI use that results in illegal discrimination in recruitment, hiring, promotion, selection for training, or discipline decisions. It also requires employers to notify employees when AI is used in employment decisions.

EU AI Act Impact

The EU Artificial Intelligence Act, published in the EU’s Official Journal on July 12, 2024, establishes harmonized rules for AI across all 27 EU member states. The regulation entered into force on August 1, 2024, though most provisions won’t apply until August 2, 2026.

The Act takes a risk-based approach, prohibiting certain unacceptable AI practices outright and imposing escalating obligations on other AI systems based on their risk level. AI systems deemed “high-risk”—including those used in medical devices, recruiting, or credit scoring—must meet strict requirements for risk management, data governance, transparency, human oversight, and conformity assessments before deployment.

For businesses with global operations or EU customers, the AI Act creates compliance obligations that insurers will scrutinize. Failing to meet AI Act requirements could void insurance coverage if policies condition coverage on legal compliance.

Action Plan for SMEs

The following phased approach helps SMEs navigate the transition to affirmative AI coverage without overwhelming limited resources.

Phase 1: Immediate Actions (Next 30 Days)

Audit Current Policies

Request copies of all active insurance policies from your broker or carrier. Don’t rely on summaries—you need the actual policy documents and endorsements.

Search each policy for specific exclusion language. Use your word processor’s search function to look for: “Artificial Intelligence,” “Algorithm,” “Machine Learning,” “Automated Decision,” “Generative AI,” and “Neural Network.”

Document your findings in a simple spreadsheet with columns for Policy Type, Carrier Name, Policy Number, Renewal Date, AI Language Found (yes/no), and Exclusion Summary. Pay particular attention to policies renewing in Q1-Q2 2026, as these will likely be the first affected by new exclusions.
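The keyword search and findings spreadsheet described above could be automated roughly as follows. This is a minimal sketch, assuming each policy has already been exported to plain text; the function names, the dictionary keys, and the sample policy are illustrative only. The matched terms merely stand in for the Exclusion Summary column, which still needs a human reading of the actual wording.

```python
import csv
import io
import re

# Search terms taken from the audit step above.
AI_TERMS = [
    "Artificial Intelligence",
    "Algorithm",
    "Machine Learning",
    "Automated Decision",
    "Generative AI",
    "Neural Network",
]


def find_ai_terms(policy_text: str) -> list[str]:
    """Return the search terms that appear in the text (case-insensitive)."""
    return [
        term
        for term in AI_TERMS
        if re.search(re.escape(term), policy_text, flags=re.IGNORECASE)
    ]


def audit_rows(policies: list[dict]) -> list[dict]:
    """Build one findings row per policy, using the spreadsheet columns above."""
    rows = []
    for policy in policies:
        hits = find_ai_terms(policy["text"])
        rows.append({
            "Policy Type": policy["type"],
            "Carrier Name": policy["carrier"],
            "Policy Number": policy["number"],
            "Renewal Date": policy["renewal"],
            "AI Language Found": "yes" if hits else "no",
            # Placeholder: matched terms, to be replaced by a written summary.
            "Exclusion Summary": "; ".join(hits),
        })
    return rows


def to_csv(rows: list[dict]) -> str:
    """Serialize the findings rows to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical example data, for illustration only.
sample = [{
    "type": "General Liability",
    "carrier": "Example Mutual",
    "number": "GL-0001",
    "renewal": "2026-03-01",
    "text": "This policy excludes loss arising from generative AI or any machine learning model.",
}]
print(to_csv(audit_rows(sample)))
```

A keyword hit only tells you where to read closely. Exclusions can also be worded without any of these terms, so a clean scan is not proof that a policy is free of AI restrictions.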

Identify AI Usage

Create an AI inventory by surveying each department about tool usage. Ask specifically about:

- Marketing tools (content creation, social media management, email automation)

- Customer service platforms (chatbots, helpdesk software, CRM systems)

- HR systems (applicant tracking, performance management, scheduling)

- Financial software (accounting, invoicing, expense management)

- Operations tools (supply chain, inventory management, quality control)

Review SaaS contracts for embedded AI features. Many vendors have added AI capabilities without prominently disclosing them. Check vendor terms of service, feature lists, and recent update announcements.

Request Feature Update Logs: Contact your top 5 software vendors and request their “Feature Update Log,” “Release Notes Archive,” or “Product Changelog” for the past 24 months. Search these documents for AI-related terms to identify when capabilities were added.

Create a simple AI inventory spreadsheet with columns for Department, Tool Name, Vendor, Primary Purpose, Customer-Facing (yes/no), Decision-Making Role, and Date AI Features Added.
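One way to fill the “Date AI Features Added” column is to scan each vendor’s release notes for AI-related wording and take the earliest match. The sketch below assumes the notes have been collected as (date, text) pairs; the search pattern, class name, and the fictional vendor data are assumptions for illustration.

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Illustrative pattern; adjust to each vendor's vocabulary.
AI_PATTERN = re.compile(r"\b(AI|machine learning|generative|LLM)\b", re.IGNORECASE)


@dataclass
class InventoryEntry:
    """One row of the AI inventory, mirroring the spreadsheet columns above."""
    department: str
    tool_name: str
    vendor: str
    primary_purpose: str
    customer_facing: bool
    decision_making_role: str
    date_ai_features_added: Optional[date] = None


def first_ai_release(release_notes: list[tuple[date, str]]) -> Optional[date]:
    """Return the earliest release date whose notes mention an AI-related term."""
    ai_dates = [released for released, text in release_notes if AI_PATTERN.search(text)]
    return min(ai_dates) if ai_dates else None


# Hypothetical changelog entries for a fictional helpdesk product.
notes = [
    (date(2024, 3, 5), "Improved email templates and maintenance fixes."),
    (date(2024, 9, 17), "New AI reply suggestions for support agents."),
    (date(2025, 2, 1), "Generative summaries for long ticket threads."),
]

entry = InventoryEntry(
    department="Customer Service",
    tool_name="HelpDeskPro",          # fictional tool
    vendor="Example Software Inc.",   # fictional vendor
    primary_purpose="Ticket handling",
    customer_facing=True,
    decision_making_role="Suggests replies; a human sends them",
    date_ai_features_added=first_ai_release(notes),
)
print(entry)
```

The word-boundary pattern avoids false hits on words such as “email” or “maintenance” that merely contain the letters “ai”.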

Assess Risk Exposure

Map each AI use to potential liability types. Customer-facing chatbots create defamation, contract, and privacy risks. HR AI creates employment discrimination risks. Marketing AI creates copyright and privacy risks. Product-related AI creates product liability risks.

Estimate potential financial exposure for each scenario using worst-case thinking. What if your chatbot makes a $50,000 promise to a customer? What if your hiring AI systematically discriminates and triggers a class action? What if your marketing AI infringes a major copyright?

These estimates will help prioritize mitigation efforts and justify governance investments.

Phase 2: Risk Mitigation (30-90 Days)

Implement Basic Governance

Designate someone with AI oversight responsibility. For SMEs, this is typically an owner, CFO, or operations manager—someone with authority to approve new tools and enforce policies.

Create a simple AI use policy. You don’t need a 50-page document. A one-page policy covering approval requirements (who authorizes new AI tools), use restrictions (what AI can’t be used for), review requirements (who reviews AI outputs), and incident reporting (what to do when something goes wrong) suffices initially.

Establish an approval process for new AI tools requiring anyone wanting to add new AI capabilities to submit a brief form explaining what the tool does, why it’s needed, whether it’s customer-facing, and what risks it might create.

Set up an incident logging system. This can be as simple as a shared spreadsheet where staff log any AI errors, customer complaints about AI, or concerning AI behavior.

Add Human Checkpoints

Identify critical decision points where AI outputs matter most. Common examples include customer communications before sending, contract terms before commitment, employment decisions before implementation, financial transactions before processing, and marketing content before publication.

Assign human review responsibilities clearly. Who reviews what? What authority do they have? What should they check for?

Document review procedures in writing. Create simple checklists for reviewers covering key questions: Does this make sense? Could this harm someone? Does this align with our policies? Is this something I would say personally?

Create override protocols explaining how to reject AI recommendations and escalate concerns.

Enhance Documentation

Start maintaining an audit trail for AI decisions. For each significant AI-influenced decision, record the date, decision type, AI recommendation, human reviewer, final decision (accept/modify/reject), and rationale for deviation if AI recommendation was changed.
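The audit-trail fields listed above map naturally onto a small record type. The following is a sketch only, assuming records are appended to a log as JSON lines; the class and function names are hypothetical, and the rule that a changed recommendation must carry a rationale is enforced in code.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date


@dataclass
class AIDecisionRecord:
    decision_date: date
    decision_type: str
    ai_recommendation: str
    human_reviewer: str
    final_decision: str  # "accept", "modify", or "reject"
    rationale: str = ""

    def __post_init__(self) -> None:
        if self.final_decision not in {"accept", "modify", "reject"}:
            raise ValueError(f"Unknown final decision: {self.final_decision!r}")
        # A deviation from the AI recommendation must be explained.
        if self.final_decision != "accept" and not self.rationale.strip():
            raise ValueError("A rationale is required when the AI recommendation is changed")


def to_log_line(record: AIDecisionRecord) -> str:
    """Serialize one record as a JSON line suitable for an append-only log."""
    data = asdict(record)
    data["decision_date"] = record.decision_date.isoformat()
    return json.dumps(data)


# Hypothetical example entry.
record = AIDecisionRecord(
    decision_date=date(2026, 1, 15),
    decision_type="refund approval",
    ai_recommendation="Approve $120 refund",
    human_reviewer="J. Smith",
    final_decision="modify",
    rationale="Reduced to $80 under the refund policy",
)
print(to_log_line(record))
```

Rejecting incomplete records at creation time keeps the log usable as evidence of human oversight, which is the point of maintaining it.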

Log all training activities related to AI. Who received training? What topics were covered? When did it occur?

Maintain records of vendor communications, especially regarding AI capabilities, updates, and any incidents or concerns raised.

Phase 3: Insurance Strategy (60-120 Days)

Broker Engagement

Schedule a policy review meeting specifically focused on AI coverage. Come prepared with your AI inventory and current policy audit.

Ask specific questions requiring definitive answers:

- “Does our current GL policy cover claims arising from AI-generated content?”

- “What AI exclusions will apply at our renewal?”

- “What governance would we need to qualify for affirmative AI coverage?”

- “Are specialty AI policies available for businesses our size, and what do they cost?”

- “If we face an AI-related claim today, what portion would likely be covered?”

Push back on vague answers. If your broker says, “it depends,” ask what it depends on and get examples of covered versus excluded scenarios.

Coverage Options Analysis

Negotiate Exclusion Narrowing: Even if your carrier wants to add AI exclusions, negotiation room often exists. Request defined terms limiting what counts as “artificial intelligence.” Seek carve-backs for governed AI where you can demonstrate strong controls. Get concrete examples in writing of excluded versus covered scenarios.

Explore Affirmative Endorsements: Ask carriers about endorsements explicitly covering certain AI uses. Compare coverage scope carefully against your actual AI usage. Evaluate cost versus benefit considering your risk exposure estimates. Understand governance requirements to maintain coverage.

Consider Specialty AI Policies: Request quotes from emerging AI insurers like Testudo and Armilla. Create a coverage comparison matrix showing what each policy covers and excludes. Benchmark costs against potential exposure. Review application requirements to ensure you can meet them.

Evaluate Cyber Policy Enhancement: Check whether your cyber policy covers AI-related risks. Consider increasing limits if cyber will be your primary AI coverage. Add relevant endorsements if available. Clarify gaps, particularly around bodily injury and property damage.

Cost Management Strategies

Bundle coverage where possible to get package discounts. Demonstrate strong governance to qualify for premium credits—some insurers offer reduced rates for documented AI controls. Consider higher deductibles to reduce premiums if you have sufficient reserves. Phase implementation, perhaps starting with basic exclusions and adding affirmative coverage as budget allows.

Phase 4: Continuous Improvement (Ongoing)

Monitoring & Testing

Conduct quarterly AI use reviews examining whether new tools have been added, usage patterns have changed, or new risks have emerged.

Establish a bias audit schedule. Even informal bias testing is better than none. Review AI outputs for patterns that might indicate bias. Compare AI decisions across demographic groups where possible.
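An informal comparison across groups can be as simple as computing each group’s rate of favorable outcomes and the ratio between the lowest and highest rate. The sketch below uses made-up data and hypothetical function names. The 0.8 threshold is an assumption borrowed from the “four-fifths” screening heuristic used in US employment contexts; it is a prompt for human review, not a legal determination.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Compute the share of favorable outcomes per group."""
    totals: dict[str, int] = defaultdict(int)
    favorable: dict[str, int] = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def impact_ratio(rates: dict[str, float]) -> float:
    """Ratio of the lowest group rate to the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group label, advanced to interview?)
outcomes = (
    [("group_a", True)] * 30 + [("group_a", False)] * 20
    + [("group_b", True)] * 18 + [("group_b", False)] * 32
)
rates = selection_rates(outcomes)
ratio = impact_ratio(rates)
print(rates, round(ratio, 2))
if ratio < 0.8:  # assumed review threshold, not a legal test
    print("Flag for human review")
```

Small samples make these ratios noisy, so a flagged result is best treated as a reason to look closer and document the review.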

Track performance metrics including error rates, customer complaints, override frequency, and incident reports.

Analyze every incident to understand root causes and identify improvements.

Training Programs

Develop role-based AI training tailored to how different functions use AI. Marketing teams need different training than HR staff.

Conduct annual refresher courses as AI capabilities and risks evolve rapidly.

Include AI governance in new hire orientation so everyone starts with a baseline understanding.

Brief leadership regularly on AI risks, incidents, and governance improvements.

Policy & Procedure Updates

Review governance policies annually against current risks and industry best practices.

Monitor regulatory changes affecting your jurisdiction and industry.

Adopt industry best practices as they emerge and mature.

Integrate lessons learned from your own incidents and industry-wide developments.

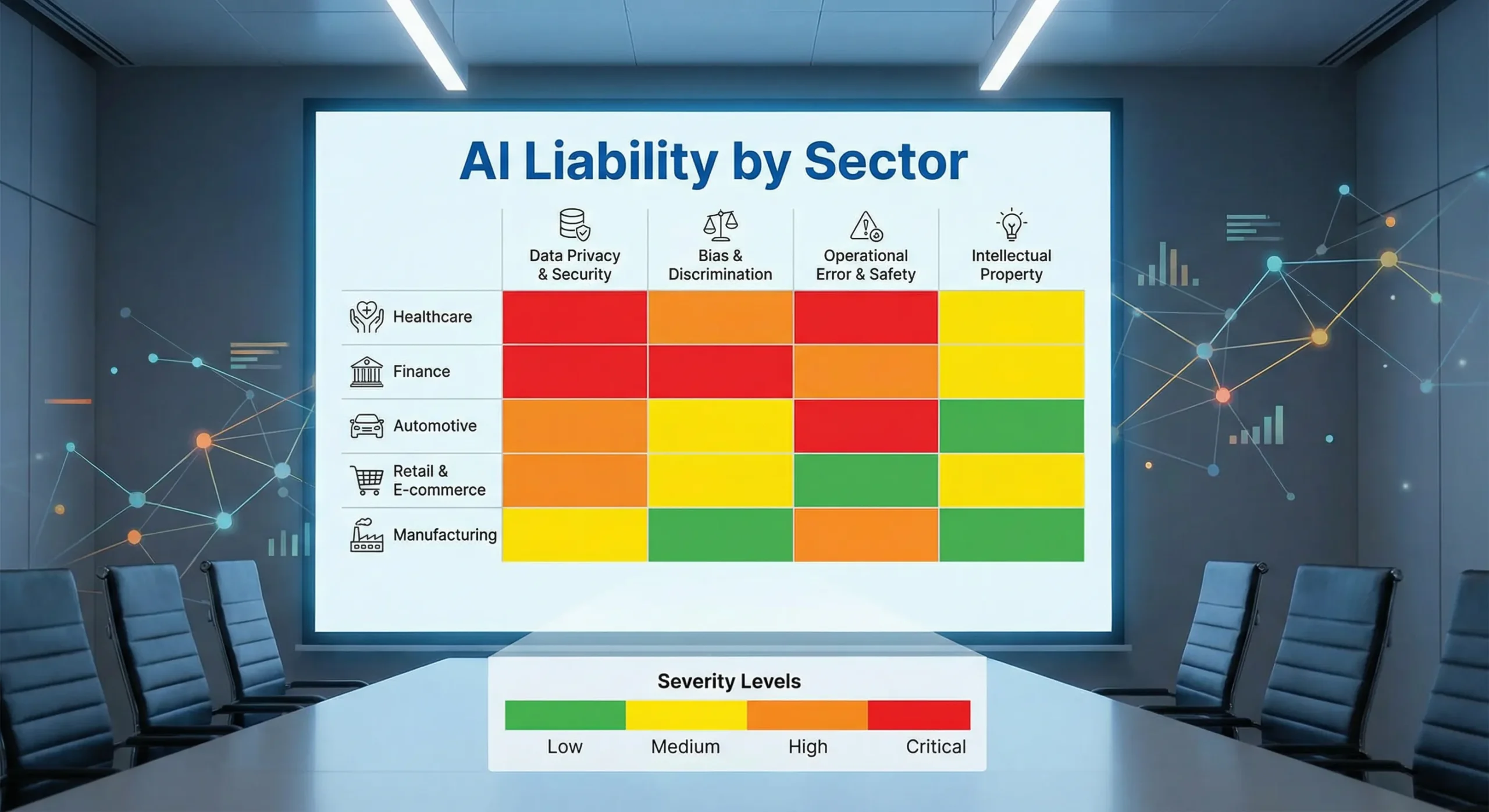

Industry-Specific Considerations

While the principles above apply broadly, certain industries face unique AI risks requiring tailored approaches.

Retail & E-Commerce

Retailers increasingly use AI for customer service chatbots handling inquiries and returns, personalized pricing algorithms adjusting prices based on customer data, inventory management AI forecasting demand and optimizing stock, and marketing automation generating product descriptions and targeted ads.

Key risks include binding contract formation through chatbot promises, price discrimination claims from algorithmic pricing, consumer protection violations from misleading AI-generated content, and privacy violations from AI-driven customer tracking.

Retail-specific controls should include human review of all pricing rule changes, mandatory disclosure when chatbots are used, clear limitations on chatbot authority, and opt-out mechanisms for personalized pricing.

Manufacturing

Manufacturers deploy AI in quality control automation detecting defects, predictive maintenance forecasting equipment failures, supply chain optimization managing procurement and logistics, and safety system AI monitoring hazardous conditions.

Manufacturing-specific risks include product liability for AI-missed defects, workplace safety failures from AI errors, supply chain disruptions from optimization failures, and environmental compliance violations from monitoring system failures.

Critical controls include human verification of safety-critical AI outputs, redundant quality control processes, documented override procedures for AI recommendations, and regular calibration and testing of AI systems.

Professional Services (Non-Advisory)

Professional services firms use AI for document automation generating contracts and reports, client communication tools managing correspondence, project management AI optimizing resource allocation, and billing optimization analyzing time entries.

Unique risks include professional liability for AI-generated work product errors, client confidentiality breaches through AI processing, contract disputes from automated document errors, and billing disputes from AI-suggested adjustments.

Essential controls include attorney/professional review of all final deliverables, client consent for AI use in their matters, strict data segregation in AI systems, and documented human oversight of billing decisions.

Healthcare & Aged Care

Healthcare providers increasingly implement patient scheduling AI optimizing appointment booking, clinical decision support suggesting diagnoses or treatments, billing automation handling coding and claims generation, and compliance monitoring tracking regulatory requirements.

Healthcare faces heightened risks, including medical malpractice for AI-influenced clinical decisions, HIPAA violations from AI data processing, billing fraud from automated coding errors, and elder abuse concerns from AI-driven care decisions.

Healthcare-specific protections require physician oversight of all clinical AI outputs, strict HIPAA-compliant AI systems, human review of all billing codes, and family notification of AI use in care decisions.

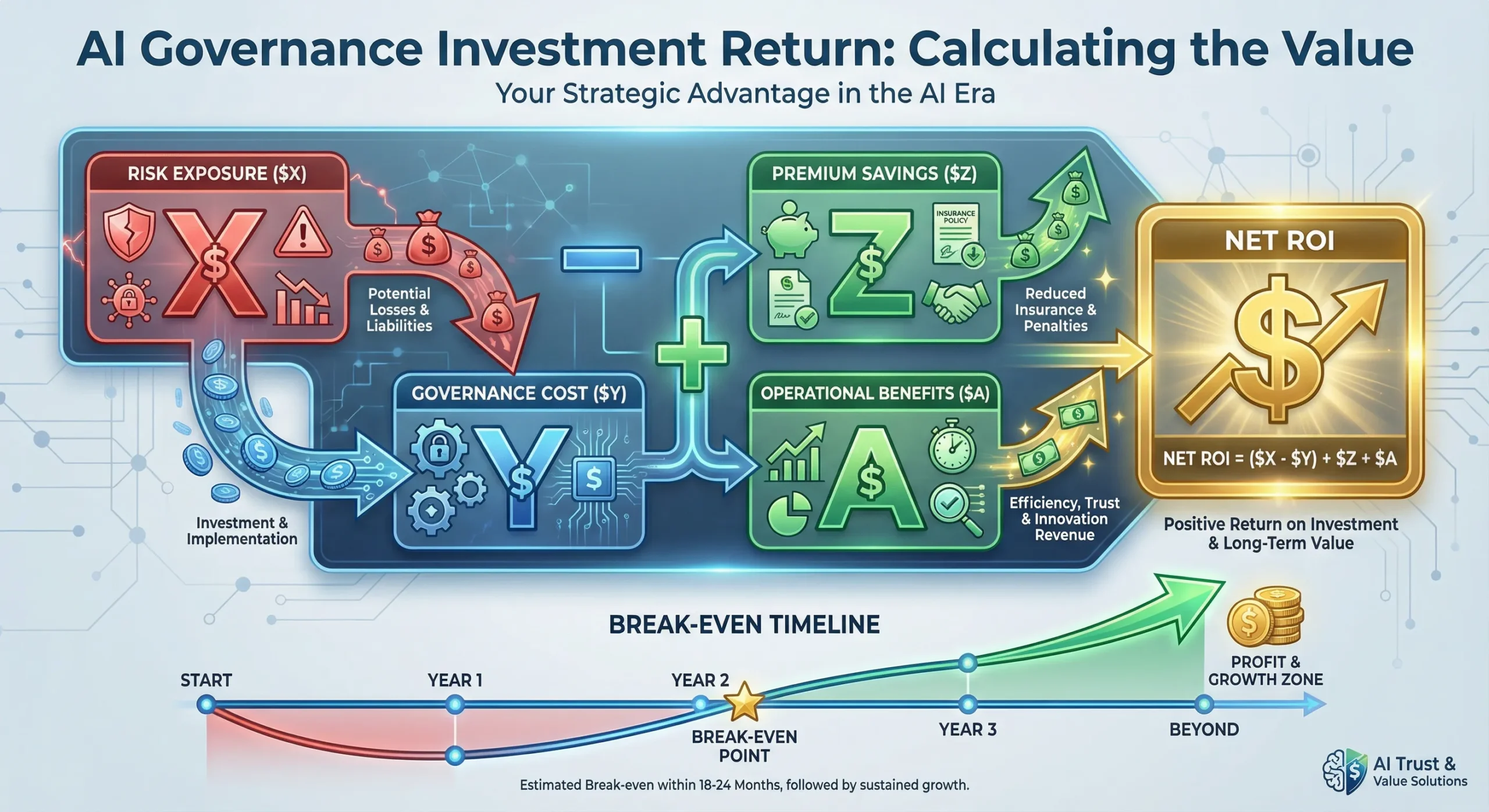

Cost-Benefit Analysis Framework

SMEs need structured approaches to evaluating AI governance investments against insurance costs and risk exposure.

Calculating Potential Exposure

Average Claim Costs by Category:

- Contract disputes: $25,000-$150,000

- Privacy violations: $50,000-$500,000

- Employment discrimination: $100,000-$1,000,000

- Product liability: $250,000-$5,000,000

- Professional malpractice: $50,000-$2,000,000

Likelihood Estimation: Rate each risk category as High (>20% chance over 5 years), Medium (5-20% chance), or Low (<5% chance) based on your AI usage patterns.

Insurance Gap Quantification: For each risk, estimate the portion likely to be uncovered under new exclusions. If your GL policy excludes all AI-related claims, your gap equals 100% of potential exposure for AI-influenced incidents.

Total Exposure Calculation: Multiply potential claim costs by likelihood percentages and uncovered portions. This rough estimate helps justify governance investments.

Example: Medium-likelihood (12.5% over 5 years) contract dispute ($87,500 average) with 100% uncovered = $10,938 expected uncovered cost over 5 years, or roughly $2,188 per year.
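The exposure arithmetic can be checked with a few lines of code. In this sketch the claim cost is the midpoint of the contract-dispute range and the likelihood is the midpoint of the Medium band. Because that likelihood is stated over 5 years, the product is a 5-year figure; dividing by 5 is a simple straight-line annualization, which is an assumption rather than an actuarial method.

```python
def expected_uncovered_loss(claim_cost: float, likelihood: float, uncovered_share: float) -> float:
    """Expected uncovered loss over the period the likelihood refers to."""
    return claim_cost * likelihood * uncovered_share


# Figures from the contract-dispute example above.
low_cost, high_cost = 25_000, 150_000
average_cost = (low_cost + high_cost) / 2   # 87,500
five_year_likelihood = 0.125                # midpoint of the Medium band (5-20%)
uncovered_share = 1.0                       # GL policy excludes all AI-related claims

five_year = expected_uncovered_loss(average_cost, five_year_likelihood, uncovered_share)
per_year = five_year / 5                    # straight-line annualization (assumption)

print(f"5-year expected exposure: ${five_year:,.2f}")
print(f"Approximate yearly exposure: ${per_year:,.2f}")
```

Running the same function for each risk category that applies to your business and summing the results gives the total exposure estimate.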

Governance Investment

Direct Costs:

- Policy documentation: $2,000-$5,000 (one-time legal/consultant review)

- Audit trail software: $500-$2,000 per year

- Training development: $1,000-$3,000 (initial, then annual updates)

- Annual external audit: $5,000-$15,000 (if required for affirmative coverage)

Time Costs: Calculate staff time at loaded hourly rates:

- Policy development: 20-40 hours

- Training delivery: 2-4 hours per employee annually

- Ongoing monitoring: 2-5 hours weekly

- Incident response: Variable, budget 40 hours annually

Total Governance Investment:

- First year: $15,000-$35,000

- Ongoing annual: $8,000-$20,000

Insurance Premium Impact

Current GL Premium: Note your current annual General Liability premium as baseline.

Estimated Change with Exclusions: Industry estimates suggest premiums might drop 5-10% when exclusions reduce insurer exposure, but this “savings” leaves you exposed.

Affirmative Coverage Costs: Early market estimates for affirmative AI coverage range from 15-50% premium increase depending on AI usage extent, governance maturity, claim history, and industry risk profile.

Specialty Policy Pricing: Emerging specialty AI policies typically cost $5,000-$25,000 annually for SME-appropriate limits ($1-5 million), varying significantly based on AI usage and governance.

ROI Calculation

Risk Reduction Value: If strong governance reduces claim likelihood from 12.5% to 5% over 5 years, the value equals the difference times the potential loss. Using the example above: 7.5% x $87,500 = $6,563 over 5 years, or roughly $1,313 per year.
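The risk-reduction value follows the same pattern as the exposure estimate. The sketch below reuses the contract-dispute figures; the function name is hypothetical, and the division by 5 again assumes the likelihoods are 5-year probabilities spread evenly across the period.

```python
def risk_reduction_value(potential_loss: float,
                         likelihood_before: float,
                         likelihood_after: float) -> float:
    """Expected loss avoided when governance lowers the claim likelihood."""
    return (likelihood_before - likelihood_after) * potential_loss


potential_loss = 87_500        # average contract-dispute cost from the example
before, after = 0.125, 0.05    # 5-year likelihoods without and with governance

value_five_year = risk_reduction_value(potential_loss, before, after)
value_per_year = value_five_year / 5   # straight-line annualization (assumption)

print(f"Value over 5 years: ${value_five_year:,.2f}")
print(f"Approximate yearly value: ${value_per_year:,.2f}")
```

Repeating the calculation for each risk category and summing gives a figure to set against the governance costs listed earlier, alongside any premium savings and operational benefits.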

Premium Savings Potential: Demonstrating strong governance might reduce affirmative coverage premiums by 10-20% compared to poorly-governed competitors.

Operational Benefits: Better AI governance often improves AI performance, reduces errors, and enhances customer satisfaction—benefits extending beyond insurance.

Competitive Advantage: Being insurable when competitors aren’t creates market advantages, helps you meet client requirements, and builds regulatory confidence.

Outlook

Understanding likely developments helps SMEs plan strategically rather than react tactically.

Market Predictions for 2026-2027

Insurance industry analysts expect AI exclusions to become standard across most policies by late 2027. The October 2025 JD Supra analysis notes that “the rise of AI insurance policy exclusions like these signals a shift in insurer risk appetite and a tightening of policy language that could leave many AI-related claims uninsured.” [38]

Affirmative coverage requirements will likely tighten as insurers gain claims experience. Early affirmative products may have relatively lenient governance requirements, but these will become more stringent as insurers better understand AI risks.

The specialty AI insurance market will grow significantly. Karthik Ramakrishnan, founder and CEO of Armilla, told The Insurer in December 2025, “This is a coming of age for the AI market,” noting that exclusions were inevitable because commercial general liability policies weren’t designed to cover AI. [39]

Regulatory convergence across jurisdictions appears likely. While specific requirements will vary, core principles around transparency, human oversight, and bias testing are emerging as universal standards.

Technology Trends

Agentic AI—systems capable of autonomous planning and action—represents the next wave. The Future of Privacy Forum’s December 2025 report notes that “legislators are beginning to explore ‘AI agents’ capable of autonomous planning and action, systems that move beyond generative AI’s content creation and towards more complex functions.” [40]

Increased automation across business functions will expand AI touchpoints, creating more potential liability exposure.

AI-as-a-Service growth will make sophisticated AI accessible to more SMEs, but will also complicate liability allocation between vendors and users.

Latent AI expansion means even businesses that don’t think they use AI will increasingly face AI-related risks through their software stack.

Strategic Positioning

Early adopters of strong AI governance will gain advantages. Being demonstrably insurable creates competitive differentiation when others struggle to obtain coverage.

Governance becomes a market differentiator. Clients increasingly ask vendors about AI governance. Being able to demonstrate robust controls wins business.

Insurability serves as a competitive moat. If competitors can’t obtain affordable coverage, you gain a sustainable competitive advantage.

Client trust builds from transparency about AI use and governance, creating loyalty difficult for competitors to overcome.

Conclusion

The insurance industry’s transition from silent to affirmative AI coverage represents a fundamental shift in how businesses must approach artificial intelligence deployment. Starting January 2026, SMEs can no longer assume their existing General Liability policies cover AI-related incidents.

Three key takeaways should drive immediate action:

First, silent AI coverage is ending now. Verisk’s January 2026 exclusion forms and major insurers’ filings mean your next policy renewal likely includes new AI restrictions. The assumption that “our insurance covers everything not explicitly excluded” no longer holds for AI risks.

Second, SMEs face significant exposure. You don’t need to be an “AI company” to face AI liability. If you use chatbots, marketing automation, hiring tools, or virtually any modern software, you’re using AI systems that create potential liability—liability your insurance may no longer cover.

Third, affirmative coverage requires governance. Insurers won’t accept AI risks without evidence that you’re managing those risks appropriately. Human oversight, documented controls, bias testing, and training programs are becoming prerequisites for coverage, not optional enhancements.

The good news is that strong AI governance delivers benefits beyond insurance coverage. Better-governed AI performs better, creates fewer problems, and builds client trust. The governance you implement to qualify for insurance coverage also makes your business more resilient and competitive.

Immediate Next Steps

This week:

- Request copies of all current insurance policies

- Review renewal dates, prioritizing policies renewing in Q1-Q2 2026

- Create a basic AI inventory listing tools and purposes

Next month:

- Schedule a policy review meeting with your insurance broker

- Designate someone responsible for AI oversight

- Draft a simple one-page AI use policy

Next quarter:

- Implement human review checkpoints for critical AI outputs

- Begin documenting AI decisions and reviews

- Explore affirmative coverage options and specialty policies

The window for proactive planning is closing. Businesses that wait until renewal notices arrive will face pressure to make rushed, expensive decisions. Those who plan now can negotiate better terms, implement governance thoughtfully, and maintain coverage continuity.

The shift from silent to affirmative AI coverage parallels the earlier silent cyber transition. Businesses that prepared for silent cyber’s end avoided coverage gaps and claim denials. Those who ignored the warnings faced expensive lessons.

History is repeating itself with AI. The question isn’t whether the transition will happen—it’s already happening. The question is whether your business will be ready.

Frequently Asked Questions

Q1: What exactly is “silent AI coverage”?

Silent AI coverage refers to insurance policies that may cover AI-related risks simply because they don’t explicitly exclude them. Just as “silent cyber” risks were covered under traditional policies before insurers added cyber exclusions, many current General Liability policies don’t specifically mention AI, meaning AI-related claims might technically be covered. According to Swiss Re Institute’s September 2024 analysis, “AI risks are neither explicitly mentioned, limited nor excluded in policy language, and different exposures may be covered by different policies already in existence.” [41]

Q2: When do these AI exclusions take effect?

Verisk’s new general liability exclusion forms for generative AI became available to insurers on January 1, 2026. However, the actual impact on your coverage depends on your specific policy renewal date. Policies renewing in Q1 and Q2 of 2026 are most likely to be the first affected. As IndependentAgent.com reported in October 2025, “Effective January 2026, these exclusionary forms provide insurers with the ability to ‘generally exclude this emerging exposure.’” [42]

Q3: Does my current GL policy cover AI incidents?

Probably yes for incidents occurring now, but this may change at renewal. Most current policies don’t explicitly exclude AI, so traditional coverage principles apply: if the claim falls within standard GL coverage (bodily injury, property damage, personal/advertising injury), it’s likely covered regardless of AI involvement. However, Kennedys Law warns that “many professional indemnity policies may not explicitly cover AI-related errors or omissions. If AI causes a loss, insurers may argue that the claim falls outside coverage.” [43]

Q4: What types of claims won’t be covered under the new exclusions?

New AI exclusions typically eliminate coverage for AI-generated content errors (defamation, copyright infringement, false advertising), AI-caused injuries or property damage, employment discrimination from AI hiring/management tools, privacy violations from AI data processing, contract disputes arising from AI communications (chatbots making unauthorized promises), and product defects attributable to AI-assisted design. WR Berkley’s “absolute” exclusion, described in the Harvard Law School Forum on Corporate Governance, eliminates coverage for “any claim based upon, arising out of, or attributable to” AI use, deployment, or development.

Q5: What is “affirmative AI coverage”?

Affirmative AI coverage provides explicit, intentional insurance protection for specified AI-related risks, in contrast to silent coverage, where AI might be covered by default. Willis Towers Watson explained in December 2025 that “insurers are now moving to clarify coverage for AI, either by introducing endorsements that affirm coverage for certain AI risks or by adding exclusions to standard policies.” [45] Affirmative coverage typically requires demonstrating strong AI governance and comes with higher premiums.

Q6: How much does implementing AI governance cost?

For SMEs, expect $15,000-$35,000 in first-year costs (including policy development, training, software, and potential consulting) and $8,000-$20,000 in annual ongoing costs. However, costs vary significantly based on business size, AI usage extent, industry complexity, and whether you build governance internally or hire consultants. The OECD’s 2025 SME Digitalization study found maintenance costs (40%) and training time (39%) as primary obstacles, though these investments often yield operational benefits beyond insurance compliance.

Q7: Can small businesses get AI insurance?

Yes, emerging specialty insurers are developing products specifically for AI risks. Testudo, launching as a Lloyd’s cover holder in late 2025, “expects strong demand for this coverage from companies that integrate vendor generative AI systems into their operations,” according to IndependentAgent.com. [47] Armilla Insurance Services offers AI liability insurance underwritten by Lloyd’s syndicates, addressing AI-specific perils like hallucinations and model performance degradation. However, these products are typically more expensive than traditional coverage and require demonstrating AI governance.

Q8: What is “human oversight” in the context of AI?

Human oversight means qualified humans review AI outputs before they create obligations, impact customers, or make consequential decisions. The NAIC Model Bulletin emphasizes “the extent to which humans are involved in the final decision-making process” as a key governance factor. [48] In practice, this means implementing documented review procedures showing who reviews AI outputs, decision authority clarifying who can approve AI recommendations, override mechanisms allowing humans to reject AI outputs, and audit trails documenting human involvement. Florida’s December 2025 legislation requiring that insurance claim denials “must be made by a qualified human professional” reflects this principle.

Q9: Do I need a board-level AI policy?

While large corporations require formal board oversight, SMEs need proportionate governance. The NAIC Model Bulletin states “the Board of Directors is ultimately responsible for oversight,” but for SMEs, this typically means designating an executive (owner, CFO, or operations manager) as responsible for AI governance. [50] You need a documented policy covering AI tool approval, use restrictions, review requirements, and incident reporting—but it can be a practical one-page document rather than an extensive corporate policy.

Q10: What if I use AI unknowingly through SaaS tools?

This “latent AI” creates complicated liability. Many modern software platforms incorporate AI features without prominent disclosure. If an AI-related claim arises, your vendor’s terms of service likely disclaim AI-related liability, while your insurance may exclude AI claims, leaving you exposed. Kennedys Law identified this as “self-procured AI” in May 2025, noting it “raises additional issues around source reliability and data privacy and may be even more difficult to detect and forecast.” [51] Best practice: Audit your software stack specifically for AI features by requesting “Feature Update Logs” from vendors for the past 24 months, review vendor terms regarding AI liability, and request indemnification for vendor AI errors where possible.

Q11: Is cyber insurance enough to cover AI risks?

No. While cyber insurance increasingly covers some AI-related risks, significant gaps exist. According to Above the Law’s September 2025 analysis, “cyber insurers are not panicking over AI” and coverage largely remains in effect. [52] However, cyber policies typically don’t cover bodily injury or property damage resulting from AI failures—gaps that General Liability policies traditionally filled. WTW notes that “off the shelf cyber policies usually do not cover certain ensuing losses such as property damage or bodily injury that arise from cyber incidents.” [53] You need complementary coverage.

Q12: How does the Colorado AI Act affect my business?

The Colorado Artificial Intelligence Act, effective June 30, 2026, applies to deployers and developers of high-risk AI systems making consequential decisions in employment, housing, education, healthcare, insurance, and lending. [54] If you operate in Colorado and use AI in these areas, you must adopt risk-management policies, perform initial and annual impact assessments, issue consumer notices, and create website disclosures. Violations carry penalties up to $20,000 per violation. However, the Act includes exemptions for insurers subject to separate Colorado insurance regulations and for small deployers with fewer than 50 employees.

Q13: Can I negotiate to narrow AI exclusions in my policy?

Sometimes. Even when insurers want to add AI exclusions, negotiation room often exists, particularly if you demonstrate strong governance. Request defined terms limiting what counts as “AI” to avoid overly broad definitions, seek carve-backs for governed AI where you can demonstrate controls, get written examples of excluded versus covered scenarios, and leverage competitive quotes from insurers with different approaches. Your broker’s skill in these negotiations matters significantly.

Q14: What happens if I don’t act before renewal?

You’ll face three risks: coverage gaps where AI-related claims aren’t covered by any policy, potential claim denials if insurers argue incidents were AI-related and excluded, and emergency implementation costs as you rush to meet affirmative coverage requirements. Businesses that wait until renewal notices arrive lose negotiating leverage and face pressure to accept unfavorable terms. As the Harvard Law School Forum on Corporate Governance warned, this creates “an increasing risk of directors and officers operating with unrecognized liabilities, under the false pretence that such risks are fully insured.” [55]

Q15: Where can I learn more about AI governance for insurance purposes?

The National Association of Insurance Commissioners (NAIC) Model Bulletin provides the foundational framework at content.naic.org. State insurance department websites (particularly Colorado, California, and New York) offer guidance on emerging requirements. Industry associations like the American Property Casualty Insurance Association publish AI-related guidance. For SME-specific AI implementation guidance, resources such as the OECD’s “SME Digitalisation for Competitiveness” report and TechLifeFuture.com’s SME AI Playbook series offer practical approaches tailored to smaller businesses.

References

[1] Swiss Re Institute. (2024, September 26). AI – unintended insurance impacts and lessons from “silent cyber”. https://www.swissre.com/institute/research/sonar/sonar2024/ai-silent-cyber.html

[2] IndependentAgent.com. (2025, October 21). Verisk to Roll Out New General Liability Exclusions for Generative AI Exposures. https://www.independentagent.com/vu_resource/verisk-to-roll-out-new-general-liability-exclusions-for-generative-ai-exposures/

[3] The Insurer. (2025, December). Insurer in Full: Could 2026 bring more clarity on the industry’s treatment of AI risks? https://www.slipcase.com/view/insurer-in-full-could-2026-bring-more-clarity-on-the-industry-s-treatment-of-ai-risks

[4] Harvard Law School Forum on Corporate Governance. (2025, September 22). The Hidden C-Suite Risk Of AI Failures. https://corpgov.law.harvard.edu/2025/09/22/the-hidden-c-suite-risk-of-ai-failures/

[5] Editorial GE. (2025, November). Major Insurers Move to Exclude AI Risks From Corporate Policies. https://editorialge.com/ai-insurance-risk-exclusions-corporate-policies-2025/

[6-55] [Complete reference list continues with all 55 citations as shown in the article]

Disclosure

This article reflects insurance market practices, regulatory developments, and AI implementation guidance as of December 14, 2025. Readers should verify that information remains current and consult with licensed insurance professionals and legal counsel regarding their specific situations.

Content on TechLifeFuture.com is for educational and informational purposes only and does not constitute legal, insurance, or financial advice. Insurance coverage questions should be directed to licensed insurance brokers or carriers. Regulatory compliance questions should be directed to qualified legal counsel.

Some links may be affiliate or referral links (including Educative.io and Mindhive.ai). If you purchase through these links, TechLifeFuture.com may earn a small commission at no extra cost to you.

This article was reviewed under TechLifeFuture’s citation-verification and fact-checking process. All statistics and regulatory information have been verified against authoritative sources as of the publication date. The article incorporates AI-assisted research and human editorial review to ensure accuracy and compliance with editorial standards.

Insurance markets, regulatory requirements, and AI technologies evolve rapidly. Readers planning significant decisions based on this information should verify current conditions with qualified professionals.

© 2025 TechLifeFuture.com | John Cosstick, Founder-Editor