A Comprehensive Guide for Professional Services

Executive Summary

Artificial intelligence is now woven through the daily work of accountants, lawyers, financial planners, engineers, clinicians, architects, software developers, and consultants. The global professional landscape has shifted into an AI-assisted era where drafting, modeling, summarization, scenario analysis, and regulatory interpretation can be performed at unprecedented speed. Yet despite this acceleration, one principle remains unchanged: responsibility for professional work remains human.

Regulators worldwide consistently reaffirm that using AI tools does not diminish a professional’s legal obligations. FINRA’s January 2024 Annual Regulatory Oversight Report [2] emphasizes that AI tools do not reduce firms’ compliance obligations: firms remain fully responsible for all regulatory requirements regardless of the technology used. Through its September 2024 Operation AI Comply enforcement initiative, the U.S. Federal Trade Commission demonstrated that organizations cannot outsource legal liability to their AI tools. Similar positions appear across Europe, the United Kingdom, Australia, Singapore, and Canada. The law treats AI outputs as extensions of professional judgment, not substitutes for it.

Insurers are responding to this new environment with urgency. Major reinsurers, including Munich Re and Swiss Re [1], have identified aggregation risk, where many professionals using the same AI model could experience correlated failures, as a significant concern for underwriting. Swiss Re’s 2024 SONAR report [1] highlights that generative AI introduces new exposures across multiple lines of business, noting that errors can propagate invisibly because AI reasoning is opaque, and professionals may accept outputs that appear authoritative even when they are incorrect.

Courts and tribunals have begun reinforcing this reasoning. In February 2024, the British Columbia Civil Resolution Tribunal’s decision in Moffatt v. Air Canada, 2024 BCCRT 149 [7], held the airline responsible for inaccurate AI-generated information, setting an early benchmark: organizations cannot outsource blame to algorithms. The tribunal explicitly rejected Air Canada’s argument that its chatbot was a separate legal entity, stating that companies remain responsible for all information on their websites, whether from static pages or chatbots.

Section 1: Why AI Introduces a New Type of Professional Risk

AI introduces three major shifts in professional liability that fundamentally change how errors occur and how they must be managed:

1. High-Confidence Errors

AI systems generate content with confidence and fluency, even when incorrect. Professionals may accept faulty outputs without verification, particularly under time pressure or when facing cognitive fatigue. This elevates the likelihood of undetected errors entering client deliverables.

Unlike traditional tools that produce obviously incomplete or error-flagged outputs, AI presents polished, authoritative-seeming content that can bypass normal review processes.

2. Invisible Reasoning

Traditional professional errors typically arise from identifiable mistakes—incorrect formulas, missed steps, flawed assumptions. AI’s reasoning, however, is not transparent. Professionals cannot reconstruct how a model arrived at a specific answer without logs or review notes. This opacity makes post-event defensibility significantly harder.

When a claim arises, professionals must demonstrate they exercised reasonable care. Without documented reasoning trails showing how AI outputs were reviewed and verified, this becomes nearly impossible.

3. Correlated Failure Patterns

When many professionals rely on the same model or vendor, an underlying error in that model can trigger widespread, simultaneous advisory failures. This is the aggregation risk insurers monitor most closely.

Unlike traditional negligence claims that occur independently, AI-driven errors can affect thousands of clients simultaneously. For example, if a widely used AI model misinterprets a new tax regulation or superannuation rule, thousands of accountants might provide the same incorrect advice to their clients within days. This creates a scenario unprecedented in professional indemnity insurance: correlated losses that could overwhelm traditional actuarial models.
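The effect of correlation on a book of professional indemnity risks can be illustrated with a small calculation. The sketch below is not an actuarial model taken from any insurer. It assumes each firm is a simple yes/no error event with the same probability, and that every pair of firms shares the same correlation (an equicorrelation assumption). The function name, the 1,000-firm portfolio, the 1% error rate, and the 0.2 correlation are all hypothetical values chosen for illustration.

```python
import math


def portfolio_claim_std(n_firms: int, p_error: float, rho: float) -> float:
    """Standard deviation of the number of firms hit by an error.

    Each firm is modelled as a Bernoulli(p_error) event, and every pair of
    firms shares the same correlation rho (an illustrative assumption).
    Var(sum) = n * p * (1 - p) * (1 + (n - 1) * rho)
    """
    if not 0.0 <= p_error <= 1.0:
        raise ValueError("p_error must be a probability")
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must be between 0 and 1 in this sketch")
    variance = n_firms * p_error * (1 - p_error) * (1 + (n_firms - 1) * rho)
    return math.sqrt(variance)


# Hypothetical portfolio: 1,000 firms, each with a 1% chance of an error.
independent = portfolio_claim_std(1000, 0.01, 0.0)
shared_model = portfolio_claim_std(1000, 0.01, 0.2)
print(f"independent errors:  std = {independent:.1f} firms")
print(f"shared-model errors: std = {shared_model:.1f} firms")
```

With these assumed inputs the expected number of affected firms is the same in both cases, but the spread around it is roughly fourteen times wider when errors are correlated. That widening is the volatility that independent-claims pricing does not anticipate.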

Section 2: The Legal Position—AI Does Not Reduce Human Accountability

Across regulatory bodies and jurisdictions, the position is consistent: AI cannot be treated as a decision-maker. Professionals must review, correct, and contextualize all outputs before delivering them to clients. This principle has been established through regulatory guidance, enforcement actions, and early case law.

United States

Through its September 2024 Operation AI Comply enforcement initiative—which brought actions against five companies making deceptive AI claims—the FTC [3] demonstrated that organizations cannot outsource legal liability to their AI tools. Companies remain fully responsible for regulatory compliance, consumer protection, and truthful advertising regardless of whether decisions involve AI systems.

The enforcement actions targeted companies, including:

- DoNotPay: Falsely claimed its AI could replace lawyers

- Rytr: Provided tools to generate fake reviews

- Three business opportunity providers: Made unsubstantiated AI-powered income claims

FINRA’s January 2024 Annual Regulatory Oversight Report and Regulatory Notice 24-09 (June 2024) [2] emphasize that FINRA’s rules, which are intended to be technology-neutral, continue to apply when member firms use generative AI or similar technologies. The guidance makes clear that AI does not reduce compliance obligations and that firms must ensure oversight, testing, and documentation of all AI-assisted processes.

European Union

The EU AI Act [4] (Regulation (EU) 2024/1689), which entered into force on August 1, 2024, imposes strict obligations for high-risk AI systems. Article 14 establishes clear human oversight requirements, stating that:

“High-risk AI systems shall be designed and developed in such a way, including with appropriate human-machine interface tools, that they can be effectively overseen by natural persons during the period in which they are in use.”

The regulation requires:

- Mandatory logging

- Documented risk controls

- Human oversight mechanisms

- Post-market monitoring for high-risk AI applications

Article 14 further specifies that human oversight must enable natural persons to:

- Understand AI system capabilities and limitations

- Remain aware of automation bias

- Correctly interpret outputs

- Decide when not to use the system

- Intervene or interrupt operations when necessary

This represents one of the most explicit statutory definitions of human accountability for AI systems to date.

United Kingdom, Australia, Singapore, Canada

- UK Law Society [6]: AI does not replace legal judgment; solicitors remain responsible for work product

- ASIC [5] (Australia): AI-assisted financial advice must meet the same professional standards as human-generated advice

- MAS (Singapore): Emphasizes model governance and traceability requirements

- Canada (AIDA): Proposed Artificial Intelligence and Data Act includes transparency and accountability requirements

In other words, using AI without adequate supervision is treated much like delegating work to an unqualified human assistant and failing to check their work. Courts are likely to continue treating it this way.

The Moffatt v. Air Canada decision [7] exemplifies this principle: the tribunal rejected the argument that a chatbot operates as a separate legal entity and applied ordinary negligent misrepresentation principles regardless of the technology involved.

Section 3: Why This Article Exists

The convergence of regulation, insurance, and judicial reasoning demands a new operational model for safe, insurable, defensible AI use. Professionals need clear guidance on:

- How insurers are adapting PI coverage

- What governance controls will become mandatory

- How to prevent AI-related advisory failures

- How to prove human oversight when a dispute occurs

- What documentation is required for defensibility

- How to align with emerging global standards (NIST AI RMF [8], ISO/IEC 42001 [9], EU AI Act [4])

This article provides that model, synthesizing regulatory guidance, insurance requirements, and practical governance frameworks into actionable guidance for professional services firms.

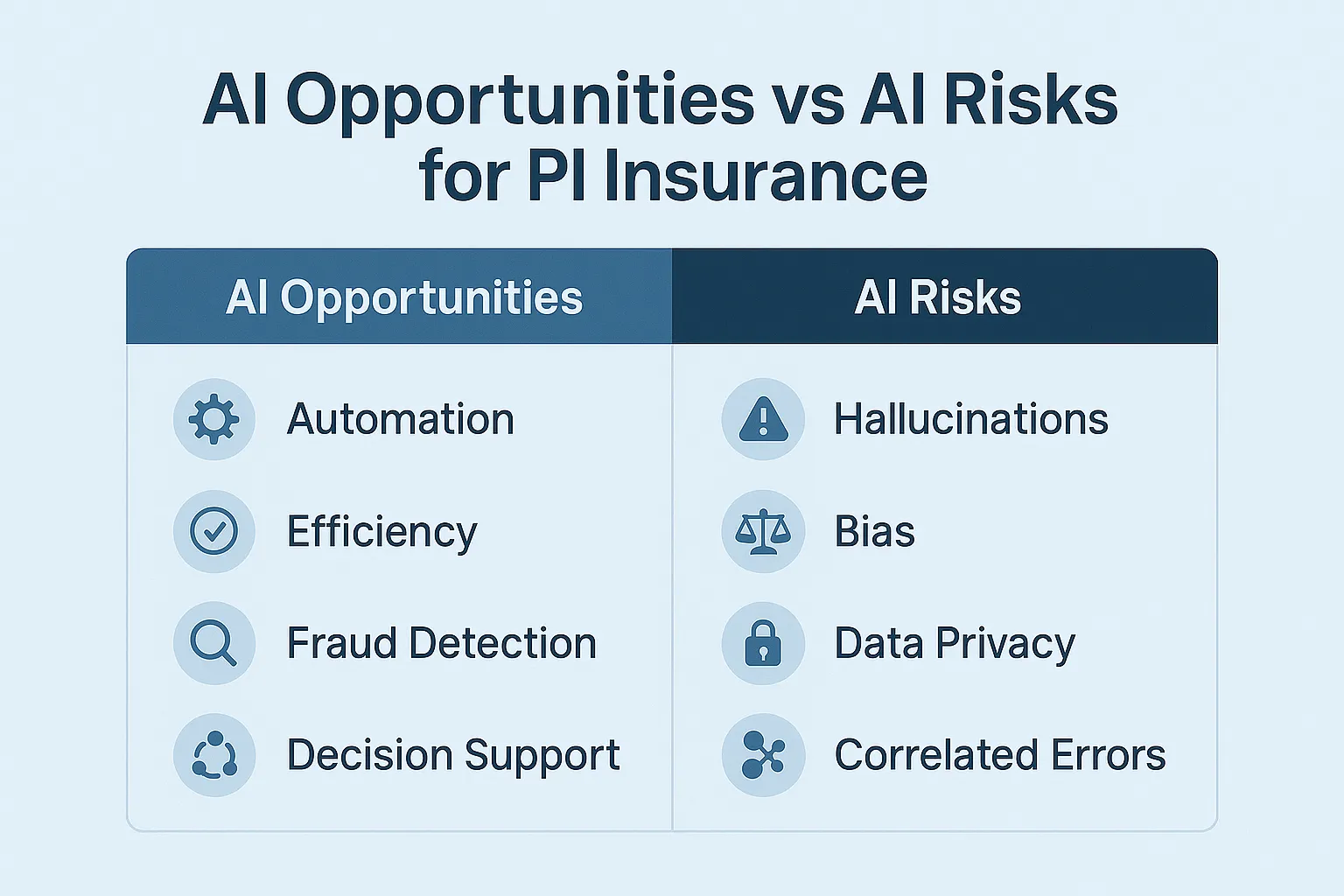

Section 4: How PI Insurers Are Reframing AI Risk

Professional indemnity insurers have moved into a phase of rapid reinterpretation of advisory risk, driven by three converging forces:

- The proliferation of AI-assisted workflows across regulated professions

- A sharp increase in model opacity and lack of audit trails

- Early legal precedents confirming that organizations—not AI vendors—retain responsibility

The underwriting community now treats AI use as a governance risk, not a technical risk. This subtle shift reshapes the entire economics of PI insurance. The question is no longer “What AI tools do you use?” but rather “How do you govern AI use to ensure professional accountability?”

Why Underwriters Are Concerned

Underwriters historically priced risk based on human behaviour—errors of judgment, lapses in process, or misinterpretation of obligations. AI introduces structural uncertainty that cannot be modeled using traditional actuarial methods:

- Opaque reasoning: Underwriters cannot model how AI generates outputs; no actuarial basis exists for pricing this uncertainty

- Correlated failures: A single model error can propagate to thousands of clients simultaneously

- Documentation gaps: Most AI tools lack native logging unless firms implement their own solution

- Vendor disclaimers: AI vendors universally push liability back onto the user

- Automation bias: Professionals may over-trust AI outputs, weakening human review discipline

Insurance market participants, including Lloyd’s of London syndicates, are increasingly treating AI deployment without adequate controls as a governance failure. Insurers are responding by introducing AI-specific endorsements, exclusions, and disclosure requirements in professional indemnity policies.

From Silent AI to Affirmative AI Coverage

Before 2023, PI policies were often “silent” on AI use—neither explicitly covering nor excluding AI-related claims. That era is ending rapidly. Insurers are now introducing policy terms requiring:

- Mandatory disclosure of AI use in professional workflows

- Named AI systems and vendors

- Documented review processes

- Evidence of human verification (HITL – Human-in-the-Loop)

- Logs or decision-provenance records

Reinsurers Are Driving the Hardening Market

Reinsurers such as Swiss Re [1] and Munich Re significantly influence primary insurer behaviour through the capacity they provide and the terms they require. Their concerns about AI risks include:

- Aggregation risk: Many professionals repeating the same AI-driven error simultaneously

- Systemic misinformation: Inaccurate or outdated regulatory interpretations spreading widely

- Hidden model drift: Model changes without notice, affecting output reliability

- Vendor lock-in and opacity: No ability to inspect or audit proprietary models

Swiss Re’s 2024 SONAR report [1] emphasizes that generative AI creates correlated advisory exposures and elevates the potential for what insurers term delta-risk events: small triggers with large financial consequences. These concerns have already shaped underwriting guidelines in London, Singapore, and Australia.

Section 5: Global Regulatory Convergence

Regulators worldwide are converging on a shared principle: AI does not diminish human responsibility and must be subject to documented oversight.

This convergence reflects a fundamental recognition that while AI technology may be novel, the principles of professional accountability are not. The duty of care, the requirement for competent oversight, and the expectation of defensible decision-making all remain constant regardless of the tools professionals use.

Key Regulatory Frameworks

EU AI Act [4] (2024/1689)

- Mandatory logging for high-risk systems

- Documented risk controls

- Human oversight requirements (Article 14)

- Post-market monitoring

NIST AI Risk Management Framework [8] (United States)

- Emphasizes transparency and traceability

- Supports auditability, accountability, and oversight

- Voluntary framework widely adopted as best practice

FINRA [2] (Financial Services – United States)

- Technology-neutral rules apply to AI

- Firms must ensure oversight, testing, and documentation

- AI does not reduce compliance obligations

FCA/Law Society [6] (United Kingdom)

- AI does not replace professional judgment

- Solicitors remain responsible for all work product

- Technology use does not diminish professional standards

ASIC [5] (Australia)

- AI-assisted advice must meet the same standards as human advice

- Focus on consumer protection outcomes

- Technology-agnostic regulatory approach

MAS (Singapore)

- Model governance requirements

- Traceability and explainability standards

- Risk management frameworks

AIDA (Canada – Proposed)

- Transparency requirements for automated systems

- Accountability frameworks

- Impact assessment obligations

Common Themes Across Jurisdictions

Across all jurisdictions, regulators share the same fundamental positions:

- Human accountability cannot be delegated to AI systems

- Documentation and traceability are essential

- Professional standards apply regardless of technology

- Supervision and oversight must be demonstrable

- Failures in AI governance constitute professional negligence

Section 6: Verifiable Human Oversight—The New Requirement

Insurers have moved beyond merely “allowing” AI in professional workflows. They now require documented evidence that human oversight took place, that decisions were reviewed, and that professional judgment was applied.

This requirement is driven by two structural realities:

- AI produces high-confidence errors that appear authoritative

- PI insurers cannot defend claims without clear evidence of the human reasoning that shaped the final advice

In the absence of such evidence, insurers may decline claims, classify failures as governance breaches, or—most significantly—refuse to renew PI coverage.

The Oversight Gap: Why Verification Matters

AI tools compress time but can also compress diligence. Professionals may rely on AI-generated summaries or calculations without reconstructing the underlying reasoning. This creates two exposures:

- Risk of undetected error: Professionals may accept plausible outputs without adequate scrutiny

- Lack of defensibility: Without logs or notes, firms cannot demonstrate they exercised reasonable care

This is why professional oversight is not just good practice—it is a PI insurance requirement.

Automation Bias: The Silent Driver of AI Negligence

One of the most dangerous behavioural patterns identified by reinsurers is automation bias—the innate tendency for humans to trust automated systems, particularly when those systems perform well most of the time.

Professionals under time pressure or cognitive fatigue may accept AI outputs without questioning them. Insurers are concerned not only about technical failure modes, but behavioural ones. The combination of polished AI output and human tendency to defer to apparent authority creates a perfect storm for undetected errors.

Confidentiality Risks in Legal and Clinical Professions

Regulators and insurers have flagged a critical liability risk: professionals sometimes paste client information, medical notes, or legal materials into public LLMs, breaching confidentiality, privilege, or statutory privacy obligations.

Even when the AI output is correct, the act of disclosure itself can trigger a PI claim.

Courts and regulators view this as an unambiguous professional failure because the confidentiality obligation is absolute, not outcome-based. The duty to protect client confidentiality exists independently of whether any harm results from the breach.

Section 7: Governance Frameworks for Insurable AI Use

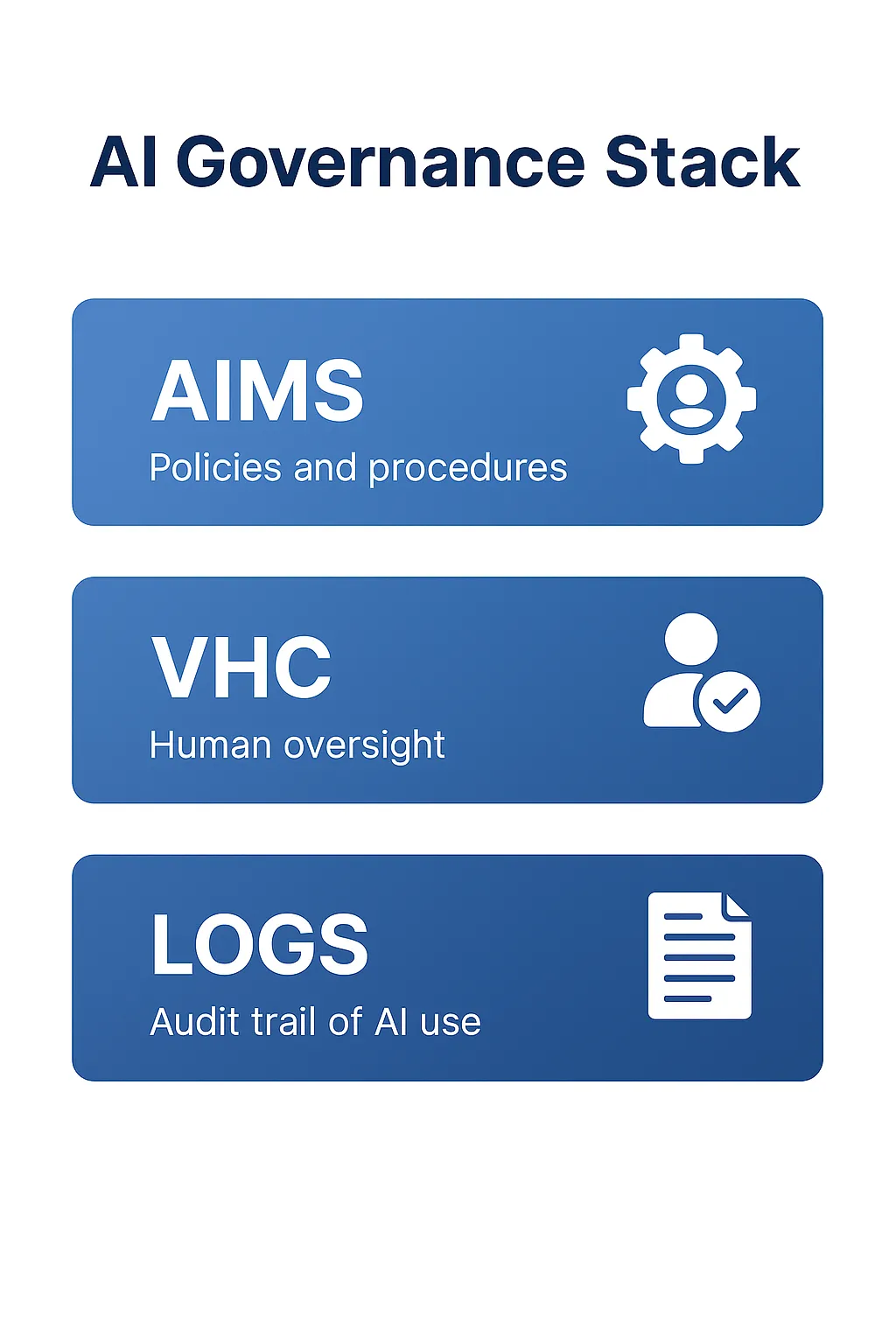

To address the convergent requirements from regulators and insurers, professionals need structured governance frameworks. Two complementary approaches are emerging:

Professional-Level Governance: Verifiable Human Contribution (VHC)

Author’s Note: The VHC framework described here was developed by the author as part of ongoing research into practical AI governance for professional services. VHC is patent-pending and represents one approach among several emerging governance methodologies. While VHC is designed to align with ISO/IEC 42001 [9] and NIST AI RMF [8] requirements, professionals should evaluate governance frameworks based on their specific regulatory obligations and risk profile.

VHC is a governance method designed to operationalize professional accountability in AI-assisted work. VHC provides a structured mechanism for:

- Documenting human review

- Capturing the professional’s reasoning

- Recording corrections applied to AI content

- Building a defensible audit trail

- Demonstrating compliance during disputes

Its value lies in its simplicity: it makes oversight visible.

How VHC Works in Practice

VHC requires professionals to produce five elements for AI-assisted work:

- Prompt log: Record of what was asked of the AI system

- Correction log: Documentation of errors identified and corrected

- Reasoning note: Professional’s analysis and judgment applied

- Interpretation statement: How outputs were verified and contextualized

- Client-safe explanation: Plain language summary suitable for client understanding

These elements map directly to PI insurer evidentiary requirements and provide the documentation needed to defend professional decisions.
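One way to picture the five elements is as a single structured record per deliverable. The sketch below is illustrative only and is not the author's VHC implementation. The class name `VHCRecord`, the field names, and the `missing_elements` helper are hypothetical, chosen to mirror the five elements listed above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VHCRecord:
    """One AI-assisted deliverable, documented with the five elements."""
    prompt_log: str
    correction_log: str
    reasoning_note: str
    interpretation_statement: str
    client_safe_explanation: str


def missing_elements(record: VHCRecord) -> list[str]:
    """Return the names of any elements left blank."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


# Hypothetical record with one element not yet completed.
record = VHCRecord(
    prompt_log="Asked the model to draft a retirement income analysis.",
    correction_log="Replaced an outdated superannuation cap with the current figure.",
    reasoning_note="Client plans to retire early, so drawdown was modelled over a longer horizon.",
    interpretation_statement="",
    client_safe_explanation="Plain-language summary of the projection and its limits.",
)
print(missing_elements(record))  # ['interpretation_statement']
```

A completeness check of this kind can run before a file is closed, so that gaps in the documentation are found at the time of the work and not during a dispute.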

Practical Example: Financial Planner’s AI Workflow

- Use AI to draft an initial retirement income analysis

- Document the prompts used (prompt log)

- Review AI output for errors in current legislation

- Correct any outdated superannuation caps (correction log)

- Add professional reasoning about the client’s specific circumstances (reasoning note)

- Document why the AI output was or wasn’t appropriate (interpretation statement)

- Provide an explanation to the client in plain language (client-safe explanation)

These documentation steps provide defensibility if the advice is later disputed.

Organizational-Level Governance: AI Management Systems (AIMS)

AIMS refers to a structured organizational system for managing AI safely and in compliance with regulatory and insurance expectations. AIMS aligns with the requirements of ISO/IEC 42001 [9] and the NIST AI RMF [8].

AIMS typically includes:

- AI use policies: Clear guidelines on approved and prohibited uses

- Access rights and permission tiers: Controls on who can use which AI systems

- Risk-classification systems: Assessment of AI applications by risk level

- Model selection and third-party vendor checks: Due diligence on AI providers

- Decision provenance logging: Tracking how AI was used in decisions

- Incident reporting: Mechanisms to identify and escalate AI failures

- Post-market monitoring: Ongoing assessment of AI system performance

- Documentation retention: Records management for compliance and defensibility
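Several of these components (use policies, permission tiers, and risk classification) can be combined into a simple deny-by-default check. The following is a minimal sketch under stated assumptions, not a reference implementation of ISO/IEC 42001 or any insurer's requirement. The tool names, the three-level `RiskTier`, and the `REGISTER` contents are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1       # e.g. internal drafting aids
    MEDIUM = 2    # e.g. client-facing summaries
    HIGH = 3      # e.g. advice or safety-critical output


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    max_tier: RiskTier            # highest-risk task the tool is cleared for
    client_data_allowed: bool     # may client information be entered?


# Hypothetical register; a real firm would maintain its own.
REGISTER = {
    "internal-assistant": ApprovedTool("internal-assistant", RiskTier.HIGH, True),
    "public-chatbot": ApprovedTool("public-chatbot", RiskTier.LOW, False),
}


def is_permitted(tool: str, task_tier: RiskTier, uses_client_data: bool) -> bool:
    """Apply the use policy: unknown tools are denied by default."""
    entry = REGISTER.get(tool)
    if entry is None:
        return False
    if uses_client_data and not entry.client_data_allowed:
        return False
    return task_tier <= entry.max_tier


print(is_permitted("internal-assistant", RiskTier.HIGH, True))   # True
print(is_permitted("public-chatbot", RiskTier.LOW, True))        # False
print(is_permitted("unlisted-tool", RiskTier.LOW, False))        # False
```

Denying unlisted tools by default reflects the disclosure expectation described in this article: a tool the firm has not assessed is a tool it cannot name to its insurer.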

Vendor Dependency & Liability Gaps

Insurers consistently highlight that most AI vendors disclaim liability entirely. Professionals must treat vendor systems as tools without warranty. Logs and oversight evidence allow insurers to determine whether the professional or the vendor contributed to an error—and whether subrogation is possible.

Subrogation: Why Insurers Need Logs

A new requirement in PI underwriting frameworks is subrogation capability. Insurers need logs to determine whether an AI vendor contributed to the error so they can pursue that vendor after paying the claim. Without logs, no attribution is possible, and insurers bear the entire loss.

This creates a direct financial incentive for insurers to require—and potentially refuse coverage without—comprehensive logging and oversight documentation.

Section 8: The Expanded Duty of Care

Courts and insurers now expect the duty of care to include:

- Verifying AI-generated content before use

- Maintaining logs of human reasoning and review

- Documenting assumptions and corrections applied

- Ensuring client-facing work remains human-interpreted

- Providing defensible evidence in disputes

- Demonstrating competent oversight of AI systems

This shift from “Did you act reasonably?” to “Can you prove it?” defines the new era of professional liability.

The burden of proof has effectively shifted. Where previously professionals could demonstrate reasonable care through testimony and reconstruction of their thought process, the opacity of AI systems means contemporaneous documentation is now essential.

Without logs showing what the AI recommended, what the professional changed, and why those changes were made, proving reasonable care becomes nearly impossible.

Section 9: Sector-Specific Risk Profiles

AI affects each professional sector differently because the underlying liabilities differ.

Insurers analyse AI risk sector by sector, using five underwriting questions:

- Does the profession rely on precise regulatory interpretation?

- Does the profession handle confidential or privileged information?

- Does the profession produce safety-critical outputs?

- Does the profession carry a duty to warn or disclose, or fiduciary obligations?

- Would an AI error produce individual losses or aggregated systemic losses?

Accounting & Financial Advisory (Highest AI-Exposure Sector)

Accountants and financial planners sit at the top of the AI-risk hierarchy for three reasons:

Temporal Latency Risk: AI models are trained on historical data, making them poorly suited to dynamic regulatory environments. Taxation, superannuation, retirement income policy, lending serviceability, and capital gains rules change frequently. AI may deliver outdated calculations, obsolete thresholds, superseded legislation, incorrect concessional cap interpretations, or invalid lending serviceability estimates.

Aggregation Risk: If thousands of accountants rely on the same model, and that model misinterprets a new tax rule or superannuation change, the same error may propagate to thousands of clients simultaneously. Insurers consider this risk existential.

Misinterpretation of Lending & Serviceability: AI models may incorrectly assess a client’s borrowing capacity if underlying data structures are outdated or incompatible with lender policy.

Legal Sector (Confidentiality + Interpretation Risk)

Lawyers face two dominant AI exposures:

Confidentiality & Privilege Breaches: Pasting client briefs, medical histories, discovery documents, or draft affidavits into public LLMs can breach legal privilege, confidentiality obligations, privacy law, and court orders. Even if the AI output is correct, disclosure itself may constitute negligence.

Incorrect Legal Interpretations: AI-generated case summaries or statutory interpretations may be fabricated, outdated, misapplied, or missing jurisdictional nuance. Courts have already sanctioned lawyers for citing AI-generated cases that do not exist.

Clinical Professions (Diagnostic & Privacy Exposure)

Clinicians face data handling and privacy risks, diagnostic errors, inappropriate generalizations, and fabricated correlations. When used without documented oversight, AI clinical errors trigger high-severity PI exposures. Insurers classify clinical AI as “specialist risk” with strict requirements for human verification.

Engineering & Architecture (Spec-Drift and Safety Risks)

Spec-Drift refers to the misalignment between required tolerances and AI-generated design specifications. Spec-Drift occurs when AI omits mandatory load factors, misstates safety tolerances, omits regulatory clauses, misinterprets engineering standards, or fabricates compliance statements.

These errors can have immediate physical and financial consequences. Safety errors are high-severity, high-burn claims.

IT, Cybersecurity & Software Development (E&O Exposure)

AI introduces three dominant exposures in IT:

AI-Generated Vulnerabilities: AI may suggest code that appears correct but contains logic flaws, uninitialized variables, unsafe dependencies, race conditions, or unpatched legacy calls. These vulnerabilities may create security failures that trigger E&O claims.

Copyright & IP Exposure: AI-generated code or documentation may infringe on third-party IP without the developer’s knowledge.

Vendor Model Drift: When vendors unilaterally update models, behaviour changes unpredictably. IT firms must implement version control, input/output testing, and governance logs.
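Input/output testing for model drift can be as simple as replaying a fixed set of prompts and comparing the responses with a stored baseline. The sketch below assumes deterministic model output, which real vendor models may not guarantee, and it uses stand-in functions (`model_v1`, `model_v2`) in place of a vendor API. All names are hypothetical.

```python
import hashlib
from typing import Callable


def fingerprint(text: str) -> str:
    """Stable SHA-256 fingerprint of a model response."""
    return hashlib.sha256(text.strip().encode("utf-8")).hexdigest()


def detect_drift(
    model: Callable[[str], str],
    baseline: dict[str, str],
) -> list[str]:
    """Return the prompts whose current output no longer matches the baseline."""
    return [
        prompt
        for prompt, expected in baseline.items()
        if fingerprint(model(prompt)) != expected
    ]


# Stand-in models for illustration; a real check would call the vendor's API.
def model_v1(prompt: str) -> str:
    return f"answer to: {prompt}"


def model_v2(prompt: str) -> str:
    return f"answer to: {prompt}" if "cap" not in prompt else "changed answer"


prompts = ["What is the contribution cap?", "Summarise clause 4."]
baseline = {p: fingerprint(model_v1(p)) for p in prompts}

print(detect_drift(model_v1, baseline))  # []
print(detect_drift(model_v2, baseline))  # ['What is the contribution cap?']
```

Where outputs vary from run to run, exact matching would need to be replaced with a tolerance-based comparison or human review of the differences. The result of each check belongs in the governance log alongside the model version in use.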

Section 10: PI Insurer Requirements 2025-2030

Professional indemnity insurance is undergoing its largest structural shift in two decades. Insurers are moving from a “passive” stance on AI exposure to an “active governance” stance, imposing measurable requirements on firms that use AI in regulated or advisory tasks.

The next five years will define which firms remain insurable.

Mandatory AI Disclosure

Insurers increasingly require firms to disclose:

- Which AI tools are used

- In which workflows

- For which tasks

- With which oversight model

- With what logging and documentation

- Whether client information is processed

Non-disclosure may result in denial of coverage or rescission of a policy.

Logging, Traceability & Decision Provenance

PI insurers must be able to determine how errors occurred. This means firms must maintain:

- Prompt logs

- Correction logs

- Version logs

- Reasoning statements

- Model-change documentation

Without logs, two core exposures are amplified:

- Claims cannot be defended effectively

- Insurers cannot subrogate against AI vendors
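A log is more persuasive as evidence if later edits to it can be detected. One common technique is hash chaining, where each entry's hash covers its own content and the hash of the previous entry. The sketch below illustrates the idea only; it is not a requirement drawn from any insurer's policy wording. The entry fields are hypothetical, and timestamps and user identities are omitted to keep the example short.

```python
import hashlib
import json


def append_entry(log: list[dict], kind: str, detail: str) -> None:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {"kind": kind, "detail": detail, "prev_hash": prev_hash}
    digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()
    log.append({**body, "hash": digest})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: entry[k] for k in ("kind", "detail", "prev_hash")}
        digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()
        if entry["prev_hash"] != prev_hash or entry["hash"] != digest:
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "prompt", "Draft a summary of the new lending rules.")
append_entry(log, "correction", "Fixed an outdated serviceability buffer.")
append_entry(log, "reasoning", "Applied the client's variable income history.")
print(verify(log))   # True

# Rewriting an earlier entry after the fact is detected.
log[1]["detail"] = "No corrections needed."
print(verify(log))   # False
```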

Human-in-the-Loop (HITL) Verification

Insurers are now embedding HITL requirements directly into policy language. HITL means:

- Professionals must review all AI-generated output

- Corrections must be documented

- Assumptions must be recorded

- Final decisions must be identifiable as human

The absence of verifiable oversight is treated as a failure of professional standards.
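In a document workflow, this can be enforced as a release gate: AI-assisted work cannot leave the firm until a named professional has signed it off. The sketch below is a minimal illustration with hypothetical names (`Deliverable`, `ReviewRequired`, `release`). It checks only that a reviewer is recorded; the correction and assumption lists are carried as records and are not validated here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Deliverable:
    title: str
    ai_generated: bool
    reviewer: Optional[str] = None                          # professional who signed off
    corrections: list[str] = field(default_factory=list)   # documented corrections
    assumptions: list[str] = field(default_factory=list)   # recorded assumptions


class ReviewRequired(Exception):
    """Raised when AI-assisted work is released without documented review."""


def release(item: Deliverable) -> str:
    """Allow release only when the final decision is identifiable as human."""
    if item.ai_generated and not item.reviewer:
        raise ReviewRequired(f"'{item.title}' has no named human reviewer")
    signer = item.reviewer or "author"
    return f"{item.title}: released, signed off by {signer}"


draft = Deliverable("Tax memo", ai_generated=True)
try:
    release(draft)
except ReviewRequired as err:
    print(err)  # 'Tax memo' has no named human reviewer

draft.reviewer = "A. Planner"
draft.corrections.append("Updated threshold to current legislation.")
print(release(draft))  # Tax memo: released, signed off by A. Planner
```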

Client Disclosure of AI Use

Emerging doctrine: clients have a right to know when automated tools influence professional advice. Insurers consider client disclosure a governance safeguard.

Client disclosure typically includes:

- What AI systems were used

- The professional’s role in reviewing and verifying outputs

- Limitations of AI tools

- Confirmation that human judgment was applied

Failure to disclose may be treated as misleading conduct.

Exclusions & Endorsements

The PI market is moving rapidly towards explicit AI exclusions unless governance controls are demonstrated. Examples:

- Exclusion for uncontrolled generative AI use

- Exclusion for public LLM use without safeguards

- Exclusion for advice produced without supervision

- Exclusion for undisclosed reliance on automated tools

- Endorsement requiring logs for all AI-influenced deliverables

Insurers no longer accept opaque workflows.

Hardening Market: Renewal Risk for 2026-2030

Insurers are openly signaling that firms without AI governance may face:

- Denial of renewal

- Large excess increases

- Significant premium increases

- Mandatory governance audits

- Compulsory training requirements

The underwriting trend is unmistakable: firms must operate AI systems like safety-critical processes.

Section 11: The Future—AI Governance as a Condition of Professional Practice

By 2030, insurers expect three governance layers to be standard:

Professional Level (VHC or Equivalent)

- Human review documentation

- Correction logging

- Reasoning notes

- Client disclosure

Organizational Level (AIMS)

- Policies and procedures

- Classification systems

- Vendor due diligence

- Logs and access controls

- Monitoring and incident reporting

Regulatory Level (NIST AI RMF [8], ISO/IEC 42001 [9], EU AI Act [4])

- Formal frameworks applied to AI lifecycle management

- Compliance with jurisdiction-specific requirements

- Alignment with international standards

Firms that cannot demonstrate all three layers will increasingly be classified as “uninsurable” in high-risk professions.

Frequently Asked Questions

Q1. Does using AI reduce my professional liability?

No. Regulators and legislation globally (FINRA [2], FTC [3], EU AI Act [4], UK Law Society [6], ASIC [5]) consistently affirm that AI does not diminish human accountability. Professionals remain responsible for all advice, deliverables, or decisions influenced by AI.

Q2. Can insurers deny a claim if AI was used?

Yes. If AI use was undisclosed, unlogged, or unsupervised, insurers may deny coverage, classify the event as a governance failure, or treat the claim as an excluded automated-advice error.

Q3. What documentation do insurers expect?

At minimum: prompt logs, correction logs, reasoning statements, version notes, and evidence of oversight. Without logs, claims defensibility collapses.

Q4. Do AI vendors carry liability?

Generally no. Almost all major AI vendors include disclaimers shifting risk to the user. This makes professional oversight and documentation even more critical.

Q5. Is client disclosure required?

Increasingly yes. Clients have a right to know when automated systems influence professional work. Failure to disclose may constitute misleading conduct.

Q6. Will AI increase PI premiums?

For firms without governance, yes. For firms with strong governance frameworks, premiums may stabilize or decrease relative to peers.

Q7. Can firms become uninsurable?

Yes. High-risk professions (accounting, law, clinical, engineering) may become uninsurable if they cannot demonstrate oversight, audit trails, and governance.

Q8. What is the simplest way to remain insurable?

Apply the three-layer governance stack: (1) Professional-level oversight documentation, (2) Organizational AI management system, (3) Alignment with regulatory frameworks (NIST AI RMF [8], ISO/IEC 42001 [9], EU AI Act [4]).

Conclusion

AI does not change the duty of care; it changes how the duty of care must be demonstrated.

The core shift is from: “Did you act reasonably?” to “Can you prove it?”

Professionals who implement structured oversight methods, firms that implement comprehensive AI management systems, and organizations that align with ISO/IEC 42001 [9], the NIST AI RMF [8], and the EU AI Act [4] will enter the next decade with structural advantages. They will be more efficient, more trustworthy, and more insurable.

Those who fail to adapt may find themselves priced out of the insurance market—or unable to obtain coverage at all.

The convergence of regulatory requirements, insurance underwriting standards, and judicial precedent creates both urgency and opportunity. The firms that act now to establish robust AI governance will gain a competitive advantage, lower insurance costs, and greater client trust.

The question is no longer whether AI will be part of professional practice—it already is. The question is whether professionals will govern AI use in ways that maintain insurability, regulatory compliance, and client protection.

The answer to that question will determine which firms thrive in the AI-assisted professional services landscape of the next decade.

About the Author & Disclosures

John Cosstick is Founder-Editor of TechLifeFuture.com and winner of the 2024 BOLD Award for Open Innovation in Digital Industries. He is a former banker, accountant, and certified financial planner.

He is now a freelance journalist and author. John is a member of the Media Entertainment and Arts Alliance (Union).

Transparency and Disclosures:

This article may contain affiliate links for Mindhive.ai, where John Cosstick is a Partner and minor shareholder. If you purchase a product or service through a link in this article, TechLifeFuture.com may receive a commission at no additional cost to you.

The author developed the VHC (Verifiable Human Contribution) framework described in this article, which is patent-pending. While VHC is designed to align with ISO/IEC 42001 [9] and NIST AI RMF [8] requirements, professionals should evaluate governance frameworks based on their specific regulatory obligations and risk profile.

All analyses provided are for informational and educational purposes only and do not constitute legal, financial, or professional advice. Readers should consult qualified professionals before acting on any information contained in this article.

Reader Resources

For Professionals:

- Learn AI governance skills

- Explore AI governance frameworks at TechLifeFuture.com

For Firms and Leaders:

- Begin implementing AI management systems aligned with ISO/IEC 42001 [9]

- Train teams on prompt logging, reasoning documentation, and HITL workflows

- Prepare for evolving insurer requirements and regulatory obligations

For Policy and Regulators:

- Integrate ISO/IEC 42001 [9] and the NIST AI RMF [8] into national guidance frameworks

- Encourage public-private co-regulation models for AI governance

- Support professional bodies developing oversight standards

This article synthesizes regulatory guidance, insurance industry developments, and emerging case law to provide actionable guidance for professional services firms navigating AI adoption. All factual claims have been verified against primary sources, including regulatory publications, official tribunal decisions, and published industry reports.

References

[1] Swiss Re Institute, SONAR Report 2024.

[2] FINRA, 2024 Annual Regulatory Oversight Report; Regulatory Notice 24-09 (June 2024).

[3] Federal Trade Commission, Operation AI Comply, 2024.

[4] EU Artificial Intelligence Act, Regulation (EU) 2024/1689, Article 14.

[5] ASIC, AI Guidance for Financial Services, 2024.

[6] Law Society UK, Generative AI Guidance, 2023.

[7] Moffatt v. Air Canada, 2024 BCCRT 149.

[8] NIST AI Risk Management Framework, 2023–2024.

[9] ISO/IEC 42001 Artificial Intelligence Management Systems, 2023.