The professional indemnity market has bifurcated. Following the introduction of formal AI exclusion endorsements — including the Insurance Services Office Generative AI Exclusion (CG 40 47 01 26) and carrier-specific endorsements such as W. R. Berkley Corporation form PC 51380 00 06-24 — insurers now distinguish between firms that claim to govern AI and firms that can prove it.

The solution is not a policy statement. It is a governance artifact: a contemporaneously created, timestamped record of meaningful human oversight.

This article presents the forensic architecture for restoring insurability: the Verifiable Human Contribution (VHC) framework (patent-pending AU 2025220863; PCT/IB2025/058808), the Governance Artifact taxonomy, and the Proof Before Scale™ decision gate.

The Evidence Problem: Why Intent Is Not Enough

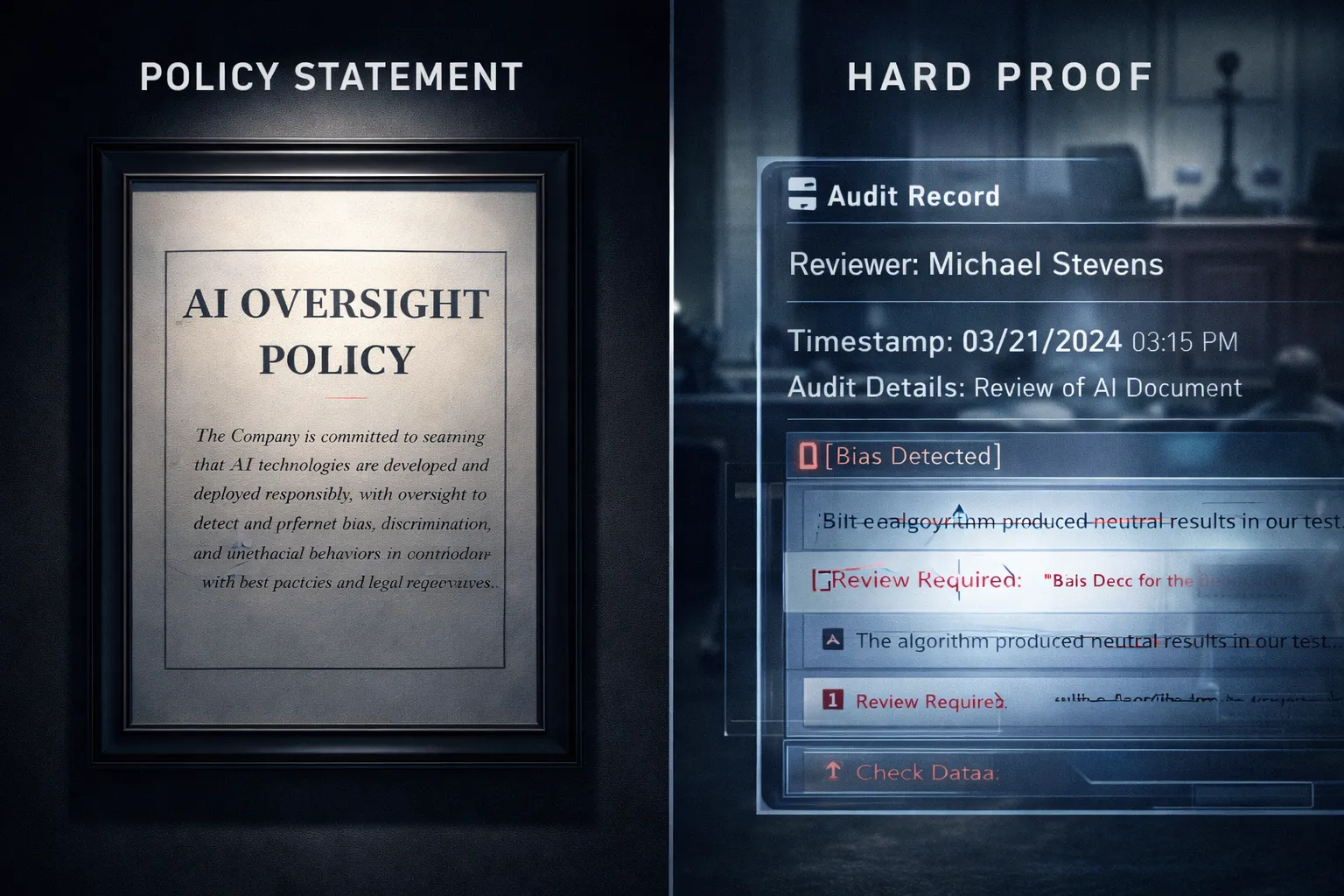

1.1 Policy vs Proof

A governance policy expresses intent. A governance artifact provides evidence.

Underwriters increasingly reference formal exclusions such as:

- ISO Generative AI Exclusion CG 40 47 01 26

- Berkley Absolute AI Exclusion PC 51380 00 06-24

These instruments shift the burden to the insured to demonstrate compliance with human oversight warranties.

A statement that “AI outputs are reviewed” does not satisfy this evidentiary burden.

A timestamped log identifying the reviewing professional, the output reviewed, and the substantive changes made does.

1.2 The Retroactive Reconstruction Problem

Evidence created after a claim is weaker than evidence created at the time of review.

This is not theoretical. It is a recurring governance failure — described in Chapter 13 of The Governance Artifact System as Sin #3: Retroactive Documentation, one of the Seven Deadly Sins that void coverage.

When a claim arises and a firm cannot produce a contemporaneous artifact, the human oversight warranty may be breached.

Insurers do not insure recollections. They insure documented processes.
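One way to make the contemporaneity requirement checkable is to compare when a review happened with when its record was written. This is a minimal sketch, assuming each record carries two timezone-aware timestamps. The field names (`reviewed_at`, `recorded_at`), the helper functions and the 24-hour window are hypothetical choices for illustration. They are not taken from any policy wording or from the VHC specification.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tolerance: how long after a review its record may be written
# and still be treated as contemporaneous. Not drawn from any standard or policy.
CONTEMPORANEOUS_WINDOW = timedelta(hours=24)


def is_contemporaneous(reviewed_at: datetime, recorded_at: datetime,
                       window: timedelta = CONTEMPORANEOUS_WINDOW) -> bool:
    """True if the record was written no earlier than the review and within `window` of it."""
    if reviewed_at.tzinfo is None or recorded_at.tzinfo is None:
        raise ValueError("timestamps must be timezone-aware")
    delay = recorded_at - reviewed_at
    return timedelta(0) <= delay <= window


def flag_retroactive(records: list[dict], claim_notified_at: datetime) -> list[str]:
    """IDs of records that look retroactive: written outside the window or after the claim."""
    flagged = []
    for record in records:
        late = not is_contemporaneous(record["reviewed_at"], record["recorded_at"])
        post_claim = record["recorded_at"] >= claim_notified_at
        if late or post_claim:
            flagged.append(record["id"])
    return flagged


utc = timezone.utc
claim = datetime(2026, 2, 1, tzinfo=utc)
records = [
    {"id": "A1", "reviewed_at": datetime(2025, 11, 3, 9, 0, tzinfo=utc),
     "recorded_at": datetime(2025, 11, 3, 9, 20, tzinfo=utc)},
    {"id": "A2", "reviewed_at": datetime(2025, 11, 3, 9, 0, tzinfo=utc),
     "recorded_at": datetime(2026, 2, 5, 14, 0, tzinfo=utc)},
]
print(flag_retroactive(records, claim))  # ['A2']
```

A check like this is only as reliable as its timestamps. In practice `recorded_at` would need to come from a system clock the reviewer cannot edit.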

1.3 Automation Bias: Why Oversight Fails Without Structure

Automation bias is the cognitive tendency to over-rely on automated systems and their outputs.

Foundational research:

Mosier & Skitka (1996), APA PsycNet record:

https://psycnet.apa.org/record/1996-01753-001

(Abstract accessible; full text may require institutional access.)

Goddard et al. (2012), Automation bias in electronic prescribing (open access):

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3505618/

These studies demonstrate that trained professionals defer to automated outputs even when errors are present.

Underwriters understand this phenomenon. That is why structured oversight — not informal review — is now required.

The Verifiable Human Contribution (VHC) Framework

Mandatory IP Disclosure:

The author developed the Verifiable Human Contribution (VHC) framework described in this article, which is patent-pending (AU 2025220863; PCT/IB2025/058808).

2.1 Core Architecture

A valid VHC artifact contains:

- Identity — Named reviewer and credentials

- Specificity — Output reviewed, timestamp, version reference

- Contribution — Documented substantive intervention

A simple audit log records that a review occurred. A VHC artifact records what the human contributed.
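The three components can be pictured as fields on a single record. This is a minimal sketch using a Python dataclass. The class name `VHCArtifact`, its field names and the `missing_fields` completeness check are hypothetical and are not taken from the patent filing. The `edit_distance` field anticipates the edit-delta measure discussed in 2.2.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class VHCArtifact:
    # Identity: who reviewed the output
    reviewer_name: str
    reviewer_credentials: str
    # Specificity: what was reviewed, which version, and when (ISO 8601, UTC)
    output_id: str
    output_version: str
    reviewed_at: str
    # Contribution: what the human changed, in words and as an edit distance
    contribution_summary: str
    edit_distance: int

    def missing_fields(self) -> list[str]:
        """Names of blank text fields; a record with gaps is not a complete artifact."""
        return [name for name, value in asdict(self).items()
                if isinstance(value, str) and not value.strip()]

    def to_json(self) -> str:
        """Serialise with sorted keys so identical records produce identical text."""
        return json.dumps(asdict(self), sort_keys=True)


artifact = VHCArtifact(
    reviewer_name="J. Smith",
    reviewer_credentials="CPA",
    output_id="advice-letter-0421",
    output_version="draft-3",
    reviewed_at=datetime(2025, 11, 3, 9, 0, tzinfo=timezone.utc).isoformat(),
    contribution_summary="Corrected depreciation treatment; removed an unsupported claim.",
    edit_distance=214,
)
print(artifact.missing_fields())  # []
```

The contrast with a simple audit log shows up in the fields. An audit log would typically stop at the reviewer and the timestamp, whereas this record reports itself incomplete if the contribution is left blank.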

2.2 Levenshtein Edit Delta — Technical Differentiation

The framework applies Levenshtein edit distance analysis to distinguish cosmetic edits from substantive contributions.

Authoritative source: Levenshtein, V. I. (1966). Binary codes capable of correcting deletions, insertions, and reversals. https://ieeexplore.ieee.org/document/1053908

This method measures the minimum number of single-character edits (insertions, deletions, or substitutions) required to transform one text string into another.
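To show how the measure itself is computed, here is the standard dynamic-programming (Wagner-Fischer) calculation of Levenshtein distance. It is a textbook implementation offered for illustration, not the VHC framework's own code.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions
    needed to turn `a` into `b`, computed row by row."""
    if len(a) < len(b):
        a, b = b, a  # distance is symmetric; keep the rolling row short
    previous = list(range(len(b) + 1))  # distances from the empty prefix of `a`
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # delete ca
                current[j - 1] + 1,            # insert cb
                previous[j - 1] + (ca != cb),  # substitute (free if characters match)
            ))
        previous = current
    return previous[-1]


print(levenshtein("kitten", "sitting"))  # 3
```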

Within VHC architecture, edit delta analysis is applied to:

- Detect substantial textual divergence that may signal a change in meaning

- Evidence material professional judgment

- Differentiate passive approval from active review

This is a forensic distinction — not stylistic.
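The article does not publish how VHC normalises text or where it draws the line between cosmetic and substantive edits. This is a minimal sketch under assumed rules. Case and whitespace differences are treated as cosmetic and stripped before comparison. The Levenshtein distance is then divided by the longer text's length to give a delta between 0 and 1. The two thresholds (`COSMETIC_MAX`, `SUBSTANTIVE_MIN`) and the function names are hypothetical, chosen only for illustration.

```python
import re
from typing import Sequence

# Hypothetical thresholds on the normalised delta (0.0 = identical, 1.0 = fully rewritten).
# Illustrative only; these are not values published for the VHC framework.
COSMETIC_MAX = 0.02
SUBSTANTIVE_MIN = 0.10


def edit_distance(a: Sequence, b: Sequence) -> int:
    """Levenshtein distance over any two sequences (characters of strings here)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        cur = [i]
        for j, y in enumerate(b, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (x != y)))
        prev = cur
    return prev[-1]


def normalise(text: str) -> str:
    """Remove differences this sketch treats as cosmetic: letter case and runs of whitespace."""
    return re.sub(r"\s+", " ", text).strip().lower()


def classify_review(ai_draft: str, final_text: str) -> tuple[str, float]:
    """Label a review 'cosmetic', 'minor' or 'substantive' by its normalised edit delta."""
    a, b = normalise(ai_draft), normalise(final_text)
    delta = edit_distance(a, b) / (max(len(a), len(b)) or 1)
    if delta <= COSMETIC_MAX:
        label = "cosmetic"     # consistent with passive approval
    elif delta < SUBSTANTIVE_MIN:
        label = "minor"        # ambiguous: ask the reviewer for a rationale
    else:
        label = "substantive"  # consistent with active review
    return label, round(delta, 3)


draft = "The client should claim the full deduction this year."
final = "The client should NOT claim the deduction this year; spread it over five years."
print(classify_review(draft, final)[0])  # substantive
```

Character counts are only a proxy for judgment. Inserting the single word "not" can reverse a recommendation while scoring a low delta. That is why this sketch routes the middle band to a human rationale rather than treating a low score as proof that no review took place.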

2.3 Alignment with International Standards

ISO/IEC 42001:2023

Official standard overview: https://www.iso.org/standard/81230.html

Relevant clauses:

- 6.1 Actions to address risks and opportunities (including AI risk assessment)

- 8.4 AI system impact assessment

- 9.1 Monitoring, measurement, analysis and evaluation

VHC artifacts can provide documented evidence supporting conformity with these clauses.

NIST AI Risk Management Framework

Official publication: https://www.nist.gov/itl/ai-risk-management-framework

VHC artifacts align with the Govern and Measure functions.

EU Product Liability Directive (Revised 2024)

The revised EU Product Liability Directive (Directive (EU) 2024/2853) brings software, including AI systems, within its scope and expands the circumstances in which a product is presumed defective.

European Commission homepage: https://commission.europa.eu/index_en

For firms with EU client exposure, rebutting a presumption of defectiveness is likely to require structured evidence of governance. Chapter 8 of the eBook provides a full implementation guide.

Governance Artifact Taxonomy

Proof Before Scale™ Decision Gate

Proof Before Scale™ requires answers to three questions before deployment:

- Who is accountable if wrong?

- How is oversight evidenced?

- Is risk commercially acceptable under current coverage?
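The gate can be expressed as a pre-deployment check that blocks release until all three questions have an answer. This is a minimal sketch. The class `GateAnswers`, the function `proof_before_scale` and the rule that any unanswered question blocks deployment are assumptions made for illustration. The article does not specify how the gate is recorded.

```python
from dataclasses import dataclass


@dataclass
class GateAnswers:
    # Q1: who is accountable if the output is wrong? (a named person or role)
    accountable_owner: str
    # Q2: how is oversight evidenced? (e.g. "VHC artifact per reviewed output")
    oversight_evidence: str
    # Q3: is the residual risk commercially acceptable under current coverage?
    risk_acceptable_under_cover: bool


def proof_before_scale(answers: GateAnswers) -> tuple[bool, list[str]]:
    """Return (approved, blocking_reasons); any unanswered question blocks deployment."""
    reasons = []
    if not answers.accountable_owner.strip():
        reasons.append("Q1: no accountable owner named")
    if not answers.oversight_evidence.strip():
        reasons.append("Q2: no oversight evidence defined")
    if not answers.risk_acceptable_under_cover:
        reasons.append("Q3: risk not confirmed as acceptable under current coverage")
    return (len(reasons) == 0, reasons)


approved, reasons = proof_before_scale(
    GateAnswers("Practice lead, J. Smith", "VHC artifact per reviewed output", False)
)
print(approved, reasons)
# False ['Q3: risk not confirmed as acceptable under current coverage']
```

Returning the reasons rather than only a yes/no means a blocked deployment itself leaves a record of why it was blocked.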

The third governance pillar in the system — AI Management Systems (AIMS) — provides enterprise-level integration between VHC artifacts and ISO-aligned management system structures. AIMS is detailed in the eBook implementation chapters.

Six-Week Sprint (Overview)

Weeks 1–2: AI inventory and vendor audit

Week 3: VHC artifact design

Week 4: Oversight protocol formalisation

Week 5: Governance documentation completion

Week 6: Pre-renewal underwriting readiness review

Implementation toolkit available in:

The Governance Artifact System

Authoritative Video Resources

1. NIST AI Risk Management Framework Overview

National Institute of Standards and Technology

2. ISO/IEC 42001 Explained — AI Management Systems

ISO Official Channel

FAQ: 10 Questions With Verifiable Sources

Q1: What is a governance artifact?

A contemporaneous record evidencing specific oversight action. See ISO 42001 documentation requirements:

https://www.iso.org/standard/81230.html

Q2: What does verifiable human contribution mean?

A documented, attributable intervention materially affecting AI output. See NIST AI RMF governance documentation requirements:

https://www.nist.gov/itl/ai-risk-management-framework

Q3: How many artifacts are required?

Depends on usage intensity across session, system, and governance tiers. See ISO clause 8.4:

https://www.iso.org/standard/81230.html

Q4: Can generic templates suffice?

Generally insufficient without contemporaneous evidence. See automation bias evidence:

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3505618/

Q5: What is ISO/IEC 42001 and why does it matter?

An international AI management system standard requiring oversight documentation.

Q6: What policy exclusions should firms check?

Review ISO CG 40 47 01 26 and carrier-specific AI endorsements. ISO overview:

https://www.verisk.com/insurance/products/iso-policy-forms/

Q7: Does the EU Product Liability Directive apply to Australian firms?

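It can. The revised Directive applies to products, including software, placed on the EU market, so Australian firms whose products reach EU markets may be in scope. European Commission guidance: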

https://commission.europa.eu/law/law-topic/consumer-protection-law_en

Q8: What is the difference between edit delta and an audit log?

An audit log records activity; edit delta measures substantive textual change. See Levenshtein (1966):

https://ieeexplore.ieee.org/document/1053908

Q9: What is automation bias?

Cognitive over-reliance on automated outputs. See Goddard et al. (2012):

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3505618/

Q10: How does the Seven Deadly Sins framework relate?

Chapter 13 of the eBook identifies retroactive documentation as a coverage-voiding failure.

Conclusion

The insurance cliff is structural. The solution is evidentiary.

Governance artifacts — generated through VHC architecture, governed by Proof Before Scale™, integrated through AIMS — restore professional insurability in the AI era.

About the Author & Disclosures

John Cosstick is Founder-Editor of TechLifeFuture.com and winner of the 2024 BOLD Award for Open Innovation in Digital Industries. He is a former banker, accountant, and certified financial planner.

He is now a freelance journalist and author, and a member of the Media, Entertainment & Arts Alliance (union).

Disclosures

Affiliate disclosure:

This article may contain affiliate links for Mindhive.ai, where John Cosstick is a Partner and minor shareholder. If you purchase a product or service through a link in this article, TechLifeFuture.com may receive a commission at no additional cost to you.

General disclaimer:

All analyses are provided for informational and educational purposes only and do not constitute legal, financial, or professional advice. Readers should consult qualified professionals before acting on any information contained in this article. Information current as at 22 February 2026 (AEST).

Transparency and Disclosures

This article is part of a multi-pillar editorial series on AI governance and professional liability insurance for TechLifeFuture.com.

Intellectual property disclosure: The author developed the Verifiable Human Contribution (VHC) framework and Proof Before Scale™ methodology referenced in this article. These are patent-pending (AU 2025220863; PCT/IB2025/058808). While the VHC framework is designed to align with ISO/IEC 42001 and NIST AI RMF requirements, professionals should evaluate any governance framework against their specific regulatory obligations and risk profile.


References & Further Reading

(These sources underpin the legal, insurance, and governance analysis presented in this article.)

Swiss Re Institute (2023). The economics of digitalisation in insurance (sigma 5/2023). Swiss Re Institute Research, October 2023. https://www.swissre.com/institute/research/sigma-research/sigma-2023-05.html

Insurance Services Office (ISO) (2026). Form CG 40 47 01 26 – Exclusion – Generative Artificial Intelligence. Commercial General Liability endorsement (effective January 2026).

W. R. Berkley Corporation. Form PC 51380 00 06-24 – Artificial Intelligence Absolute Exclusion. Directors & Officers, Errors & Omissions, and Fiduciary Liability products (2025–2026 filings).

Mata v. Avianca, Inc. (2023). United States District Court for the Southern District of New York. Sanctions decision under Rule 11 of the Federal Rules of Civil Procedure relating to AI-generated fabricated citations.

Moffatt v. Air Canada (2024). British Columbia Civil Resolution Tribunal (BCCRT). Decision establishing organisational liability for chatbot-generated misinformation.

Willis Towers Watson (WTW) (2025). Insuring the AI Age: Managing Emerging AI Liability Risks. https://www.wtwco.com/en-us/insights/2025/12/insuring-the-ai-age

Reuters Legal (2024–2025 coverage). Multiple reports on generative AI liability, professional responsibility, and insurer responses following Mata v. Avianca and related cases.

Australian Prudential Regulation Authority (APRA) (2025). Prudential Standard CPS 230 Operational Risk Management. Effective 1 July 2025. https://www.apra.gov.au/cps-230-operational-risk-management


AI Safety Institute Australia (2025). Guidance for AI Adoption (Version 2.0). Australian Government.