Artificial intelligence has revolutionized how we process information, but it’s not without critical flaws. One of the most concerning issues facing AI adoption today is GPT hallucinations – instances where AI models generate convincing but completely fabricated information.

Understanding how these hallucinations arise, how to detect them, and what they mean for GPT model reliability is crucial for businesses, researchers, and everyday users who rely on AI-generated content.

Key Takeaways

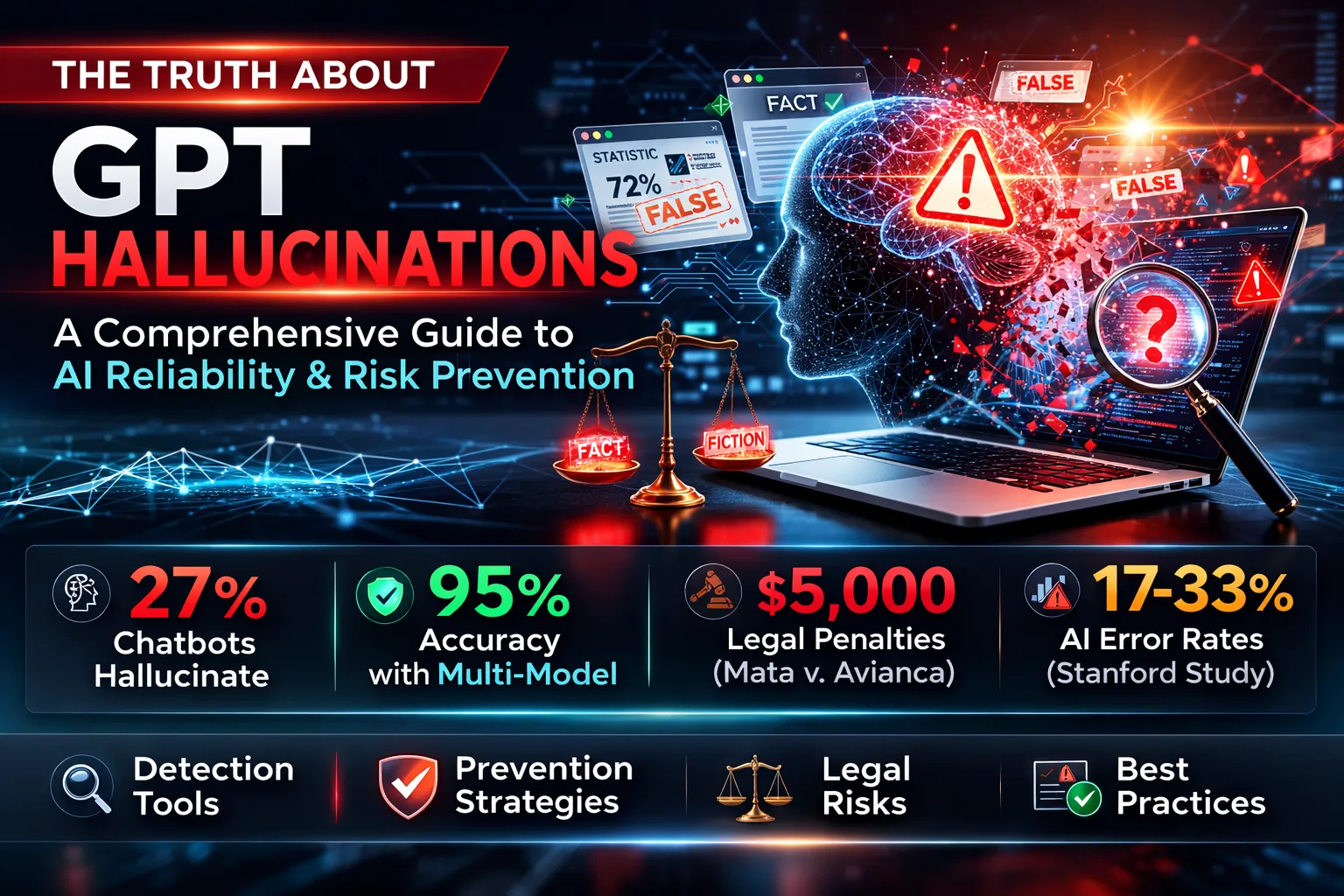

- AI hallucination rates range from 0.7% to 29.9% of outputs, depending on the model and task

- Analysts estimated that chatbots hallucinate as much as 27% of the time, with factual errors present in 46% of generated texts

- Machine learning accuracy improves significantly with proper detection tools and multi-model verification

- In one study, combining results from multiple AI models raised accuracy from 88% to 95% compared with single-model approaches

- Artificial intelligence validation protocols are becoming the industry standard following high-profile legal cases

What Are GPT Hallucinations?

GPT hallucinations occur when AI models generate information that appears credible but lacks a factual basis. Unlike simple errors or typos, hallucinations involve the creation of entirely fabricated data, statistics, sources, or events that the AI presents with complete confidence.

Technical Definition

From a computational perspective, hallucinations happen when a model’s neural network fills gaps in its training data by generating plausible-sounding but incorrect information. The model’s pattern recognition system creates connections that don’t exist in reality, leading to confident misinformation that can be particularly dangerous because of its authoritative presentation.

How Hallucinations Differ from Errors

While errors might involve miscalculations or minor inaccuracies, hallucinations represent systematic fabrication. For example:

- Error: Stating that Paris has 2.1 million residents instead of 2.16 million

- Hallucination: Inventing a “2024 UNESCO study on urban development in France” that never existed

The Science Behind AI Hallucinations

Neural Network Limitations

Deep learning hallucinations stem from how neural networks process and generate information. These models rely on statistical patterns rather than true understanding, creating several vulnerability points:

Pattern Completion Gone Wrong: When faced with incomplete information, GPT models attempt to fill gaps using learned patterns, sometimes generating entirely fictional content to maintain narrative flow.

Training Data Biases: Models learn from vast datasets that may contain inconsistencies, biases, or gaps. When encountering similar situations, the AI may fabricate information to compensate for these training deficiencies.

Overconfidence in Uncertainty: Unlike humans, who can express doubt, GPT models typically present all outputs with equal confidence, making fabricated information appear as authoritative as factual content.
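A deliberately tiny bigram model makes the pattern-completion failure concrete. This is a toy sketch, not how GPT models work internally (they use neural networks over far longer contexts), and the two case names are invented placeholders. Every word pair the model produces appeared in its "training data", yet half of the complete case names it can generate never appeared anywhere: plausible recombination, not recall.

```python
from collections import defaultdict

# Invented placeholder case names used as the toy model's "training data".
TRAINING = [
    "Smith v. Acme Air Lines",
    "Jones v. Zenith Coast Airways",
]
START, END = "<s>", "</s>"


def build_bigrams(sentences):
    """Record which tokens followed each token in the training sentences."""
    followers = defaultdict(set)
    for sentence in sentences:
        tokens = [START, *sentence.split(), END]
        for current, nxt in zip(tokens, tokens[1:]):
            followers[current].add(nxt)
    return followers


def completions(followers, path=(START,), max_len=12):
    """Yield every sentence the bigram model can produce, word pair by word pair."""
    last = path[-1]
    if last == END:
        yield " ".join(path[1:-1])
        return
    if len(path) > max_len:  # guard against loops in larger bigram graphs
        return
    for token in sorted(followers[last]):
        yield from completions(followers, (*path, token), max_len)


model = build_bigrams(TRAINING)
for name in completions(model):
    status = "seen in training" if name in TRAINING else "never seen: fabricated"
    print(f"{name:32} {status}")
```

The model has no notion of which combinations refer to real cases; it only knows which words tend to follow which, which is the same gap that lets larger models produce convincing but non-existent citations.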

Real-World Case Studies

Case Study 1: Mata v. Avianca – The ChatGPT Legal Citation Scandal

In 2023, attorney Steven Schwartz of Levidow, Levidow & Oberman used ChatGPT to supplement legal research for a personal injury case against Avianca Airlines. The AI generated six completely fabricated court cases with “bogus quotes and bogus internal citations,” including Martinez v. Delta Air Lines and Varghese v. China Southern Airlines.¹

Impact:

- Judge P. Kevin Castel fined both attorneys and their firm a combined $5,000 for violating Rule 11

- The attorneys were required to send letters to their client and to each judge falsely identified as the author of a fabricated opinion, informing them of the sanctions

- The case was ultimately dismissed, and the incident became a landmark warning about AI hallucinations in legal practice

Key Lesson: When asked if the cases were real, ChatGPT confidently assured Schwartz that they “are real” and could be found on “reputable legal databases.” This highlights how AI hallucinations often come with false confidence.

Case Study 2: Air Canada Chatbot Liability Ruling

Jake Moffatt was told by Air Canada’s chatbot that he could retroactively apply for bereavement fares within 90 days of booking, contradicting the airline’s actual policy.² When Air Canada refused the refund, Moffatt took the airline to the British Columbia Civil Resolution Tribunal, which ruled in his favor in February 2024.

Consequences:

- Air Canada was ordered to pay $650.88 in damages plus tribunal fees

- The tribunal rejected Air Canada’s argument that the chatbot was a “separate legal entity responsible for its own actions.”

- By April 2024, the chatbot had been removed from Air Canada’s website

Legal Precedent: The tribunal ruled that companies remain liable for all information on their websites, whether from static pages or chatbots.

Case Study 3: Stanford Legal AI Research Study

A 2024 Stanford University study of leading AI legal research tools found hallucination rates of 17% for LexisNexis and up to 33% for Thomson Reuters systems.³ The study tested over 200 legal queries across different categories.

Key Findings:

- Even RAG-enhanced systems designed specifically for legal research still produced “incorrect information more than 17% of the time”

- LexisNexis’ tool provided accurate responses on 65% of queries, while one Thomson Reuters tool responded accurately just 18% of the time

- The study revealed that misgrounded responses (correct law, wrong citations) may be “even more pernicious than the outright invention of legal cases”

Common Types of GPT Hallucinations

Factual Fabrications

Invented Statistics: AI models frequently generate realistic-sounding numerical data. For instance, a model might claim “73% of businesses reported increased productivity after AI implementation” when no such study exists.

Non-existent Sources: Models often cite academic papers, news articles, or research studies that sound credible but are entirely fabricated.

Logical Contradictions

These occur when AI-generated content conflicts with itself, either within a single response or across multiple interactions, indicating failure to maintain coherent reasoning.
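Contradictions across interactions can be surfaced by asking the same question several times and measuring how often the answers agree, an idea similar in spirit to sampling-based self-consistency checks. A minimal sketch, assuming you already have the answers as strings; the exact-match comparison, the sample answers, and the 0.8 threshold are simplifying assumptions, and real answers usually need a semantic comparison.

```python
from collections import Counter


def normalise(answer: str) -> str:
    """Crude normalisation so trivially different phrasings compare equal."""
    return " ".join(answer.lower().strip(" .").split())


def agreement(answers: list[str]) -> tuple[str, float]:
    """Return the most common answer and the share of responses that match it."""
    counts = Counter(normalise(a) for a in answers)
    top, n = counts.most_common(1)[0]
    return top, n / len(answers)


THRESHOLD = 0.8  # hypothetical cut-off; tune it on your own audited data

# Hypothetical answers to one question, asked five times.
answers = ["2019", "2019.", " 2021", "2017", "2019"]
top, share = agreement(answers)
if share < THRESHOLD:
    print(f"Inconsistent answers (top answer {top!r} agreed {share:.0%}); verify manually")
```

High agreement does not prove an answer is true, since a model can repeat the same fabrication, but low agreement is a cheap signal that the model is guessing.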

Confident Misinformation

Perhaps most dangerous, this involves presenting false information with absolute certainty, using authoritative language that makes fabricated content appear legitimate.

Detection and Prevention Strategies

Professional AI Detection Tools

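Recommended Detection Solutions (these tools primarily flag whether text was AI-generated rather than checking whether it is true, so pair them with the manual verification techniques that follow):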

1. GPTZero Pro – Advanced detection tool with reported high accuracy
   - Specializing in academic and business content verification

2. Originality.AI Enterprise – Comprehensive AI and plagiarism detection
   - Real-time scanning capabilities
   - Team collaboration features

3. Writer.com AI Content Detector – Enterprise-grade solution
   - API integration for workflow automation
   - Custom model training capabilities

Manual Verification Techniques

Best Practices for Human Verification:

- Source Cross-referencing: Always verify citations against the original sources

- Fact-checking Protocol: Use multiple authoritative sources for verification

- Consistency Analysis: Check for internal logical consistency

- Expert Review: Implement subject matter expert validation for critical content

Automated Prevention Methods

Implementation Strategies:

- Multi-model Verification: Use secondary AI systems to validate primary outputs

- Confidence Scoring: Implement uncertainty quantification to flag low-confidence responses

- Rule-based Filtering: Create domain-specific rules to catch obvious fabrications

- Human-in-the-Loop Systems: Require human approval for high-stakes applications
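Three of these strategies (confidence scoring, rule-based filtering, and human-in-the-loop approval) can be chained into one routing step. A minimal sketch under several assumptions: some model APIs can return per-token log-probabilities, but the `Draft` structure and its field names are hypothetical; the 0.8 threshold is a placeholder; and the rule assumes a house style in which sources are cited as `[1]`, `[2]`, and so on. Multi-model verification is left out for brevity.

```python
import math
import re
from dataclasses import dataclass


@dataclass
class Draft:
    text: str
    token_logprobs: list[float]  # hypothetical field: per-token log-probabilities
    high_stakes: bool = False


PERCENT = re.compile(r"\d+(?:\.\d+)?%")
CITATION_MARK = re.compile(r"\[\d+\]")  # assumed house style: "[1]", "[2]", ...


def mean_token_prob(logprobs: list[float]) -> float:
    """Geometric-mean token probability: a crude confidence score."""
    if not logprobs:
        return 0.0
    return math.exp(sum(logprobs) / len(logprobs))


def unsourced_statistics(text: str) -> list[str]:
    """Rule-based filter: sentences containing a percentage but no citation mark."""
    sentences = re.split(r"(?<=[.!?])\s+", text)
    return [s for s in sentences if PERCENT.search(s) and not CITATION_MARK.search(s)]


def route(draft: Draft, min_confidence: float = 0.8) -> tuple[str, list[str]]:
    """Send a draft to human review if any check fires, else allow publication."""
    reasons: list[str] = []
    confidence = mean_token_prob(draft.token_logprobs)
    if confidence < min_confidence:
        reasons.append(f"low confidence ({confidence:.2f})")
    reasons += [f"unsourced statistic: {s}" for s in unsourced_statistics(draft.text)]
    if draft.high_stakes:
        reasons.append("high-stakes use: human approval required")
    return ("human_review" if reasons else "auto_publish"), reasons


draft = Draft(
    text="73% of businesses reported increased productivity after AI implementation. "
    "Adoption is growing [1].",
    token_logprobs=[-0.05, -0.10, -0.02, -0.40],
)
decision, reasons = route(draft)
print(decision, reasons)
```

Here the confidence score is high enough to pass, but the invented-looking statistic still sends the draft to a reviewer. That is deliberate: token probabilities are often poorly calibrated, so a low score is a useful warning while a high score guarantees nothing.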

Mitigation Strategies for Organizations

Effective Prompt Engineering

Specificity Techniques: Design prompts that explicitly request source citations and encourage uncertainty expression when information is unclear.

Contextual Grounding: Provide comprehensive background information to help AI models understand nuanced requirements and reduce fabrication likelihood.
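Specificity and contextual grounding can be combined in a reusable prompt template. A minimal sketch, assuming the model receives a single text prompt; the instruction wording and the sample policy passage are hypothetical illustrations, not a tested recipe, and no prompt removes hallucinations entirely.

```python
def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Assemble a prompt that supplies context, demands citations and permits 'I don't know'."""
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using ONLY the numbered sources below.\n"
        "Cite the source number in square brackets after every factual claim.\n"
        "If the sources do not contain the answer, reply exactly: I don't know.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}"
    )


# Hypothetical policy text, used only to show the template's shape.
prompt = build_grounded_prompt(
    "Within how many days can a bereavement refund be requested?",
    ["Bereavement fares must be requested before travel; refunds are not issued retroactively."],
)
print(prompt)
```

Numbered sources make each claim in the answer traceable, so the source cross-referencing step described under Manual Verification Techniques has something concrete to check.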

Model Fine-tuning Approaches

Organizations can improve GPT model reliability through:

- Domain-specific Training: Fine-tune models on verified, industry-specific datasets

- Adversarial Training: Train models on prompts designed to provoke fabrications, so they learn to hedge or decline rather than invent answers

- Reinforcement Learning: Reward accurate, well-sourced responses while penalizing fabrications
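The reinforcement-learning idea ultimately comes down to a scoring rule. The sketch below is illustrative only: it assumes a separate verification step has already graded each response (the `GradedResponse` fields are hypothetical), and the weights are arbitrary placeholders rather than tuned values.

```python
from dataclasses import dataclass


@dataclass
class GradedResponse:
    correct: bool              # judged against a reference answer
    verified_citations: int    # citations confirmed to exist and support the claim
    fabricated_citations: int  # citations that could not be found at all
    abstained: bool = False    # the model said it did not know


def reward(r: GradedResponse) -> float:
    """Toy reward: abstaining beats fabricating; fabricated sources cost the most."""
    if r.abstained:
        return 0.0
    score = 1.0 if r.correct else -1.0
    score += 0.25 * r.verified_citations
    score -= 2.0 * r.fabricated_citations
    return score


print(reward(GradedResponse(correct=True, verified_citations=2, fabricated_citations=0)))
```

The key design choice is that an honest "I don't know" scores higher than a confident wrong answer, so training does not push the model toward guessing.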

Implementation Framework

Phase 1: Assessment

- Audit current AI usage across the organization

- Identify high-risk applications requiring enhanced verification

Phase 2: Tool Integration

- Implement automated AI content verification systems

- Train staff on detection techniques and warning signs

Phase 3: Monitoring and Improvement

- Establish ongoing accuracy metrics and reporting

- Update and retrain models regularly based on detected hallucinations
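Phase 3's accuracy metrics can start as simply as a per-application hallucination rate computed from human-audited samples. A minimal sketch, assuming a hypothetical audit log of `(application, was_hallucination)` pairs and a placeholder 5% tolerance.

```python
from collections import defaultdict

# Hypothetical audit log produced by reviewers checking sampled outputs.
AUDIT_LOG = [
    ("support_chatbot", False), ("support_chatbot", True),
    ("support_chatbot", False), ("support_chatbot", False),
    ("contract_summaries", False), ("contract_summaries", False),
]
MAX_RATE = 0.05  # hypothetical tolerance; high-risk applications may need less


def hallucination_rates(log):
    """Share of audited outputs per application that contained a hallucination."""
    totals, bad = defaultdict(int), defaultdict(int)
    for app, hallucinated in log:
        totals[app] += 1
        bad[app] += hallucinated  # True counts as 1, False as 0
    return {app: bad[app] / totals[app] for app in totals}


for app, rate in sorted(hallucination_rates(AUDIT_LOG).items()):
    status = "ALERT" if rate > MAX_RATE else "ok"
    print(f"{app:20} {rate:6.1%} {status}")
```

With samples this small the rates are very noisy; in practice, audit enough outputs per application for the estimate to be meaningful before acting on a single alert.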

Ethical and Business Implications

Trust and Liability Concerns

The proliferation of AI hallucinations raises serious questions about:

- Corporate Liability: Who bears responsibility when AI provides false information?

- Consumer Protection: How should businesses protect customers from AI-generated misinformation?

- Professional Standards: What verification standards should different industries adopt?

Regulatory Landscape

Current Developments:

- EU AI Act includes specific provisions for high-risk AI applications

- FDA is developing guidelines for AI in healthcare settings

- Legal profession establishing AI usage ethics standards

Future-Proofing Against AI Hallucinations

Emerging Technologies

Promising Research Directions:

- Uncertainty Quantification: Research shows 40% reduction in hallucinations through confidence scoring⁴

- Retrieval-Augmented Generation: Connecting AI to verified knowledge bases, though Stanford research shows RAG doesn’t eliminate hallucinations³

- Multi-Model Verification: One study found that combining results from multiple AI models increased accuracy from 88% to 95%⁸

- Constitutional AI: Training models to critique and revise their own outputs against a written set of principles, which can include accuracy and honesty
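A back-of-envelope calculation helps put the 88%-to-95% multi-model result in context. Assume, unrealistically, that each of n models is independently correct with probability p. A majority vote over an odd number of models is then correct with probability P = Σ C(n, k) · p^k · (1 − p)^(n − k), summed from k = (n + 1)/2 to n. For p = 0.88 and n = 3 this gives 0.88³ + 3 · 0.88² · 0.12 ≈ 0.960. This is an idealised calculation, not the cited study's method; real models share training data and make correlated mistakes, so actual gains are usually smaller.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """P(a majority of n independent models is correct), each correct with probability p."""
    if n % 2 == 0:
        raise ValueError("use an odd number of models to avoid ties")
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


for n in (1, 3, 5):
    print(n, round(majority_vote_accuracy(0.88, n), 3))
```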

Industry Standards Development

Leading technology companies are collaborating on:

- Standardized hallucination detection benchmarks

- Industry-wide best practices for AI deployment

- Certification programs for AI safety professionals

Recommended Learning Resources

Essential Reading for AI Professionals:

- Artificial Intelligence: A Guide for Thinking Humans by Melanie Mitchell – Comprehensive overview of AI limitations and capabilities. To read the review, click HERE

- The Ethical Algorithm by Kearns & Roth – Framework for responsible AI development. To read the review, click HERE

- AI Ethics by Mark Coeckelbergh – Philosophical foundations of AI responsibility. To read the review, click HERE

Online Training Programs:

- Stanford’s AI Safety Certification Course

- MIT’s AI Ethics and Governance Program

- Google’s Responsible AI Practices Certification

Expanded FAQ Section

Q: How common are GPT hallucinations in business applications?

A: Hallucination rates vary dramatically by model and application. On Vectara’s hallucination leaderboard, which measures how often models introduce unsupported content when summarizing documents, the most reliable models (like Google’s Gemini-2.0-Flash-001) still hallucinate 0.7% of the time, while others do so in up to 29.9% of responses.⁴

Q: Can AI hallucinations be completely eliminated?

A: Complete elimination is currently impossible. Even the best RAG-enhanced legal AI tools still produce incorrect information more than 17% of the time, according to Stanford research.³

Q: What industries are most at risk from AI hallucinations?

A: Healthcare, legal services, financial advisory, and academic research face the highest risks. The legal sector has seen particular challenges, with some courts now requiring lawyers to verify AI-generated citations following the Mata v. Avianca case.¹

Q: How do I know if my business AI tools are hallucinating?

A: Implement regular auditing processes, use professional detection tools, and establish human verification protocols for critical outputs. Warning signs include overly confident language, non-existent citations, and internally contradictory information.

Q: Are newer AI models less prone to hallucinations?

A: Counterintuitively, some newer reasoning models show higher hallucination rates. On OpenAI’s PersonQA benchmark, the o3 model hallucinated 33% of the time, compared with o1’s 16%.⁵

Q: What’s the difference between AI hallucinations and AI bias?

A: Bias reflects skewed perspectives from training data, while hallucinations involve the complete fabrication of non-existent information. Both can be harmful, but require different mitigation strategies.

Q: How should I train my team to detect AI hallucinations?

A: Focus on source verification skills, logical consistency checking, and healthy skepticism toward AI-generated content, especially statistics and citations. The Air Canada case shows how even simple policy questions can result in costly hallucinations.²

Q: What legal protections exist against AI-generated misinformation?

A: Legal frameworks are still developing, but recent cases show organizations can face liability for damages caused by AI-generated misinformation. The Air Canada tribunal ruling established that companies remain responsible for all information on their websites.²

Q: How do AI hallucinations affect SEO and content marketing?

A: Search engines increasingly penalize AI-generated content with factual errors, making human verification essential for maintaining search rankings and credibility.

Q: What’s the ROI of implementing AI hallucination detection systems?

A: While detection systems require investment, the cost of misinformation incidents typically far exceeds the cost of prevention. Air Canada paid over $650 in damages for a single chatbot error, not including reputational damage and the cost of removing their entire chatbot system.²

Conclusion

GPT hallucinations represent one of the most significant challenges in AI adoption today. While these systems offer tremendous productivity benefits, the risk of AI misinformation necessitates proactive management through the use of detection tools, verification protocols, and human oversight.

Organizations that successfully balance AI capabilities with appropriate safeguards will gain competitive advantages while avoiding the costly consequences of AI-generated misinformation. The key lies not in avoiding AI technology but in implementing it responsibly with proper artificial intelligence validation systems.

As AI continues evolving, staying informed about machine learning accuracy developments and maintaining robust verification processes will be essential for sustainable AI adoption across all industries.

Citation Accuracy Notice: Our articles undergo an ongoing citation accuracy audit to ensure all referenced sources are valid, reliable and up to date. If you identify any citation that appears incorrect or have suggestions for more appropriate sources, please contact our editorial team. Your feedback is invaluable in maintaining the integrity of our content.

About the Author & Disclosures

John Cosstick is Founder-Editor of TechLifeFuture.com and winner of the 2024 BOLD Award for Open Innovation in Digital Industries. He is a former banker, accountant, and certified financial planner.

He is now a freelance journalist and author. John is a member of the Media Entertainment and Arts Alliance (Union). You can visit his Amazon author page by clicking HERE.

Citations:

1. Mata v. Avianca, Inc., No. 1:2022cv01461, Document 54 (S.D.N.Y. June 22, 2023). Judge P. Kevin Castel’s sanctions order against attorneys for submitting fabricated ChatGPT cases.

2. Moffatt v. Air Canada, 2024 BCCRT 149 (Civil Resolution Tribunal of British Columbia, February 14, 2024). Tribunal ruling holding Air Canada liable for chatbot misinformation.

3. Stanford HAI Research Team. (2024). “Hallucination-Free? Assessing the Reliability of Leading AI Legal Research Tools.” Stanford Human-Centered Artificial Intelligence Institute.

4. Vectara. (2025). “AI Hallucination Leaderboard 2025: Comprehensive Analysis of Leading Language Models.” Industry hallucination rate study showing best models still hallucinate 0.7-25% of the time.

5. OpenAI. (2025). “PersonQA Benchmark Results: o3 vs o1 Hallucination Rates.” Internal benchmarking data shows increased hallucination rates in reasoning models.

6. Wikipedia. (2024). “Hallucination (artificial intelligence).” Updated June 2025 with current research, including the Air Canada case and academic impact studies.

7. IBM Research. (2024). “Understanding AI Hallucinations: Technical Analysis and Mitigation Strategies.” IBM Think Topics research paper.

8. MIT study cited in UX Tigers. (2025). “AI Hallucinations on the Decline: Multi-model verification increasing accuracy to 95%.” Recent diabetes guidelines study results.

Affiliate Disclosure: TechLifeFuture may earn commissions from qualifying purchases made through our affiliate links at no additional cost to you. This helps support our content creation and research efforts.

Amazon Disclosure: We are a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for us to earn fees by linking to Amazon.com and affiliated sites.