Picture the scene. It is renewal time. The underwriter’s email arrives before the broker meeting, and buried in the schedule is a clause the firm has not seen before – a human oversight warranty.

It requires the partner signing the declaration to confirm, with evidence, that a qualified professional reviewed every AI-assisted client deliverable produced in the past twelve months before it left the firm. Not approved in principle. Not assumed to have been checked. Reviewed, with a documented record of what that review involved.

The managing partner reads the clause twice. Then sits back.

The scene stops there, because that is where the exposure lives – not in the claim that follows, not in the litigation, not in the regulatory notice, but in that single moment of honest uncertainty. Whether the firm’s AI-assisted work over the past year was genuinely overseen, or whether it only appeared to be. Whether the governance architecture can withstand scrutiny, or whether it was built for appearances.

That gap – between how AI governance looks and how it functions – is the subject of this article.

Professional services firms across accounting, legal, financial planning, and consulting have adopted AI-assisted workflows at pace. Most of them believe their governance is adequate. A specific, documented pattern of seven structural defects tells a different story. Insurers’ claims teams are identifying these defects in post-loss investigations. Courts are using them to assess whether warranted oversight conditions were genuinely satisfied.

These are not theoretical risks. They are drawn from published exclusion language – ISO Form CG 40 47 (January 2026) and Berkley Insurance Company Form PC 51380 (June 2024) – and from the case law record established in Mata v. Avianca and Moffatt v. Air Canada. The seven patterns are a proprietary taxonomy from the Governance Artifact System™ (GAS) framework. This article names them.

Why the Insurance Landscape Changed – and When

The shift was not gradual. There was a period – call it the silent AI era – when AI-related losses were absorbed by general professional indemnity policies without any specific conditions attached. If an AI tool contributed to a claim, that claim moved through the same channels as any other professional error. Insurers priced it broadly. Firms did not think about it specifically.

That period ended. ISO Form CG 40 47, issued January 2026, and Berkley Insurance Company Form PC 51380, issued June 2024, represent the two most significant published instruments marking the transition. Neither form condenses easily into a paragraph, so their operative effect is what matters here: they exclude AI-related losses unless the insured can demonstrate that specific governance conditions were met at the time the loss-generating work was performed. The exclusion is not triggered by AI use. It is triggered by AI use without verifiable governance.

The second change is about evidence. A checkbox on a renewal form that says ‘we have adequate AI oversight’ is no longer sufficient in markets that have adopted these endorsements. What some underwriters now want is documentation – logs, review records, policy frameworks – that shows what oversight looked like in practice. The declaration is still required. It is just no longer the whole answer.

The third point is the most practical one for a firm reading this before its next renewal. The seven sins described below are not abstract risk categories. They are the patterns appearing in post-loss claim investigations. Knowing them before a claim arises is the only way to close the exposure before it costs the firm something.

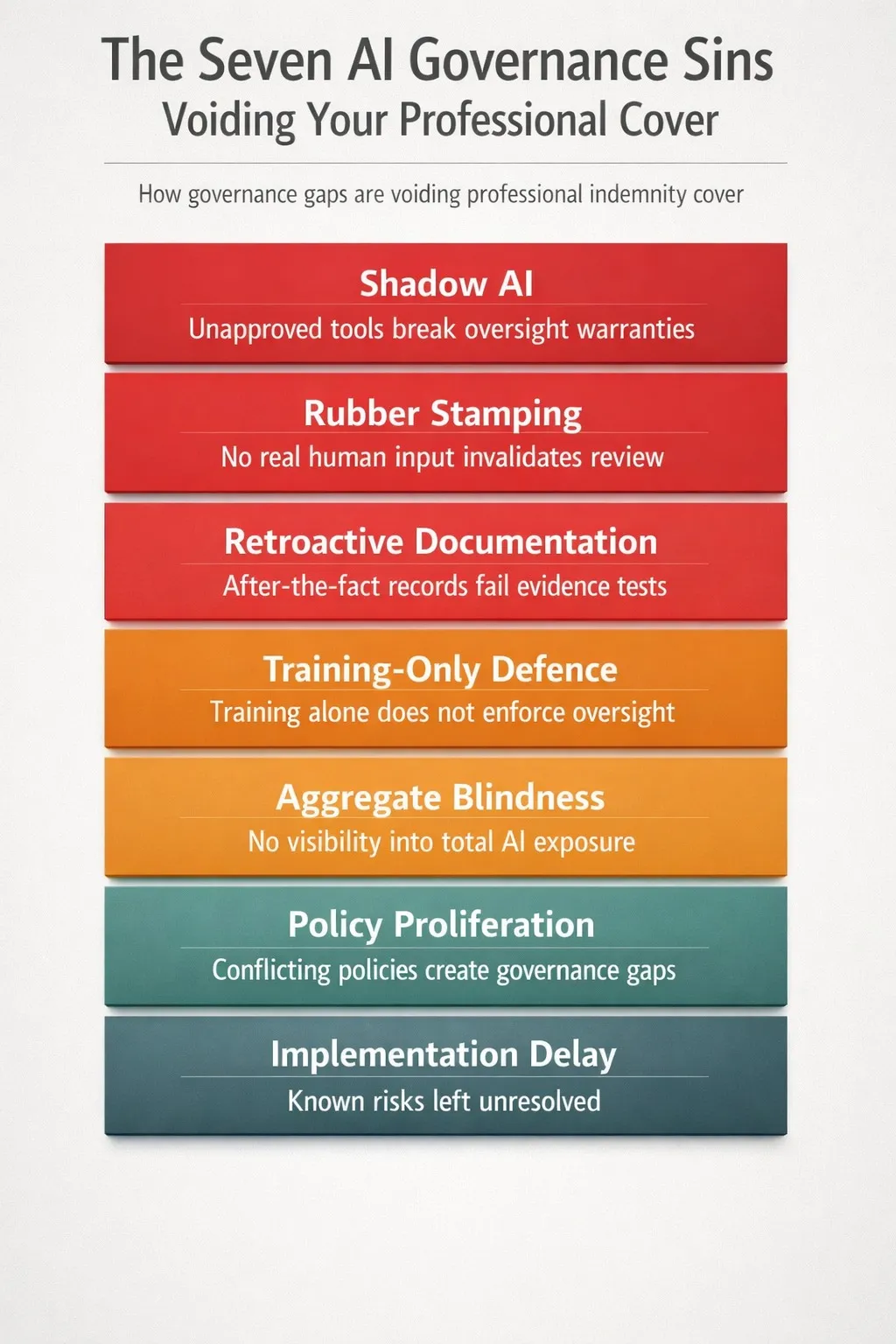

The Seven Governance Sins

Each sin follows the same structure: a definition, the mechanism by which it creates a coverage gap, and a brief remedy signal. The full remediation framework – including the AIMS architecture, the VHC standard, the Six-Week Sprint, and the board-level telemetry dashboard – is in the GAS Executive Edition. What follows is the diagnostic layer.

SIN 1 – SHADOW AI

Definition: Staff use AI tools that have not been approved, registered, or governed by the firm, generating client-facing output without the firm’s knowledge.

The Mechanism

Consider the junior adviser who has discovered that a browser-based summarisation tool cuts her research time in half. She uses it on client files. She does not think of it as ‘using AI’ – she thinks of it as working faster. Her firm has an approved AI stack. That stack does not include her browser extension. The firm does not know the extension exists.

This is Shadow AI, and its insurance consequence follows a specific logic. Human oversight warranty language requires that the firm’s AI tools and workflows be known and documented. When the insurer’s claims team investigates a loss event involving that adviser’s work and discovers the unapproved tool, the firm’s warranty declaration – that it maintains adequate AI oversight – becomes retrospectively false. The firm was not necessarily negligent about the specific deliverable. But the structural condition it warranted – that its AI use was known and governed – was not met.

Voiding coverage does not require malice. It requires a gap between what was warranted and what was true.

Remedy Signal

The firm must maintain a current AI tool register covering every application capable of producing or informing client output – including browser extensions, productivity integrations, and personal subscriptions. Unregistered usage must be blocked or detected, not merely discouraged. A policy that says ‘do not use unapproved tools’ does not satisfy this requirement. A system that makes unapproved tool use visible does.
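The detection half of this requirement can be sketched in a few lines. This is a minimal illustration only, assuming the firm can export a list of tools observed in use (for example from an endpoint or browser-extension inventory). The register layout, the tool names, and the `find_unregistered` helper are hypothetical and are not taken from the GAS framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisteredTool:
    """One entry in a hypothetical AI tool register."""
    name: str
    owner: str      # role accountable for the tool
    approved: bool  # whether the firm has approved it for client work


def find_unregistered(register, observed_tools):
    """Return observed tool names that are missing from the register or not approved."""
    approved = {tool.name.lower() for tool in register if tool.approved}
    return sorted({name for name in observed_tools if name.lower() not in approved})


# Hypothetical register and usage data, for illustration only.
register = [
    RegisteredTool("DraftAssist", "Head of Advice", True),
    RegisteredTool("ResearchBot", "Compliance Manager", False),
]
observed = ["DraftAssist", "QuickSummarise Extension", "ResearchBot"]

flagged = find_unregistered(register, observed)
print(flagged)
```

The point of the sketch is the comparison itself: the register only becomes a control when it is checked against what staff are actually running.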

SIN 2 – RUBBER STAMPING

Definition: A human reviewer signs off on AI-generated output without making a substantive, documented contribution to its content.

The Mechanism

This is the most prevalent sin, and the most dangerous, precisely because it looks like compliance. The reviewer exists. The approval exists. The log entry exists. What is absent is evidence that the reviewer exercised independent professional judgement.

A financial planner who receives a 47-page AI-generated statement of advice, reviews it in twelve minutes, and approves it without a single documented amendment has not provided verifiable human contribution – regardless of what the review log records. The question an insurer or court will ask is not whether review occurred, but whether the reviewer’s involvement changed the output in a way that required expertise. The GAS framework calls this the Edit Delta test.

The research basis for this concern is well-established. Parasuraman and Manzey (2010) documented human deference to automated output at rates of 20–40% in professional contexts – a phenomenon they term automation complacency. That deference is exactly what rubber-stamping looks like in practice. When a claims assessment reveals no reviewer-driven change across multiple deliverables, the evidentiary inference is that oversight was ceremonial.

Parasuraman, R. & Manzey, D.H. (2010). Complacency and bias in human use of automation: An attentional integration. Human Factors, 52(3), 381–410.

Remedy Signal

Every AI-assisted deliverable must carry a record of what the reviewing professional changed, queried, or verified – not just that the review occurred. The GAS framework’s Verifiable Human Contribution (VHC) standard (Cosstick, 2026, ISBN 978-0-6483326-5-7) defines what meaningful review looks like in evidentiary terms. The standard is not burdensome in practice; it is, however, specific about what counts as evidence.
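One way to picture a record of reviewer-driven change is a simple word-level comparison between the AI draft and the final deliverable. The sketch below is an assumption-laden illustration, not the GAS framework's Edit Delta test or the VHC standard, whose actual definitions are not reproduced in this article. The function names and record fields are hypothetical, and the comparison uses Python's standard `difflib` module.

```python
import difflib


def edit_delta(ai_draft: str, final_text: str) -> float:
    """Return the share of words changed between draft and final (0.0 = identical)."""
    matcher = difflib.SequenceMatcher(None, ai_draft.split(), final_text.split())
    return 1.0 - matcher.ratio()


def review_record(ai_draft: str, final_text: str, reviewer_notes: list[str]) -> dict:
    """Summarise what a reviewer changed or noted (hypothetical record layout)."""
    delta = edit_delta(ai_draft, final_text)
    return {
        "edit_delta": round(delta, 3),
        "note_count": len(reviewer_notes),
        # Flag reviews that left no trace: no edits and no documented queries or checks.
        "no_documented_contribution": delta == 0.0 and not reviewer_notes,
    }


# Hypothetical draft and final text, for illustration only.
draft = "The client should hold 60% equities and 40% bonds."
final = "The client should hold 50% equities and 50% bonds."

print(review_record(draft, draft, []))
print(review_record(draft, final, ["Reduced equity weighting after checking risk profile."]))
```

A raw edit count is only a proxy. A reviewer who verifies a figure and leaves it unchanged has still contributed, which is why the sketch treats documented notes as evidence alongside edits.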

SIN 3 – RETROACTIVE DOCUMENTATION

Definition: Governance records – logs, review notes, AI usage declarations – are created after the fact rather than contemporaneously with the work.

The Mechanism

This sin sits at the intersection of administration and fraud risk, which is why it tends to surface quietly until a claim investigation brings it into sharp relief.

When an insurer’s forensic team examines a loss event, they examine metadata. File timestamps. Email chains. System logs. Version histories. Post-hoc documentation is often detectable through exactly these channels – a review note dated three weeks after the deliverable, a governance log that begins the week of a policy renewal rather than when AI use began, records that cluster around audit dates rather than work dates.

Beyond the forensic dimension, retroactive records cannot satisfy a warranty condition that required contemporaneous oversight. The warranty is forward-looking – it requires that oversight existed at the time the work was performed. Documentation reconstructed afterwards cannot create oversight that was absent when it mattered.

This sin is particularly common in firms that introduced formal AI governance in response to a renewal conversation. They have the policy. They have the intent. But the records trail begins from the policy date, not from when their staff started using AI tools.

Remedy Signal

Session-level logging must be automated, not manual. This is not a staff discipline question – it is a system architecture question. The governance record must be generated by the workflow itself, not reconstructed from memory.
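As a rough illustration of a record generated by the workflow itself, the sketch below stamps each review entry with the system time at the moment it is written, then checks whether that stamp precedes delivery. It is a simplified assumption, not a description of any particular logging product: the field names, the `log_review` and `is_contemporaneous` helpers, and the injectable `clock` parameter (included so the example can be tested) are all hypothetical.

```python
from datetime import datetime, timezone


def log_review(deliverable_id, reviewer, summary, clock=None):
    """Create a review record stamped by the system, not typed in by the reviewer."""
    now = clock() if clock else datetime.now(timezone.utc)
    return {
        "deliverable_id": deliverable_id,
        "reviewer": reviewer,
        "summary": summary,
        "logged_at": now,
    }


def is_contemporaneous(record, delivered_at):
    """True if the review was logged no later than the deliverable left the firm."""
    return record["logged_at"] <= delivered_at


# Hypothetical timeline: review logged on 2 March, deliverable sent on 3 March.
review_time = datetime(2026, 3, 2, 9, 30, tzinfo=timezone.utc)
delivered = datetime(2026, 3, 3, 16, 0, tzinfo=timezone.utc)

record = log_review("SOA-0042", "j.smith", "Checked fee disclosure", clock=lambda: review_time)
print(is_contemporaneous(record, delivered))

# A record written three weeks after delivery fails the same check.
late = log_review("SOA-0042", "j.smith", "Reviewed", clock=lambda: datetime(2026, 3, 24, tzinfo=timezone.utc))
print(is_contemporaneous(late, delivered))
```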

SIN 4 – TRAINING-ONLY DEFENCE

Definition: The firm treats staff AI training as its primary governance mechanism, assuming that trained staff will self-govern their AI use without structural controls.

The Mechanism

Training is necessary. It is not sufficient. The distinction matters, and the research supporting it is more specific than a general caution about over-reliance on education.

Cummings (2004) examined automation bias in time-critical decision support systems and found that supervisory control failures occur even among trained professionals operating under normal – not exceptional – conditions. High workloads accelerate the effect; tight deadlines accelerate it further. The AI output looks authoritative. The professional is busy. The training says ‘apply independent judgement,’ but the conditions are not structured to support it.

Cummings, M.L. (2004). Automation bias in intelligent time-critical decision support systems. AIAA 1st Intelligent Systems Technical Conference. DOI: 10.2514/6.2004-6313.

An insurer assessing a claim will ask not only whether staff were trained, but whether the firm’s systems made it structurally difficult to bypass human oversight without detection. If a trained professional could – and did – approve AI output without a documented independent contribution, and the system recorded no flag, the training becomes a secondary record rather than a primary defence.

Remedy Signal

Technical controls – authorisation tiers, approval gates, audit logging that cannot be skipped – must sit beneath the training layer. Governance architecture should make bypassing human oversight structurally difficult, not merely contrary to policy.
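An approval gate of this kind can be sketched as a release function that refuses to send an AI-assisted deliverable unless a review record with some documented contribution exists. This is a minimal sketch under stated assumptions: the record fields (`changes`, `verifications`), the `ReleaseBlocked` exception, and the `release_to_client` function are hypothetical names for illustration, not an existing system's interface.

```python
class ReleaseBlocked(Exception):
    """Raised when a deliverable fails the approval gate."""


def release_to_client(deliverable, review=None):
    """Release a deliverable only if AI-assisted work carries a documented review."""
    if deliverable["ai_assisted"]:
        if review is None:
            raise ReleaseBlocked(f"{deliverable['id']}: no review record")
        if not review.get("changes") and not review.get("verifications"):
            raise ReleaseBlocked(f"{deliverable['id']}: review has no documented contribution")
    return f"released:{deliverable['id']}"


# Hypothetical deliverable and review record, for illustration only.
doc = {"id": "ADV-101", "ai_assisted": True}

try:
    release_to_client(doc)  # no review at all
except ReleaseBlocked as err:
    print(err)

reviewed = {"changes": [], "verifications": ["Confirmed tax rates against current tables"]}
print(release_to_client(doc, reviewed))
```

The design choice is that the gate fails closed: the trained professional does not have to remember to document the review, because the work cannot leave without it.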

SIN 5 – AGGREGATE BLINDNESS

Definition: The firm manages AI governance at the level of individual transactions or deliverables without visibility into the aggregate volume and pattern of AI use across the practice.

The Mechanism

This sin tends not to surface until renewal, and by then it reads as a capacity restriction rather than a governance failure – which is exactly how underwriters intend it.

Aggregate risk is the reinsurance sector’s primary concern about AI in professional services. When multiple clients receive advice that shares a common model, a common prompt template, or a common data source, and that source contains a systematic error or embedded bias, the loss is not isolated – it correlates across the portfolio. A firm’s insurer prices for that scenario. Its reinsurer prices it more acutely.

A firm that can demonstrate transaction-level oversight but cannot answer the question ‘what percentage of client deliverables in the past quarter were AI-assisted, across how many discrete systems?’ is demonstrating aggregate blindness to an underwriter at renewal. That inability to quantify its own exposure translates directly into premium loading. Sometimes it translates into a polite decline.

Remedy Signal

Governance telemetry must be aggregated at the practice level. The firm needs a board-level dashboard that shows AI use concentration, incident frequency, and drift variance across the full client portfolio – not just a file-by-file log that no one has synthesised.
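The renewal question quoted above (what percentage of deliverables were AI-assisted, across how many systems) reduces to a small aggregation once file-level records exist. The sketch below assumes each deliverable record lists the AI tools that contributed to it. The record layout, tool names, and `practice_summary` function are hypothetical, and the sketch does not attempt the incident-frequency or drift measures a fuller dashboard would need.

```python
from collections import Counter


def practice_summary(deliverables):
    """Aggregate file-level records into practice-level AI usage figures."""
    total = len(deliverables)
    assisted = [d for d in deliverables if d["ai_tools"]]
    use_by_system = Counter(tool for d in assisted for tool in d["ai_tools"])
    return {
        "total_deliverables": total,
        "ai_assisted_pct": round(100 * len(assisted) / total, 1) if total else 0.0,
        "distinct_systems": len(use_by_system),
        "use_by_system": dict(use_by_system),
    }


# Hypothetical quarter of deliverables, for illustration only.
quarter = [
    {"id": "D1", "ai_tools": ["DraftAssist"]},
    {"id": "D2", "ai_tools": []},
    {"id": "D3", "ai_tools": ["DraftAssist", "ResearchBot"]},
    {"id": "D4", "ai_tools": ["DraftAssist"]},
]

summary = practice_summary(quarter)
print(summary)
```

In this invented data, one tool touches every AI-assisted deliverable. That concentration is the correlated-loss signal an underwriter is looking for, and it is invisible in a file-by-file log.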

SIN 6 – POLICY PROLIFERATION

Definition: The firm has produced multiple AI governance policies – acceptable use, data privacy, client disclosure, IT security – that address AI tangentially and inconsistently, without a single integrated governance framework.

The Mechanism

More policies do not equal better governance. When an insurer’s legal team examines a firm’s documentation following a loss event, they are looking for coherence – evidence that warranted conditions were consistently defined, consistently assigned, and consistently applied. Multiple policies that each address AI in passing but define it differently create the opposite of that coherence.

If the IT security policy’s definition of an approved AI tool differs from the acceptable use policy’s definition, which controls? If the data privacy policy assigns oversight responsibility to a different role than the client disclosure policy, who was actually accountable? These interpretive gaps are exactly where an insurer’s counsel finds grounds to characterise the firm’s governance as nominal rather than substantive.

Courts have applied analogous reasoning in duty of care cases: the existence of a safety policy does not satisfy a care obligation if the policy was internally contradictory or practically unenforced. The logic translates directly to warranty conditions.

Remedy Signal

A single AI Management Systems (AIMS) framework – as specified in the GAS Executive Edition (Cosstick, 2026) – should supersede and integrate all subsidiary AI-related policies. One framework. One set of definitions. One accountability structure. One reference point for compliance assessment.
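Before consolidating, a firm can check where its existing policies disagree. The sketch below is an illustrative assumption about how that comparison might be recorded, with each policy reduced to the key terms it defines and the roles it assigns. The policy names, term keys, and `conflicting_terms` function are hypothetical and are not drawn from the AIMS framework.

```python
from collections import defaultdict


def conflicting_terms(policies):
    """Return terms that are defined or assigned differently across policies."""
    seen = defaultdict(set)
    for terms in policies.values():
        for term, value in terms.items():
            seen[term].add(value)
    return {term: sorted(values) for term, values in seen.items() if len(values) > 1}


# Hypothetical policy extracts, for illustration only.
policies = {
    "IT security": {
        "approved_ai_tool": "listed in the IT asset register",
        "data_retention": "seven years",
    },
    "Acceptable use": {
        "approved_ai_tool": "approved by a partner",
        "oversight_role": "engagement partner",
    },
    "Client disclosure": {
        "oversight_role": "compliance manager",
        "data_retention": "seven years",
    },
}

conflicts = conflicting_terms(policies)
for term, definitions in conflicts.items():
    print(term, "->", definitions)
```

Each conflict the check surfaces is a definition the single framework has to settle one way or the other.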

SIN 7 – IMPLEMENTATION DELAY

Definition: The firm has identified its governance gaps, approved a remediation plan, and failed to implement it – often because implementation has been deferred pending a better moment, a technology upgrade, or a budget cycle.

The Mechanism

This is the sin with the most direct fiduciary dimension, and it is the one that managing partners most commonly underestimate – because it penalises knowledge, not ignorance.

The Caremark line of cases, refined most directly in Marchand v. Barnhill, 212 A.3d 805 (Del. 2019), established that directors must maintain adequate oversight of mission-critical regulatory risks. The Marchand decision extended this obligation to operational failures – not just financial controls – and courts have begun applying its logic to AI governance. A board that is aware of a material governance gap and has not acted to close it is in a more exposed position than a board that has not yet conducted the audit.

Marchand v. Barnhill, 212 A.3d 805 (Del. 2019). Available at: https://law.justia.com/cases/delaware/court-of-chancery/2019/2018-0602-jrs.html

The documentation problem is acute. A firm that has conducted an internal AI governance audit, identified specific gaps, and recorded those gaps in a board paper or partner meeting minute has created a document that becomes Exhibit A in a post-claim investigation if remediation did not follow. Awareness plus inaction is a harder position to defend than no awareness at all.

Remedy Signal

Governance remediation must be treated as a time-bound project with a board mandate, not an ongoing aspiration. The GAS framework’s Six-Week Sprint (Appendix F, Cosstick, 2026) provides a structured implementation sequence. The starting point is less important than the commitment to a completion date.
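Treating remediation as time-bound implies that each identified gap carries a due date and that overdue items are surfaced automatically. The sketch below shows one simple way to do that. The gap records, field names, and `overdue_gaps` function are hypothetical, and nothing here reproduces the Six-Week Sprint itself.

```python
from datetime import date


def overdue_gaps(gaps, today):
    """Return IDs of identified gaps that are still open past their due date."""
    return [g["id"] for g in gaps if g["closed_on"] is None and g["due"] < today]


# Hypothetical gap register taken from a board paper, for illustration only.
gaps = [
    {"id": "GAP-1", "identified": date(2025, 10, 1), "due": date(2025, 11, 15), "closed_on": date(2025, 11, 10)},
    {"id": "GAP-2", "identified": date(2025, 10, 1), "due": date(2025, 11, 15), "closed_on": None},
    {"id": "GAP-3", "identified": date(2026, 2, 1), "due": date(2026, 3, 15), "closed_on": None},
]

print(overdue_gaps(gaps, today=date(2026, 3, 1)))
```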

Why the Sins Compound

These seven patterns do not operate in isolation. They cluster, and the clustering is not random.

A firm that commits Sin 2 – rubber-stamping – almost certainly also commits Sin 3, because the absence of genuine review leaves nothing to document contemporaneously. A firm that commits Sin 6 – policy proliferation – typically also commits Sin 4, because inconsistent policy infrastructure leaves training as the only coherent governance layer. Sin 1 and Sin 5 reinforce each other: shadow AI tools are, by definition, absent from aggregate reporting.

The compounding effect is not just additive. A firm that has simultaneously committed three or four of these sins has not created three or four separate exposures. It has created a governance posture that an insurer’s claims team will characterise holistically – as the absence of a genuine governance regime. At that point, the question shifts. It is no longer which warranty condition was breached. It is whether any warranted condition was genuinely satisfied.

This is not an alarmist framing. It is the logical consequence of how warranty language operates in an exclusion-sensitive environment. The GAS Executive Edition performs a full compounding analysis and provides a remediation sequence that addresses all seven sins within a single integrated framework.

Seven Questions for Your Next Renewal Conversation

Before the underwriter asks these questions, ask them yourself. For each one, the answer must be ‘yes’ with evidence – not ‘yes, I believe so’ or ‘yes, in principle.’ If any answer is no, or if certainty is absent, you have identified a governance gap that your current policy may not cover.

1. Can you name every AI tool currently used to produce or inform client deliverables at this firm, including tools used by individual staff that were not centrally approved? (Shadow AI)

2. For every AI-assisted deliverable in the past twelve months, do you have a contemporaneous record of what the reviewing professional changed or verified? (Rubber Stamping)

3. Are your AI governance records created at the time the work is done, or reconstructed periodically? (Retroactive Documentation)

4. If you removed your AI training programme entirely, would your systems still prevent unauthorised or unreviewed AI output from reaching clients? (Training-Only Defence)

5. Can you state, today, what percentage of client deliverables in the past quarter were AI-assisted? (Aggregate Blindness)

6. Do all of your AI-related policies use the same definitions and assign accountability to the same roles? (Policy Proliferation)

7. If your firm identified a governance gap more than 90 days ago, has it been fully remediated? (Implementation Delay)

Where This Goes From Here

The seven sins described above are not unfamiliar failures. They are the default state of most professional firms that adopted AI tools quickly, without pausing to build the governance architecture that the current insurance and regulatory environment requires.

That default state is becoming expensive. At renewal, it translates into premium loading, capacity restrictions, and warranty conditions that existing workflows cannot satisfy. At the claim stage, it translates into an investigation exposure that a firm’s coverage was never designed to handle. And for firms with European clients or operations, the emerging EU AI Act obligations add a regulatory layer that intersects directly with the governance gaps identified above.

The GAS Executive Edition provides the full remediation framework: the AIMS architecture, the VHC standard, the Golden Triangle decision filter, the authorisation tier model, the Six-Week Sprint implementation sequence, and the board-level telemetry dashboard. It is written for the managing partner and their professional indemnity broker – not for the IT team. The framework is designed to be implemented by the people who sign the warranty declarations, not by the people who manage the servers.

Frequently Asked Questions

Q1: What is an AI governance failure in professional services?

An AI governance failure is any gap between the oversight conditions a firm has warranted to its insurer and the oversight it actually provides. The seven most common patterns are Shadow AI, Rubber Stamping, Retroactive Documentation, Training-Only Defence, Aggregate Blindness, Policy Proliferation, and Implementation Delay – as documented in the GAS Executive Edition (Cosstick, 2026).

Q2: Can an AI governance failure void professional indemnity insurance?

Yes. Human oversight warranties in AI-specific endorsements – including ISO CG 40 47 (January 2026) and Berkley PC 51380 (June 2024) – create conditions that must be met for coverage to apply. If a claim investigation reveals that a warranted condition was not met, the insurer has grounds to deny the claim.

Source: ISO Form CG 40 47; Berkley Insurance Company Form PC 51380.

Q3: What is Shadow AI and why does it matter to insurers?

Shadow AI refers to AI tools used by staff that have not been approved, registered, or governed by the firm. It matters to insurers because a warranty declaring adequate AI oversight is retrospectively false if the firm did not know what AI tools its staff were using.

Source: Cosstick, J. (2026). The Governance Artifact System™, Executive Edition. ISBN 978-0-6483326-5-7.

Q4: What does automation bias mean for professional liability?

Automation bias is the documented tendency of humans to defer to AI output rather than apply independent judgement, at rates of 20–40% in professional contexts. For liability purposes, a reviewer who approves AI output without independent analysis may not have satisfied the human oversight requirement in their policy warranty.

Source: Parasuraman, R. & Manzey, D.H. (2010). Human Factors, 52(3), 381–410.

Q5: Is staff AI training enough to satisfy a human oversight warranty?

No. Research on automation bias in time-critical professional settings establishes that trained professionals still defer to AI output under normal operating conditions. Technical controls – authorisation tiers, approval gates, automated logging – must sit beneath the training layer to satisfy a warranty.

Source: Cummings, M.L. (2004). DOI: 10.2514/6.2004-6313.

Q6: What is the Caremark obligation for AI governance?

The Caremark/Marchand doctrine – most directly established in Marchand v. Barnhill, 212 A.3d 805 (Del. 2019) – holds that directors must maintain adequate oversight of mission-critical risks. Courts have begun applying this doctrine to AI governance failures, meaning boards aware of governance gaps but delaying remediation may face personal fiduciary liability.

Source: Marchand v. Barnhill, 212 A.3d 805 (Del. 2019). Available at: https://law.justia.com/cases/delaware/court-of-chancery/2019/2018-0602-jrs.html

Q7: What is the difference between a governance policy and a governance framework?

A governance policy states what the firm intends to do. A governance framework – such as the AI Management Systems (AIMS) architecture in the GAS Executive Edition – specifies the technical controls, accountability structures, telemetry requirements, and audit trails that make the policy enforceable and evidentially verifiable. Policies alone do not satisfy warranty conditions; frameworks do.

Source: Cosstick, J. (2026). ISBN 978-0-6483326-5-7.

Q8: How do courts assess whether human oversight was genuine?

Courts and claims investigators look for evidence that the reviewing professional’s involvement changed the output – documented edits, queries, or verification steps. The absence of any reviewer-driven change is treated as evidence that oversight was ceremonial rather than substantive.

Source: Mata v. Avianca, No. 1:22-cv-01461 (S.D.N.Y. June 22, 2023). Available at: https://law.justia.com/cases/federal/district-courts/new-york/nysdce/1:2022cv01461/575368/54/

Q9: What is the ISO CG 40 47 AI exclusion, and who does it affect?

ISO CG 40 47 is a commercial general liability endorsement (edition date January 2026) published by Insurance Services Office that excludes AI-related losses unless specific governance conditions are met. It affects any professional services firm whose general liability or professional indemnity policy has been endorsed with this form, and its prevalence is increasing at renewal across Australian and international markets.

Source: ISO Form CG 40 47, January 2026.

Q10: Where can I find the full GAS governance framework?

The full Governance Artifact System™ framework – including the AIMS architecture, VHC standard, Golden Triangle, authorisation tier model, and Six-Week Sprint implementation sequence – is documented in the GAS Executive Edition by John Cosstick, available on Amazon: https://www.amazon.com/dp/0648332659

Key References

Cosstick, J. (2026). The Governance Artifact System™, Executive Edition. TechLifeFuture.com. ISBN 978-0-6483326-5-7.

Cummings, M.L. (2004). Automation bias in intelligent time-critical decision support systems. AIAA 1st Intelligent Systems Technical Conference. DOI: 10.2514/6.2004-6313.

ISO Form CG 40 47 – AI Exclusion (January 2026). Insurance Services Office.

Berkley Insurance Company Form PC 51380 00 06-24 – Absolute AI Exclusion (June 2024).

Marchand v. Barnhill, 212 A.3d 805 (Del. 2019). Available at: https://law.justia.com/cases/delaware/court-of-chancery/

Parasuraman, R. & Manzey, D.H. (2010). Complacency and bias in human use of automation: An attentional integration. Human Factors, 52(3), 381–410.

About the Author

John Cosstick is the Founder-Editor of TechLifeFuture.com and a 2024 BOLD Award Winner for Open Innovation in Digital Industries. A retired Certified Financial Planner and former bank compliance manager, he has contributed to the UK Money and Pensions Service Debt Review and UN AIforGood initiatives.

He is the inventor of the Verifiable Human Contribution (VHC) framework, with patent applications pending with IP Australia (AU 2025220863) and WIPO (PCT/IB2025/058808). The Governance Artifact System™ is his flagship AI governance publication.

Authority Reference Links

- https://hai.stanford.edu/ai-index/2025-ai-index-report/policy-and-governance

- https://www.wiley.law/article-12233

- https://www.jdsupra.com/legalnews/ai-update-the-growing-trend-of-ai-3672112/

- https://dwfgroup.com/en/news-and-insights/insights/2025/9/professional-liability-risks-in-the-age-of-artificial-intelligence

- https://blogs.law.ox.ac.uk/oblb/blog-post/2026/01/fiduciary-duties-and-business-judgment-rule-20-ai-act-age

- https://corpgov.law.harvard.edu/2025/09/22/the-hidden-c-suite-risk-of-ai-failures/

- https://www.swept.ai/post/ai-insurance-liability-cgl-exclusions-coverage-gaps

- https://www.hunton.com/hunton-insurance-recovery-blog/the-continued-proliferation-of-ai-exclusions

- https://aiproductivity.ai/news/ai-liability-insurance-coverage-exclusions-2026/

Required Editorial Disclosures

Citation Accuracy & Verification Statement

At TechLifeFuture, every article undergoes a multi-step fact-checking and citation audit process. We verify technical claims, research findings, and statistics against primary sources, authoritative journals, and trusted industry publications. Our editorial team adheres to Google’s EEAT (Expertise, Experience, Authoritativeness, and Trustworthiness) principles to ensure content integrity. If you have questions about any references used or would like to suggest improvements, please contact us at [email protected] with the subject line: Citation Feedback.

Amazon Affiliate Disclosure

We are a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for us to earn fees by linking to Amazon.com and affiliated sites. If you click on an Amazon link and make a purchase, we may earn a small commission at no extra cost to you.

General Affiliate Disclosure

Some links in this article may be affiliate links. This means we may receive a commission if you sign up or purchase through those links, at no additional cost to you. Our editorial content remains independent, unbiased, and grounded in research and expertise. We only recommend tools, platforms, or courses we believe bring real value to our readers.

Legal and Professional Disclaimer

The content on TechLifeFuture.com is for educational and informational purposes only and does not constitute professional advice, consultation, or services. AI technologies evolve rapidly and vary in application. Always consult qualified professionals (such as data scientists, AI engineers, or legal experts) before implementing any strategies or technologies discussed. TechLifeFuture assumes no liability for actions taken based on this content.

This article reflects AI governance, insurance market, and professional services practices as of [DATE OF PUBLICATION] (AEST). Readers should confirm whether subsequent guidance has been issued by their professional bodies or relevant regulators.

Content on TechLifeFuture.com is for educational and informational purposes only and does not constitute legal, insurance, or financial advice. Readers should consult qualified professionals before making decisions about insurance coverage, governance implementation, or regulatory compliance.

The Governance Artifact System™, Verifiable Human Contribution (VHC), Proof Before Scale™, and related framework terms are trademarks and patent-pending intellectual property of John Cosstick.

This article was researched and written by a human author and reviewed under TechLifeFuture’s citation-verification and EEAT-aligned editorial process.

© 2026 TechLifeFuture.com | Creative Commons BY-NC 4.0.