I. The Death of ‘I Reviewed It’

Three words are quietly wrecking AI governance programmes across professional services firms right now. They are not buried in some obscure regulatory instrument – they are sitting in boardroom minutes, compliance manuals, and insurance warranty declarations. The three words? “I reviewed it.”

That phrase was already inadequate in 2023. By 2025, it had become a liability. The reason is straightforward: courts, insurers, and regulators do not accept assertions. They accept evidence. From the Lloyd’s syndicates writing professional indemnity in London, to the SEC and state bar associations scrutinising AI-assisted advice in the United States, to ASIC and APRA in Australia, the same pattern is emerging: warranty language is tightening, evidentiary thresholds are rising, and “I reviewed it” no longer survives scrutiny.

The following scenario is a fictional but representative illustration of the claim-denial pattern now emerging across the international PI market, including in Australia.

Consider a firm like Ashford & Whitmore Advisory – a hypothetical mid-size financial planning firm operating across three Australian states, with cross-border engagements in the United Kingdom and Singapore. Their professional indemnity (PI) policy contained – as a growing number of PI policies now do – a warranty clause requiring “meaningful human review” of all AI-generated client advice. The firm had a written review policy. Partners signed off. Checklists were ticked.

But when a client dispute triggered an insurance claim, the firm faced an insurer who asked a simple question: “Where is your contemporaneous proof?” There was none. The policy documents described the intent of the review. No cryptographic record existed showing what the AI produced, what a human changed, and who accepted final responsibility at the exact moment of delivery.

In scenarios of this type, coverage can be denied in full. The claim in this illustration: $12 million. This is the Oversight Illusion – the dangerous gap between institutional intent and forensic reality. A firm believes it has a robust AI governance programme because it has written a policy. The insurer disagrees because policy is an aspiration, not proof.

What this article delivers: the VHC (Verifiable Human Contribution) protocol, the Edit Delta metric, cryptographic session records, and a clear framework for converting everyday oversight into a high-trust governance asset that satisfies insurers, regulators, and – if it comes to it – courts.

This matters to directors, compliance officers, licensed professionals, and platform operators equally. If your firm uses AI to generate, draft, or inform advice delivered to a client, the frameworks described here are not optional enhancements. They are the minimum defensible standard for 2026 and beyond.

II. Why Insurance Warranties Demand Proof, Not Policy

Insurance professionals use the phrase “condition precedent” for a reason. A warranty in a professional indemnity or errors and omissions (E&O) policy is not aspirational language – it is a threshold condition that must be satisfied for coverage to exist at all. If the condition is not met, coverage does not apply, regardless of whether the underlying claim is valid.

The interpretive trap here is subtle but severe. Terms like “meaningful human review” are deliberately elastic. Insurers write them that way because it preserves their discretion at claim time. In the absence of objective, structured evidence of what “meaningful” actually looked like in practice, the insurer’s interpretation governs – and that interpretation will be narrow.

Think of the contrast this way. A qualitative assertion says: “Our policy requires senior professional review of all AI outputs before client delivery.” A quantitative record says: “At 14:23:07 AEST on 14 March 2025, professional ID 0047 modified 312 characters of a 1,248-character AI-generated advice document, achieving a 25% Edit Delta, and cryptographically signed the final version. Session hash: SHA-256 [hash value].”

The second version is not just more credible – it can be independently verified. It is the difference between telling a court you reviewed something and producing evidence that it happened.

Australian PI and E&O policies issued since 2023 increasingly include AI-specific warranty clauses. UK and US analogues – particularly in the Lloyd’s market – have adopted parallel language.

For guidance on conduct and disclosure obligations for financial product advisers, see ASIC Regulatory Guide 175. For APRA-regulated entities’ information security obligations, see APRA CPG 234.

III. Introducing VHC — From Assertion to Measurement

Verifiable Human Contribution is a structured protocol that produces a mathematically auditable record of professional engagement with AI-generated output. It is not a philosophy or a checklist. It is a system – one that generates contemporaneous evidence capable of satisfying the evidentiary standards applied by insurers, regulators, and courts.

The difference between VHC and traditional “human-in-the-loop” assertions is precisely the difference between a checkbox and a proof. A checkbox says a human was present. A VHC record demonstrates what that human actually did – quantitatively, verifiably, and with cryptographic integrity.

Who needs VHC? Any regulated professional using AI to generate, draft, or inform advice delivered to a client. This encompasses financial planners operating under ASIC licensing conditions, legal practitioners generating client-facing documents, tax professionals using AI to draft ATO correspondence, and any organisation using agentic AI systems to produce outputs that carry professional liability.

The VHC protocol has been filed for patent protection (AU 2025220863; PCT/IB2025/058808). This reflects the novelty of the approach – structuring human oversight as a measurable, auditable, and cryptographically secured governance artefact.

For those involved in the development of AI governance standards, VHC’s architecture is directly relevant to two active international workstreams. ISO/IEC 42001:2023 (Artificial Intelligence Management Systems) establishes governance requirements for AI systems but does not specify how “meaningful human oversight” should be measured or evidenced at the transaction level.

VHC is designed to fill precisely that gap – providing the measurable, auditable evidence layer that ISO/IEC 42001 requires but does not itself define. Similarly, the EU AI Act’s Article 14 human oversight requirements and Article 12 logging mandates describe outcomes without specifying the evidentiary mechanism.

VHC is a candidate compliance instrument for both. The OECD AI Policy Observatory provides useful international context for the broader governance landscape in which these standards are evolving.

IV. The Edit Delta — The Mathematics of Meaningful Review

The Edit Delta is a quantitative metric measuring the distance between raw AI output and the final human-authorised version. It is calculated using Levenshtein Distance – the edit-distance measure used by many spell-checkers, applied here at the whole-document level.

Here is the accessible version: imagine two documents side by side. Levenshtein Distance counts the minimum number of character-level operations – insertions, deletions, substitutions – needed to transform one document into the other. Divide that count by the total character length of the original AI output, and you have the Edit Delta as a percentage.

Why does this matter? Because a near-zero Edit Delta is the mathematical signature of rubber-stamping. If a professional reviews a 1,500-character AI-generated tax advice document and changes three characters, the Edit Delta is 0.2%. That is not a review. That is the Oversight Illusion in numeric form. Conversely, a 25% Edit Delta on the same document means at least 375 characters were substantively altered – evidence of active professional engagement.

A practical illustration: a 400-word AI draft on superannuation contribution strategies. Raw output: 2,100 characters. The advising accountant adds specific client context, removes two generic paragraphs, adjusts the contribution cap figure, and inserts a relevant ruling reference. Final character count of changes: 540. Edit Delta: 25.7%. This record, timestamped and hashed, is a defensible proof of expert engagement.
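The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the patented implementation: the function names `levenshtein` and `edit_delta` are hypothetical, and the pure-Python dynamic programme is written for readability (a production system would likely use an optimised library).

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and
    substitutions needed to turn `a` into `b` (two-row dynamic programme)."""
    if len(a) < len(b):
        a, b = b, a  # the distance is symmetric; keep the inner row short
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution (or match)
            ))
        previous = current
    return previous[-1]


def edit_delta(ai_output: str, final_text: str) -> float:
    """Levenshtein distance divided by the length of the original AI output."""
    if not ai_output:
        raise ValueError("AI output must not be empty")
    return levenshtein(ai_output, final_text) / len(ai_output)


# The rubber-stamping case from the text: 3 characters changed out of 1,500.
draft = "a" * 1500
final = "bbb" + "a" * 1497
print(f"{edit_delta(draft, final):.1%}")  # prints 0.2%
```

Because the denominator is the length of the original AI output, a final version that is much longer than the draft can produce an Edit Delta above 100%.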

VHC Edit Delta Compliance Thresholds

| Use Case Risk Category | Minimum Edit Delta | Forensic Purpose |
| --- | --- | --- |
| Low Risk (Scheduling / Admin) | 5% | Evidence of baseline attention |
| Standard Risk (Correspondence) | 15% | Proof of professional tailoring |
| High Risk (Legal / Tax / Financial) | 25% | Proof of substantive expert review |
| Agentic AI (Knowledge Bases) | 25% | Verification of autonomous logic |

Source: Governance Artifact System Protocol (VHC patent-pending AU 2025220863)
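As a sketch of how these thresholds might be applied in software, the table can be encoded as a lookup and checked against a computed Edit Delta. The names `RiskCategory`, `MIN_EDIT_DELTA` and `meets_threshold` are illustrative assumptions; only the percentages come from the table above.

```python
from enum import Enum


class RiskCategory(Enum):
    LOW = "Low Risk (Scheduling / Admin)"
    STANDARD = "Standard Risk (Correspondence)"
    HIGH = "High Risk (Legal / Tax / Financial)"
    AGENTIC = "Agentic AI (Knowledge Bases)"


# Minimum Edit Delta per category, expressed as fractions (5% -> 0.05).
MIN_EDIT_DELTA = {
    RiskCategory.LOW: 0.05,
    RiskCategory.STANDARD: 0.15,
    RiskCategory.HIGH: 0.25,
    RiskCategory.AGENTIC: 0.25,
}


def meets_threshold(category: RiskCategory, edit_delta: float) -> bool:
    """True when the measured Edit Delta reaches the category minimum."""
    return edit_delta >= MIN_EDIT_DELTA[category]


# The superannuation example: 540 changed characters on a 2,100-character draft.
print(meets_threshold(RiskCategory.HIGH, 540 / 2100))  # prints True
```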

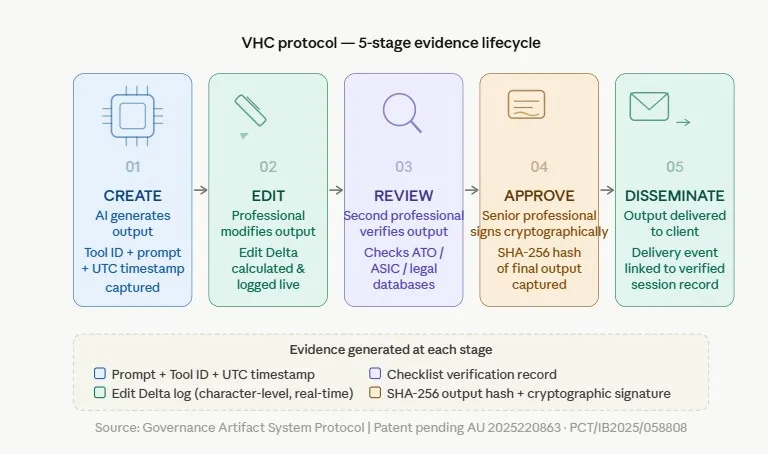

Each stage generates a distinct forensic artifact. Together they form the tamper-evident chain of custody for professional AI-assisted work.

V. The 5-Stage VHC Workflow — Building the Evidence Lifecycle

The VHC protocol comprises five sequential stages, each generating a distinct forensic record. Together, they form a tamper-evident chain of custody for professional AI-assisted work.

Stage 1 – CREATE

AI generates the initial output. The system automatically captures the Tool ID, the full prompt submitted, and a UTC timestamp. This contemporaneous record establishes what the AI actually produced before any human modification – the baseline against which the Edit Delta is later calculated.

Stage 2 – EDIT

The responsible professional modifies the AI output. Edit Delta is calculated and logged in real time as changes are made. This stage is where the quantitative proof of engagement is generated – every insertion, deletion, and substitution is recorded as it occurs, not reconstructed afterward.

Stage 3 – REVIEW

A second professional verifies the edited output against use-case-specific checklists. For financial advice, this means cross-referencing ATO guidance and ASIC RG 175. For legal documents, it means checking against relevant case law and statutes. The review stage creates a second layer of human accountability separate from the primary author.

Stage 4 – APPROVE

A senior professional provides a cryptographic signature. At this moment, the final output hash is captured – a SHA-256 fingerprint of the document as approved. This is the point of no return: the record is now forensically sealed.

Stage 5 – DISSEMINATE

The output is delivered to the client or published. The delivery event is linked to the verified session record, creating a complete, traceable chain from AI generation through to client receipt.
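One way to picture the five stages is as a strictly ordered sequence of timestamped events, where a stage cannot be recorded until the one before it exists. The sketch below assumes that ordering rule and uses hypothetical names (`Stage`, `StageEvent`, `VhcSession`) and field layouts. It does not cover hashing or signing.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import IntEnum


class Stage(IntEnum):
    CREATE = 1
    EDIT = 2
    REVIEW = 3
    APPROVE = 4
    DISSEMINATE = 5


@dataclass(frozen=True)
class StageEvent:
    stage: Stage
    actor: str           # tool ID or professional ID (hypothetical field)
    timestamp_utc: str   # captured when the event is recorded, not afterwards
    details: dict


@dataclass
class VhcSession:
    events: list = field(default_factory=list)

    def record(self, stage: Stage, actor: str, **details) -> StageEvent:
        """Append the next stage event; refuse skipped or repeated stages."""
        done = len(self.events)
        if done >= len(Stage) or stage != Stage(done + 1):
            raise ValueError(f"{stage.name} is not the next stage in the workflow")
        event = StageEvent(stage, actor,
                           datetime.now(timezone.utc).isoformat(), details)
        self.events.append(event)
        return event

    def is_complete(self) -> bool:
        return len(self.events) == len(Stage)


session = VhcSession()
session.record(Stage.CREATE, "tool:drafting-model", prompt="Summarise contribution options")
session.record(Stage.EDIT, "professional:0047", edit_delta=0.25)
```

Attempting `session.record(Stage.APPROVE, ...)` at this point raises `ValueError`, because REVIEW has not yet been recorded.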

VI. Cryptographic Integrity — Why the Timestamp Is the Evidence

SHA-256 hashing is often described in technical terms that obscure its practical significance. Here is a plain-language explanation that matters for governance professionals: a SHA-256 hash is an effectively unique digital fingerprint of a document’s exact contents. Recorded alongside a timestamp, it fixes those contents to a precise moment in time. Change a single character in that document – add a full stop, delete a space – and the hash changes entirely.
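The single-character sensitivity is easy to demonstrate with Python's standard `hashlib` module. The advice text below is invented for illustration, and `fingerprint` is a hypothetical helper name.

```python
import hashlib


def fingerprint(text: str) -> str:
    """SHA-256 of the UTF-8 encoded text, as a 64-character hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


approved = "Contribute up to the concessional cap this financial year"
altered = approved + "."  # one added full stop

print(fingerprint(approved))
print(fingerprint(altered))
print(fingerprint(approved) == fingerprint(altered))  # prints False
```

The two printed fingerprints bear no visible resemblance to each other, even though the inputs differ by one character.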

Why Hashing Matters in Legal Evidence

The forensic implication is profound. A SHA-256 hash recorded with a trustworthy timestamp at the moment of professional approval is evidence that the document existed in its final approved form at that moment. The document cannot be altered afterwards without producing a different hash, so any change is immediately detectable.

This distinction is everything in a legal dispute: contemporaneous logs – created during the workflow in real time – are legitimate evidence. Retroactive logs – created after a claim to reconstruct what supposedly happened – are not evidence. They are potentially fraudulent. Insurers and regulators understand this distinction intimately.

The Three-Part Evidential Structure

The SHA-256 hash is not, in the VHC protocol, a standalone security mechanism. It functions as the binding element in a three-part evidential record: the CREATE timestamp establishes what the AI produced and when; the EDIT log establishes what a named human changed; and the APPROVE hash seals both into a single tamper-evident artefact.

The inventive contribution is the structured combination and sequencing of these three elements, not any individual component.

The VHC Session Record

The VHC Session Record brings all of this together: a tamper-evident document chain showing what the AI produced (the CREATE record and prompt), what the human changed (the EDIT log and Edit Delta), and who accepted final responsibility (the APPROVE signature and output hash). In an e-discovery request or regulatory investigation, this chain is production-ready.
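A minimal sketch of how the three parts might be bound together: the final text is hashed at approval, and a second hash over the whole record seals the CREATE, EDIT and APPROVE entries. The field names and the use of canonical JSON are assumptions made for illustration. The approver's digital signature is not shown; in practice it would be produced by a signing system outside this sketch.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def canonical(obj: dict) -> bytes:
    """Deterministic JSON encoding so the same record always hashes the same."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":"),
                      ensure_ascii=False).encode("utf-8")


def seal_session(create: dict, edit: dict, approve: dict, final_text: str) -> dict:
    """Bind the three parts and the approved text into one sealed record."""
    body = {
        "create": create,
        "edit": edit,
        "approve": {**approve, "output_hash": sha256_hex(final_text.encode("utf-8"))},
    }
    return {**body, "session_hash": sha256_hex(canonical(body))}


def verify_session(record: dict, final_text: str) -> bool:
    """Recompute both hashes; any change to the text or the record fails."""
    body = {key: record[key] for key in ("create", "edit", "approve")}
    return (
        record["approve"]["output_hash"] == sha256_hex(final_text.encode("utf-8"))
        and record["session_hash"] == sha256_hex(canonical(body))
    )


record = seal_session(
    create={"tool_id": "drafting-model", "prompt": "Draft advice", "timestamp_utc": "2025-03-14T04:23:07Z"},
    edit={"editor_id": "0047", "edit_delta": 0.25},
    approve={"approver_id": "0012"},
    final_text="Final approved advice",
)
print(verify_session(record, "Final approved advice"))   # prints True
print(verify_session(record, "Final approved advice."))  # prints False
```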

From Session Records to Governance Ledger

At the enterprise level, individual Session Records aggregate into a Governance Ledger – a complete, immutable, cryptographically chained record of every AI-assisted professional output within a firm’s operation.

The Ledger is not simply a log. It is the evidentiary substrate from which Total Cost of Risk (TCOR) analysis, insurer telemetry reporting, regulatory disclosure, and board-level governance dashboards are all derived. The existence of a complete, unbroken Ledger is what transforms twelve months of individual VHC session records into the “clean telemetry track record” that enables Preferred Risk Status negotiation with PI underwriters.
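"Cryptographically chained" can be illustrated with a simple hash chain, in which each ledger entry commits to the hash of the entry before it. This is a generic sketch of that technique under assumed names (`GovernanceLedger`, `entry_hash`, `GENESIS`), not a description of the actual Ledger architecture.

```python
import hashlib

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, session_hash: str) -> str:
    """Hash that ties a session record to everything recorded before it."""
    return hashlib.sha256(f"{prev_hash}:{session_hash}".encode("ascii")).hexdigest()


class GovernanceLedger:
    def __init__(self) -> None:
        self.entries = []

    def append(self, session_hash: str) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        entry = {
            "index": len(self.entries),
            "prev_hash": prev,
            "session_hash": session_hash,
            "entry_hash": entry_hash(prev, session_hash),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Walk the chain from the start; any edited or removed entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev:
                return False
            if entry["entry_hash"] != entry_hash(prev, entry["session_hash"]):
                return False
            prev = entry["entry_hash"]
        return True


ledger = GovernanceLedger()
for n in range(3):
    ledger.append(hashlib.sha256(f"session-{n}".encode("ascii")).hexdigest())
print(ledger.verify())  # prints True
```

Changing or deleting any earlier entry causes `verify()` to return `False`, which is what makes gaps in the record detectable at the portfolio level.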

Regulatory and Legal Implications

A complete Governance Ledger is also what produces the evidence chain required to rebut the EU Product Liability Directive’s black box presumption at a portfolio level, rather than claim by claim. The Governance Ledger architecture is a component of the AIMS Governance patent family currently in active development.

In the Australian context, the Electronic Transactions Act 1999 (Cth) and the Evidence Act 1995 (Cth) establish the framework for treating contemporaneous digital records as admissible evidence. VHC session records are designed to satisfy these legislative requirements.

VII. Automation Bias — Why “Good Intentions” Are Not Enough

There is a reason the EU AI Act Article 14 specifically names automation bias as a governance concern. Research published in peer-reviewed cognitive science literature consistently shows that humans working under time pressure fail to catch AI errors at significantly elevated rates.

A 2025 systematic review published in AI & Society, covering 35 studies across healthcare, law, and public administration, found that high workload conditions reliably increase the risk of delayed or failed detection of automation errors.

This is not a character flaw – it is a documented cognitive pattern. The same review, published by Springer Nature, describes the mechanism precisely: under pressure, users reallocate attention away from AI outputs toward other manual tasks, creating systematic blind spots in their oversight.

Fiduciary duty extends to AI governance in operational terms, not just in principle. Boards can convert that duty into evidence by applying the Board VHC Integrity Checklist in Section X – a five-question self-assessment that translates abstract oversight obligations into a documented, reviewable artefact.

Why Training Alone Is Not Enough

The governance response to automation bias cannot rely on training alone. Training helps, but human fatigue, workload, and cognitive shortcuts are not problems that training permanently resolves. The architectural response is a Control Plane. A Control Plane is a technical gate – software architecture that blocks a high-stakes AI output from being sent, printed, or published until a VHC record is complete and cryptographically signed.

The Three Layers of AIMS Governance

The Control Plane is the third structural layer of the AIMS Governance architecture:

- The first layer is the governance policy (what humans must do)

- The second layer is the VHC protocol (how that human action is measured and recorded)

- The third layer, the Control Plane, is the enforcement mechanism that makes the first two layers non-optional

The Control Plane enforces governance rules at the system level, regardless of operator intent, workload, or time pressure. The concept is the subject of active IP development within the AIMS Governance patent family.

Governance as Code: Enforcing Compliance by Design

Think of the dual-key authorisation used in banking: just as no single individual can authorise a large wire transfer without a second key-holder’s action, no AI-generated client advice leaves the system until the VHC workflow is complete. The rule is enforced by the architecture, not by individual willpower.

This is Governance-as-Code: the shift from governance as a manual behaviour that humans can forget or shortcut, to governance as an architectural requirement that the system enforces regardless of workload or time pressure.
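In code, the gate can be as simple as a release function that refuses to call the delivery channel unless a signed record matches the exact document being sent. The sketch below is an assumption-laden illustration: `SignedVhcRecord`, `ReleaseBlocked` and `release` are hypothetical names, and the signature is treated as an opaque value whose creation and verification are out of scope.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Optional


class ReleaseBlocked(Exception):
    """Raised when an output is stopped at the gate."""


@dataclass(frozen=True)
class SignedVhcRecord:
    output_hash: str          # SHA-256 of the approved text
    approver_id: str
    signature: Optional[str]  # opaque here; how it is produced is out of scope


def release(document: str, record: Optional[SignedVhcRecord],
            send: Callable[[str], None]) -> None:
    """Pass `document` to `send` only if a signed record matches it exactly."""
    if record is None:
        raise ReleaseBlocked("no VHC record for this output")
    if not record.signature:
        raise ReleaseBlocked("VHC record has not been signed")
    actual = hashlib.sha256(document.encode("utf-8")).hexdigest()
    if actual != record.output_hash:
        raise ReleaseBlocked("document differs from the approved version")
    send(document)


outbox = []
advice = "Approved advice text"
signed = SignedVhcRecord(hashlib.sha256(advice.encode("utf-8")).hexdigest(),
                         approver_id="0012", signature="opaque-signature")
release(advice, signed, outbox.append)
print(len(outbox))  # prints 1
```

The design point is that `send` is reachable only through `release`, so skipping the workflow is not a choice available to a busy operator.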

Control Plane vs Enforcement Layer

The Enforcement Layer within AIMS Governance extends this concept beyond the individual output event. Where the Control Plane acts at the point of dissemination, the Enforcement Layer operates across the system lifecycle: monitoring compliance rates, flagging Edit Delta outliers, detecting patterns of governance theatre (the systematic production of compliant-looking records without genuine substantive review), and triggering escalation pathways when configured thresholds are breached.

The distinction between a Control Plane and an Enforcement Layer is the distinction between a gate and a surveillance system:

- The gate stops a single non-compliant event

- The surveillance system identifies whether the gates are being systematically bypassed or gamed over time
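As a rough sketch of the surveillance side, the function below summarises Edit Deltas per reviewer and flags two patterns: a low compliance rate, and a high share of reviews that only just clear the threshold (one possible signal of governance theatre). Every parameter here (`band`, `max_band_share`, `min_compliance`) is an illustrative assumption, not a value taken from the VHC protocol.

```python
from collections import defaultdict


def review_patterns(sessions, threshold, band=0.01,
                    max_band_share=0.5, min_compliance=0.95):
    """Summarise Edit Deltas per reviewer and flag patterns worth escalating.

    sessions: iterable of (reviewer_id, edit_delta) pairs.
    """
    by_reviewer = defaultdict(list)
    for reviewer_id, delta in sessions:
        by_reviewer[reviewer_id].append(delta)

    report = {}
    for reviewer_id, deltas in by_reviewer.items():
        compliant = [d for d in deltas if d >= threshold]
        # Reviews that clear the threshold by no more than `band`.
        hugging = [d for d in compliant if d - threshold <= band]
        compliance_rate = len(compliant) / len(deltas)
        band_share = len(hugging) / len(deltas)
        report[reviewer_id] = {
            "sessions": len(deltas),
            "compliance_rate": compliance_rate,
            "band_share": band_share,
            "escalate": compliance_rate < min_compliance
                        or band_share > max_band_share,
        }
    return report


history = [
    ("r1", 0.251), ("r1", 0.252), ("r1", 0.2505), ("r1", 0.253), ("r1", 0.251),
    ("r2", 0.31), ("r2", 0.42), ("r2", 0.27), ("r2", 0.55), ("r2", 0.38),
]
report = review_patterns(history, threshold=0.25)
print(report["r1"]["escalate"], report["r2"]["escalate"])  # prints True False
```

A flag of this kind is a prompt for human investigation rather than proof of misconduct; a reviewer may legitimately produce a run of light-touch edits.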

The Control Plane does not replace human judgment. It ensures human judgment is exercised before dissemination can occur.

VIII. International Rebuttal — Defeating the EU “Black Box” Presumption

Australian firms serving European clients, or operating in European markets, face a regulatory dimension that most local compliance programmes have not yet factored in: the EU Product Liability Directive 2024/2853.

The Global Standards Architecture

Before addressing the EU Product Liability Directive specifically, it is worth framing the broader standards architecture into which VHC fits. At the international level, two frameworks set AI governance requirements that create demand for precisely the kind of measurement instrument VHC provides.

The EU AI Act, now in phased implementation, places human oversight and contemporaneous logging at the centre of high-risk AI compliance without specifying how those outcomes should be measured.

ISO/IEC 42001, the international AI management systems standard, creates a governance framework that similarly requires evidence of meaningful oversight without defining the evidentiary mechanism.

VHC’s five-stage workflow, Edit Delta metric, and cryptographic session record are architecturally consistent with the requirements of both instruments – and are designed to be the practical implementation layer that converts their abstract obligations into operational compliance evidence.

The Shift in EU Product Liability

The Directive, which entered into force on 8 December 2024 and must be transposed by EU member states by 9 December 2026, fundamentally restructures product liability for AI outputs. Under the old framework, a claimant had to prove defectiveness.

Under the new Directive, if the technical complexity of an AI system makes proof “excessively difficult,” a rebuttable presumption of defectiveness arises. The firm must then demonstrate the AI output was not defective – and that verification activities were performed.

The Black Box Problem and VHC Rebuttal

As Gibson Dunn’s analysis of the Directive notes, this is particularly relevant for AI as a black box – precisely the opacity that makes proving non-defectiveness difficult without contemporaneous records. The VHC Session Record, sealed by its hash, supplies the rebuttal evidence: it shows exactly what was delivered, at what moment, and following what verification steps.

Alignment with EU AI Act Requirements

Separately, the EU AI Act Article 14 requires that high-risk AI systems be designed to allow effective human oversight, with explicit acknowledgement of automation bias as a systemic risk. Article 12 requires high-risk AI systems to support automatic logging of events throughout their operation. VHC is not merely compatible with these obligations – it is a structural compliance mechanism for them.

Further Reading and Professional Commentary

For firms with cross-border European exposure, the EUR-Lex text of Directive 2024/2853 and the EU AI Act Article 14 full text are essential reading. The Taylor Wessing analysis of the new Directive provides accessible professional commentary.

IX. Financial Engineering — TCOR, Premium Reduction, and M&A Value

The business case for VHC is not limited to risk avoidance. Firms that can demonstrate structured AI governance generate measurable financial advantages across three distinct dimensions.

Total Cost of Risk (TCOR) Compression

TCOR – the full economic cost of risk, including premiums, retentions, claims costs, and administrative overhead – is directly affected by what underwriters call Denial Sensitivity: the probability that a valid claim is denied on evidentiary or procedural grounds.

Every dollar spent resolving a denied claim that should have been covered is a pure TCOR loss. VHC is designed to drive Denial Sensitivity towards zero for AI-related disputes, because the contemporaneous record removes the insurer’s primary ground for denial.

Preferred Risk Status and Premium Reduction

Twelve months of clean VHC telemetry – a complete, unbroken record of compliant AI oversight across every client engagement – creates a track record that structured PI insurers can underwrite with confidence. This positions firms to negotiate from a basis of demonstrated performance rather than promises.

Directionally, firms achieving Preferred Risk Status through documented governance track records have pursued multi-year rate guarantees and premium reductions in the range of 8–22%. These figures are illustrative of the market direction rather than guaranteed outcomes for any individual firm.

M&A Valuation Premium

The M&A landscape for professional services firms is shifting noticeably. Sophisticated acquirers assessing AI-integrated firms now treat governance documentation as a due diligence category in its own right. An ungoverned AI programme – one that can generate advice but cannot prove it was properly reviewed – represents a contingent liability that acquirers discount.

A VHC-documented programme, by contrast, demonstrates that the firm’s AI capability is not only valuable but defensible. In a 2026–2027 acquisition context, that distinction is beginning to appear in enterprise valuations.

X. Board VHC Integrity Checklist

Directors should table this self-assessment at the next governance review. Five questions. Honest answers required.

- Do we have a mathematical definition of ‘meaningful human review’ that satisfies our insurer’s warranty language? (Not a policy statement – a quantitative threshold with documented compliance evidence.)

- Are our VHC logs created contemporaneously during the workflow – or reconstructed after the fact?

- Do we have technical Control Planes – gates that block high-stakes AI outputs at the system level until a VHC record is cryptographically signed? (Or does our governance rely on human willpower under workload pressure?)

- Can we produce a SHA-256 hash that rebuts the ‘Black Box’ presumption in a regulatory investigation or court proceeding? (If the answer is no, assess your European client exposure and your insurer’s position on that gap.)

- Have our directors formally acknowledged VHC as a governance risk category in board minutes? (Without board-level acknowledgement, a regulator or insurer can argue the firm’s leadership was unaware of the risk – which is worse than knowing and acting.)

XI. Proof Is the New Policy

There is a broader significance to what VHC represents beyond individual firm compliance. The governance economy that is now forming around AI – driven by the EU AI Act, ISO/IEC 42001, the EU Product Liability Directive, and emerging national equivalents – requires a measurable standard for human oversight.

From Policy to Measurable Standards

Policy frameworks and legislation have, to date, described what that standard must achieve without specifying how it should be operationalised at the transaction level. VHC is a candidate answer to that open specification. The Edit Delta gives “meaningful review” a number. The SHA-256 hash gives the moment of approval a fingerprint. The Session Record gives regulators, insurers, and courts a chain they can follow from AI generation to client delivery without a single gap.

A framework with those properties is not merely a product – it is a potential international standard. That is the conversation this protocol is designed to enter, and the firms that bring the best proof will define the terms of it.

Beyond Policy: The End of the Oversight Illusion

Policy documents, written review procedures, and attestation checklists are, at this point, table stakes – minimum expectations that every regulated firm should have had years ago.

VHC converts the aspiration of human oversight into a forensically defensible artefact – the operational companion to Proof Before Scale™, the principle that governed AI adoption must be validated before scaled deployment. Where Proof Before Scale governs the decision to adopt, VHC governs the evidence of every output the adopted system produces.

The Rise of the AI Governance Economy

We are entering what might fairly be called the governance economy for AI – a market in which proof-ready firms attract better insurers, better clients, and better valuations. The firms that recognise this early will not just avoid the losses that come with the Oversight Illusion. They will convert their governance investment into a competitive asset.

From Assertion to Evidence

Start with the five board questions. Then build the system that turns your answers from assertions into evidence.

Frequently Asked Questions

Q1. What is Verifiable Human Contribution?

VHC is a structured protocol that produces a mathematically auditable record proving professional engagement with AI-generated output. Unlike a checkbox or written review policy, it generates quantitative, cryptographically secured evidence. Source: Governance Artifact System™ (AU 2025220863).

Q2. What is the Edit Delta metric?

A character-level variance score measuring the distance between raw AI output and the final human-authorised version, calculated using Levenshtein Distance. A higher Edit Delta signals more substantive human engagement. Source: VHC Patent Protocol.

Q3. Why do insurers care about VHC?

Because ‘meaningful human review’ is increasingly written into PI and E&O policies as a warranty condition. Without contemporaneous proof that review occurred – not just a policy stating it should – an insurer can deny a claim even when review genuinely took place.

Q4. What is a SHA-256 hash in this context?

A cryptographic digital fingerprint of the advice document at the exact millisecond of professional approval. It is tamper-evident – any post-approval change produces a completely different hash – making it forensically admissible as proof of the document’s state at approval.

Q5. What is EU Product Liability Directive 2024/2853?

An EU directive that entered into force on 8 December 2024. Where technical complexity makes proof of defectiveness excessively difficult for the claimant, it creates a rebuttable presumption that shifts the burden of rebuttal onto the deploying firm in AI-related product liability claims. Member states must implement it by 9 December 2026. Source: EUR-Lex.

Q6. What is Automation Bias?

The documented tendency for humans to under-scrutinise AI outputs under pressure. A 2025 systematic review in AI & Society found that high workload conditions reliably increase the risk of delayed or failed detection of AI errors. EU AI Act Article 14 explicitly names it as a systemic governance concern. Source: Springer Nature.

Q7. What is a Control Plane in AI governance?

A technical gate – software architecture – that blocks a high-stakes AI output from being disseminated until a VHC record has been cryptographically signed. It enforces the governance rule architecturally rather than relying on human compliance.

Q8. What is TCOR?

Total Cost of Risk – the full economic burden, including premiums, retentions, claims costs, and administrative overhead. VHC reduces TCOR by compressing Denial Sensitivity: the risk that a valid claim is denied because the firm cannot produce adequate contemporaneous evidence.

Q9. Does VHC apply to agentic AI systems?

Yes. Agentic AI systems operating on knowledge bases require a minimum 25% Edit Delta threshold to verify that autonomous logic has been reviewed by a qualified human expert before client delivery.

Q10. Is VHC relevant outside Australia?

Directly. VHC addresses EU AI Act Article 14 human oversight obligations, EU AI Act Article 12 record-keeping (logging) requirements, and the rebuttal burden under EU Product Liability Directive 2024/2853 – making it essential for any firm with European client exposure or cross-border operations.

Authority Reference Links

- https://eur-lex.europa.eu/legal-content/EN/TXT/PDF/?uri=CELEX:32024L2853

- https://artificialintelligenceact.eu/article/14/

- https://artificialintelligenceact.eu/article/12/

- https://asic.gov.au/regulatory-resources/find-a-document/regulatory-guides/rg-175-licensing-financial-product-advisers-conduct-and-disclosure/

- https://www.apra.gov.au/sites/default/files/cpg_234_information_security_june_2019_1.pdf

- https://link.springer.com/article/10.1007/s00146-025-02422-7

- https://www.gibsondunn.com/eu-product-liability-directive-responding-to-software-ai-and-complex-supply-chains/

- https://www.taylorwessing.com/en/insights-and-events/insights/2025/01/di-new-product-liability-directive

- https://iapp.org/news/a/eu-ai-act-shines-light-on-human-oversight-needs

- https://oecd.ai/en/

- https://www.legislation.gov.au/C2004A04858/latest/text

About the Author

John Cosstick is the Founder-Editor of TechLifeFuture.com and a BOLD Awards VII winner in the InsurTech category for AIMS Governance™, with Verifiable Human Contribution (VHC) and Proof Before Scale™ (PBS) recognised as finalists. A retired Certified Financial Planner and former bank compliance manager, he has contributed to the UK Money and Pensions Service Debt Review and UN AIforGood initiatives.

He is the inventor of the Verifiable Human Contribution (VHC) framework, with patent applications pending with IP Australia (AU 2025220863) and WIPO (PCT/IB2025/058808). The Governance Artifact System™ is his flagship AI governance publication.

Disclosure: The author holds patent-pending applications covering the VHC protocol (AU 2025220863; PCT/IB2025/058808). This article constitutes a dated public disclosure of the frameworks described herein. Nothing in this article should be construed as a waiver of any intellectual property rights.

Disclosure

Citation Accuracy & Verification Statement

At TechLifeFuture, every article undergoes a multi-step fact-checking and citation audit process. We verify technical claims, research findings, and statistics against primary sources, authoritative journals, and trusted industry publications. Our editorial team adheres to Google’s EEAT (Expertise, Experience, Authoritativeness, and Trustworthiness) principles to ensure content integrity.

If you have questions about any references used or would like to suggest improvements, please contact us at [email protected] with the subject line: Citation Feedback.

Amazon Affiliate Disclosure

We are a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for us to earn fees by linking to Amazon.com and affiliated sites. If you click on an Amazon link and make a purchase, we may earn a small commission at no extra cost to you.

General Affiliate Disclosure

Some links in this article may be affiliate or referral links (including Amazon, Educative.io, and Mindhive.ai). If you purchase through these links, TechLifeFuture.com may earn a small commission at no extra cost to you. Our editorial content remains independent, unbiased, and grounded in research and expertise. We only recommend tools, platforms, or courses we believe bring real value to our readers.

Legal and Professional Disclaimer

The content on TechLifeFuture.com is for educational and informational purposes only and does not constitute professional advice, consultation, or services. AI technologies evolve rapidly and vary in application. Always consult qualified professionals—such as data scientists, AI engineers, or legal experts—before implementing any strategies or technologies discussed. TechLifeFuture assumes no liability for actions taken based on this content.

This article reflects AI governance, insurance, and regulatory practices as of 21 April 2026 (AEST). Readers should confirm whether subsequent guidance has been issued by their professional bodies or relevant regulators.

The Governance Artifact System™, Verifiable Human Contribution (VHC), Proof Before Scale™, and related framework terms are trademarks and patent-pending intellectual property of John Cosstick.

This article was reviewed under TechLifeFuture’s citation-verification and EEAT-aligned editorial process. Portions were AI-assisted and human-edited for accuracy and compliance.

© 2026 TechLifeFuture.com | Creative Commons BY-NC 4.0