A 2024 study by NewsGuard and the Stanford Internet Observatory reported that leading AI systems generated false or misleading content in nearly 75% of tested prompts, raising concerns about the reliability of AI-generated information. As artificial intelligence becomes deeply embedded in daily life, understanding the distinction between AI misinformation and AI hallucinations is essential for safeguarding information integrity.

This article clarifies the differences between the two and explores their impact on information accuracy.

Key Takeaways

- Understanding the distinction between AI misinformation and AI hallucinations is vital.

- AI misinformation refers to incorrect information generated by AI systems, often with human intent.

- AI hallucinations involve AI systems producing information not based on actual data.

- The implications of both phenomena on information integrity are significant.

- Recognizing these differences can help in developing strategies to mitigate their effects.

- Awareness and digital literacy are critical in navigating AI-driven information landscapes.

The Growing Challenge of AI-Generated Falsehoods

The rapid advancement of AI technology has led to an explosion in AI-generated content, raising concerns about the spread of false information. As machine learning models become more sophisticated, they are increasingly being used to create content that can be difficult to distinguish from that produced by humans.

The Explosion of AI Content Creation

The accessibility of AI content generation tools—ranging from text generators to image and video creation platforms—has led to a significant increase in AI-produced material. However, this growth brings unintended consequences, including the dissemination of inaccurate or fabricated information.

Why Distinguishing AI Errors Matters

Distinguishing between types of AI-generated falsehoods, such as hallucinations and deliberate misinformation, is crucial for developing effective countermeasures. Understanding the nuances of each helps in building more accurate detection tools and in limiting their impact on users and society.

Defining AI Misinformation

Defining AI misinformation requires understanding its complex and multifaceted nature. AI misinformation refers to the spread of false or misleading information through artificial intelligence systems, which can occur intentionally or unintentionally.

Core Characteristics of AI Misinformation

AI misinformation has several key characteristics that distinguish it from other forms of misinformation. It often involves the use of sophisticated AI algorithms to generate convincing but false content.

- Often facilitated by AI algorithms trained on biased or inaccurate data.

- May involve the deliberate crafting of false narratives.

- Includes AI-generated deepfakes, manipulated text, or misleading statistics.

Intentional vs. Unintentional Spread

The spread of AI misinformation can be either intentional or unintentional. Intentional spread involves the deliberate use of AI to deceive or manipulate people, while unintentional spread can result from flaws in AI systems or their training data.

The Role of Human Actors

Human actors play a significant role in AI misinformation, as they are often responsible for designing, training, and deploying AI systems that can spread false information.

How AI Misinformation Propagates

AI misinformation can propagate rapidly through various channels, including social media, online news outlets, and other digital platforms. Understanding how AI misinformation spreads is crucial for developing effective strategies to detect and mitigate it.

Defining AI Hallucinations

Understanding AI hallucinations is crucial for developing more accurate and trustworthy AI systems. AI hallucinations refer to the phenomenon where AI models, particularly those involved in natural language processing, generate information that is not based on actual data or facts.

The Nature of AI Hallucinations

Hallucinations often manifest as:

- Fictitious citations or academic references.

- Incorrect historical events or scientific claims.

- Fabricated images or video content.

Confabulation in Language Models

Confabulation occurs when language models fill gaps in their knowledge with plausible-sounding guesses, which can result in entirely fabricated information. The output can read as fluent and confident while being misleading or false. [4]
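To make confabulation concrete, here is a minimal sketch using a toy bigram model rather than a real language model. The corpus, the function names, and the example claim are all invented for illustration. Every training sentence is true, yet the model gives a false sentence the same probability as a true one, because it has only learned which word tends to follow which.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; every sentence in it is true.
CORPUS = [
    "marie curie was born in warsaw",
    "albert einstein was born in ulm",
    "marie curie won the nobel prize in physics",
    "albert einstein won the nobel prize in physics",
]


def train_bigrams(sentences):
    """Count how often each word follows another, with start/end markers."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def sentence_probability(counts, sentence):
    """Probability the bigram model assigns to a whole sentence."""
    words = ["<s>"] + sentence.split() + ["</s>"]
    prob = 1.0
    for prev, nxt in zip(words, words[1:]):
        total = sum(counts[prev].values())
        if total == 0:
            return 0.0  # the model has never seen this word
        prob *= counts[prev][nxt] / total
    return prob


model = train_bigrams(CORPUS)
true_claim = "albert einstein was born in ulm"
false_claim = "albert einstein was born in warsaw"

print(false_claim in CORPUS)  # False: the claim never appears in the data
print(sentence_probability(model, true_claim))
print(sentence_probability(model, false_claim))  # same value as the true claim
```

Real language models are far more capable than this toy, but the sketch shows the basic mechanism: fluency comes from word patterns, not from a check against facts.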

Pattern Recognition Errors

Pattern recognition errors happen when AI models misinterpret or overgeneralize patterns in the data they were trained on, leading to hallucinated outputs. This often occurs when models encounter ambiguous or incomplete information, and the resulting errors can be hard to detect without dedicated hallucination-detection techniques.

Triggers for AI Hallucinations

Several factors can trigger AI hallucinations, including incomplete or biased training data, model architecture limitations, and the complexity of the tasks assigned to the AI. Understanding these triggers is essential for mitigating the occurrence of hallucinations in AI outputs.

By recognizing the causes and characteristics of AI hallucinations, developers can improve the reliability and trustworthiness of AI systems, particularly in applications involving natural language processing.

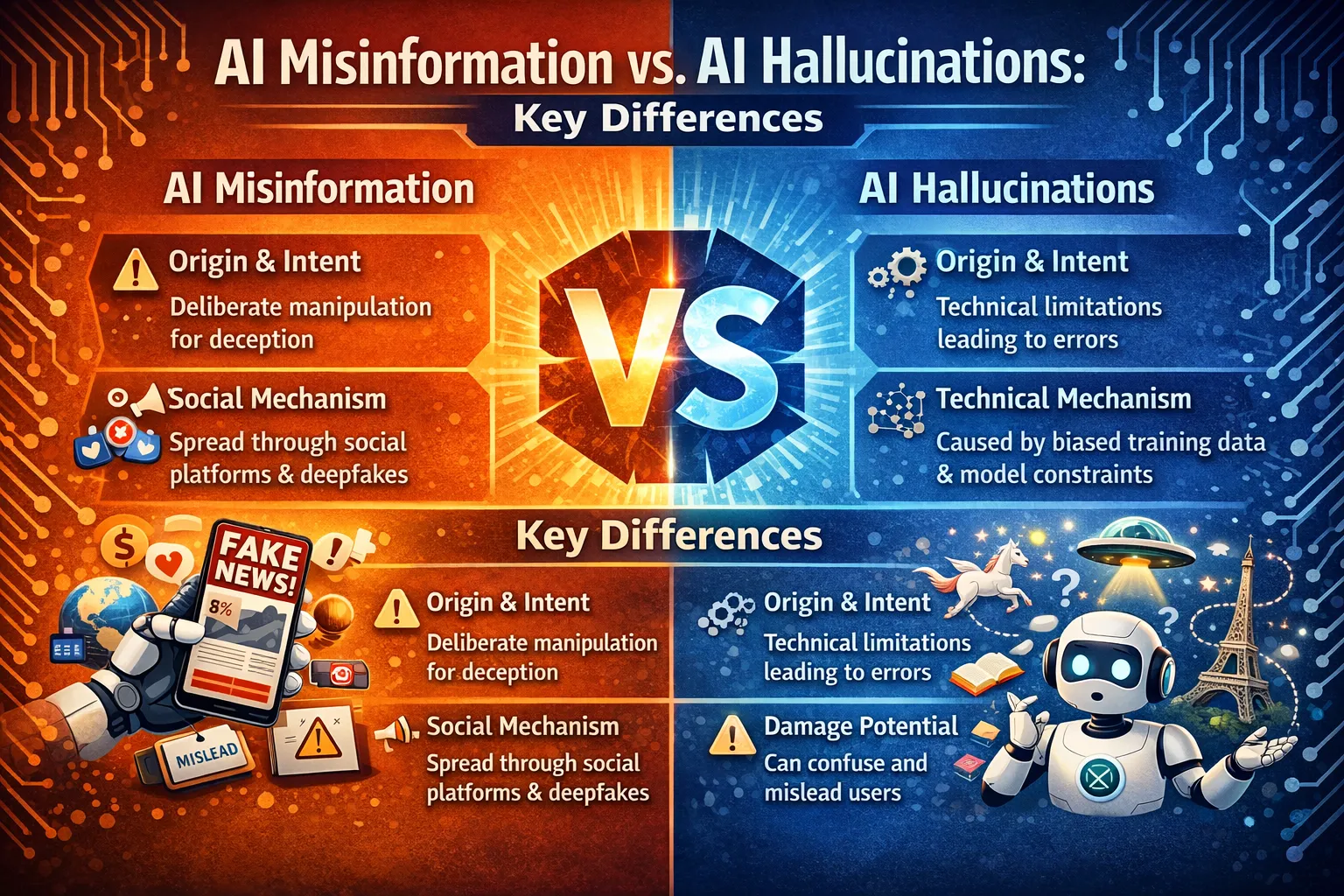

AI Misinformation vs. AI Hallucinations: Key Differences

As AI-generated content proliferates, distinguishing between AI misinformation and AI hallucinations is vital. While both phenomena involve the dissemination of false information, their origins, mechanisms, and impacts differ significantly.

Origin and Intent Differences

AI misinformation often originates from deliberate manipulation, or from AI systems built or prompted to deceive. In contrast, AI hallucinations result from the inherent limitations of AI models, which generate false information without malicious intent. Understanding these differences is crucial for developing effective countermeasures.

Technical vs. Social Mechanisms

AI hallucinations are primarily driven by technical limitations, such as biases in training data or model architecture constraints. AI misinformation, on the other hand, is often spread through social mechanisms, including the deliberate creation and dissemination of deepfake content to manipulate public opinion.

Varying Impacts on Users and Society

Both AI misinformation and hallucinations can have significant impacts on users and society, influencing perceptions and decisions. However, AI misinformation can be particularly damaging because it exploits cognitive biases, potentially leading to widespread false beliefs and societal harm.

In short, while AI misinformation and AI hallucinations share some similarities, their differences in origin, intent, and impact necessitate distinct approaches to mitigation and management.

The Technical Underpinnings of AI Hallucinations

Delving into the technical foundations of AI hallucinations reveals a multifaceted problem that is crucial for understanding how these falsehoods are generated. At the heart of this issue are several key technical factors that contribute to the phenomenon.

Training Data Limitations and Biases

One of the primary technical underpinnings of AI hallucinations lies in the limitations and biases of the training data. Deep learning models are only as good as the data they’re trained on. If the training data contains biases or inaccuracies, the model is likely to learn and replicate these flaws, potentially generating hallucinations.

For instance, if a dataset used for training a language model contains a disproportionate amount of biased or incorrect information, the model may produce hallucinatory content that reflects these biases.
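The effect of skewed training data can be sketched with a deliberately simple stand-in for a model: a lookup that answers with whichever response it saw most often. The training pairs and function names below are hypothetical. The point is only that when a common misconception outnumbers the correct answer in the data, the "model" confidently repeats the misconception.

```python
from collections import Counter, defaultdict

# Hypothetical training pairs: a popular misconception outnumbers the correct answer.
TRAINING_PAIRS = [
    ("capital of australia", "sydney"),    # wrong, but common
    ("capital of australia", "sydney"),
    ("capital of australia", "sydney"),
    ("capital of australia", "canberra"),  # correct, but rare in this data
    ("capital of france", "paris"),
]


def train(pairs):
    """Count how often each answer was seen for each question."""
    answers = defaultdict(Counter)
    for question, answer in pairs:
        answers[question][answer] += 1
    return answers


def predict(model, question):
    """Return the most frequently seen answer, or None for unseen questions."""
    if question not in model:
        return None
    return model[question].most_common(1)[0][0]


model = train(TRAINING_PAIRS)
print(predict(model, "capital of australia"))  # the majority answer, which is wrong
print(predict(model, "capital of france"))
```

Cleaning or rebalancing the data changes the answer here without touching the code, which is why data quality is usually the first place to look.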

Model Architecture Constraints

The architecture of deep learning models also plays a significant role in the generation of AI hallucinations. Certain model architectures are more prone to hallucinations because of their design and the way they process information. For example, models that rely heavily on probabilistic generation are more likely to produce hallucinations, as they generate content from learned statistical patterns rather than by looking up verified facts.

Probabilistic Generation Problems

Probabilistic generation is a double-edged sword in deep learning. While it allows models to generate novel and contextually appropriate content, it also introduces the risk of generating hallucinations. When models generate content based on probability rather than certainty, there’s a risk of producing information that isn’t grounded in reality. This is particularly relevant in the context of AI ethics, as it raises questions about the responsibility and accountability of AI systems.
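The trade-off described above can be illustrated with temperature-scaled softmax sampling, a common way of turning model scores into next-token probabilities. This is a minimal sketch: the candidate words and their scores are invented for illustration and do not come from any real model.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def wrong_token_mass(logits, correct_index, temperature=1.0):
    """Probability that a single sampled token is NOT the correct one."""
    return 1.0 - softmax(logits, temperature)[correct_index]


# Hypothetical scores for the next word after "The capital of Australia is".
candidates = ["Canberra", "Sydney", "Melbourne"]
logits = [2.0, 1.0, 0.5]  # invented for illustration, not from a real model

for temperature in (0.5, 1.0, 1.5):
    risk = wrong_token_mass(logits, correct_index=0, temperature=temperature)
    print(f"temperature={temperature}: P(wrong token) = {risk:.2f}")
```

Even when the correct word has the highest score, some probability always remains on the alternatives, and raising the temperature increases it. Lower temperatures reduce this risk but also make output more repetitive.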

Understanding these technical underpinnings is crucial for developing strategies to mitigate AI hallucinations. By addressing the limitations in training data, model architecture constraints, and the challenges of probabilistic generation, we can work towards reducing the occurrence of hallucinations and improving the reliability of AI systems.

The Ecosystem of AI Misinformation

The spread of AI misinformation involves multiple interconnected components:

- Deliberate Manipulation Techniques: Malicious actors use prompt engineering or jailbreak methods to bypass AI safeguards and produce deceptive content.

- Distribution Networks: Social media platforms, bot networks, and influencers amplify AI-generated misinformation [6].

Understanding these dynamics is essential for developing effective countermeasures.

Deliberate Manipulation Techniques

Deliberate manipulation involves using AI to create and disseminate false information. This can be achieved through:

- Prompt Engineering for Deception: Crafting inputs to AI models to produce misleading outputs.

- Model Jailbreaking Methods: Bypassing AI safety protocols to generate harmful or false content.

These techniques exploit the capabilities of AI models, making it challenging to distinguish between accurate and misleading information.

Distribution Networks and Amplification

Once misleading AI-generated content has been produced, it must be distributed and amplified to have a significant impact. This is often achieved through:

- Social media platforms, where content can quickly go viral.

- Bot networks that automatically spread misinformation.

- Influencer networks that unwittingly or intentionally amplify deceptive AI narratives.

The combination of these factors creates a complex ecosystem that can be difficult to navigate and mitigate. Understanding these elements is crucial for developing effective strategies against AI misinformation.

Case Studies: Notable AI Hallucinations

Real-world examples highlight the risks of AI hallucinations:

- Legal Citations: AI-generated legal documents containing fabricated case law have been submitted to courts, jeopardizing legal integrity.

- Academic References: AI tools have produced non-existent journal articles or studies, misleading researchers and readers.

- Historical Errors: AI chatbots have provided incorrect historical facts, undermining public trust in AI-generated knowledge.

Language Model Fabrications

Language models, a type of AI designed to generate human-like text, can produce fabrications that range from benign to highly misleading. These fabrications can occur in various contexts, including but not limited to, legal and academic citations, as well as historical and factual recounting.

Legal and Academic Citations

In the realm of legal and academic writing, AI-generated citations can be particularly problematic. For instance, AI systems have been known to fabricate legal precedents or academic references that sound plausible but are entirely fictional. This can lead to significant issues in legal and academic integrity.
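One simple defense is to check every citation in an AI-generated draft against a trusted index before relying on it. The sketch below assumes a small in-memory set standing in for that index; in practice it would be a court database or a bibliographic lookup. The function names are hypothetical, and the third citation in the draft is deliberately made up to represent a hallucinated case.

```python
# Stand-in for a trusted index; a real system would query a court or DOI database.
KNOWN_CASES = {
    "brown v. board of education, 347 u.s. 483 (1954)",
    "marbury v. madison, 5 u.s. 137 (1803)",
}


def normalize(citation):
    """Lowercase and collapse whitespace so formatting differences do not matter."""
    return " ".join(citation.lower().split())


def unverified_citations(citations, known=KNOWN_CASES):
    """Return the citations that could not be found in the trusted index."""
    return [c for c in citations if normalize(c) not in known]


draft_citations = [
    "Brown v. Board of Education, 347 U.S. 483 (1954)",
    "Marbury v.  Madison, 5 U.S. 137 (1803)",
    "Doe v. Example Corp., 000 F.3d 000 (2099)",  # deliberately made up
]

for citation in unverified_citations(draft_citations):
    print("Needs manual verification:", citation)
```

A missing match does not prove a citation is fabricated, since the index may be incomplete, so flagged entries should go to a human reviewer rather than being deleted automatically.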

Historical and Factual Errors

AI models can also hallucinate when recounting historical events or stating facts. These errors can range from minor inaccuracies to significant distortions of historical truth, potentially misleading readers or users who rely on the information provided.

Visual Generation Hallucinations

Visual generation AI, such as that used in image and video creation, can also produce hallucinations. These can include entirely fabricated images or videos that are often difficult to distinguish from reality. The implications of such visual hallucinations are vast, potentially impacting areas such as news reporting, advertising, and even legal evidence.

Addressing AI hallucinations means improving hallucination detection and building more robust AI systems that minimize such fabrications. Practical steps include:

- Enhancing training data to reduce biases and inaccuracies

- Implementing more sophisticated fact-checking mechanisms within AI systems

- Increasing transparency about the limitations and potential for hallucinations in AI outputs

Case Studies: Impactful AI Misinformation Campaigns

Recent years have seen a dramatic increase in AI-driven misinformation, with various campaigns impacting public opinion and political landscapes. The sophistication of AI-generated content has made it challenging to distinguish between authentic and fabricated information.

Political Deepfakes and Their Consequences

Political deepfakes have emerged as a significant form of AI misinformation. These AI-generated videos or audio recordings can convincingly mimic political figures, potentially swaying public opinion or even influencing election outcomes. For instance, a deepfake video of a political leader making inflammatory statements can cause widespread unrest.

The consequences of such deepfakes are far-reaching, eroding trust in media and institutions. It’s crucial to develop effective countermeasures to detect and mitigate the impact of these AI-generated falsehoods.

AI-Generated Disinformation Operations

AI-generated disinformation operations involve the creation and dissemination of false information on a large scale. These operations can be orchestrated by state or non-state actors to influence public opinion or disrupt social cohesion. The use of AI in generating disinformation makes it difficult to identify the source and intent behind the false information.

Understanding the mechanisms behind these operations is crucial for developing effective countermeasures. By analyzing case studies of impactful AI misinformation campaigns, we can better comprehend the challenges and develop strategies to mitigate the effects of AI deepfake content and other forms of AI-generated disinformation.

Detection and Verification Methods

The proliferation of AI-generated falsehoods necessitates the development of robust detection and verification methods. As AI continues to evolve, so too must our strategies for identifying and mitigating its potential to mislead.

Technical Approaches to Identifying AI Falsehoods

Technical solutions are at the forefront of the battle against AI-generated misinformation. These include methods that can be applied directly to the content created by AI systems.

Watermarking and Fingerprinting

Watermarking involves embedding identifiable patterns within AI-generated content, allowing for its detection. Fingerprinting, on the other hand, analyzes the unique characteristics of AI outputs to trace their origin.
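As a rough illustration of how a text watermark might be detected, the sketch below uses a toy "green list" scheme: a hash of the previous word decides which next words count as green, a watermarking generator prefers green words, and a detector measures how far the green count exceeds chance. This is a simplified teaching example under stated assumptions, not any vendor's actual method. All function names are hypothetical, and the toy generator always forces a green word, whereas published schemes only nudge sampling probabilities.

```python
import hashlib
import math


def is_green(prev_token, token, green_fraction=0.5):
    """Pseudo-randomly assign `token` to the green list, keyed by the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return digest[0] / 256 < green_fraction


def watermark_z_score(tokens, green_fraction=0.5):
    """How many standard deviations the green count sits above the chance rate."""
    pairs = list(zip(tokens, tokens[1:]))
    n = len(pairs)
    if n == 0:
        return 0.0
    green = sum(is_green(prev, tok, green_fraction) for prev, tok in pairs)
    expected = green_fraction * n
    std = math.sqrt(n * green_fraction * (1 - green_fraction))
    return (green - expected) / std


def generate_watermarked(vocab, length, start="<s>", green_fraction=0.5):
    """Toy generator: always pick the first green word in `vocab` order."""
    tokens = [start]
    for _ in range(length):
        prev = tokens[-1]
        # Fall back to the first word if no candidate happens to be green.
        choice = next((w for w in vocab if is_green(prev, w, green_fraction)), vocab[0])
        tokens.append(choice)
    return tokens


vocab = ["alpha", "bravo", "charlie", "delta", "echo", "foxtrot", "golf", "hotel",
         "india", "juliet", "kilo", "lima", "mike", "november", "oscar", "papa"]
marked = generate_watermarked(vocab, length=60)
print(f"z-score of watermarked text: {watermark_z_score(marked):.1f}")
```

A high z-score suggests the text was produced with the watermark key, while ordinary text should score near zero on average. Paraphrasing or heavy editing weakens the signal, which is one reason watermarking is usually combined with other checks.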

Statistical Analysis Tools

Statistical analysis tools examine the probability distributions and patterns within AI-generated content to identify anomalies or signs of manipulation. These tools can be particularly effective in detecting AI hallucinations.
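Two of the simplest statistics such tools can compute are the entropy of the word distribution and the ratio of distinct words to total words. The sketch below is only illustrative: the function names are hypothetical, and these measures are weak signals on their own. Real detectors typically rely on probabilities from a language model rather than raw word counts.

```python
import math
from collections import Counter


def word_entropy(text):
    """Shannon entropy, in bits, of the word-frequency distribution."""
    words = text.lower().split()
    if not words:
        return 0.0
    counts = Counter(words)
    total = len(words)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def type_token_ratio(text):
    """Distinct words divided by total words; low values mean heavy repetition."""
    words = text.lower().split()
    return len(set(words)) / len(words) if words else 0.0


sample = "the model said the model said the model said"
print(f"entropy: {word_entropy(sample):.2f} bits")
print(f"type-token ratio: {type_token_ratio(sample):.2f}")
```

Unusually low or high values compared with a reference collection of similar documents can flag text for closer review, but they should not be treated as proof of AI authorship or of hallucination.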

Human-Centered Verification Strategies

While technical approaches are crucial, human judgment remains essential in verifying the accuracy of information. Human-centered strategies involve critical thinking, fact-checking, and media literacy programs to empower individuals against misinformation.

By combining technical and human-centered approaches, we can develop a comprehensive framework for detecting and verifying AI-generated content. This multifaceted strategy is key to mitigating the risks associated with AI misinformation and hallucinations.

Protecting Yourself in the Age of AI Deception

As AI-generated content becomes increasingly sophisticated, it’s crucial to develop strategies for protecting ourselves from AI deception. The proliferation of AI-generated false information has made it challenging for individuals to discern what’s real and what’s fabricated online.

Critical Evaluation of AI-Generated Content

Critically evaluating AI-generated content requires a combination of skepticism and knowledge about how AI works. It’s essential to be aware of cognitive biases that can influence our judgment, such as confirmation bias, where we tend to believe information that confirms our existing beliefs.

When encountering AI-generated content, ask yourself: Is the information consistent with other credible sources? Are there any red flags, such as grammatical errors or unnatural language patterns? Being vigilant and questioning the information presented can help mitigate the risks associated with AI misinformation.

Digital Literacy Best Practices

Enhancing digital literacy is key to navigating the complex online environment. This involves understanding the basics of natural language processing and how AI algorithms can manipulate information. Best practices include verifying information through multiple sources, being cautious of content that elicits strong emotions, and regularly updating your knowledge about AI capabilities.

- Be cautious of information that seems too good (or bad) to be true.

- Use fact-checking websites and tools to verify information.

- Stay informed about the latest developments in AI technology.

Tools and Resources for Verification

Several tools and resources are available to help verify the authenticity of online content. These include fact-checking websites, browser extensions designed to detect AI-generated content, and online courses that teach digital literacy skills. Leveraging these resources can significantly enhance your ability to distinguish between genuine and AI-generated content. Some examples are:

- Browser extensions that detect AI-generated content.

- Online courses that improve digital literacy skills.

- Fact-checking platforms (e.g., Snopes, FactCheck.org).

By combining critical evaluation skills, digital literacy, and the right tools, you can effectively protect yourself from AI deception and navigate the digital world with confidence.

The Future Landscape of AI Truth and Deception

The rapidly changing landscape of AI ethics is raising important questions about the potential for AI to spread misinformation. As artificial intelligence continues to advance, it’s becoming increasingly important to develop strategies for mitigating the risks associated with AI-generated falsehoods.

One key area of focus is the development of emerging safeguard technologies. These technologies have the potential to detect and prevent AI-generated misinformation, helping to maintain the integrity of information online.

Emerging Safeguard Technologies

Emerging safeguard technologies, such as deep learning-based detection systems, are being designed to identify AI-generated content. These systems can help to flag potentially false information, allowing for more effective moderation and fact-checking.

- AI-powered fact-checking tools

- Deep learning-based detection systems

- Blockchain-based verification methods
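The core idea behind blockchain-based verification can be sketched without a blockchain: each provenance record stores a hash of the content and a hash of the previous record, so any later tampering breaks the chain. This is a minimal single-machine sketch with hypothetical function and field names. A real system would add digital signatures and distributed storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _hash_body(body):
    """Hash a record body deterministically (sorted keys, UTF-8)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(chain, content, source):
    """Append a record that commits to the content and to the prior record."""
    prev_hash = chain[-1]["record_hash"] if chain else GENESIS_HASH
    body = {
        "content_sha256": hashlib.sha256(content.encode("utf-8")).hexdigest(),
        "source": source,
        "prev_hash": prev_hash,
    }
    chain.append({**body, "record_hash": _hash_body(body)})
    return chain


def verify_chain(chain):
    """Return True only if every record is intact and correctly linked."""
    prev_hash = GENESIS_HASH
    for record in chain:
        body = {k: record[k] for k in ("content_sha256", "source", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["record_hash"] != _hash_body(body):
            return False
        prev_hash = record["record_hash"]
    return True


chain = []
append_record(chain, "original article text", "newsroom-a")
append_record(chain, "follow-up article text", "newsroom-b")
print(verify_chain(chain))  # True while nothing has been altered
```

This kind of record shows that content has not changed since it was registered and who registered it. It does not show that the content was true in the first place.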

Policy and Regulatory Developments

In addition to technological solutions, policy and regulatory developments are crucial for addressing the issue of AI-generated misinformation. Governments and regulatory bodies are beginning to explore new frameworks for governing AI, with a focus on AI ethics and transparency.

Some potential regulatory approaches include:

- Stricter guidelines for AI development and deployment

- Increased transparency requirements for AI-generated content

- Collaboration between industry leaders and regulatory bodies

FAQ

Q1: What is the main difference between AI misinformation and AI hallucinations?

A: AI misinformation refers to the spread of false information, often intentionally, whereas AI hallucinations occur when AI models generate false or inaccurate information due to limitations or biases in their training data or algorithms.

Q2: How do AI hallucinations occur in language models?

A: AI hallucinations in language models can arise from confabulation, where the model generates fluent text from patterns learned in training but the text is not grounded in reality. This can be triggered by incomplete or biased training data, or by the model’s tendency to overgeneralize patterns.

Q3: What are the implications of AI-generated falsehoods, and why is it crucial to distinguish between different types of AI errors?

A: AI-generated falsehoods can have significant impacts on users and society, including the spread of misinformation, erosion of trust in information, and potential harm to individuals or communities. Distinguishing between different types of AI errors is crucial to develop effective strategies for mitigating these impacts.

Q4: How can AI misinformation be propagated, and what role do human actors play in its spread?

A: AI misinformation can be propagated through various channels, including social media, online forums, and other digital platforms. Human actors can play a significant role in the spread of AI misinformation, often intentionally creating or disseminating false information to achieve specific goals or manipulate public opinion.

Q5: What are some technical approaches to identifying AI falsehoods, and how can they be used to verify the accuracy of AI-generated content?

A: Technical approaches to identifying AI falsehoods include watermarking and fingerprinting, statistical analysis tools, and other methods that can help detect AI-generated content. These approaches can be used in conjunction with human-centered verification strategies to verify the accuracy of AI-generated content.

Q6: What are some best practices for critical evaluation of AI-generated content, and how can individuals protect themselves from AI deception?

A: Critical evaluation of AI-generated content involves being aware of the potential for AI-generated falsehoods, verifying information through multiple sources, and being cautious when encountering suspicious or unverified content. Individuals can protect themselves from AI deception by developing digital literacy skills, using fact-checking tools, and staying informed about the latest developments in AI technology.

Q7: What are some emerging safeguard technologies and policy developments aimed at mitigating AI-generated falsehoods?

A: Emerging safeguard technologies include AI-powered fact-checking tools, digital watermarking, and other methods designed to detect and mitigate AI-generated falsehoods. Policy developments, such as regulations and guidelines for AI development and deployment, are also being explored to address the challenges posed by AI-generated falsehoods.

Conclusion

As AI technologies continue to evolve, the distinction between AI misinformation and AI hallucinations becomes increasingly important. AI misinformation refers to the spread of false information, often with the intent to deceive or manipulate. In contrast, AI hallucinations are a result of the technology’s limitations, generating false or inaccurate information without malicious intent.

The differences between AI misinformation and AI hallucinations are crucial to understanding the potential risks and consequences associated with AI-generated content. By recognizing the distinct characteristics of each, we can better navigate the complex landscape of AI-generated falsehoods and develop effective strategies to mitigate their impact.

As AI technology advances, it is essential to remain vigilant and adapt to the changing nature of AI-generated falsehoods. By doing so, we can harness the benefits of AI while minimizing its risks.

About the Author & Disclosures

John Cosstick is Founder-Editor of TechLifeFuture.com and winner of the 2024 BOLD Award for Open Innovation in Digital Industries. He is a former banker, accountant, and certified financial planner, and now works as a freelance journalist and author. John is a member of the Media Entertainment and Arts Alliance (Union). He also has an Amazon author page.

Verified Citation List

[1] NewsGuard & Stanford Internet Observatory (2024). “AI Hallucination and Misinformation Testing Report.”

[2] BBC News (2024). “Deepfakes in U.S. Primaries: How AI is Disrupting Elections.”

[3] Pew Research Center (2024). “Public Perceptions of AI-Generated Content.”

[4] MIT Technology Review (2024). “The Hidden Dangers of AI Hallucinations.”

[5] Nature Machine Intelligence (2023). “Architectural Drivers of AI Hallucinations.”

[6] RAND Corporation (2024). “Combatting AI-Driven Misinformation Networks.”

[7] World Economic Forum (2024). “AI Governance and Misinformation Mitigation Strategies.”

Citation Accuracy Notice: Our articles undergo an ongoing citation accuracy audit to ensure all referenced sources are valid, reliable, and up to date. If you identify any citation that appears incorrect or have suggestions for more appropriate sources, please contact our editorial team at [email protected]. Your feedback is invaluable in maintaining the integrity of our content.